Suggested workflow

@bobpell said:

If I put it as a VST/CLAP... in one Reaper track, I don't know how to separate the single sounds on different tracks, if still possible.

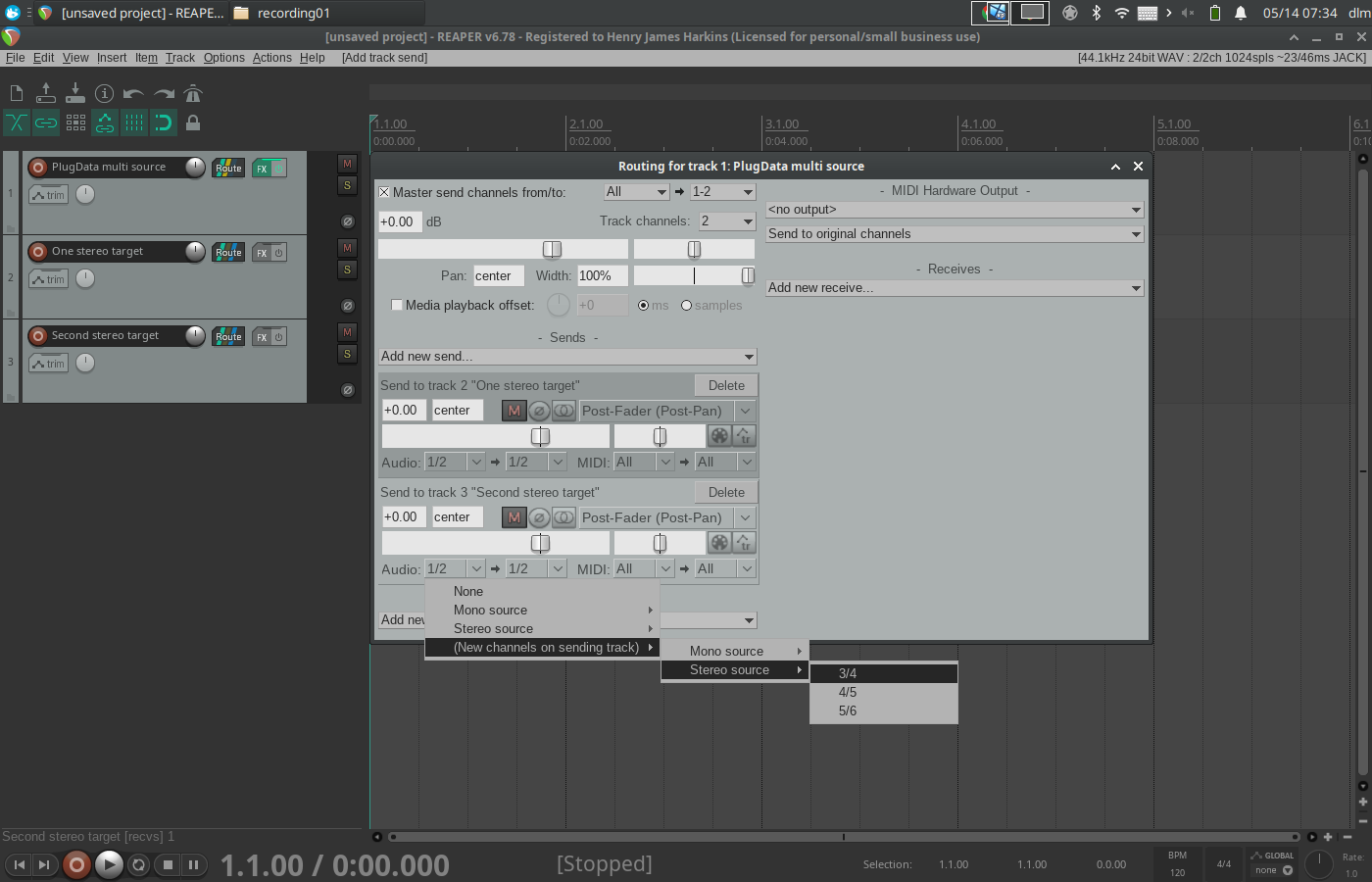

Reaper allows sends to be defined based on subsets of the source channels.

2 - single parts of the project (in different Plugdata patches), each opened in its own Reaper track: how can I keep them synchronized? In the whole project I have a "complex" timer that should drive each sound generation block.

DAW timeline data are available, but inconvenient to use directly. I made an abstraction, [playhead-tick], that generates whole-beat ticks from the raw timeline values, detailed in the second half here:

... using objects in https://github.com/jamshark70/hjh-abs

This way, each individual patch can know what beat it is on the timeline, and this would be the same across all of them.

When you say "'complex' timer," of course there's no way for a reader here to know what you mean. But it's possible to synthesize any timing you like from equally spaced ticks. I also have a [tick-scheduler] object in my abstraction pack where you can reschedule messages at any intervals you like (= synthesizing timing).

[playhead-tick] was originally designed as a companion to the scheduler, but later I felt that a clock-multiplier approach is easier for most cases and more idiomatic to Pd -- so the video demonstrates only the clock multiplier. (To be honest, incremental rescheduling based on time deltas turned out to be a bit clumsy in Pd -- it can be made to work but never felt to me like a natural idiom. There's an example in the [tick-scheduler] help patch.)
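As a rough illustration of the clock-multiplier idea (not the actual [playhead-tick] or [tick-scheduler] implementation), the sketch below subdivides one whole-beat tick into equally spaced sub-ticks. The function name `multiply_clock` and its parameters are hypothetical, and the beat duration is assumed to be known from the host tempo.

```python
def multiply_clock(beat, beat_dur, n):
    """Subdivide one whole-beat tick into n equally spaced sub-ticks.

    beat     -- beat number reported by the incoming tick (hypothetical input)
    beat_dur -- duration of one beat in seconds (assumed known from the tempo)
    n        -- number of sub-ticks per beat

    Returns a list of (position_in_beats, delay_in_seconds) pairs,
    where the delay is measured from the arrival of the whole-beat tick.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    return [(beat + k / n, k * beat_dur / n) for k in range(n)]


# Sixteenth notes at 120 BPM (0.5 s per beat), starting on beat 4.
for position, delay in multiply_clock(4, 0.5, 4):
    print(position, delay)
```

Because every patch derives its sub-ticks from the same timeline beat, patches on separate tracks would land on the same positions without talking to each other.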

hjh

Suggested workflow

Hi Tim, nice to meet you! Thanks for the answer. So my first approach was on the right track. As far as Plugdata is concerned, I've been keeping track of it and have already tested some very simple patches in Reaper, under both Windows and Linux. And I like it so much.

But I'm a newbie, so its use isn't fully clear to me yet.

Two scenarios you quoted:

1 - full project inside Plugdata.

If I put it as a VST/CLAP... in one Reaper track, I don't know how to separate the single sounds on different tracks, if still possible.

2 - single parts of the project (in different Plugdata patches), each opened in its own Reaper track: how can I keep them synchronized? In the whole project I have a "complex" timer that should drive each sound generation block.

Sorry if I'm talking nonsense, and thanks again.

Extract filter params from impulse response?

Early in the pandemic I learned that I could ping a filter, record its impulse response, and convolve another signal with that impulse response to approximate that filter, admittedly in the most inflexible, CPU-hogging, and memory-wasting way. But hey--it was exciting to learn that it was possible!

Is it possible to go the other way, from an impulse response to the parameters for some arrangement of low pass/hi pass/band pass filters? Not for an arbitrary impulse response, but for decaying resonances, like the sound of a tap on the bottom of a plastic cup? The solution doesn't have to be exact, analytic, in real-time, or completely automated, nor does it have to use Pd exclusively. I just want to find a way to model these sounds other than using my ear + trial and error.

Edit: I just tried this--in Reaper, I recorded a plastic cup tap, convolved it with white noise, listened and looked at its spectrum. On another track, I tried to match the sound and spectrum using the same white noise through 5 bands of parametric EQ and got closer than I thought I would. I then tried to port that parametric EQ to Pd and pinged it. It only slightly resembles the original cup, but my port of that filter could be junk.

Edit 2: my port IS junk! I just made Pd ping the Reaper EQ and it sounds much closer!

Edit 3: but I still welcome any suggestions on how to do this better. I see that there is an FIR filter in Reaper that allows me to enter an arbitrary frequency response, but I can't alter that response dynamically so it defeats the purpose.
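One possible starting point for the "decaying resonance" case, offered only as a sketch: if the impulse response is dominated by a single mode, its frequency can be read from the zero crossings and its decay rate from a straight-line fit to the log of the peak amplitudes. The function name `estimate_resonance` is hypothetical, and the conversion Q = pi * f / decay assumes an ideal two-pole resonance. A real cup tap has several modes, so it would need to be split into bands first and each band analysed separately.

```python
import math


def estimate_resonance(ir, sr):
    """Estimate (freq_hz, decay_per_sec, q) of ONE dominant decaying mode.

    ir -- list of samples of the impulse response
    sr -- sample rate in Hz
    Assumes the signal looks like exp(-decay * t) * sin(2 * pi * freq * t).
    """
    # Upward zero crossings, located by linear interpolation between samples.
    crossings = []
    for i in range(1, len(ir)):
        if ir[i - 1] < 0 <= ir[i]:
            frac = -ir[i - 1] / (ir[i] - ir[i - 1])
            crossings.append((i - 1 + frac) / sr)
    if len(crossings) < 2:
        raise ValueError("need at least two upward zero crossings")
    freq = (len(crossings) - 1) / (crossings[-1] - crossings[0])

    # Local maxima of the rectified signal trace the decay envelope.
    times, logs = [], []
    for i in range(1, len(ir) - 1):
        a = abs(ir[i])
        if a > 0 and abs(ir[i - 1]) < a >= abs(ir[i + 1]):
            times.append(i / sr)
            logs.append(math.log(a))
    if len(times) < 2:
        raise ValueError("need at least two envelope peaks")

    # Least-squares line through log(peak) versus time; the slope is -decay.
    t_mean = sum(times) / len(times)
    y_mean = sum(logs) / len(logs)
    slope = (sum((t - t_mean) * (y - y_mean) for t, y in zip(times, logs))
             / sum((t - t_mean) ** 2 for t in times))
    decay = -slope

    # For an ideal two-pole resonance the -3 dB bandwidth is decay / pi Hz.
    q = math.pi * freq / decay
    return freq, decay, q
```

The resulting frequency and Q could then be tried as the settings of a band-pass filter and compared by ear against the recording; whether a given filter object interprets Q the same way is something to check against its help patch.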

How to reset detuned pitch of a midifile?

@Jona https://www.reddit.com/r/Reaper/comments/9lb5n2/midi_pitch_modulation_affecting_the_next_item/

more....

https://www.pgmusic.com/forums/ubbthreads.php?ubb=showflat&Number=490405

So probably the easy way to reset the pitch is to send a message into [bendout].

I am pretty sure the message is 0 for no bend: https://forum.pdpatchrepo.info/topic/4333/bendout-pitch-bending-in-general
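For reference, here is a small sketch of what "no bend" looks like at the MIDI byte level. It assumes, as the post above suggests, that the bend value is signed with 0 at the center; on the wire, MIDI pitch bend is a 14-bit value whose center is 8192. The helper name `pitch_bend_bytes` is hypothetical.

```python
def pitch_bend_bytes(bend, channel=1):
    """Build a raw 3-byte MIDI pitch bend message.

    bend    -- signed bend amount, -8192..8191, where 0 means no bend
    channel -- MIDI channel, 1..16
    """
    if not -8192 <= bend <= 8191:
        raise ValueError("bend must be in -8192..8191")
    if not 1 <= channel <= 16:
        raise ValueError("channel must be in 1..16")
    value = bend + 8192                      # shift to the unsigned 14-bit range
    status = 0xE0 | (channel - 1)            # 0xE0 = pitch bend status byte
    return bytes([status, value & 0x7F, value >> 7])  # LSB first, then MSB


# Centered pitch bend on channel 1.
print(pitch_bend_bytes(0).hex())
```

Sending the centered message on each channel in use, before playback starts, should undo a bend left over from earlier material.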

David.

Heavy shutdown, released as open source. Compile VST’s, possible externals from your patches

@bocanegra said:

Since the [block~] and [switch~] objects are unsupported, how can i know what block size the vst will use? Will it just default to whatever the daw running it is using?

Yes, the process() function is handled by the DPF wrapper and eventually the host-thread that the plugin is running in.

(is it possible to do one sample feedback delays?)

I don't know, this may depend on the host. Afaik the heavy engine can run at single sample blocks, but in general I don't believe DAWs run their plugins like this.

Besides float, bool and trig, are there any other assignable types for DPF parameters? (is a drop down list selection possible?)

Specifically the case of drop-down lists is something that I want as well! I tried playing with this using an "enumerator" type (there is a branch that does this), but it's a bit of a dirty fix for it. Ideally we come up with a specific method for these kinds of messages. It certainly is possible to add this both to the heavy messaging system and to the DPF wrapper.

I can't seem to make lv2 ports work. I am copying the created <pluginname>.lv2 folder to my local .lv2 folder but the plugins dont register...?

You may need to run make twice in order for the ttl to be properly generated. I think atm you also still have to copy over the ttl into the lv2 directory. Small "quality of life"-improvements to be made there.

Other than that, great work! I love it!

Thnx for testing! Latest releases have a bunch of midi improvements and Electro-Smith is working on a more proper integration with their platform that will be coming soon!

In the meantime, please direct all issues and questions regarding the project over to Github or the Discord and IRC chats. I don't frequent these forums very much and it's better to centralize some of the communication around the project.

Would love to hear more feedback on functionality and tests of the various generator targets!

Am now looking at VCV Rack exporting and some people are working on additional embedded targets based on ESP32 hardware.

Lots of things to come!

PD <-> DAW interactions tutorials please?

@jameslo said:

for completeness I suppose one should mention using MIDI and audio loopback software,

@parisgraphics said:

I have access to PD on Linux

In that case Jack server is your friend. Get QjackCtl for easy setup and patching. I do such things as control pd patches from my DAW via midi through jack to PD, translate midi messages from my keyboard in PD and route them to my DAW through jack, route audio from my DAW to PD for processing and back again through jack, the possibilities are endless...

DAWs that work for these kinds of setups on Linux (that I have worked with): Ardour, Qtractor, Reaper, Rosegarden. There are probably more. For the time being I prefer Reaper.

@jameslo said:

the flexible MIDI and audio routing capabilities in Reaper seem to make them unnecessary.

I haven't been using Reaper for more than a couple of months, but at least on Linux all external routing has to be patched through the Jack server.

PD <-> DAW interactions tutorials please?

Agreed. I've been playing with Camomile and have written effects plug-ins in Pd that have sent and received MIDI and/or OSC and have been controlled by DAW automation, so that's substantial integration. I defer to @emviveros regarding the support for externals since I'm just a vanilla guy. I've also written effects plug-ins with side-chains for Reaper, so that means your Pd VST patches can be modulated by audio signals from other Reaper tracks. Reaper itself supports fairly complete OSC and MIDI controls (both input and output) so that's another layer of integration that's possible. And for completeness I suppose one should mention using MIDI and audio loopback software, but the flexible MIDI and audio routing capabilities in Reaper seem to make them unnecessary.

send audio from Pd to Reaper

@jameslo said:

@cfry Soundflower has attenuators....check that they are all the way up in Audio MIDI Setup.

I was gonna say this with no knowledge of Reaper nor Soundflower whatsoever: there is a very high likelihood that there are adjustable gain stages between Pd -> Soundflower -> Reaper. Don't try to make up for it in Pd.

send audio from Pd to Reaper

@cfry said:

100 dB from Pd turns magically to -50 dB in Reaper.

One thing is that Pd's objects related to dB add 100 -- 0 dBFS in other software is represented as 100 dB in Pd.

So you don't actually have 100 dB in Pd. Nor would you want that. Full-scale in floating point is -1 to +1. 100 dB represents an amplitude of 10 ^ (100/20) = +/-100000 (edited b/c at 6 am on a tablet, I miscounted zeros). Reaper would not be expecting that, and would not handle it well.

That doesn't explain the extra 50 dB difference -- I've no idea about that.
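The 100 dB offset described above can be checked with a few lines of arithmetic. This is a sketch of the convention only, not Pd's source code; the function names are hypothetical, and returning 0 for values at or below 0 dB is an assumption about how Pd's dB conversion objects behave.

```python
def pd_db_to_amp(db):
    """Pd-style dB (100 = full scale) to linear amplitude."""
    if db <= 0:
        return 0.0          # assumption: 0 dB and below is treated as silence
    return 10 ** ((db - 100) / 20)


def dbfs_to_amp(dbfs):
    """Conventional dBFS (0 = full scale) to linear amplitude."""
    return 10 ** (dbfs / 20)


print(pd_db_to_amp(100))    # full scale in Pd's convention
print(dbfs_to_amp(0))       # full scale in a DAW's convention
print(dbfs_to_amp(100))     # what a literal 100 dBFS would mean
```

Under this convention, a level Pd reports as 100 dB corresponds to 0 dBFS in a DAW meter, and 94 dB to roughly -6 dBFS.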

hjh

send audio from Pd to Reaper

Hi,

Sorry if this is a bit off topic, but anyway:

I have to give in and use Reaper for external multiband compression.

But! When I connect Reaper with Soundflower the signal is weak; it's present but weak. 100 dB from Pd turns magically to -50 dB in Reaper.