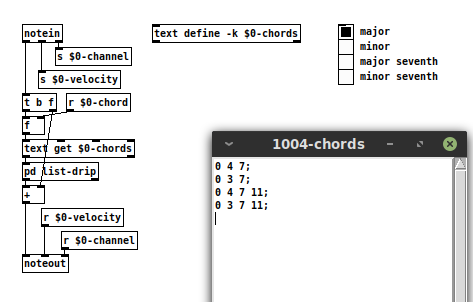

Patch that will play different chords with [midi] input

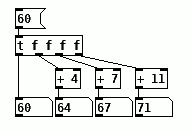

@chopin83 If you are not concerned about key, this only takes basic math, and it is much simpler if you calculate from the root instead of from the 7th as the patch you found does. Our major scale is W-W-H-W-W-W-H, or 2-2-1-2-2-2-1 in terms of MIDI note numbers, so we just need to add the appropriate numbers to generate the chords and output the MIDI. For a Maj7 we take the MIDI note and add 4 to get the third, add 3 to that to get the 5th, and add 4 more to get the 7th. For middle C (MIDI note 60):

60+4 = 64

64+3 = 67

67+4 = 71

Which means our Cmaj7 is MIDI notes 60-64-67-71, and Dmaj7 would be 62+4=66, 66+3=69, 69+4=73, or 62-66-69-73. We can change this chord to minor by adding 3 instead of 4 when we calculate the third, but this also shows a fault in the math: we can no longer use the result of the previous calculation in the next, because that would give 60+3=63, 63+3=66 and so on, which flats everything above the third. So we switch to calculating all the notes from the root: to get the third we add 4, to get the 5th we add 7, and to get the 7th we add 11. Now we can do any of our basic chord manipulations easily by adding or subtracting 1 from the appropriate note, so our minor would be just adding 3, 7 and 11 to the root note number, which gives us 60, 63, 67 and 71 (strictly a minor chord with a major 7th; add 10 instead of 11 for a regular minor 7th). So the basic patch for a Major 7th would be as simple as,

Now go make it play minors, suspended and augmented chords in major and minor keys and if you feel ambitious, make it play in key so you get the proper 7th!
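For readers who think more easily in text than in patch cords, the root-relative arithmetic from the reply above can be sketched in Python. This is only an illustration of the math, not the Pd patch itself; the table name `CHORD_INTERVALS`, the chord labels and the function `build_chord` are made up for this example.

```python
# Semitone offsets from the root for a few seventh chords.
# The labels and this table are illustrative, not taken from the patch.
CHORD_INTERVALS = {
    "maj7": (0, 4, 7, 11),
    "min_maj7": (0, 3, 7, 11),  # third lowered by 1, 7th left alone
    "min7": (0, 3, 7, 10),      # third and 7th both lowered by 1
    "dom7": (0, 4, 7, 10),
}


def build_chord(root, quality="maj7"):
    """Return MIDI note numbers for a chord built on `root`."""
    notes = [root + offset for offset in CHORD_INTERVALS[quality]]
    # MIDI note numbers must stay in 0..127, so drop anything out of range.
    return [n for n in notes if 0 <= n <= 127]


print(build_chord(60))              # [60, 64, 67, 71]
print(build_chord(62))              # [62, 66, 69, 73]
print(build_chord(60, "min_maj7"))  # [60, 63, 67, 71]
```

Because every offset is measured from the root, changing one chord tone never disturbs the others, which is the point made in the reply.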

Patch that will play different chords with [midi] input

Hello dear PD patchrepo community !

I'm currently trying to make a PD patch that will produce different chords when I press different keys on my MIDI keyboard. I stumbled upon one patch which seems to be a good basis for my work so far, but it's too limited. I'm not very technically savvy (more of a musician, actually), but for my course (bachelor in sound engineering) I'm supposed to make a patch that generates a chord when I play a note on my MIDI keyboard.

I'd like to generate different chords (minor chords, major chords, and scales, for example) which would be triggered when I use keys on my MIDI keyboard. Do you think this is feasible?

So far this is what I got;

Just to make it clear: for example, I'd like my patch to generate a C major seventh chord when I press the C key on my MIDI keyboard, and to add more versatility by adding extra functionality such as being able to make a reversed chord too...

I'm struggling to build this patch and any help would be greatly appreciated !

Thanks a lot !

Puredata on Chromebook Crostini difficulties. Can someone help?

@pman I am not a Linux user, but "chrt operation not permitted" probably means that your settings above are being ignored. https://unix.stackexchange.com/questions/114643/chrt-failed-to-set-pid-xxxs-policy-on-one-machine-but-not-others has a solution.

Then hpet will be necessary I think for jack and alsa and maybe need to be configured in their settings once it is enabled.

https://linuxmusicians.com/viewtopic.php?t=2296

You probably should reduce swappiness to 10 to improve performance as the analysis suggests.

Strange that rtc is not found. Might not be necessary with hpet but probably easily fixed.

If that does you no good because the kernel problem persists, then you will need help from the audio threads in the forums for your OS.

David.

Thermal noise

@whale-av The 3.5mm jack without a resistor would actually add more noise than with it. The input jack is a switching type jack: with nothing plugged in, the tip and ring are shorted directly to ground. If you just plugged in a bare plug, it would break that short to ground and the plug would become an antenna. Being very short, it is limited in the wavelengths it could pick up, but it would still pick up a good deal, and certainly more than the Johnson noise of a resistor. If you just plugged in a cable and left the other end unplugged, you would get even more, since your antenna is now longer and can pick up longer wavelengths better. A resistor added in will just resist: those weak RF signals will need to overcome that resistance to reach the preamp and be amplified. Johnson noise is a very small factor. It does contribute, but it is not something one would really want to try and exploit as a noise source; a few feet of wire will give you considerably more. This is a rather simplified explanation and not completely correct, so consider it practical but not technical.

As an aside, the second ring on the standard tip, ring, ring 3.5 mm plug that we see on phones and anything that can take a headset is powered, those headsets use electret microphones which need some voltage to function. I am not sure what this voltage is, but if you can find a zener diode with a reverse breakdown voltage that is less than the voltage supplied by the jack for a microphone, you could likely build a noise generator into a standard 3.5mm plug with little issue. Zener diodes are generally thought of as poor noise generators, their output level is quite erratic, they are too random to be good noise, but that is great when your needs are random and not pure white noise. There is no real gain to building such a noise source into a plug, just plugging in any cable and leaving the other end floating will do just as well and with less effort.

MIDI into [seq] and Markov chains

@weightless Great!

If you have the chord 88 90 in the [text define $0-chords] and a single note 88 comes in, [text search] would only look in the first column and find the line with 88 90, where this is in fact another element.

If you add ||| or any other unique symbol to the right of each note or chord, it would be 88 90 ||| in the [text]. Now [text search] is looking for 88 ||| and cannot find the line with 88 90 |||. So each note and chord is uniquely identifiable.
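The terminator trick can be modelled outside Pd with a few lines of Python. This is a sketch under an assumption: the helper `search` below is hypothetical and only mimics a search that compares as many leading fields as the key contains, which is the behaviour described in this post. It is not the actual [text search] implementation.

```python
def search(lines, key):
    """Return indices of lines whose leading fields equal `key`.

    Assumption: only as many fields as the key contains are compared,
    starting from the first column.
    """
    return [i for i, line in enumerate(lines) if line[:len(key)] == key]


END = "|||"  # any symbol that can never be a note value

plain = [[88, 90], [88]]
marked = [[88, 90, END], [88, END]]

print(search(plain, [88]))            # [0, 1]: the chord line matches too
print(search(marked, [88, END]))      # [1]: only the single note
print(search(marked, [88, 90, END]))  # [0]: only the chord
```

With the terminator appended, a shorter entry can never be a prefix of a longer one, so every lookup returns exactly one line.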

MIDI into [seq] and Markov chains

@ingox Thanks for the patches, I understand what you mean now. This is clever, but if I got it right it means that the Markov chain is either monophonic or polyphonic; it can't have a mix of both (how do you tell a single note from a chord index?). In the MarkovGenerator you'd store each event one after the other regardless of whether they are single notes or chords. I think this behaviour is desirable, or at least more flexible.

I've been thinking about this and I reckon the most logical way to approach it would be to first understand what distinguishes a "chord" from a single note, and the difference is that chord notes all happen at the same time. In Pd that's what a list does basically, and so it makes sense to me if an incoming list is interpreted as a chord. This would be the most practical approach. I went a step further and parsed the right inlet this way: lists are always interpreted as chords, a stream of floats and symbols is also interpreted as a list IF there is no delay between the elements (like in an actual midi chord), otherwise they are interpreted as single floats or symbols respectively.
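The "no delay means chord" rule for a stream of single values can be sketched in Python. The function `group_events` and the `(time_ms, value)` event format are assumptions made for this illustration; the abstraction itself works on Pd messages, and explicit lists (always treated as chords) are not covered here.

```python
def group_events(events):
    """Group (time_ms, value) pairs: equal timestamps form one chord."""
    grouped = []
    last_time = None
    for time_ms, value in events:
        if grouped and time_ms == last_time:
            # No delay since the previous element: same chord.
            grouped[-1].append(value)
        else:
            grouped.append([value])
        last_time = time_ms
    # A group of one stays a single value; larger groups become chords.
    return [g[0] if len(g) == 1 else tuple(g) for g in grouped]


events = [(0, 60), (0, 64), (0, 67), (250, 62), (500, 65), (500, 69)]
print(group_events(events))  # [(60, 64, 67), 62, (65, 69)]
```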

Then in the memory text, only lists are joined together with [vl2s] rather than converting everything to a symbol, this is a good compromise I think. The advantage is that if you don't send any lists at all, the abstraction acts just like the monophonic version (no encoding).

polymarkov.zip

I hope everything works properly, it will require some testing. I've modified a few things including the clip problem, put everything in a gop (makes more sense than using an argument) and added state saving for the texts and order.

vanilla partitioned convolution abstraction

Ok, here's the working vanilla partitioned convolution patch!

https://www.dropbox.com/s/05xl7ml171noyjq/convolution~.zip…

There are two subpatches for testing. One is light, with a relatively big window partition (1024) and a short impulse response (2 secs).

The other is quite heavy: it's an 8 sec long IR with a window size of 512! This one takes about 57-58% of my CPU power, and I'm on a last generation MacBook Pro (2.6 GHz processor)... I also need to increase the Delay (msec) from 5 to 10 in the audio settings, otherwise I get terrible clicks!
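A rough way to see why the second test is so much heavier is to count how many partitions each impulse response is split into. The sketch below assumes a 44.1 kHz sample rate (not stated in the post) and that each partition is exactly one window long; the patch may partition differently, so treat the numbers as an order-of-magnitude guide only.

```python
import math


def partition_count(ir_seconds, window_size, sample_rate=44100):
    """Number of blocks needed to cover the impulse response.

    Assumes 44.1 kHz audio and one partition per window of samples.
    """
    ir_samples = int(ir_seconds * sample_rate)
    return math.ceil(ir_samples / window_size)


light = partition_count(2, 1024)
heavy = partition_count(8, 512)
print(light, heavy)  # 87 690
```

Under these assumptions the heavy test processes roughly eight times as many partitions, and it has to do so twice as often because the block is half the size.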

William Brent's convolve is ridiculously more efficient: the same parameters take about 14% of my CPU power and I can use a delay of 5 ms in the audio settings.

But anyway, this is useful for teaching, and for apps that implement a light convolution reverb (short IR, not too short a window) and need pure vanilla (libpd, Camomile and such).

PS: there is a bug. For some reason, you may need to recreate the object before any sound comes out. I have no idea why yet...

Cheers!

streamstretch~ abstraction not working

Tried using the streamstretch~ abstraction by William Brent (http://williambrent.conflations.com/pages/research.html) recently.

However, when I load the patch from the associated help file in pd-extended, it won't work. I get the following error messages:

clone ./lib/streamstretch-buf-writer-abs 100 2415

... couldn't create

text define $0-streamstretch-chord-text

... couldn't create

text get $0-streamstretch-chord-text

... couldn't create

text tolist $0-streamstretch-chord-text

... couldn't create

text fromlist $0-streamstretch-chord-text

... couldn't create

clone ./lib/streamstretch-buf-writer-abs 100 1536

... couldn't create

Tried loading the patch in Pd Vanilla and I still get error messages:

clone ./lib/streamstretch-buf-writer-abs 100 2415

... couldn't create

clone ./lib/streamstretch-buf-writer-abs 100 1004

... couldn't create

I'm just wondering if anyone else gets the same kinds of error messages when they try to load this abstraction.

Schenkerian generation of music box music

I like the weighted random idea —

I think I'm going to start by implementing the bare-bones core structure of a piece, starting with the root note and mode, and then generating a chord structure based off of this mode. The idea of Schenkerian analysis is to start off by analyzing the larger chord structure of a piece (to find the overarching I-V-I motion) and then move inwards layer by layer in terms of complexity until the whole piece is analyzed down to the individual notes. I'm going to approach generation in the same way: when I get to the point where I am generating the smaller chord progressions, I will probably use a weighted random scheme of commonly used motives.
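One possible shape for that plan, sketched in Python: fix the outer I ... V-I frame first, then fill the middle with weighted random choices. The transition table and its weights are invented for illustration and are not taken from any analysis; `elaborate` is a hypothetical helper name, and `length` is assumed to be at least 3.

```python
import random

# Hypothetical weights for which chord may follow which in the inner layer.
TRANSITIONS = {
    "I": {"IV": 3, "V": 3, "vi": 2, "ii": 2},
    "ii": {"V": 4, "IV": 1},
    "IV": {"V": 3, "I": 1, "ii": 1},
    "V": {"I": 3, "vi": 1},
    "vi": {"ii": 2, "IV": 2},
}


def elaborate(length, rng):
    """Open on I, fill the middle by weighted choice, close with V-I."""
    progression = ["I"]
    while len(progression) < length - 2:
        options = TRANSITIONS[progression[-1]]
        nxt = rng.choices(list(options), weights=list(options.values()))[0]
        progression.append(nxt)
    return progression + ["V", "I"]


rng = random.Random(1)  # seeded so a run can be repeated
print(elaborate(8, rng))
```

The same idea can be applied again at the next layer down, for example by choosing melodic motives for each chord from another weighted table.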