[mimba] physical modelling synth

The mimba synth is heavy on the CPU because a large part of the sound production happens in a subpatch with block size 1, that is, sample by sample. On my older (Linux) laptop the audio wasn't reliable and started crackling with 3 or 4 voices. On a more recent (Linux) laptop it works well with 8 to 10 voices. So I guess the crackling is related to your laptop's performance (and the fact that my synth is so CPU-heavy)...

The error message 'expr divide by zero detected' doesn't relate to the performance of the patch. I also get this message, but my patch and synth work fine. This zero division only happens with the first 10 notes that you play, because mimba has 10-voice polyphony... I guess the zero division is related to a wrong or missing initial value somewhere in the patch.

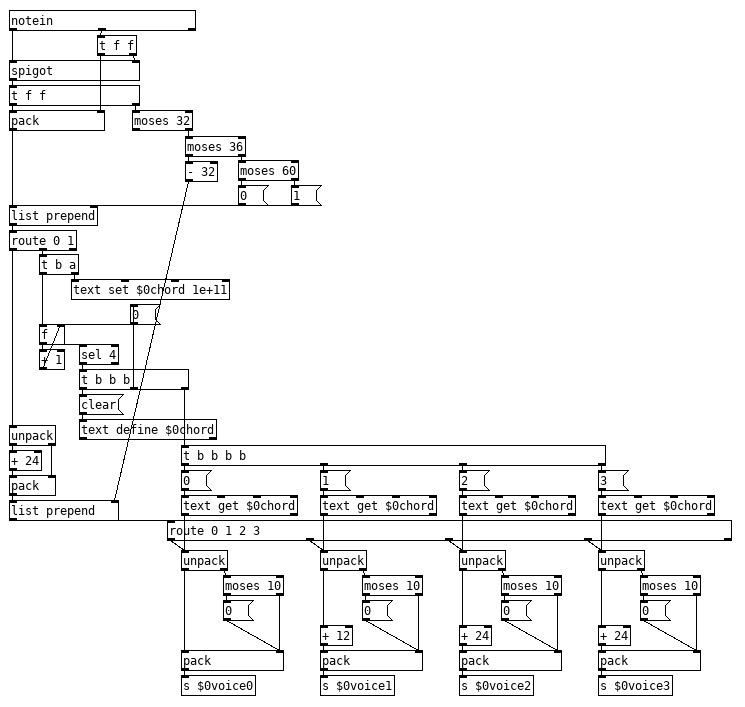

Sorting 4 midi note numbers to 4 different instruments

@ChicoVaca While patching up something using my DSL method, I had an idea. If you are free to use both hands for playing the strings, we can split the keyboard: the left hand handles control and some changes, and the right hand just plays the chord, always with 4 notes expected from that chord. This means we can skip the delay completely and just have it trigger every time it gets 4 notes. Quick proof of concept:

Any note over 60 gets stored in the [text] in the order received, so you have to play the notes in the order you want them to go to the voices; on the fourth note it sends them to each voice. If you only want to play two notes, or just change two, you pad the list as needed with dummy notes: anything with a velocity under 10 sets the velocity to zero (note off). Or you can use that to tweak the volume of playing notes, in the case of just wanting to change which two notes are playing. Since it only fires on the 4th note, you can preload the first 3 and just hit the 4th at the appropriate time. The left hand uses notes 32, 33, 34, and 35 to select an individual voice, and any key pressed between 36 and 60 will be sent to that voice. The left hand could be developed further to add quite a bit of control, including programming the speed of glissando with velocity, toggling between pizzicato and legato on a per-voice basis, or calculating glissando per voice (a best guess, and it would require adding timing data to the [text]), all depending on what you are after and how much you are willing/able to adapt your playing technique.
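Not the patch itself, just a rough Python sketch of the buffering idea described above, under some assumptions: key releases are filtered out before they reach the collector, and the names `ChordCollector`, `split` and `min_velocity` are made up for illustration.

```python
from typing import List, Optional, Tuple


class ChordCollector:
    """Buffers right-hand notes and releases them four at a time, one per voice."""

    def __init__(self, voices: int = 4, split: int = 60, min_velocity: int = 10):
        self.voices = voices
        self.split = split                # notes above this belong to the right hand
        self.min_velocity = min_velocity  # softer notes count as "note off" padding
        self.pending: List[Tuple[int, int]] = []

    def note_on(self, pitch: int, velocity: int) -> Optional[List[Tuple[int, int, int]]]:
        """Return [(voice, pitch, velocity), ...] once enough notes arrived, else None."""
        if pitch <= self.split:
            return None  # left-hand keys are handled elsewhere
        if velocity < self.min_velocity:
            velocity = 0  # dummy note: silences that voice
        self.pending.append((pitch, velocity))
        if len(self.pending) < self.voices:
            return None
        # Voice index follows the order in which the notes were played.
        out = [(voice, p, v) for voice, (p, v) in enumerate(self.pending)]
        self.pending = []
        return out
```

The first three calls return `None` (the preloading described above) and the fourth returns the full voice assignment.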

stringthing.pd

Edit: Uploaded the wrong version of the patch, fixed. After playing with it some, I think I would just have the right hand do 3 notes any time one voice is selected for tweaking with the left hand, end result is more dynamic that way and it is a bit easier to adapt to. Works quite well that way if you also have aftertouch.

Another Edit: Fixed a stupid mistake.

Sorting 4 midi note numbers to 4 different instruments

Thank you both for your answers!

@whale-av said:

A round-robin approach would work, but you would have to always play 3 note chords and if a note was missed the order of the instruments would be shifted.

Yeah. I was afraid of something like that... Would this be something like [poly]?

I think what I want is pretty difficult to solve. I just want to be able to play a couple of monophonic instruments as an ensemble that plays legato nicely, without the voices interfering with each other.

For example... let's say I wanted to play 2 different monophonic legato instruments. Legato implies pressing two keys... two keys per voice. Those keys per voice are not even played together, because of the timing of the music. And the two voices play together or not depending on the playing style (that is, the 2 voices have the same rhythm or are rhythmically independent of each other).

Just thinking out loud to see if I'm getting this right:

If you play just two-note chords (same rhythm), that means 4 notes need to be played for legato to be produced, right? 2 notes per voice. If you want the voices to be ordered from lower to higher without crossings, the sequence would be:

- you would first have to order the initial 2 notes, with the delay time to play/order that you pointed out. That gives you ordered list A.

- you would then have to play and order the 2 other notes, with delay time to play/process. That gives you ordered list B.

- with that, you would have to make the lowest note from list B MIDI-choke the lowest one from list A. Same with the higher note.

- a little time after (as 2 notes that are to play legato must overlap), you would have to send a velocity 0 message to the notes from list A.

I can't think of a way of doing pretty much all of this... and this is just the simplest (same rhythm) case.
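Thinking it through a bit further, the same-rhythm case could be sketched roughly like this in Python. This is only an illustration of the four steps above, not a Pd solution; the function name, the fixed velocity and the 20 ms overlap are all assumptions.

```python
def legato_handover(list_a, list_b, velocity=100, overlap_ms=20):
    """Pair old and new notes by rank (lowest with lowest) and schedule the handover.

    Returns (time_ms, voice, pitch, velocity) events, sorted by time.
    """
    if len(list_a) != len(list_b):
        raise ValueError("both chords need the same number of notes")
    old = sorted(list_a)  # ordered list A: notes currently sounding
    new = sorted(list_b)  # ordered list B: notes that take over
    events = []
    for voice, (a, b) in enumerate(zip(old, new)):
        events.append((0, voice, b, velocity))  # new note starts right away
        if a != b:
            # The old note is released a little later so the two overlap.
            events.append((overlap_ms, voice, a, 0))
    events.sort(key=lambda e: e[0])  # stable sort keeps voice order per time
    return events
```

For example, `legato_handover([64, 60], [62, 67])` starts 62 on voice 0 and 67 on voice 1 at time 0, then releases 60 and 64 after the overlap. The rhythmically independent case is not covered.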

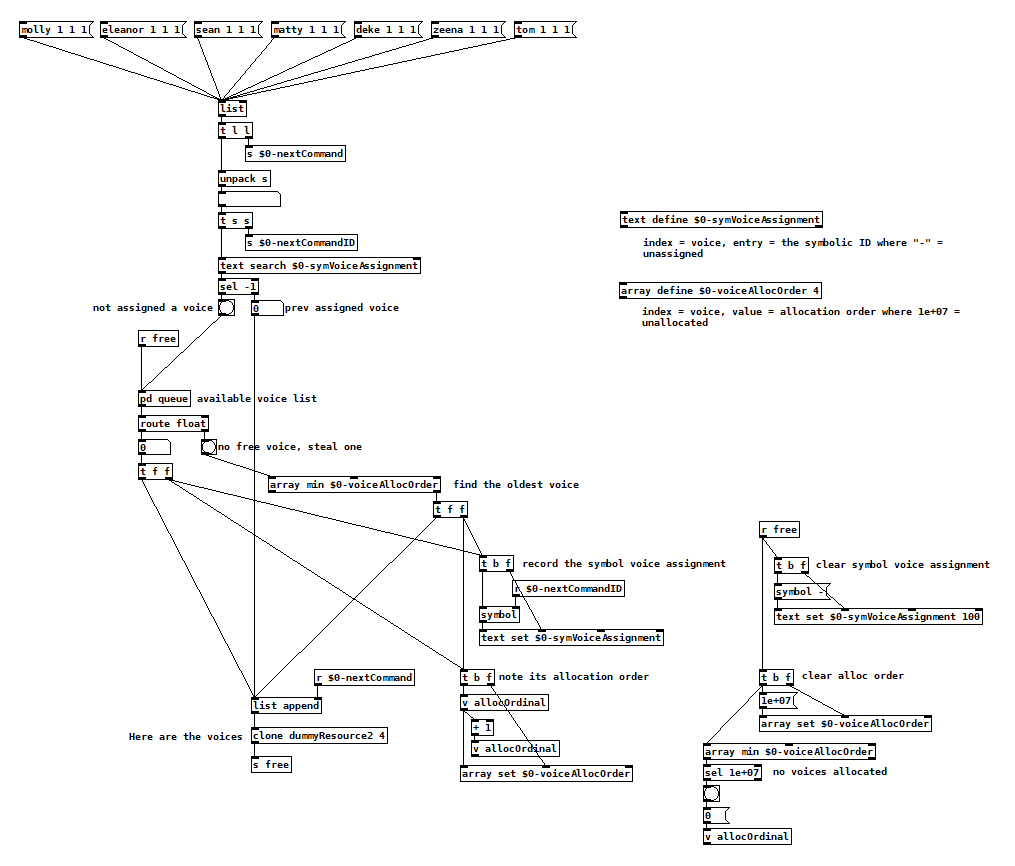

[poly] for anything

Hey, this is fun!

My needs are slightly different in that I usually can't tell when a voice is going to become free to be allocated again, so I make it the voice's responsibility to tell me when it's available. Voices also have to figure out when to retrigger, which happens during voice stealing but also when commands are routed back to the voice that's currently handling a previous instance. Here's pseudo code for my allocator:

- next command is issued
  - has it already been assigned a voice?
    - yes: send next command to that same voice
    - no: are there free voices?
      - yes:
        - record that that command is being handled by that voice
        - note the order that that voice was allocated
        - send next command to that voice
      - no:
        - find the oldest allocated voice
        - record that that command is being handled by that voice
        - note the order that that voice was (re)allocated
        - send next command to that voice
- when a voice becomes free
  - add it back into the list of available voices
  - clear whatever command it was previously associated with
  - clear whatever order that it was last allocated
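For readers who prefer text to patch cords, the allocator above can be sketched in Python roughly as follows. The class and method names are made up, and the allocation order is tracked implicitly through dict insertion order (Python 3.7+), which is one possible choice rather than how the patch does it.

```python
class Allocator:
    """Routes commands to voices, stealing the oldest allocation when none is free."""

    def __init__(self, num_voices):
        self.free = list(range(num_voices))
        # command -> voice; insertion order doubles as allocation order
        self.voice_of = {}

    def dispatch(self, command):
        """Return the voice index that should receive this command."""
        if command in self.voice_of:
            return self.voice_of[command]        # already assigned: same voice
        if self.free:
            voice = self.free.pop(0)             # take a free voice
        else:
            oldest = next(iter(self.voice_of))   # steal the oldest allocation
            voice = self.voice_of.pop(oldest)
        self.voice_of[command] = voice           # record command and order
        return voice

    def release(self, voice):
        """Called by a voice when it has finished and is available again."""
        for command, v in list(self.voice_of.items()):
            if v == voice:
                del self.voice_of[command]       # clear command and its order
        if voice not in self.free:
            self.free.append(voice)
```

With two voices, dispatching `"x"`, `"y"` and then `"z"` steals the voice that was handling `"x"`.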

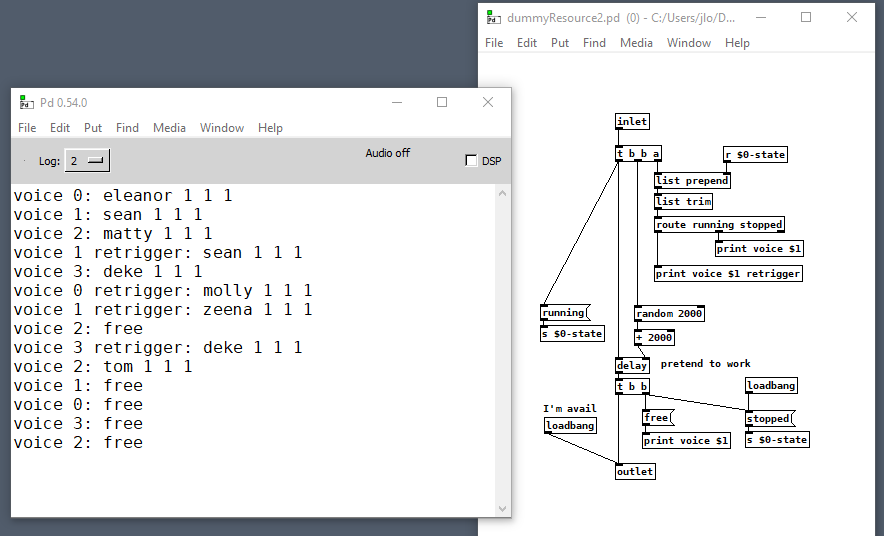

And then inside each voice:

- next command comes in
  - what state are we in?
    - stopped: trigger the action, go to running state
    - running: retrigger the action
- when the action is finished, go to stopped state and tell the allocator you are available
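The per-voice logic is a two-state machine. A minimal Python sketch, where the `on_free` callback stands in for "tell the allocator you are available" and the `log` list is only there to make the behaviour visible (both are illustrative assumptions):

```python
class Voice:
    """Two-state voice: 'stopped' or 'running'."""

    def __init__(self, index, on_free):
        self.index = index
        self.on_free = on_free  # callback into the allocator
        self.state = "stopped"
        self.log = []           # records what the voice did, for inspection

    def command(self, cmd):
        if self.state == "stopped":
            self.log.append(("trigger", cmd))
            self.state = "running"
        else:
            self.log.append(("retrigger", cmd))

    def finished(self):
        """The action has ended: go back to stopped and report availability."""
        self.state = "stopped"
        self.on_free(self.index)
```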

[poly] for anything

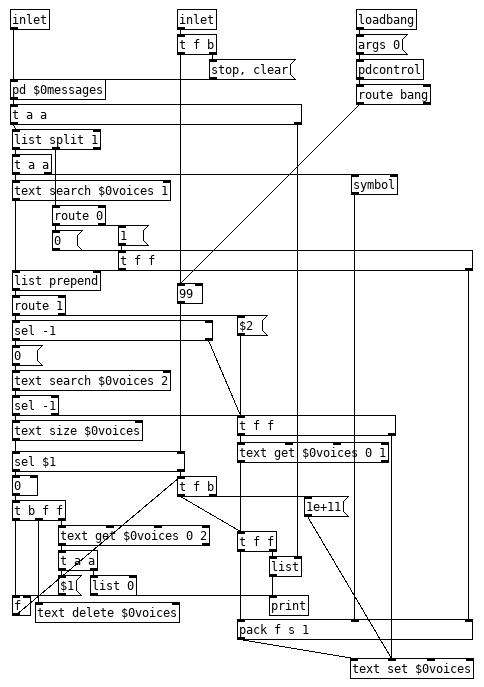

@60hz Voice stealing is not all that difficult to have. Here is 90% of [poly] reworked for symbols and those OSC messages of yours. It would be simpler to build this around [poly], but I just simplified an abstraction I already have. The few things it lacks are the ability to turn voice stealing off, changing the number of voices on the fly, and an all-off, all of which are easy enough to add. Just fire it up with the desired number of voices as an argument. Oh, I also skipped having it bump voices up the list on retrigger/changes, since I don't know how retrigger works in your patch and when or if a voice should be bumped up: on retrigger, on certain changes, or on all changes. That is simple enough to add as well.

spoly.pd

Edit: General cleanup of messiness and fixed a bug. And I forgot that I changed the [outlet] to a print for testing; fixed.

ELSE 1.0-0 with Live Electronics Tutorial Release Candidate #9 is out!

Hi! This has gotta be the biggest update ever. Geez... there's too much stuff, and I just decided to stop arbitrarily cause there's still lots of stuff to do. Ok, here go the highlights!

This is the 1st multichannel (MC) aware release of ELSE (so it needs Pd 0.54-0 or later)! Many, many objects were updated to become MC aware: 42 of them, to be exact. There are also many new objects, and many of them are MC capable: 20 out of 33! So in total I have 62 objects that deal with MC... that's a good start. More to come later!

Note that 4 of these new ones were just me being lazy and creating new mc oscillators with [clone], I might delete them and just make the original objects MC aware... so basically these new MC objects bring actual new functionalities and many are tools to deal with MC in many ways, like splitting, merging, etc...

With 33 new objects, this is the first release to reach and exceed the mark of 500 externals, what a milestone! (This actually scares me.) We now have 509 objects, and for the first time ever the number of objects has caught up with the number of examples in the Tutorial, which is also 509 now! But I guess eventually the tutorial will grow larger than the number of externals again...

Since the last release, ELSE comes with an object browser plugin. I have improved it and also included a browser for Vanilla objects. I think it's silly to carry these under ELSE and I hope I can bring this to Vanilla's core. See https://github.com/pure-data/pure-data/pull/1917

A very exciting new object is [sfz~], which is a SFZ player based on 'SFIZZ'. This is more versatile than other externals out there and pretty pretty cool (thanks alex mitchell for the help)!

I have created a rather questionable object called [synth~] which wraps around [clone] and [voices]/[mono], but I think it will be quite interesting to newbies. It loads synth abstractions in a particular template and makes things a bit more convenient. It also allows you to load different abstraction patches with dynamic patching magic.

[plaits~] has been updated to include 8 new synth engines from the latest firmware. Modular people are happy... (thanks amy for doing this)

One cool new object for MC is [voices~], which is a polyphonic voice manager that outputs the different voices in different channels. If you have MC aware oscillators and stuff, this allows you to manage polyphonic patches without the need of [clone] at all. This is kinda like how VCV works, and it opens the door for me to start designing modular inspired abstractions, something I mentioned before and that might come next and soon! So much being done, so much to do... What an exciting year for Pd with the incredibly nice MC feature!

There's lots more stuff and details, but I'll just shut up and link to the full changelog here: https://github.com/porres/pd-else/releases/tag/v1.0-rc9

You can get ELSE from deken as well. It's up there for macOS, Windows 64 bits, Linux 64 bits and Raspberry Pi. Please test and tell me if there's something funny.

Cheers!


MPE support in Pure Data?

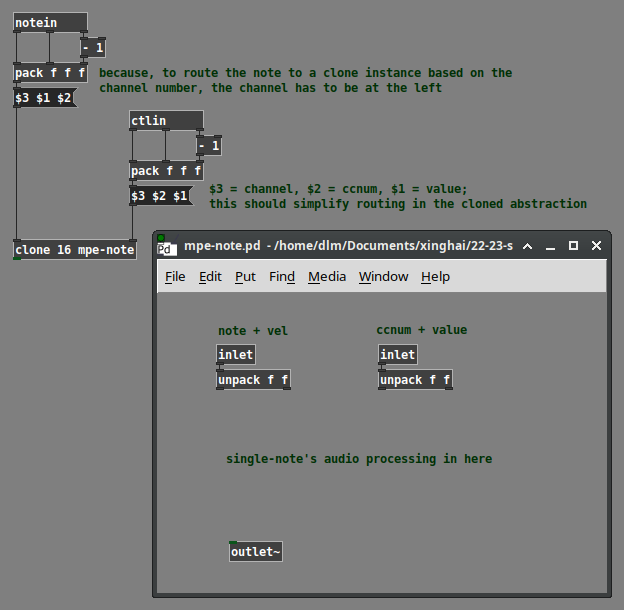

@jamcultur said:

I'm thinking about getting an MPE-capable MIDI controller to use with the synth I made in Pure Data. I'd like to enhance my synth to support the MPE features. Has anyone worked with MIDI MPE in Pure Data? Any advice or suggestions?

Based on a couple of quick reads: "In normal MIDI, Channel-wide messages (such as Pitch Bend) are applied to all notes being played on a single MIDI Channel. In MPE, each note is assigned its own MIDI Channel so that those messages can be applied to each note individually" (https://www.midi.org/midi-articles/midi-polyphonic-expression-mpe) and "MPE repurposes MIDI channels as notes instead of instruments. This means you can only have a single instrument on each port or cable and the instrument is limited to a maximum of 16‑note polyphony (one note on each of the 16 channels). Each note can have its own pitch‑bend, mod wheel and other expression messages, meaning you can now emulate the single‑string pitch vibrato of a guitar or violin without having the vibrato applied across the whole instrument" (https://www.soundonsound.com/sound-advice/mpe-midi-polyphonic-expression), it appears to be significantly less complicated than you might think.

MPE: Channel = voice number.

Where do you have voice numbers in Pd?

[clone]

Messages to [clone] should put the voice number leftmost. [notein], [ctlin] etc. put the channel number as the rightmost output. So you'd have to use [pack] and a message box to reorder the numbers. (Also note that MIDI objects report channels 1-16, but [clone 16] will produce voice numbers 0-15 -- easily handled, just don't omit the [- 1] objects.)
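In text form, the reordering amounts to something like the Python sketch below. The function name is made up; in Pd this would be the [pack], [- 1] and message box combination described above.

```python
def to_clone_message(channel, *values):
    """Move the MIDI channel (1-16) to the front as a 0-based voice number.

    [notein] gives pitch, velocity, channel; [clone] wants voice, pitch, velocity.
    """
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channels are reported as 1-16")
    return [channel - 1, *values]
```

So a note on channel 1 with pitch 60 and velocity 100 becomes `[0, 60, 100]`, and a pitch bend value on channel 16 goes to voice 15.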

I'll admit that I didn't download the full spec, so there might be some fine print that I'm not aware of. But based on the online descriptions, I think it's pretty much like this.

hjh

Question about Pure Data and decoding a Dx7 sysex patch file....

Hey Seb!

I appreciate the feedback

The routing I am not so concerned about. I already made a nice table-based preset system, following pretty strict rules for sends/receives for parameter values. So in theory I "just" need to get the data into a table. I am sure I will find a way to do that.

For me it's more the decoding of the sysex string that I need to research and think a lot about. It's a bit more complicated than the sysex I used for Blofeld.

The 32 voice dump confuses me a bit. I mean, most single-part (not multitimbral) synths have the same parameter settings for all voices, so I think I can probably get by with just decoding 1 voice and sending that data to all 16 voices of the synth? The only reason I see that one would need to send different data to each voice is if the synth is multitimbral and you can use, for example, voices 1-8 for part 1, 9-16 for part 2, 17-24 for part 3, and 25-32 for part 4. Then you would need to set different values for the different voices. I have no plan to make it multitimbral, as it's already pretty heavy on the CPU. Or am I misunderstanding what they mean by voices here?
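One reading that would resolve this, going by the commonly circulated description of the DX7 bulk format (an assumption here, not something confirmed in this thread): "voice" means a stored patch, and the 32 voice dump is a bank of 32 patches, each packed into 128 bytes with a 10-character name at the end. A rough Python sketch under that assumption; the function names are made up.

```python
NUM_VOICES = 32
VOICE_SIZE = 128                        # packed bytes per voice (assumed format)
DATA_SIZE = NUM_VOICES * VOICE_SIZE     # 4096
DUMP_SIZE = 6 + DATA_SIZE + 2           # header + data + checksum + F7


def split_bank(dump: bytes):
    """Split a 32-voice bulk dump into 32 packed 128-byte voices."""
    if len(dump) != DUMP_SIZE:
        raise ValueError("unexpected dump length")
    # F0 43 0n 09 20 00: sysex start, Yamaha ID, channel, format 9, byte count
    if dump[0] != 0xF0 or dump[1] != 0x43 or dump[3] != 0x09 or dump[-1] != 0xF7:
        raise ValueError("not a 32-voice bulk dump")
    data = dump[6:6 + DATA_SIZE]
    # Checksum: two's complement of the data sum, kept to 7 bits.
    if (-sum(data)) & 0x7F != dump[-2]:
        raise ValueError("checksum mismatch")
    return [data[i * VOICE_SIZE:(i + 1) * VOICE_SIZE] for i in range(NUM_VOICES)]


def voice_name(packed: bytes) -> str:
    """The last 10 bytes of a packed voice hold its ASCII name (assumed layout)."""
    return packed[118:128].decode("ascii", errors="replace")
```

If that reading holds, decoding one of the 32 entries and sending it to every polyphony voice of the Pd synth would be the right approach.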

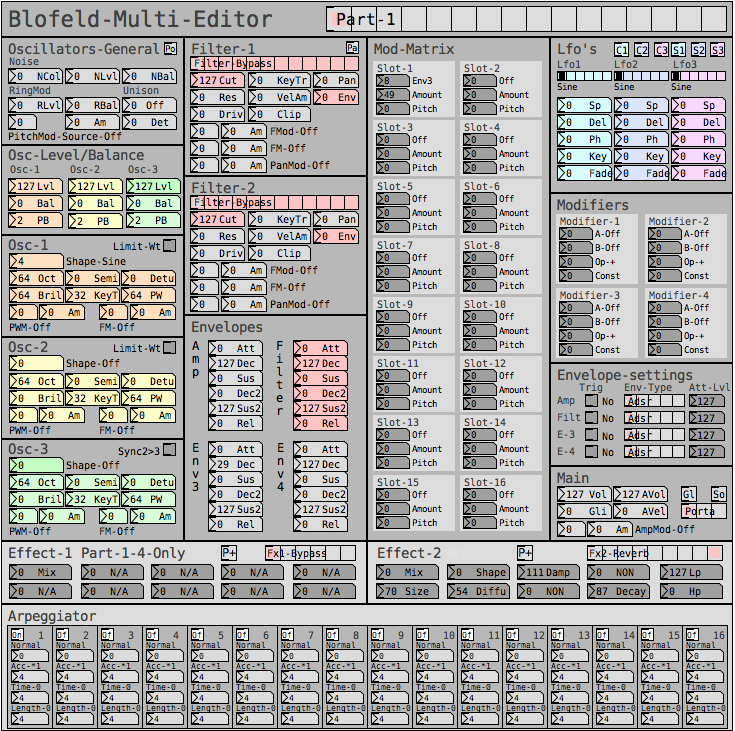

Blofeld:

What I did for Blofeld was to make an editor, so I can control the synth from Pure Data. Blofeld only has 4 knobs, and hundreds of parameters for each part... And there are 16 parts... So a thousand-plus parameters and only 4 knobs... You get the idea.

It's a bit of a nightmare of menu diving, so I just wanted to make something a bit more easily editable.

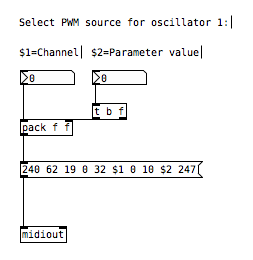

First I simply recorded every single sysex parameter of the Blofeld (hundreds of them) into Pure Data, then replaced the parameter value and the channel in each sysex string message with variables ($1 and $2), so I can send the data back to the Blofeld. I got all parameters working via sysex, but one issue is that when I change sound/preset in Pure Data, it sends ALL parameters individually to the Blofeld... Again, hundreds of parameters are sent at once, and that sometimes makes the Blofeld crash. It still needs a bit of work to be solid, and I think learning how to decode/encode a sysex string can help me get the Blofeld editor working properly too.

I tried several editors for Blofeld, even paid ones, and none of them actually works fully: they all have different bugs in the parameter assignments, or some of them only let you edit Blofeld in single mode, not in multitimbral mode. But the good thing is that I actually got ALL parameters working, which is a good start. I just need to find out how to manage the data properly and send it to Blofeld in a manner that does not crash it, maybe using some smarter approach to sysex.
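One thing I might try for the crash is spacing the messages out instead of sending everything in one burst. A rough Python sketch of the idea: the template bytes are placeholders (0x7D is the non-commercial test ID, not the real Blofeld message), and the 5 ms gap is a guess that would need testing on the hardware.

```python
# Hypothetical template: "$1" and "$2" mark where the variables go.
TEMPLATE = [0xF0, 0x7D, "$2", 0x01, "$1", 0xF7]


def fill(template, *args):
    """Replace "$1", "$2", ... with the given 7-bit values."""
    out = []
    for item in template:
        if isinstance(item, str):
            value = args[int(item[1:]) - 1]
            if not 0 <= value <= 127:
                raise ValueError("sysex data bytes must be 0-127")
            out.append(value)
        else:
            out.append(item)
    return bytes(out)


def schedule(messages, gap_ms=5):
    """Give each message its own send time instead of sending all at once."""
    return [(i * gap_ms, msg) for i, msg in enumerate(messages)]
```

In Pd the same spacing could be done with a queue that is drained by a [metro].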

But anyway, here are some snapshots for the Blofeld editor:

Image of the editor as it is now. Blofeld is 16-part multitimbral; you choose which part to edit with the top selector:

Here is how I send a single sysex parameter to Blofeld:

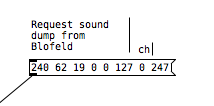

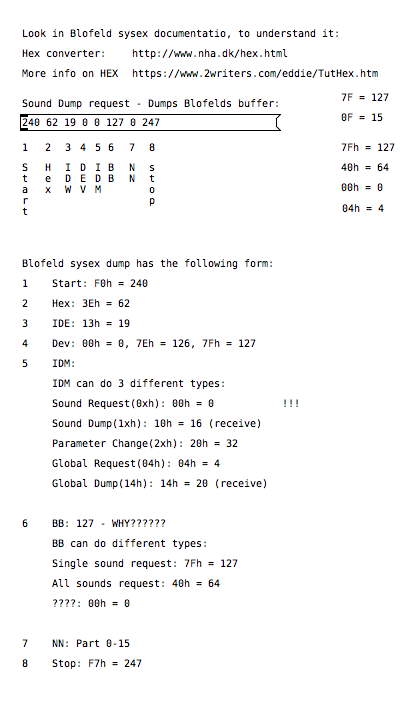

If I want to request a sysex dump of the currently selected sound of Blofeld (sound dump), I can do this:

I can then send the sound dump to Blofeld at any time to recall the stored preset. For the sound dump, these are the rules I follow:

For the parameters it was pretty easy: I could just record one into Pd and then replace the parameter and channel values with $1 & $2.

For sound dumps I had to learn a bit more, cause I couldn't just record the dump and replace values; I actually had to understand what I was doing. When you do a sysex sound dump from the Blofeld, it does not send back the sysex string that requests the sound dump, it only sends the actual sound dump.

I am not really a programmer, so it took a while to understand it. Not saying I fully understand everything, but the parameters are working, hehe.

So making something in Lua would be a big task, as I don't know Lua at all. I know some C++ from coding Axoloti objects and VCV Rack modules, but yeah. It's a hobby/fun thing. I think I would prefer to keep it all in Pure Data, as I know Pure Data decently.


So I do see this as a long-term project. I need to do it in small steps and learn things one at a time.

I do appreciate the feedback a lot and it made me think a bit about some things I can try out. So thanks

Few questions about oversampling

Hi,

About a year ago I started to learn a bit of Pure Data in order to create a patch that would act as a groovebox and that should perform well on limited CPU resources, since I want it to run on a Raspberry Pi. First I tried to make some kind of fork of the Martin Brinkmann groovebox patch. Even though it allowed me to learn a lot about data flow, I didn't get to the core of the patch and tweak the sound generation. This led me to abandon the attempt at forking the groovebox patch, because even though I could separate the GUI stuff from the sound generation and run them on different threads, etc., I couldn't go further in optimization in order to reduce the CPU use.

Then a few weeks ago I decided to start my project again from scratch, and this time I wanted to be more patient and learn anything needed in order to be capable of optimizing my patch as much as possible. After making a functional drum machine which runs at 2-3% of CPU with 8 different tracks, a 126-step sequencer, a bit of fx, etc., I tried to find synths that would operate well alongside the drum machine. And I basically didn't find any patch that wouldn't use a massive amount of CPU time. So I created my own synths, nothing incredible, but I'm happy with what I got, though I noticed some aliasing. I read a bit of the FLOSS manual about antialiasing and applied the method used in the manual (http://write.flossmanuals.net/pure-data/antialiasing/). It works well, but my synths almost tripled their CPU use, even though I put all my oscillators in the same subpatch in order to use only one instance of oversampling.

I haven't tried oversampling less than 16 times, but since oversampling is so CPU intensive I'm wondering if there is another option to get good sound definition at a lower CPU cost. I'm already using bandlimited waveforms, so I don't know what else I could do to limit the aliasing, especially for my FM patch, where bandlimited waveforms aren't very useful for reducing aliasing.

Since I want to have at least 4 synth tracks, with at least one synth having 5-voice polyphony, I want to know what the best thing to do is. Leave FM aside for this project and use switch~ to oversample 2 or 4 times the synths that use bandlimited waveforms? Or should I try to run a separate instance of Pd for each synth and control them from a GUI/control patch with netsend (though it wouldn't bring down the CPU use, at least it would provide some kind of multithreading for my patch)? Or is there another way to get some antialiasing? Or should I lower my expectations, because there is no solution that could provide decent antialiasing for 4 or more synths running at the same time with low CPU use in Pure Data in 2021?

Thanks to everyone who reads my topic and tries to give some advice on getting the best antialiasing/low CPU use solution.
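To get a feeling for how much oversampling the FM patch might really need, a back-of-the-envelope estimate with Carson's rule could help: the significant sidebands of simple two-operator FM reach roughly carrier + (index + 1) * modulator. A Python sketch of that estimate; the function names are made up, a sine carrier and modulator are assumed, and it is only a rule of thumb, since weaker sidebands extend further.

```python
def highest_sideband_hz(carrier_hz, modulator_hz, index):
    """Carson's rule: significant energy reaches about fc + (I + 1) * fm."""
    return carrier_hz + (index + 1) * modulator_hz


def min_oversampling(carrier_hz, modulator_hz, index, sample_rate=44100, max_factor=16):
    """Smallest power-of-two factor whose Nyquist covers the estimated bandwidth."""
    top = highest_sideband_hz(carrier_hz, modulator_hz, index)
    factor = 1
    while factor < max_factor and top > factor * sample_rate / 2:
        factor *= 2
    return factor
```

For a 4 kHz carrier and modulator at index 8, the estimate is 40 kHz, which a factor of 2 at 44.1 kHz already covers, so 16 times may be more than most settings need.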

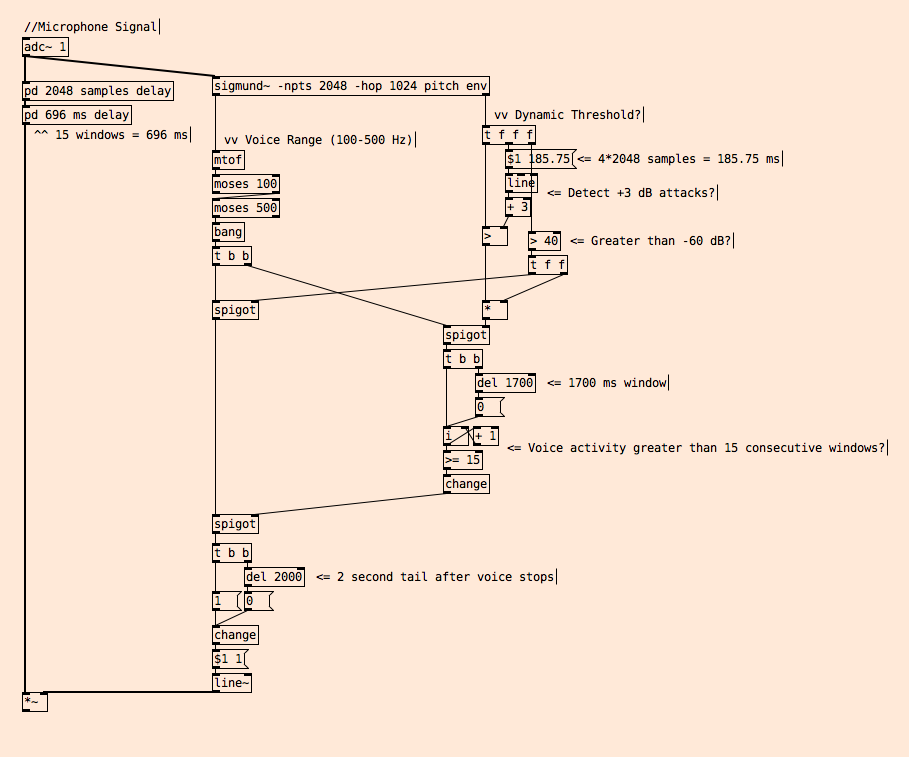

How to do Voice Activity Detection (VAD) Algorithms?

Hello!

I’ve been working on a sound installation that records your voice in a public space and then plays it back over an FM radio transmitter.

Since then, I’ve been searching for different voice activity detection (VAD) algorithms for Pure Data and found very little.

So far, my best lead is this article: https://medium.com/linagoralabs/voice-activity-detection-for-voice-user-interface-2d4bb5600ee3

So I thought I’d share my simple algorithm for VAD in public spaces and ask:

How would you approach detecting voice activity in real-time in a public space with a lot of noises and non-voice signals?

Here's my patch: VAD.pd
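As a baseline for comparison (this is not the algorithm in VAD.pd, just a generic energy detector with a hangover, and the threshold and hangover values are arbitrary assumptions), a minimal Python sketch:

```python
import math


def rms(frame):
    """Root mean square level of one frame of samples."""
    return math.sqrt(sum(x * x for x in frame) / len(frame))


def detect(frames, threshold=0.05, hangover=3):
    """Return one True/False per frame: True while activity is assumed.

    A frame above the threshold switches detection on; it stays on until
    more than `hangover` consecutive quiet frames have passed.
    """
    active = False
    quiet = 0
    result = []
    for frame in frames:
        if rms(frame) >= threshold:
            active = True
            quiet = 0
        elif active:
            quiet += 1
            if quiet > hangover:
                active = False
        result.append(active)
    return result
```

A detector like this reacts to any loud sound, not just voices, which is exactly the limitation in a noisy public space that the question is about.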