ELSE 1.0-0 RC12 with Live Electronics Tutorial Released

Hi, it's been a while, here we go:

RELEASE NOTES:

Hi, it's been almost 8 months without an update and I never took this long!!! So there's a lot of new stuff to cover, because it's not like I've just been sleeping.

The reason for the delay is that I'm trying to pair up with the release cycles of PlugData and we're having trouble syncing up. PlugData 0.9.0 came out recently after a delay of 6 months and we couldn't really sync then... We had no luck syncing for this update either, so I'm just releasing it now because enough is enough, and hopefully with the next PlugData release we can sync and offer the same version.

As usual, the development pace is always quite busy and I'm just arbitrarily wrapping things up in the middle of adding more and more things that will just have to wait.

First, I had promised support for double precision. I made changes so we can build for it, but it wasn't really working yet when I uploaded it to deken and tested it. So, next time?

And now for the biggest announcement: I'm finally and officially releasing a new pack as a submodule, which is a set of abstractions inspired by EuroRack Modules, so I'm thinking of VCV-like things but in the Pd paradigm. Some similar stuff has been made for Pd over the years, most notably and famously "Automatonism", but I'm really proud of what I'm offering. I'm not trying to pretend Pd is a modular rack and I'm taking advantage of being in Pd. I'm naming this submodule "Modular EuroRacks Dancing Along" (💩 M.E.R.D.A 💩) and I've been working on it for a year and a half now (amongst many other things I do). PlugData has been offering this for a while now, by the way. Not really fully in sync though.

MERDA modules are polyphonic, thanks to multichannel connections introduced in Pd 0.54! There are 20 modules so far and some are quite high level. I'm offering a PLAITS module based on the Mutable Instruments version. I have a 6-Op Phase Modulation module. A "Gendyn" module which is pretty cool. I'm also including an "extra" module that is not really quite a modular thing at all but fits well called "brane", which was a vanilla patch I first wrote like 15 years ago and is a cool granular live sampler and harmonizer. You'll also find the basics, like oscillators, filters, ADSR envelope and stuff I'm still working on. Lastly, a cool thing is that it has a nice presets system that still needs more work but is doing the job so far.

There are ideas and plans to add hundreds more MERDA modules, let's see when and if I can. People can collaborate and help me by creating modules that follow the template, by the way.

Thanks to Tim Schoen, [play.file~] is now a compiled object instead of an abstraction and it supports MP3, FLAC, WAV, AIF, AAC, OGG & OPUS audio file extensions. A new [sfload] object can import these files into arrays (but it still needs a lot more work). There are many other player objects in ELSE that can load and play samples but these don't yet support these new formats (hang in there for the next version update).

Tim also worked on new [pdlink] and [pdlink~] objects, which send control and signal data to/from Pd instances, versions and even forks of Pure Data (it's like [send]/[receive] and [send~]/[receive~], all you need is a symbol, no complicated network or OSC configuration!). And yes, it works via UDP between different computers on the same network. And hell yeah, [pdlink~] has multichannel connections support! By the way, you can also communicate to a [pd~] subprocess. This will be part of ELSE and PlugData of course, and will allow easy communication between PlugData and Pd-Vanilla for instance.

The great pd-lib-builder system has been replaced by a 'cmake' build process called 'pd.build' by Pierre Guillot. This was supposed to simplify things. Also, the [sfont~] object was a nightmare to build, with several dependencies that were simply hell to manage; now we have a new and much simpler system and NO DEPENDENCIES AT ALL!!! Some very rare file formats with obscure and seldom-used sound file extensions may not work though... (and I don't care, most and the 'sane' ones will work). The object now also dumps all preset information with a new message, and backwards compatibility broke a bit.

I'm now back to offering a modified version of [pdlua] as part of ELSE, which has recently seen major upgrades by Tim to support graphics and signals! This is currently needed in ELSE to provide a new version of [circle] that needed to be rewritten in lua so it'd look the same in PlugData. Ideally I'd hope I could only offer compiled GUI objects, but... things are not ideal

The lua loader works by just loading the ELSE library, no need for anything "else". I'm not providing the actual [pdlua] and [pdluax] objects as they are not necessary, and this is basically the only modification. Since PlugData provides support for externals in lua, if you load ELSE you can make use of stuff made for PlugData with lua without the need to install [pdlua] in Pd-Vanilla.

For next, we're working on a [lua] object that will allow inline scripting and will also work for audio signals (again, wait for the next version)! Also for the next version, I'm saving Ben Wesch's nice 3d oscilloscope made in lua (it'll be called [scope3d~]). There's a lot going on with the lua development, which is very exciting.

As for more actual new objects I'm including, we have [vcf2~] and [damp.osc~]. The first is a complex one pole resonant filter that provides a damping oscillation for a ringing time you can set, the next is an oscillator based on it. There's also the new [velvet~] object, a cool and multichannel velvet noise generator that you can also adjust to morph into white noise.

I wasn't able to add multichannel capabilities to many existing objects in ELSE in this one, just a couple of them ([cosine~] and [pimp~]). The total number of multichannel-aware objects is now 92! This is almost a third of the number of audio objects in ELSE. I think that a bit over half might be a reasonably desired target. More multichannel support for existing objects to come in the next releases.

Total number of objects in the ELSE library is now 551!

As for the Live Electronics tutorial, as usual, there are new examples for new objects, and I made a good revision of the advanced filter section, where I added many examples to better explain how [slop~] works, with equivalent [fexpr~] implementations.

Total number of examples in the Live Electronics Tutorial is now 528!

There are more details of course, and breaking changes as usual, but these are the highlights! For a full changelog, check https://github.com/porres/pd-else/releases/tag/v.1.0-rc12 (or below at this post).

As mentioned, unfortunately, ELSE RC12 is not yet fully merged, paired up and 100% synced in PlugData. PlugData is now at version 0.9.1, reaching the 1.0 version soon. Since ELSE is currently so tightly synced to the development of PlugData, the idea is to finally offer a final 1.0 version of ELSE when PlugData 1.0 is out. Hence, it's getting closer than ever. Hopefully we will have a 100% synced ELSE/PlugData release when 0.9.2 is out (with a RC 13 maybe?).


Please support me on Patreon https://www.patreon.com/porres

You can follow me on instagram as well if you like... I'm always posting Pd development stuff over there https://www.instagram.com/alexandre.torres.porres/

cheers

ps. Binaries for mac/linux/windows are available via deken. I needed help for raspberry pi

CHANGELOG:

LIBRARY:

Breaking changes:

- [oscope~] renamed to [scope~]

- [plaits~] changed inlet order of modulation inputs and some method/flag names. If a MIDI pitch input of 0 or less is given, it becomes '0hz'.

- [gbman~] changed signal output range, it is now filtered to remove DC and rescaled to a sane -1 to 1 audio range.

- [dust~] and [dust2~] now go up to the sample rate and become white noise (removed restriction that forced actual impulses, that is, no consecutive non zero values)

- [cmul~] object removed (this was only used in the old conv~ abstraction to try and reduce a bit the terrible CPU load)

- [findfile] object removed (use vanilla's [file which] now that it has been updated in Pd 0.55-0)

- [voices] swapped retrig modes 0 and 1, 'voices' renamed to 'n', now it always changes voice number by default as in [poly] (this was already happening unintentionally as a bug when one voice was already taken). The 'split' mode was removed (just use [route], will you?)

- [voices~] was also affected by changes in [voices] of course, such as 'voices' message being renamed to 'n'.

- [sr~]/[nyquist~] changed output loading time to 'init' bang

- [sample~] object was significantly redesigned and lots of stuff changed, new messages and flags, added support for 64-bit audio files (Pd 0.55 in double precision and ELSE compiled for 64 bits is required for this). Info outlet now also outputs values for length in ms and bit depth.

- [sfont~] uses now a simpler build system and this might not load very very rare and unusual sound formats.

Enhancements/fixes/other changes:

- building for double precision is now supposedly supported; by the way, the build system was changed from pd-lib-builder to pd.build by Pierre Guillot.

- [play.file~] is now a compiled object instead of an abstraction thanks to Tim Schoen, and it supports MP3, FLAC, WAV, AIF, AAC, OGG & OPUS file extensions.

- Support for double precision compilation was improved and should be working for all objects (not providing binaries yet, and not fully tested yet, by the way).

- The ELSE binary now loads a modified version of [pdlua], but no [pdlua] and [pdluax] objects are provided.

- added signal to 2nd inlet of [rm~].

- fixed 'glide' message for [mono~].

- fixed [voices] consistency check bug in rightmost outlet and other minor bugs, added flags for 'n', 'steal' and offset.

- [gain~] and [gain2~] changed learn/forget shortcut

- [knob] fixed sending messages to 'empty' when it shouldn't, ignore nan/inf, prevent a tcl/tk error if lower and upper values are the same; added "learn/forget" messages and shortcut for a midi learn mechanism.

- [mpe.in] now outputs port number and you can select which port to listen to.

- Other MIDI in objects now deal with port number encoded to channel as native Pd objects. Objects affected are [midi.learn], [midi.in], [note.in], [ctl.in], [bend.in], [pgm.in], [touch.in] and [ptouch.in].

- [pi]/[e] now take a value name argument.

- [sr~]/[nyquist~] take clicks now and a value name argument.

- fixed phase modulation issues with [impulse~] and [pimp~].

- [cosine~] fixed sync input.

- added multichannel features to [cosine~] and [pimp~].

- [plaits~] added a new 'transp' message and a functionality to allow MIDI input to supersede signal connections (needed for the 'merda' version [see below]), fixed MIDI velocity.

- [pluck~] added a new functionality to allow MIDI input to supersede signal connections (needed for the 'merda' version [see below]).

- 26 new objects, [velvet~], [vcf2~], [damp.osc~], [sfload], [pdlink] and [pdlink~], plus abstractions from a newly included submodule called "Modular Euro Racks Dancing Along" (M.E.R.D.A)! Warning, this is all just very very experimental still, the objects are: [adsr.m~], [brane.m~], [chorus.m~], [delay.m~], [drive.m~], [flanger.m~], [gendyn.m~], [lfo.m~], [phaser.m~], [plaits.m~], [plate.rev.m~], [pluck.m~], [pm6.m~], [presets.m], [rm.m~], [seq8.m~], [sig.m~], [vca.m~], [vcf.m~] and [vco.m~] (6 of these are multichannel aware).

Objects count: total of 551 (307 signal objects [92 of which are MC aware] and 244 control objects)!

- 311 coded objects (203 signal objects / 108 control objects)

- 240 abstractions (104 signal objects / 136 control objects)

TUTORIAL:

- New examples and revisions to add the new objects, features and breaking changes in ELSE.

- Added a couple of examples for network communication via FUDI and [pdlink]/[pdlink~]

- Section 36-Filters(Advanced) revised, added more examples and details on how [slop~] works.

- Total number of examples is now 528!

Where does latency come from in Pure Data?

@Pandas As above..... Pd has an input > output delay equal to its block size.

Put something in and it will output 64 samples later.

But the soundcard cannot convert that to analogue instantly... so we add a buffer that gives it time to process the audio before the next block arrives.... MOTU 2-3ms with ASIO... On board soundcard usually 30-80ms.

I see you posted elsewhere on the forum..... https://forum.pdpatchrepo.info/topic/14495/sync-to-external-daw-midi-clock-audio-latency

I don't know those programs and maybe someone else will help.

Sending the audio samples to another program through a port will still not be instantaneous... but should be close unless you are listening to it at the same time.... but even media players will buffer at least 512 samples before they play a stream.

Does QSynth have compensation settings to delay the midi and attempt to match the audio timing?

What is your OS?

As you are communicating with software rather than a DAC you could try reducing the Delay (mSecs) in Pd media settings..... down to 3, then 2, then 1.. 0 probably not but you never know.... basically until it hangs and then go back to the last setting that worked.

Apply but don't save the settings until you are done.... or Pd might not start or might not let you change them again while it is "stuck" even if you restart it.

There is a fix for that..... I will upload it if that happens..

Running Pd at 96kHz audio rate should I think reduce the 64 sample (Pd internal) block delay.... you could try that too...... I think it should output in half the 48kHz time.
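To put rough numbers on that block delay, here is a minimal sketch in Python (not Pd code). It assumes the default 64-sample block and only covers Pd's internal block, not the soundcard buffer or the Delay (mSecs) setting; the function name is just for illustration.

```python
def block_latency_ms(block_size: int, sample_rate: float) -> float:
    """Duration of one DSP block in milliseconds."""
    return 1000.0 * block_size / sample_rate


# One 64-sample block at a few common sample rates.
for sr in (44100, 48000, 96000):
    print(f"{sr} Hz: {block_latency_ms(64, sr):.3f} ms")
# 44100 Hz: 1.451 ms
# 48000 Hz: 1.333 ms
# 96000 Hz: 0.667 ms
```

So, under that assumption, one block at 96kHz lasts half as long as one block at 48kHz.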

And try this..... it might help....... depends what you are wanting to achieve...... https://forum.pdpatchrepo.info/topic/13125/batch-processing-audio-faster-than-realtime

David.

Circular buffer issues

@jameslo said:

Honestly, I didn't know if that was @fintg's requirement,

It's certainly a reasonable guess. If the requirement instead were "I just played something cool; write the last 10 seconds to disk" you can do that without a circular buffer at all.

I was just surprised and annoyed that one can only access the delay line's internal buffer at audio rate (and was hoping that someone would prove me wrong).

Access to the internal buffer wouldn't be very useful without also knowing the record-head position. In that case delwrite~ would need an outlet for the current frame being written.

That would actually be a very nice feature request.

In SuperCollider as well, DelayN, DelayL and DelayC don't give you access to the internal buffer. But you can create your own buffer and write into it, with total control over phase, with BufWr -- and, because you control the write phase, you already know what it is. It's quite a nice way to do it.

Basically the lack of ipoke~ in vanilla causes some headaches.

Look at the hoops I have to jump through! The extra memory I have to use!

I don't think there is any way to do this without using some extra memory.

In a circular buffer, you have:

|~~~~~~ new audio ~~~~~~|~~~~~~ old audio ~~~~~~|

^ record head

When you write to disk, naturally you want the old audio earlier in the file. There are only two ways to do that. One is to write the "old" chunk without closing the file, and append the "new" chunk, and then close the file.

In SC, if I know the record head position, I'd do it like:

buf.write(path, "wav", "int24", startFrame: recHead, leaveOpen: true, completionMessage: { |buf|
    buf.writeMsg(path, "wav", "int24", numFrames: recHead, startFrame: 0, leaveOpen: false)
});

AFAICS Pd does not support this, so you're left with duplicating new after old data. (FWIW, though, there's plenty of memory in modern computers; I wouldn't lose sleep over this.)
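As a language-neutral illustration of the "old before new" reordering, here is a small Python sketch. It is not Pd or SC code: the buffer is a plain list, and `rec_head` is assumed to be the index where the next sample would be written, so everything from `rec_head` to the end is the oldest audio.

```python
def unwrap_circular(buf, rec_head):
    """Return a copy of buf in chronological order (oldest sample first)."""
    return buf[rec_head:] + buf[:rec_head]


# Simulate writing 11 samples (0..10) into an 8-slot circular buffer.
size = 8
buf = [0] * size
rec_head = 0
for sample in range(11):
    buf[rec_head] = sample
    rec_head = (rec_head + 1) % size

print(buf, rec_head)                    # [8, 9, 10, 3, 4, 5, 6, 7] 3
print(unwrap_circular(buf, rec_head))   # [3, 4, 5, 6, 7, 8, 9, 10]
```

The copy is the "extra memory" mentioned above: the reordered data exists alongside the original buffer until it has been written out.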

Then there is the problem of synchronous vs asynchronous disk access. AFAICS Pd's disk access is synchronous, and because the control layer is triggered from the audio loop, slow disk access could cause audio dropouts. OS file system caching might reduce the risk of that, but you never know. Ross Bencina's article about real-time audio performance advises against time-unbounded operations in the audio thread.

SC's buffer read/write commands run in a lower priority thread; wrt audio, they are asynchronous. This is good for audio stability, but it means that, by the time you get around to writing, the record head has moved forward. So, even though I could do the two-part write easily, I'd get a few ms of new data at the start of the file. I think I would solve that by allocating an extra, say, 2 seconds and then just don't write the 2 seconds after the sampled-held recHead value: startFrame: recHead + (s.sampleRate * 2). (If it takes 2 seconds to write a 10 second audio file, then you have bigger problems than circular buffers.) Then the record head can move freely into that zone without affecting audio written to disk.

hjh

Convert analog reading of a microphone back into sound

@MarcoDonnarumma Just had a cursory look at your video.... very good.

So you will understand more musical tech terms.

With your low sample rate the steps in the waveform are massive. The result is approximately what you would ask of a "fuzz" box.

Your ears only hear the rapid changes where the waveform rises or falls vertically. Your brain can only interpret the audio that way as it gets no intermediate information.

A rapid rise or fall like those you see in the scope are..... because the signal rate is now (after [sig~] ) 44100 samples per second..... actually a very high frequency signal....... one half (the left or right half) of a 22KHz "note".

Usually called "aliasing"...... they are there because there was no information before or after to give the real analogue slope of the wave before it was sampled.

For CD audio the rate is also 44100Hz. The Nyquist is 22050Hz and a low pass filter in the DAC removes everything above 20000Hz so as to remove such artefacts.

Putting a [lop~] will smooth out those steps and approximate the original waveform as it was originally sampled. The downside is that you will no longer hear the audio because it is likely outside the range of most people's hearing (in this case only!.... with audio below 40Hz..... if it was a 200Hz note you would hear it).

If you put a [lop~] (between [sig~] and [dac~]) with a fader connected to its right inlet with a range of say 0.3 to 40 you can play with the fader to get an audible signal that is close to what you want to hear.

A sort of "depth of fuzz".

You might need a slightly different range on the fader..... 0.1 to 25 or something.
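To see what a low-pass does to those steps, here is a rough Python sketch of a generic one-pole low-pass filter. It is only meant to be similar in spirit to [lop~], not a copy of its implementation; the coefficient formula and the 88-samples-per-step figure (about 44100 / 500) are assumptions for illustration.

```python
import math


def one_pole_lowpass(samples, cutoff_hz, sample_rate=44100.0):
    """Generic one-pole low-pass: y[n] = y[n-1] + coef * (x[n] - y[n-1])."""
    # Assumed coefficient: 2*pi*f/sr, clipped to the range 0..1.
    coef = min(1.0, max(0.0, 2.0 * math.pi * cutoff_hz / sample_rate))
    out, y = [], 0.0
    for x in samples:
        y += coef * (x - y)
        out.append(y)
    return out


# A slow data stream held at audio rate: each value repeats about 88 times.
stepped = [0.0] * 88 + [1.0] * 88
smoothed = one_pole_lowpass(stepped, cutoff_hz=40.0)

# The vertical jump at index 88 becomes a gradual ramp.
print(stepped[88], smoothed[88])
```

Raising `cutoff_hz` lets more of the step edge through, which is the "depth of fuzz" control described above.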

Looking at your video....... seeing (yes, ok, hearing) that you need audible sound.... not 192KHz audiophile sound.... and bearing in mind the original 40Hz analogue signal is inaudible to a lot of people, I don't think the clock problem is going to have a significant effect.

But if you are mistaken about the 40Hz maximum and there is actually more information that you need....... think heartbeat (low) + blood rushing (high) then upping your sample rate from the arduino will be necessary to get the higher frequencies.

500Hz sample rate will only give you up to 250Hz of audio information, just like 44100Hz only renders 22050Hz for our delectation.

David.

P.S. I don't much like maths..... not true..... it is fascinating but too hard for my feeble brain.

But without bothering to work it out, in theory, to preserve the signal the [lop~] should be just below the Nyquist (half the sample rate)... so for 500Hz sampling a [lop~ 240] should do that.

So I was way off base with the [lop~] values I posted above.

The signal would be very reduced (much lower I think) with the values I gave above.

However, [lop~] is not a very "sharp" filter... low dB/octave...... so maybe I was not in fact wrong...... and anyway you will need some distortion so as to properly hear your 40Hz note.

I hope you like to have someone struggling alongside you while you work.

I have to research what that means when the signal has already been oversampled by [sig~]..... but I think it doesn't matter.

Someone clever will tell us first no doubt.

@jameslo 's solution above should give a better outcome though than with a rather uncertain value for [lop~].

It will depend on what you need from your patch.

Raspberry Pi Bluetooth Speaker not showing up in Audio preferences

@eulphean said:

I'm using pure data on a raspberry pi 3+ for a project. I have bluetooth configured properly on it. I can send regular audio to bluetooth speaker connected, but the preferences -> audio in PureData doesn't show the bluetooth speaker. It only shows internal audio card or if I have a USB audio card, that shows too. But no bluetooth device.

How do I configure that? Do I need to use jack or something to route audio to bluetooth for Pd?

My experience with Bluetooth audio in Linux has been:

- JACK has zero tolerance for the audio driver ever being late -- expect crashes or system lockups if you try to route audio from JACK to Bluetooth. That is, just don't.

- PulseAudio's support for BT audio is pretty good -- the "regular audio" that you spoke of. Audio production apps typically bypass PulseAudio, in which case BT audio may simply not be supported for them. That is, I expect you'd hit the same problem with SuperCollider, Audacity, Ardour, VCV Rack etc etc etc.

I'm not aware of a solution... That's not to say that there absolutely isn't one, but the Linux audio space is not unified as it is on Mac, so audio device support may not be universal.

hjh

Logarithmic glissando

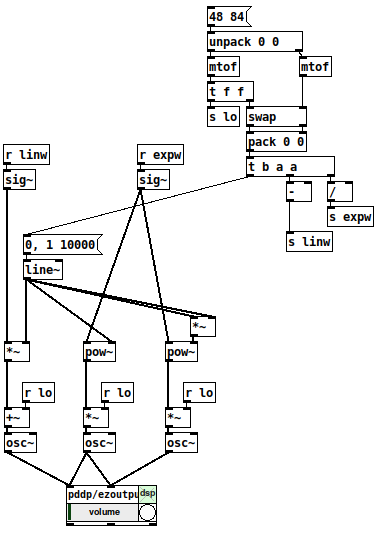

One of the very early lessons that I teach in my interactive multimedia class is range mapping, where I derive the formulas and then leave the students with ready-to-use patch structures.

- If it's based on incoming data, first normalize (0 to 1, or -1 to +1, range). If you're generating a control signal, generate a normal range (e.g. [phasor~] is already 0 to 1).

- For both linear and exponential mapping, there's a low value lo and a high value hi. (Or, if the normalized range is bipolar -1 to +1, a center value instead of lo.)

- The "width" of the range is: linear hi - lo, exponential hi / lo.

- Apply the width to the control signal by: linear, multiplying ((hi - lo) * signal); exponential, raising to the power of the control signal = (hi / lo) ** signal.

- Then (linear) add the lo or center; (exponential) multiply by the lo or center.

One way to remember this is that the exponential formula "promotes" operators to the "next level up": + --> *, - --> /, * --> power-of (and / --> log, but that would only be needed for normalizing arbitrary exponential data from an external source). So if you know the linear formula and the operator-promotion rule, then you have everything.

- linear: (width * signal) + lo

- exponential: (width ** signal) * lo

(Then the "super-exponential" that bocanegra was hypothesizing would exponentiate twice: ((width ** signal) ** signal) * lo = (width ** (signal * signal)) * lo.)
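The two formulas can be sketched outside Pd in a few lines of Python. This is just an illustration of the arithmetic above; the function names are made up, the signal is assumed to be already normalized to 0..1, and the exponential version assumes lo and hi are both positive.

```python
def lin_map(signal, lo, hi):
    """Linear mapping: (width * signal) + lo, with width = hi - lo."""
    return (hi - lo) * signal + lo


def exp_map(signal, lo, hi):
    """Exponential mapping: (width ** signal) * lo, with width = hi / lo."""
    return (hi / lo) ** signal * lo


# Halfway through a 100..400 range:
print(lin_map(0.5, 100.0, 400.0))  # 250.0 (arithmetic midpoint)
print(exp_map(0.5, 100.0, 400.0))  # 200.0 (geometric midpoint, one octave above lo)
```

Both mappings hit lo at signal = 0 and hi at signal = 1; they only differ in how they travel between the two.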

[mtof~] is a great shortcut, of course, but -- I drill this pretty hard with my students because if you understand this, then you can map any values onto any range, not only MIDI note numbers. IMO this is basic vocabulary -- you'll get much further with, say, western music theory if you know what is a major triad, and you can go much further with electronic music programming if you learn how to map numeric ranges.

hjh

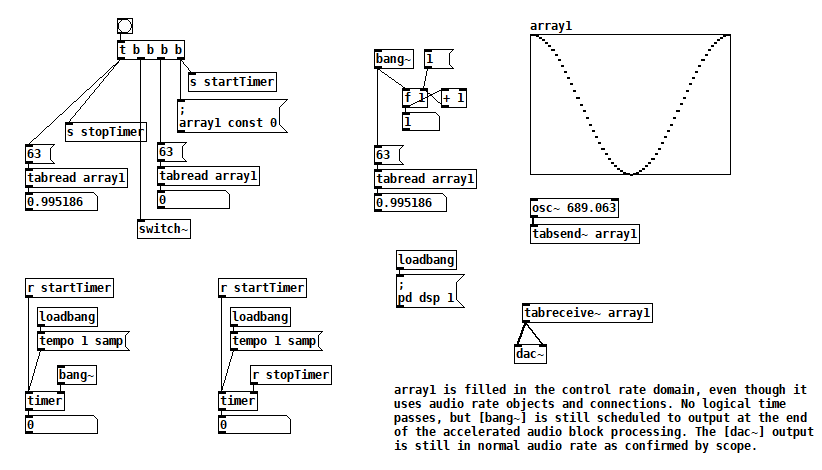

banging [switch~] performs audio computations offline!

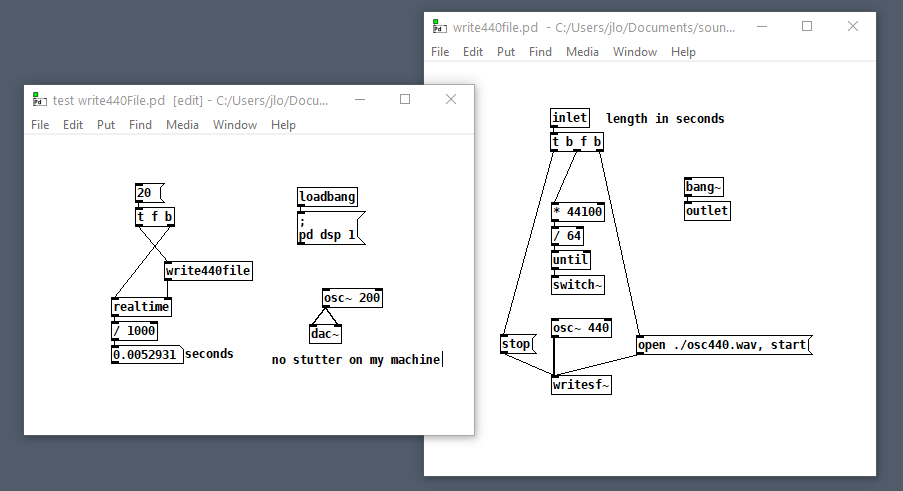

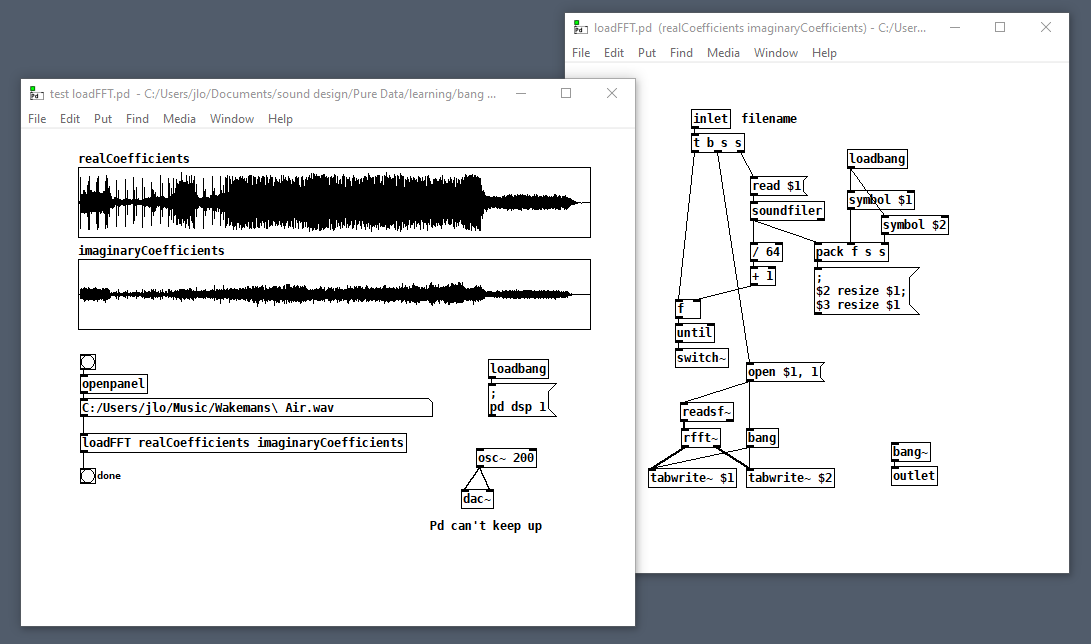

According to block~ help, if you bang [switch~] it runs one block of DSP computations, which is useful for performing computations that are more easily expressed as audio processing. Something I read (which I can't find now) left me with the impression that it runs faster than normal audio computations, i.e. as if it were in control domain. Here are some tests that confirm it, I think: switch~ bang how fast.pd

The key to this test is that all of the bangs sequenced by [t b b b b] run in the same gap between audio block computations. When [switch~] is banged, [osc~] fills array1, but you can see that element 63 of array1 changes after [switch~] is banged. Furthermore, no logical time has elapsed. So it appears that one block of audio processing has occurred between normal audio blocks. [bang~] outputs when that accelerated audio block processing is complete.

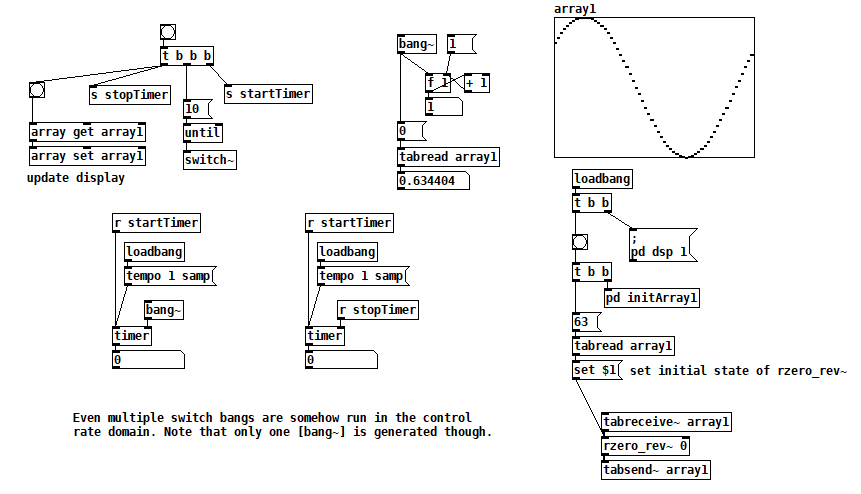

This next test takes things further and bangs [switch~] 10 times at control rate. Still, no logical time elapses, and [bang~] only outputs when all 10 bangs of [switch~] are complete. [rzero_rev~ 0] is just an arcane way of delaying by one sample, so this patch rotates the contents of array1 10 samples to the right. switch~ bang how fast2.pd

(There are better ways to rotate a table than this, but I just needed something to test with. Plus I never pass up a chance to use [rzero_rev~ 0].)

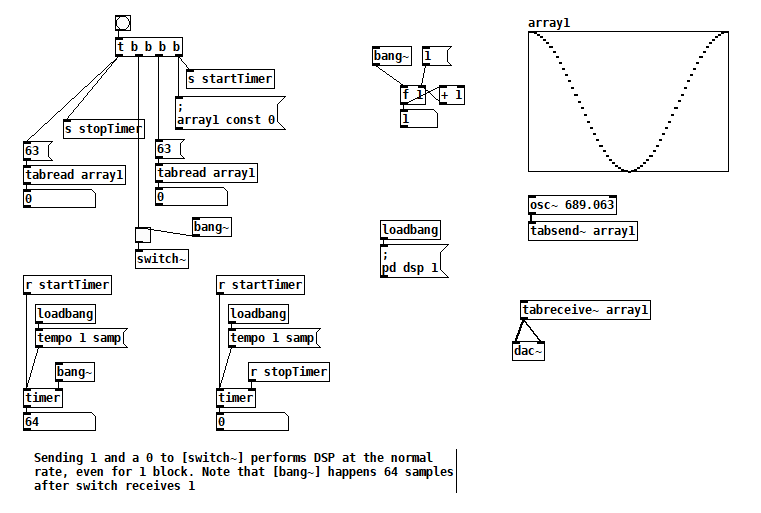

Finally, I've seen some code that sends a 1 to [switch~] and then sends 0 after one block of processing. In this test you can see that one block of audio is processed in one block of logical time, i.e. the normal way. switch~ bang how fast3.pd

But that second test suggests how you could embed arbitrary offline audio processing in a patch that's not being run with Pd's -batch flag or fast-forwarded with the fast-forward message introduced in Pd 0.51-1. Maybe it's an answer to two questions I've seen posted here: Offline analysis on a song and Insant pitch shift. Here's a patch that writes 20s of 440 Hz to a file as fast as possible (adapted from @solipp's patch for the first topic). You just compute how many blocks you need and bang away. write440File.zip
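For the "compute how many blocks you need" step, the arithmetic is simple enough to sketch in Python. This assumes a 44100 Hz sample rate and the default 64-sample block; adjust both if your patch uses something else. The function name is just for illustration.

```python
import math


def blocks_needed(seconds, sample_rate=44100, block_size=64):
    """Number of [switch~] bangs needed to cover the given duration."""
    # Round up so the last partial block is still computed.
    return math.ceil(seconds * sample_rate / block_size)


print(blocks_needed(20))  # 13782
```

Since 20 s is not a whole number of blocks at these settings, the last block overshoots slightly, so you may want to trim the result to the exact sample count.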

Here's another that computes the real FFT of an audio file as fast as possible: loadFFT.zip

But as with any control rate processing, if you try to do too much this way, Pd will fall behind in normal audio processing and stutter (e.g. listen to the output while running that last patch on a >1 minute file). So no free lunch, just a little subsidy.

s~/r~ throw~/catch~ latency and object creation order

I searched through many of the s~/r~ throw~/catch~ hops in my own code for mistakes based on what I've learned, and it looks like ~50% of all my non-local audio connections carry modulation signals that originate from control rate objects, so those aren't affected much. Only a few programs would have been broken had the non-local connections not matched, but because things were created in signal-flow order where it mattered, there was a consistent (and often unnecessary) 1 block delay everywhere. Many of those patches were later converted to use tabsend~/tabreceive~ under a small block size, and a few were converted to use delay lines. I was burned by the creation order side effect of delwrite~/delread~ once, but it wouldn't have happened had I not taken a lazy shortcut. Hilariously, I also found one test patch that I used to declare definitively, once and for all, that s~/r~ always introduces a 1 block delay if the sort order isn't controlled using the G05 technique. To paraphrase Agent K in the movie Men in Black, imagine what I'll "know" tomorrow!

So as a practical issue, I doubt coders are getting tripped up by this frequently. If you're like me you tend to code in signal flow order, and so you are mostly just introducing latency unnecessarily. RE advice, I agree with @Nicolas-Danet: use local audio connections wherever it matters and never mind the clutter. Even my worst spider web isn't so bad. Subpatches and abstractions can help hide the mess.

But when things are broken and showtime is looming, you'd be foolish not to use what you know, especially when you can always go back after curtain calls and adjust things to satisfy the style police. Here is a summary of the sort rules as best as I've been able to figure them out so far:

- A patch orders its constituent audio subpatches and abstractions, but has no influence over their internal ordering. Consequently, these rules are applied starting at the top level patch and recurse into each subpatch and abstraction.

- Audio chain tributaries and independent audio chains are executed in reverse order of their head's creation, except for those created by [clone], which are executed in the order of their creation (i.e. ascending clone index order).

- Audio branches that start from fanout connections are executed in reverse order of their start connection's creation.

- Whenever the rules conflict, the rule that places the tilde object the latest in line takes precedence.

These sort rules can affect your patch because unless s~ and throw~ buffer their data before their corresponding r~ and catch~ are executed, the latter will have to wait a block to access it. Delread~ will have a minimum 1 block delay.

Finally, I thought of this technique if you're absolutely certain you are going to get burned (or are just paranoid): wrap all s~, r~, throw~, catch~, delwrite~, delread~, and audio chain heads in their own subpatches. I noticed that if you modify the contents of a subpatch, it doesn't change its sort order in the containing patch, so you can add test code (e.g. [sig~ <uniqueNr>]->[tabsend~ <sharedArray>]) to subpatch pairs and check their execution order without changing it. If the order is wrong, then you cut and repaste the appropriate subpatches or audio connections according to the rules above until it isn't. That should take 15 mins, not days.

s~/r~ throw~/catch~ latency and object creation order

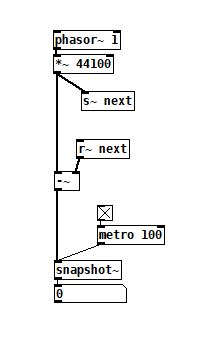

For a topic on matrix mixers by @lacuna I created a patch with audio paths that included a s~/r~ hop as well as a throw~/catch~ hop, fully expecting each hop to contribute a 1 block delay. To my surprise, there was no delay. It reminded me of another topic where @seb-harmonik.ar had investigated how object creation order affects the tilde object sort order, which in turn determines whether there is a 1 block delay or not. Object creation order even appears to affect the minimum delay you can get with a delay line. So I decided to do a deep dive into a small example to try to understand it.

Here's my test patch: s~r~NoLatency.pd

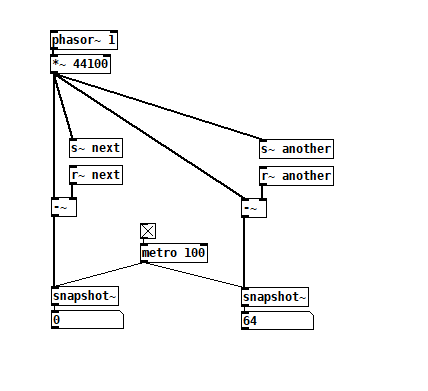

The s~/r~ hop produces either a 64 sample delay, or none at all, depending on the order that the objects are created. Here's an example that combines both: s~r~DifferingLatencies.pd


That's pretty kooky! On the one hand, it's probably good practice to avoid invisible properties like object creation order to get a particular delay, just as one should avoid using control connection creation order to get a particular execution order and use triggers instead. On the other hand, if you're not considering object creation order, you can't know what delay you will get without measuring it because there's no explicit sort order control. Well...technically there is one and it's described in G05.execution.order, but it defeats the purpose of having a non-local signal connection because it requires a local signal connection. Freeze dried water: just add water.


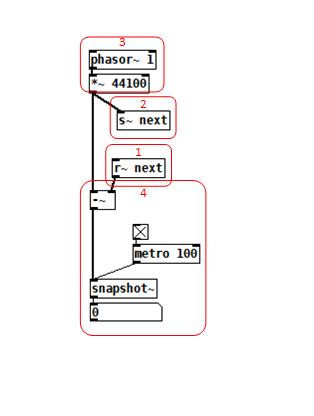

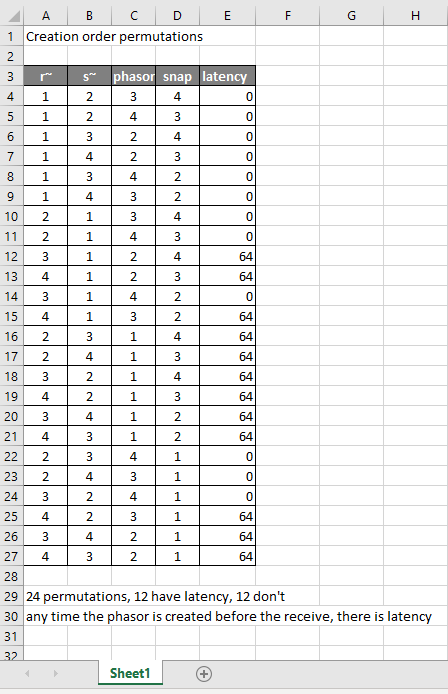

To reduce the number of cases I had to test, I grouped the objects into 4 subsets and permuted their creation orders:

The order labeled in the diagram has no latency and is one that I just stumbled on, but I wanted to know what part of it is significant, so I tested all 24 permutations. (Methodology note: you can see the object creation order if you open the .pd file in a text editor. The lines that begin with "#X obj" list the objects in the order they were created.)
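If you'd rather not read the file by eye, the methodology note can be turned into a small Python sketch. It relies only on the fact that "#X obj" records appear in creation order; the sample patch text below is made up for illustration, and the sketch ignores subpatch nesting, so it lists all objects in one flat sequence.

```python
import re


def creation_order(pd_text):
    """List objects from .pd file text in the order they were created."""
    # Records end with ';' (an escaped '\;' inside a record is not a terminator).
    records = re.split(r"(?<!\\);", pd_text)
    objects = []
    for record in records:
        tokens = record.split()
        # "#X obj <x> <y> <name> <args...>"
        if tokens[:2] == ["#X", "obj"]:
            objects.append(" ".join(tokens[4:]))
    return objects


# Hypothetical patch text, not one of the attached test patches.
sample = """#N canvas 0 50 450 300 12;
#X obj 150 50 r~ next;
#X obj 50 50 phasor~ 1;
#X obj 50 80 *~ 44100;
#X obj 50 110 s~ next;
#X connect 1 0 2 0;
#X connect 2 0 3 0;
"""

print(creation_order(sample))
# ['r~ next', 'phasor~ 1', '*~ 44100', 's~ next']
```

To use it on a real patch, read the file into a string first, e.g. `creation_order(open(path).read())`.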


It appears that any time the phasor group is created before the r~, there is latency. Nothing else matters. Why would that be? To test if it's the whole audio chain feeding s~ that has to be created before, or only certain objects in that group, I took the first permutation with latency and cut/pasted [phasor~ 1] and [*~ 44100] in turn to push the object's creation order to the end. Only pushing [phasor~ 1] creation to the end made the delay go away, so maybe it's because that object is the head of the audio chain?

I also tested a few of these permutations using throw~/catch~ and got the same results. And I looked to see if connection creation order mattered but I couldn't find one that did and gave up after a while because there were too many cases to test.

So here's what I think is going on. Both [r~ next] and [phasor~ 1] are the heads of their respective locally connected audio chains which join at [-~]. Pd has to decide which chain to process first, and I assume that it has to choose the [phasor~ 1] chain in order for the data buffered by [s~ next] to be immediately available to [r~ next]. But why it should choose the [phasor~ 1] chain to go first if its head is created later seems arbitrary to me. Can anyone confirm these observations and conjectures? Is this behavior documented anywhere? Can I count on this behavior moving forward? If so, what does good coding practice look like, when we want the delay and also when we don't?

Mono / stereo detection

aha, so they take messages when you name them?

[quote]What would not work is to patch a control and a signal in simultaneously: the signal will overwrite the control.[/quote]

haha, in max it is like that:

most externals take signals and messages at the signal inlets.

if you connect a signal and a number to math objects like +~, the number would multiply the signal value.

if you want to connect signals and messages to an abstraction, you have to use something like [route start stop int float] inside the abstraction - the signal will come out of the "does not match" output of [route].

to complete the magic, you can pack signals into named connections using [prepend stereoL] [prepend stereoR] [prepend modulation1] [prepend modulation2] and then you can send all 4 signals through one connection into the subpatch.

you can also mix different types of connections that way.

...

regarding the problem of the OP:

in max i made myself abstractions to enhance the function of inlets and outlets, where you can send a [metro 3000] into using s/r in order to find out which inlets are connected and which not.

in theory one could do that with audio, too, and add a pulsetrain of + 1000. to a stream, later decode the stuff by modulo 1000.