16 parameters for 1 voice, continued...

@H.H.-Alejandro I finally found the work I did months ago on the effects...... so here we go:

The messages I have coming from Iannix look like /curve "number" f f f "time" f f.

Which are Y and Z?..... Probably /curve "number" "Y" "Z" f "time" f f?

I can build exactly as you have drawn your curves, but what do "reverb" "chorus" etc. control?

Is it just the "amount" of the effect (volume) or for reverb the "decay time"?

But for the long term I need to build a patch that you can easily make as you want without my help...... or you will always be waiting!

So I think changes will have to be made for the effect curves.... one curve for each effect.

The curves have 3 "floats" unused? The first unused float (before "time") seems always to be zero. The last two floats seem to change sometimes. Can they, or will they be used?

Timbre is missing...... is that what you now call volume?

If it is then I will add a curve for Y=pan Z=master voice volume

One curve for each effect would allow something like this at least.....

Reverb Y=reverb time Z=volume(dry>wet)

Delay Y=delay time Z=volume(dry>wet)

WahWah Y=speed or Q Z=depth

(( I put them this way around to match the note curve..... Y=Pitch Z=volume))

If these parameters can be fixed for each effect type curve (the curve number can change though) then you would be able to change for a newer better effect when you find one (without my help).... and better control the effects.
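To make the one-curve-per-effect idea concrete, here is a minimal Python sketch of the routing logic (the real thing would be a Pd patch, so this is only a model). It assumes, without confirmation, that the first two floats of a /curve message are Y and Z, which is exactly the open question above. The curve numbers and the two lookup tables are hypothetical.

```python
# Hypothetical model of the proposed routing; not taken from the actual patch.
# ASSUMPTION: message layout is /curve <number> <Y> <Z> <f> <time> <f> <f>.

# Fixed meaning of Y and Z for each effect type (from the list above).
EFFECT_PARAMS = {
    "reverb": ("reverb_time", "dry_wet"),
    "delay": ("delay_time", "dry_wet"),
    "wahwah": ("speed_or_q", "depth"),
}

# Curve numbers are allowed to change; these values are made up.
CURVE_TO_EFFECT = {10: "reverb", 11: "delay", 12: "wahwah"}


def route_curve(message):
    """Turn one parsed /curve message into (effect, {parameter: value})."""
    address, number, y, z, _unused, time, *_rest = message
    if address != "/curve":
        raise ValueError("not a /curve message")
    effect = CURVE_TO_EFFECT.get(int(number))
    if effect is None:
        return None  # not an effect curve (for example a note curve)
    y_name, z_name = EFFECT_PARAMS[effect]
    return effect, {y_name: y, z_name: z, "time": time}
```

Swapping in a newer reverb would then only mean changing what listens to "reverb_time" and "dry_wet"; the curve layout stays the same.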

To add voices you will just have to add a [voice_gen] for each, with its unique number..... [voice_genX] and its argument for the "notes curve number". Then you can re-order any effect curves, swap effects in and out, change the timbre curves etc..... and save the [voice_gen].

David.

16 parameters for 1 voice, continued...

@whale-av Hello! I found a lot more reverbs! Supposedly these are better than freeverb!

I'm looking for something like this:

here are the compressed reverb files: Reverb pd.zip

Thank you so much!

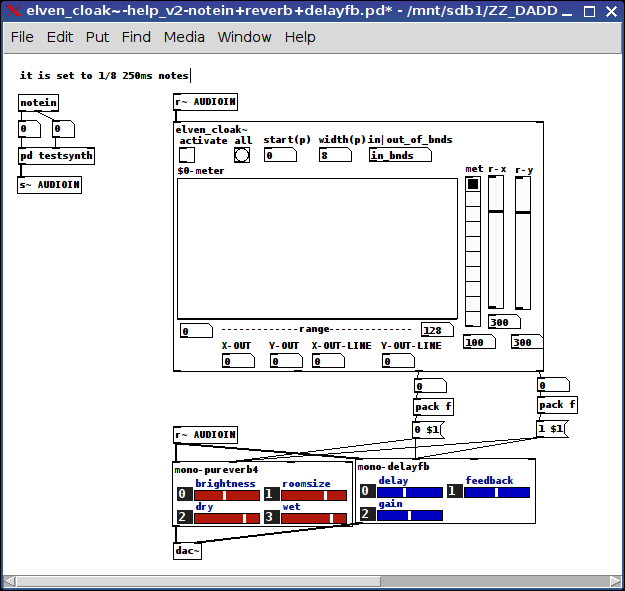

elven_cloak~: Controlling your (effect) parameters by how high (pitch) and loud (env~) you play

elven_cloak~-help_v2-notein+reverb+delayfb.zip

I added [line]'s to control the flow of numbers internally (on the two right outlets, the left two are still raw).

I figured as much; the patch is very well suited to MIDI, because MIDI sends much clearer and more distinctive (i.e., close to a pitch) signals than a mic and has a clearly delineated on/off.

So in this zip, "elven_cloak~-help_v2-notein+reverb+delayfb" includes a reverb on the left [dac~] channel and a delayfb on the right [dac~] channel, with both receiving the pitch (x) and env (y) values, mapped to brightness/roomsize and delay/feedback respectively.

It has a very simple synth as the backbone (as a subpatch) but very distinctly shows the variance in the sound quality as you move up and down the keyboard and play softer or harder.

For greater control, adjust the start(p) value to be a little below the minimum pitch you will play, then set the width (Width*16) to cover the range of pitches you will play, up to your maximum pitch value.

Enjoy!

Ciao for now.

S

Psychedelic Audioguide using MobMuPlat or PdParty

Hello,

I make psychedelic audio adventures, guiding multiple synchronized people with headphones into wonderland-ish stories. Sometimes I lead them to crazy places that I acoustically augment with soundtracks and binaural stereo recordings from the exact places where people walk by, to create pré- and déjà-vu-ish time flashes (e.g. you hear the door in front of you and the white, invisible rabbit tells you that you are too late, so you open the door and hear/feel the exact sound again... and again…). Using synchronized instructions I puppeteer multiple participants into interactions.

I used to manually sync multiple mp3 players but also worked with free GPS audioguide software. I also have a small, not yet perfect web-app flashmob generator to synchronize an unlimited number of players with individual audio streams.

One of my biggest inspirations was the RjDj app, which was released at the beginning of the iTimes but sadly taken from the App Store shortly after. Now, after all these years, I finally discovered the MobMuPlat and PdParty apps and the possibility not only to play the old RjDj scenes but also to modify them… so I decided to learn some PD! It is really not easy at the very beginning, but it literally opens worlds, and my brain is working hard to understand all these amazing scenes and different patches out there…

First, I really want to thank you from my heart for all this amazing and inspiring work, especially for sharing it! This makes me so very happy and I feel this is really the way it should be (well, a little easier maybe)!

Second, I want to apologize for my questions. I still feel so stupid PD-wise… but:

I am trying to learn how to port a PD-patch to MobMuPlat or PdParty.

Mainly I try to trigger recordings, playbacks and live-effects by a clock.

AlicePD.pd: https://dl.dropboxusercontent.com/u/2643812/AlicePD.pd

Today I managed to write a patch that records two samples (A and B) and plays them back later in a different order (BA).

I found a way to let the seconds counter trigger a bang to the recordings/playbacks, but I still don't know how I could add the minutes into that trigger (e.g. record at 3m14s for 10s)?
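One way to fold the minutes into the same trigger is to convert every cue to a total number of seconds and compare that against a single running seconds counter. The Python sketch below only illustrates the arithmetic; in Pd the equivalent would presumably be a [* 60] and a [+ ] feeding something like [sel 194]. The function names and the cue list are made up for illustration.

```python
def cue_seconds(minutes, seconds):
    """Convert a cue time such as 3m14s into total seconds."""
    return minutes * 60 + seconds


def actions_at(tick, cues):
    """Return the actions whose start or stop time equals this second."""
    fired = []
    for name, start, duration in cues:
        if tick == start:
            fired.append((name, "start"))
        if tick == start + duration:
            fired.append((name, "stop"))
    return fired


# Hypothetical cue list: record sample A at 3m14s for 10 seconds.
CUES = [("record_A", cue_seconds(3, 14), 10)]
```

Calling `actions_at` once per second with the running counter fires the start at second 194 and the stop at second 204.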

In one of the tutorials I heard that there are different ways to record sound, one to memory and one to disk, and that a disk recording might stop audio playback?

How could I time-trigger live effects - e.g. at 4m33s the reverb for the mic-in slowly gets bigger for 1min and then fades back to normal // or the same with a delay // or with a voice pitch rising or falling // or with the volume of the unfiltered live monitoring (adc~ -> dac~) or the volume of the audio file that plays back simultaneously ->
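A timed effect change like this can be thought of as a ramp that starts at a cue time, rises over a given duration, and then falls back. The Python sketch below models only the control value over time; in Pd a message such as "1 60000" sent to [line] at the cue time would be the usual way to produce the rise. The fall-back duration is not given above, so the 10 seconds used in the example are an assumption, as are all names.

```python
def ramp_value(t, start, rise, fall, low=0.0, high=1.0):
    """Control value at time t (seconds): rise from low to high, then fall back."""
    if t <= start:
        return low
    if t < start + rise:
        return low + (high - low) * (t - start) / rise
    if t < start + rise + fall:
        return high - (high - low) * (t - start - rise) / fall
    return low


# Reverb amount: starts growing at 4m33s, grows for 60 s,
# then fades back over an assumed 10 s.
REVERB_START = 4 * 60 + 33
```

The same function could drive delay amount, pitch shift or monitoring volume by choosing different `low` and `high` values.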

Is there any way to play back an mp3 or Ogg file with PdParty or MobMuPlat? I found some unofficial patches that seem to let PD stream Shoutcast or Icecast mp3 streams, and also something related to Ogg (unofficial), but I don't really understand if it is possible, and HOW? And on MobMuPlat/PdParty?? It's just that I can't put a 1 hr wav file onto people's iThings.

I found a LANdini tutorial patch and an Ableton-link-patch and now I am wondering if i could somehow synch multiple phones at the same time to play back different audio files at each device while applying 1. /2. and 3. …and if it is maybe even somehow possible to do that via web?!

I am a bit confused about using PD-extended or unofficial or 0.47xyz (vanilla?)… Which one is better to use for MobMuPlat/PdParty development? PdParty seems to name several objects differently, which doesn't allow me to run/test the scenes on my Mac - is there any editor or sth like->

I found an old app from the RjDj development, RJC-1000, but I can't make it work. Has anyone managed to use this app? It seems so much easier than the MobMuPlat editor (+PDwrapper) for connecting the patches with a user interface. Has anyone managed to create a working MobMuPlat interface? I couldn't find any tutorials on that. It would be so great to understand that part.

I also found several scenes and patches that make use of the GPS-data and I would love to learn more about that and understand those patches to use GPS as triggers for my Audiotours. Is there anyone who could help me or maybe even make a tutorial on that?

Last but not least: when switching between apps (macOS Sierra) with cmd+tab to PD (extended and 0.47, too) I have to do it twice every time… it drives me really mad. Does anyone have the same problem or any idea how to solve this?!

Is there anyone in Berlin who I could meet to learn sth?

Thank you all for your help and if you come to Berlin one day, please let me guide you to Wonderland!

BÄm

latest 2 (pd-influenced) guitar-improv pieces

for your consideration and hopefully pleasure and inspiration.

note: they are "audio-only" videos.

Peace and good will thru us all

-svanya

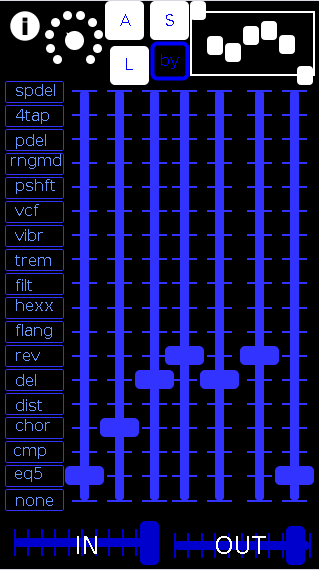

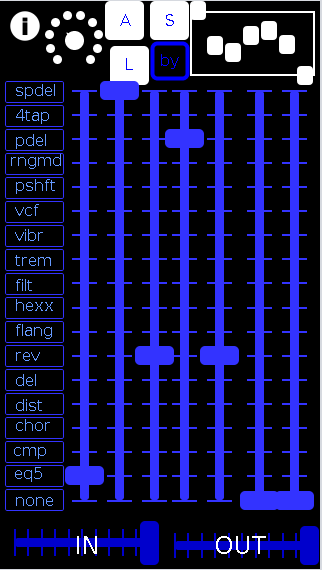

screenshots:

this is how I had the "TiGR" pedal rack (which I built) set up on these two songs

(note: the rack duplicates settings for duplicated pedals, but they do "stack" in their effects)

- eq5>chorus>delay>reverb>delay>reverb>eq5

- eq5>spectral delay (from DIY2)>reverb>pitch-delay>reverb

There were 3 others from this session, but they only used the eq5, my compressor, and recording patches.

So I am not including them here.

song 1

song 2

Swept sine deconvolution

Hello Katjav,

I have been chasing the swept sine technique with Farina's Aurora tools on the Cool Edit/Adobe Audition platform. I too found that octave extension of low frequency side only to use as fade in made possible much better result. Also small fade out at the end for non zero end state and all was greatly improved. But applied to getting IR of sound card loopback produces wrong answer. AC coupling of output and input stages produces min phase Butterworth type answer and swept sine with fades always contaminates with its sinc like IR. MLS gives correct answer, but of course brings its own baggage.

Your webpage: http://www.katjaas.nl/expochirp/expochirp.html shows IR result that I've seen on many of my screens while working on the fade issue. Very nicely done indeed.

Farina released plugins for Audacity for convolution and sweep generation. Audacity display doesn't provide zoom tools for display of fine detail. The plugins do provide ready reference.

The solution to the fade issue is to not use them in producing the sweep pair. The answer is in generating at least one of the sweeps in the frequency domain.

Here is link to a great paper covering some of this: "Transfer Function Measurement with Sweeps," SWEN MÜLLER and PAULO MASSARANI I found it here: http://www.melaudia.net/zdoc/comparisonMesure.PDF

Chapter 5 has got the goods.

Turns out that Kirkeby inverse is perfect tool for the job, and many more! Too bad it is trapped in Cool Edit/Audition domain. Perhaps Farina will liberate it soon. The perfect place to apply it is before all the trouble begins. An arbitrary broadband signal of 2^n length may be inverted with Kirkeby, and convolution of the pair returns extremely good result. Samples directly next to pulse of 1e-6 easy to obtain. Starting with white noise, results emulating MLS are possible. Starting with exponential sweep a sweep is returned by Kirkeby with circular convolution properties: To get right convolution result, two copies of forward sweep need to be used and cropped to central result. Alternately Kirkeby may be directed to return result 4x longer for direct convolution. I found that a 2x long Kirkeby result produced artifacts. One may also use 2 copies of a 1x Kirkeby to recover IR with single instance of the seed wave.

I communicated this with Farina, and he honored me by placing his confirmations to: http://pcfarina.eng.unipr.it/Public/Wolkoff/

There are six wave files: 2^20 sample length forward sweep and inverse sweep generated without fades; and 1x,2x,4x Kirkeby inverses of the forward sweep. Additionally a time shift Kirkeby is posted. This is a hack I proposed as a partial work around for straight convolution. It is simply cutting first half of 1x Kirkeby and pasting it back at the end. Fading the ends controls bandwidth, and provides kernel for auralization applications.
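For readers who have not met the Kirkeby inverse, the following Python sketch shows the idea on a tiny scale. It uses the regularized form conj(X) / (|X|^2 + eps) that is usually attributed to Kirkeby; this is an assumption about the general technique, not a description of how the Aurora plugin is implemented. The seed is 64 samples of white noise, the DFT is a naive one to keep the example dependency-free, and every name and constant is chosen for illustration only.

```python
import cmath
import random


def dft(x, inverse=False):
    """Naive O(N^2) DFT; good enough for a 64-sample demonstration."""
    n = len(x)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        acc = 0j
        for i, v in enumerate(x):
            acc += v * cmath.exp(sign * 2j * cmath.pi * k * i / n)
        out.append(acc / n if inverse else acc)
    return out


def regularized_inverse(x, eps=1e-9):
    """Inverse filter computed bin by bin as conj(X) / (|X|^2 + eps)."""
    spectrum = dft(x)
    inv_spectrum = [s.conjugate() / (abs(s) ** 2 + eps) for s in spectrum]
    return [v.real for v in dft(inv_spectrum, inverse=True)]


def circular_convolve(a, b):
    """Circular convolution of two equal-length sequences."""
    n = len(a)
    return [sum(a[j] * b[(i - j) % n] for j in range(n)) for i in range(n)]


def time_shift(x):
    """The 'time shift' hack: cut the first half and paste it at the end."""
    half = len(x) // 2
    return x[half:] + x[:half]


random.seed(0)
seed_wave = [random.uniform(-1.0, 1.0) for _ in range(64)]   # white-noise seed
inverse = regularized_inverse(seed_wave)
impulse = circular_convolve(seed_wave, inverse)              # close to a unit pulse at index 0
```

In this circular setting the pair convolves to a single pulse with very small neighbours, and convolving with `time_shift(inverse)` instead simply moves that pulse to the middle of the buffer.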

Cheers!

IPhone App for Pd

hi all,

here are some suggestions on using the rjdj stuff

first you have to do the scene in the rjdj way: a folder named xxxname.rj

this folder contains: the pd patch called _main.pd, the rj folder (that contains the rj externals library), the scene image (320x320 px, called image.jpg, the one you see on the idevice), the same image but 55x55 called thumb.jpg, the info.plist file and the license.txt file.

depending on your scene the main folder can contain other stuff like externals or images you want to appear/disappear when you touch the screen...
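before copying a scene to the device it can save some time to check that the folder has everything listed above. here is a small python sketch that does only that; the file names are the ones from this post (written exactly as above), the function name is made up, and it does not check image sizes or the contents of info.plist.

```python
from pathlib import Path

# Names taken from the description above.
REQUIRED_FILES = ["_main.pd", "image.jpg", "thumb.jpg", "info.plist", "license.txt"]
REQUIRED_DIRS = ["rj"]


def missing_scene_items(scene_dir):
    """Return a list of problems found in an rjdj scene folder (empty if none)."""
    scene = Path(scene_dir)
    problems = []
    if scene.suffix != ".rj":
        problems.append("folder name should end in .rj")
    for name in REQUIRED_FILES:
        if not (scene / name).is_file():
            problems.append(f"missing file: {name}")
    for name in REQUIRED_DIRS:
        if not (scene / name).is_dir():
            problems.append(f"missing folder: {name}")
    return problems
```

for example `missing_scene_items("freebeats.rj")` would return an empty list for a complete scene (the path here is only an example).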

to download a scene into the idevice you have to use the rjserver... (on the pc)

you start the rjserver and tell it where your rj scenes are on your computer, then you'll have a webpage listing all the rjscenes in that directory.

the page url will be something like http://192.168.1.106:8314

now you connect your ithing to your wifi (it must be the same one your pc is using), open safari and go to the same page 192.168.1....... you touch the right link and rjdj opens, downloads and installs the scene onto the device....

not so sure if i've been clear enough, hope it helps

ab

attached freebeats, a tested and working scene for rjdj -

sorry the zip file doesn't attach...maybe a chrome issue

Swept sine deconvolution

Hi Guys,

I was referred to this thread by Serafino Di Rosario, and I will test this PD patch for performing ESS measurements. Everything is very interesting for me, and it seems that Katja did a very good job!

Regarding the problems encountered, I give you these infos:

- Sine-phase-matched sweep. This method is very useful when performing distortion measurements, or computing multiple-order IRs to be used in a nonlinear convolution processor (for emulating the nonlinearities of a device). For the method to work, it is mandatory that the sine sweep is sine-phased not only at the beginning, but also at the end of each octave. This way, each harmonic-order IR will be phase-matched with the linear IR. The provided formulation solves this problem, and it is very good to see it explained here so simply.

The importance of using a phase-synced exponential sweep was first discovered by Antonin Novak, a Ph.D. student of the universities of Prague and Le Mans.

- The ripple at low frequencies can be controlled by proper fade-in. The choice of the "optimal" fade law is still a big subject under scientific discussion. Hann windowing is just a very initial, suboptimal approach. I plan to investigate further the choice of the optimal fade law, and publish something on this topic, soon.

- The concept of cutting away everything before the arrival of the direct sound is wrong, in my opinion. The "silence" before the arrival of the direct sound has a very important physical meaning, it is the "time-of-flight" of the sound, and provides an accurate measurement of the distance between the source and the receiver. Furthermore, it contains "background noise", which is a very important quantity to know, for example when deriving STI from the IR measurement.

So PLEASE, do not cut away this initial silence! If the IR has to be used as a filter for a convolution-based reverb plugin, the plugin must be intelligent enough to analyze the IR and give the user the possibility to keep this initial silence or cut it away. For example, IR-1 from Waves gives these possibilities. In any case, a measured IR of a room should always contain the time-of-flight... Publishing "pre-cut" IRs is wrong, and in the long run will cause a lot of trouble...

- The "fractional delay", for the same reasons, should NOT be corrected! If the time-of-flight is fractional, good, let's stay with this fact. As pointed out, improperly cutting (time-shifting) the measured IR can alter its spectrum. So, please, keep every measured IR as it comes out of the convolution with the inverse sweep... If the higher-order distortion products are not needed, it makes sense to keep only the linear part, but always starting from the true "zero time". Let's make an example: I generate a 20 s long sweep at 48 kHz, that is 960,000 samples.

The inverse sweep will also be 960,000 samples long.

I play the sweep, and record the room response for, say, 1,200,000 samples, for being sure of capturing the complete reverberant tail even at the higher frequencies.

Now I convolve the recorded signal (1,200,000 samples) with the inverse sweep (960,000 samples), and I get a convolved signal which is 2,159,999 samples long.

If I want to keep a 4 s long IR, containing only the linear response, I should throw away the first 959,999 samples, and keep the following 192,000 samples.

As this signal starts from the true "zero time", the main peak will not be at the very beginning, but delayed by an amount corresponding to the source-receiver distance. If it was 10 m, it will be 10/340 = 0.0294 seconds...

- To perform convolution of very long filters efficiently (in the example above, the inverse sweep was nearly 1 million points) it is advisable to employ a partitioned convolution scheme. That is, the filter is split into a number of blocks, so that instead of performing a single, very long FFT, a number of shorter FFTs is performed. On my web site you find a couple of papers explaining the partitioned convolution algorithm. This is the same algorithm employed in the well-known BruteFIR open-source program by Anders Torger.
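The sample counts in the 20 s sweep example above can be reproduced with a few lines of Python. This is only the arithmetic from the example, written out as a sketch; the function names are made up, and 340 m/s is the speed of sound used in the example.

```python
def linear_ir_slice(sweep_len, recorded_len, ir_seconds, sample_rate):
    """Return (total_len, start, stop) for trimming the deconvolved signal.

    start is the index of the true "zero time"; samples before it hold
    the higher-order distortion products.
    """
    total_len = recorded_len + sweep_len - 1      # length of the full linear convolution
    start = sweep_len - 1                         # number of samples to throw away
    stop = start + int(ir_seconds * sample_rate)  # end of the part that is kept
    return total_len, start, stop


def time_of_flight(distance_m, speed_of_sound=340.0):
    """Delay of the direct sound in seconds for a given source-receiver distance."""
    return distance_m / speed_of_sound


total_len, start, stop = linear_ir_slice(960_000, 1_200_000, 4, 48_000)
# total_len = 2,159,999; start = 959,999; stop - start = 192,000
```

With these indices the kept IR would be `convolved[start:stop]`, and a 10 m distance puts the main peak about 0.0294 s after its first sample.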

Bye!

Angelo Farina

Convolution

Convolution in time domain is multiplication in frequency domain, that is, complex multiplication of the real and imaginary parts in signal spectrum and filter spectrum. If the filter is not strictly zero-phase, the multiplication involves phase shifts, and the output signal will be longer than the input. To avoid fold-over of signal samples (circular convolution), techniques of zero-padding and overlap-save or overlap-scrap are normally implemented for fast convolution. It is even possible to cut the impulse response in parts if it is longer than a reasonable FFT size. In another thread, bassik has pointed to this article describing partitioned convolution:

http://www.acoustics.net/objects/pdf/article_farina04.pdf

It is a very interesting technique because, when the filter is causal, low latency processing can be done even with long FIR filters.

I am not sure if fast convolution can be implemented as a Pd patch, because of the required zero-padding. Partitioned convolution is even more complicated. Fortunately, Ben Saylor has written [partconv~], which is in Pd extended. This object can do it all.
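To illustrate why cutting the impulse response in parts works at all, here is a minimal Python sketch. It convolves the input with each partition separately and adds the results at the right offsets, which gives the same output as one long convolution. To keep it short, each partition uses plain time-domain convolution; practical implementations typically use an FFT per partition instead, and this sketch makes no claim about how [partconv~] works internally. All names are chosen for illustration.

```python
def convolve(x, h):
    """Direct linear convolution; output length is len(x) + len(h) - 1."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xv in enumerate(x):
        for j, hv in enumerate(h):
            y[i + j] += xv * hv
    return y


def partitioned_convolve(x, h, block):
    """Convolve x with h by splitting h into partitions of `block` samples."""
    y = [0.0] * (len(x) + len(h) - 1)
    for offset in range(0, len(h), block):
        part = h[offset:offset + block]
        partial = convolve(x, part)
        # Each partition's contribution is delayed by its position in h.
        for i, v in enumerate(partial):
            y[offset + i] += v
    return y


signal = [1, 2, 3, 4, 5]
impulse_response = [1, -1, 2, 0, 3, 1, -2]
direct = convolve(signal, impulse_response)
split = partitioned_convolve(signal, impulse_response, block=3)
```

Both results are identical and, as described above, longer than the input signal: 5 + 7 - 1 = 11 samples.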

Katja

Swept sine deconvolution

Hello Katjav,

I am finally back even if still with some problems as I don't have internet at home and work is manic....

Anyway let's put everything in the right order:

- [chirp~]: I am not on a Mac but I can test it on a Mac at home. It would be good to compile it for all platforms... do you have any reference where I can learn how to do the compiling?

Also, when you consider a linear sweep, are you considering a different inverse filter, one which is only the time reversal of the test signal?

- Attached is an extract of the CATT acoustics manual on calibration of IR and convolution. It is related to audio convolution for auralisation simulations, but the same principle can be applied for our purposes to calibrate IRs and convolved responses.

- Katjav wrote: Oh yeah, there is something else: for proper analysis, the impulse response of the test set (speaker&mic) should be factored out. Taking close-mic sweep response, invert magnitude spectrum, compensate, etc. Is this regularly done in IR measurement practice? If so, how?

Normally, in IR measurement practice with swept sines, the convolved result appears in the software with all the non-linearities on its left-hand side. In all common software (as far as I know) the non-linearities are removed by hand, just by changing the start point of the resulting IR and windowing its end part... after this you start analyzing all the parameters you want to derive from the IR.

I hope I answered your question.

- Calibration of IR and resulting convolved IR

As far as I know there isn't anything in the literature that explains the exact routine for calibration, but the process discussed in the previous posts will do the job, with the important task of storing the gain information in order to be able to calibrate the convolved anechoic file later.

Also, please have a look at this paper: http://pcfarina.eng.unipr.it/Public/Papers/238-NordicSound2007.pdf

Pages 13 to 24 explain all the issues that can occur with the swept-sine method.

I will try to start the tests soon and to try to find more info in literature.

Hope this helps

Thank you

Bassik

http://www.pdpatchrepo.info/hurleur/CATT_acoustics_IR_calibration.pdf