Makefile.gnu Linux Compiling Question

Also, the INSTALL.txt file says:

Requirements

Core build requirements:

- Unix command shell: bash, dash, etc

- C compiler chain: gcc/clang & make

Core runtime requirements:

- Tcl/Tk: Tk wish shell

Optional features:

- autotools: autoconf, automake, & libtool (if building with the autotools)

- gettext: build localizations in the po directory

- JACK: audio server support

- FFTW: use the optimized FFTW Fast Fourier Transform library

I have the core build requirements installed: a Unix command shell (bash, dash, etc.) and a C compiler chain (gcc/clang & make). It seems a dependency is missing from the package I downloaded, and that shows up when I try the Makefile build approach rather than the autotools approach.
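One way to narrow down which dependency is missing before retrying the Makefile build is to check which of the listed tools are actually on the PATH. The sketch below does that in Python. The executable names (msgfmt for gettext, libtoolize for libtool, wish for the Tk wish shell) are assumptions about a typical Linux install, and JACK and FFTW are not checked because they are libraries rather than executables.

```python
import shutil

# Build/runtime requirements from the list above, each mapped to candidate
# executable names. The names are assumptions about a typical Linux install.
REQUIRED = {
    "unix shell": ["bash", "dash", "sh"],
    "c compiler": ["gcc", "clang", "cc"],
    "make": ["make"],
    "tk wish shell": ["wish", "wish8.6"],
}

# Optional features that ship command-line tools (JACK and FFTW are
# libraries, so they are not checked here).
OPTIONAL = {
    "autoconf": ["autoconf"],
    "automake": ["automake"],
    "libtool": ["libtoolize", "glibtoolize"],
    "gettext": ["msgfmt"],
}


def find_tools(groups):
    """Map each requirement to the first candidate found on PATH, or None."""
    found = {}
    for name, candidates in groups.items():
        paths = (shutil.which(candidate) for candidate in candidates)
        found[name] = next((path for path in paths if path), None)
    return found


def missing(groups):
    """Return the sorted names of requirements with no executable on PATH."""
    return sorted(name for name, path in find_tools(groups).items() if path is None)


if __name__ == "__main__":
    print("missing required:", ", ".join(missing(REQUIRED)) or "none")
    print("missing optional:", ", ".join(missing(OPTIONAL)) or "none")
```

If everything here is found and the Makefile build still fails, the missing piece is more likely a library or header, which the compiler error message should name.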

Do different instances of Pure Data run in different threads?

Press Ctrl+Shift+Esc to open the Task Manager. On the Details tab you can right-click wish86.exe and/or pd.exe, choose "Set affinity", and define which cores the application is allowed to use.

I have never tried this; I just noticed it there.

As @whale-av said, you may have to copy the Pd folder and rename the second application.

I guess there are other ways to start and run applications on a specific core in Windows, but you can search for those yourself, as this is not Pd-specific.

And as @whale-av said, these instances are completely independent and won't run in sync; they run asynchronously. That is useful for special tasks, for example doing something with the fast-forward message in one patch while the other patch stays in real time.

If you want to spread patches across different CPU cores but still keep them in sync, use the [pd~] object.

Either way, the shmem library is very useful for sharing memory and arrays between Pd instances.

(Looking at the Task Manager when using [pd~], I see the cores constantly changing; it looks like Windows is dynamically reassigning the cores. It might be good enough, or even better, to let Windows manage this instead of assigning fixed cores. The same might be true when running asynchronous instances.)

COMPUTATIONAL INTENSITY OF PD

@4poksy A standard sequencer is probably a few dozen CPU instructions, and even the early RPi can do ~2000 MFLOPS, so pd can get a great deal done between audio blocks despite its single-threaded nature. Has your experience demonstrated that you even need to worry about this? Even my 230k patch, which is an entire programming language, can accomplish a great deal between audio blocks, and it can control all of the parameters for a good number of analog-style subtractive voices in real time without issue. Sure, my computer is considerably more performant than a Pi, but still, it is getting a hell of a lot done between audio blocks.

An Arduino will probably not help unless you have a good amount of interface stuff and/or are using one of the old single-core Pis. With a multicore Pi you can just do the UI stuff outside of pd and let the kernel deal with scheduling everything; it will almost certainly do a better job than a mortal unless your programming skills are up around kernel-dev level. Even on a single-core Pi it may not be an issue. If it is a multicore Pi, then one thing you can do is isolate a CPU core with the isolcpus kernel parameter, play with IRQ affinity, and start pd on that isolated core with taskset or cpuset. This makes sure the kernel does not put some other process on pd's core during a stretch when pd has an easy load, which can cause dropouts when that load suddenly jumps. You can also structure your patches so that all UI is separate from DSP, which lets you isolate a second core, run UI on one and DSP on the other, and communicate between them with [netsend]/[netreceive].
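For the UI/DSP split, the two sides only need to agree on a message format. [netsend]/[netreceive] exchange FUDI messages: atoms separated by spaces and terminated by a semicolon. As a minimal sketch, assuming the DSP patch listens with [netreceive -u 3000] (UDP, as in recent Pd vanilla; the port number and the `freq`/`cutoff` selectors are hypothetical), an external UI process written in Python could send control messages like this:

```python
import socket


def fudi(*atoms):
    """Encode atoms as one FUDI message: space-separated, ending with ';'.

    Simplification: atoms containing spaces, ';', ',' or '$' would need
    backslash escaping, which this sketch does not do.
    """
    return (" ".join(str(atom) for atom in atoms) + ";\n").encode("ascii")


def send_udp(message, host="127.0.0.1", port=3000):
    """Send one encoded message to a UDP listener such as [netreceive -u 3000]."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (host, port))


if __name__ == "__main__":
    # Hypothetical control messages for the DSP patch.
    send_udp(fudi("freq", 440))
    send_udp(fudi("cutoff", 0.5))
```

On the Pd side, [netreceive -u 3000] followed by [route freq cutoff] would split these out. UDP keeps the UI from ever blocking the DSP process, at the cost of no delivery guarantee.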

But none of that may even be a problem. Are you having issues with dropouts, or are you attempting to preempt such issues? For the former, knowing your exact issues would help a great deal in assisting you; for the latter, learning more about how pd works and about the system it will run on will guide you toward the preemptive measures you actually need to take.

The stuff above regarding Arduino and Pi should be taken with a grain of salt: I have no direct experience with either and am basing it on my electronics/pd/Linux knowledge, but someone will (hopefully) come along to correct me in short order if my assumptions are faulty.

Performance of [text] objects

@zigmhount I think in that setup I would not worry much about pd; its load will be tiny compared to a DAW (or most OSes), and that is where I would look for ways to knock CPU use down. Ecasound is a command-line DAW that can be completely controlled from pd in various ways (going from memory: [command]/[ggee/shell], OSC, UDP). In my experience it uses much less CPU than the alternatives, and combined with a minimal Slackware system it kept me happily running on an old 4-core 1.8 GHz machine until about 6 months ago, when I decided to get a new computer on a whim.

One thing that can help (depending on your OS?) is to isolate a core and run the DAW on it. This can keep random dropouts from happening, but it means everything you do in the DAW has to be doable on a single core. It works better when you have 4 or more cores, since you can isolate a core (or two or more) for the realtime stuff and let the system manage the other cores for the non-realtime stuff.

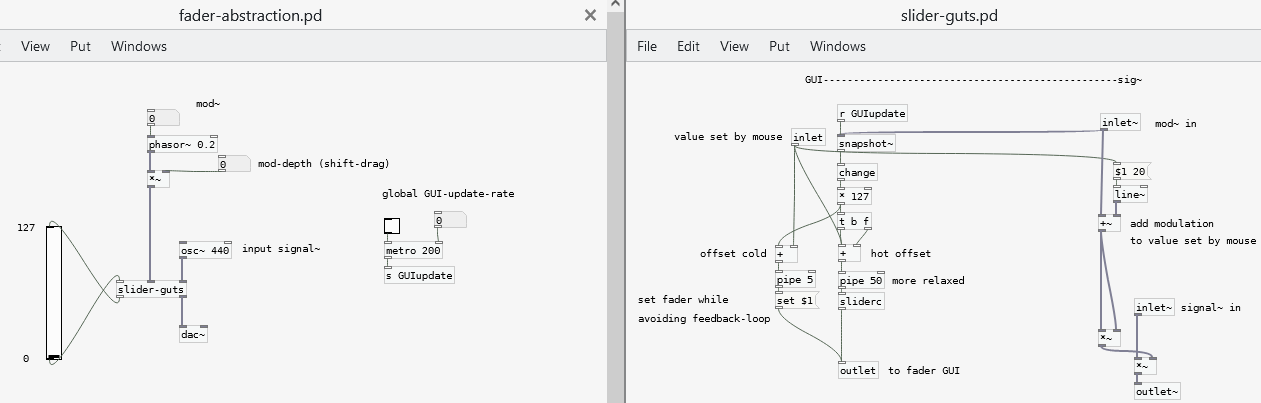

How would you recreate this mixer channel strip abstraction?

@esaruoho wrote:

16 "LFOs" to "breathe" up and down the volume slider

What does your modulation LFO look like?

Is the modulator a signal or a control object?

Does it offset or master the fader?

Did you try what I mentioned in your other thread?

https://forum.pdpatchrepo.info/topic/14291/need-a-simpler-way-to-change-slider-background-color-when-on-and-off/6

If you update the GUI on every block, that is SR/64 = 44100/64, or about 689 times per second. This is overkill and will drag down performance, even if the two parts run asynchronously.

Fewer than 25 frames per second should be enough for the GUI.
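The numbers above, worked through in Python (assuming the default 64-sample block and the 25 fps target from the text):

```python
SAMPLE_RATE = 44100  # Hz, as in the example above
BLOCK_SIZE = 64      # samples per DSP block (Pd's default)
TARGET_FPS = 25      # GUI refresh rate suggested above

blocks_per_second = SAMPLE_RATE / BLOCK_SIZE
metro_interval_ms = 1000 / TARGET_FPS
blocks_per_gui_update = blocks_per_second / TARGET_FPS

print(f"DSP blocks per second:      {blocks_per_second:.2f}")
print(f"[metro] interval for {TARGET_FPS} fps: {metro_interval_ms:.0f} ms")
print(f"blocks between GUI updates: {blocks_per_gui_update:.1f}")
```

So a [metro 40] driving the GUI update cuts GUI traffic by a factor of about 27.6 compared with updating on every block.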

Setting many faders and their colours once in a while should not cause any trouble. Limiting the update rate might be enough to run stably (see the patch below).

The benefit of separating the GUI and audio parts across two Pd instances would be that if the GUI lags, audio still runs without dropouts, because Pd instances run asynchronously.

Ideally, run the Pd instances on different CPU cores (with audio at a higher priority) by setting this in the operating system.

(By the way, off-topic, and to complicate things even further, in case you are not aware of it: there is also the [pd~] object, which does simple multithreading by splitting patches across CPU cores, but the parts run synchronously.

If you run only one instance of Pure Data, without [pd~], everything runs on one core.)

For real-time audio, a synchronous chain between input and output is important.

So no, unless you know what you are doing, don't split audio across several Pd instances.

(But yes, split it across different cores with [pd~] if the audio part runs out of CPU time and you don't want to increase latency.)

Something like this is what I had in mind in the other thread: slowing down the GUI update rate with [metro] and [snapshot~] and detaching it from the deterministic chain with [pipe], while maintaining signal-rate amplitude modulation:

fader-abstraction.pd

slider-guts.pd

(I don't have Vanilla here right now, and it doesn't work in the web version of Purr Data, but it should work in Vanilla, unless there is a bug.)

EDIT: changed [pipe 5], added [change], and set the fader properties to "jump on click".
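For anyone who can't open the attached patches, here is a rough Python model of what the [metro], [snapshot~] and [change] chain does to the stream of GUI updates. It is a simplification: the real [snapshot~] reports a value from the most recently computed DSP block rather than an exact sample index, and the rounding step is an added assumption so that tiny float differences don't defeat [change].

```python
import math


def throttle(samples, sample_rate, interval_ms, digits=3):
    """Rough model of [metro] -> [snapshot~] -> [change] feeding a GUI.

    Every interval_ms the current signal value is read (like [snapshot~]
    banged by [metro]), rounded to `digits` places (an assumption, so that
    tiny float jitter does not defeat [change]), and passed on only if it
    differs from the last value sent (like [change]).
    Returns (sample_index, value) pairs that would reach the GUI.
    """
    step = max(1, round(sample_rate * interval_ms / 1000))
    updates = []
    last = None
    for index in range(0, len(samples), step):
        value = round(samples[index], digits)
        if value != last:
            updates.append((index, value))
            last = value
    return updates


if __name__ == "__main__":
    rate = 44100
    # One second of a slow 0.5 Hz LFO used as amplitude modulation.
    lfo = [0.5 + 0.5 * math.sin(2 * math.pi * 0.5 * n / rate) for n in range(rate)]
    updates = throttle(lfo, rate, interval_ms=40)
    print(f"{len(updates)} GUI updates instead of {rate // 64} per-block updates")
```

The audio path itself is untouched: only the copy of the value sent to the GUI is decimated, which is why the amplitude modulation can stay at signal rate.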

Why does Pd look so much worse on linux/windows than in macOS?

Howdy all,

I just found this and want to respond from my perspective as someone who has spent by now a good amount of time (paid & unpaid) working on the Pure Data source code itself.

I'm just writing for myself and don't speak for Miller or anyone else.

Mac looks good

The antialiasing on macOS is provided by the system and utilized by Tk. It's essentially "free", and you can enable or disable it on the canvas. This is by design, as I believe Apple pushed antialiasing at the system level starting with the first release of Mac OS X.

There are even some platform-specific settings to control the underlying CoreGraphics settings which I think Hans tried but had issues with: https://github.com/pure-data/pure-data/blob/master/tcl/apple_events.tcl#L16. As I recall, I actually disabled the font antialiasing as people complained that the canvas fonts on mac were "too fuzzy" while Linux was "nice and crisp."

In addition, the last few versions of Pd have had support for "Retina" high resolution displays enabled and the macOS compositor does a nice job of handling the point to pixel scaling for you, for free, in the background. Again, Tk simply uses the system for this and you can enable/disable via various app bundle plist settings and/or app defaults keys.

This is why the macOS screenshots look so good: antialiasing is on and it's likely the rendering is at double the resolution of the Linux screenshot.

IMO a fair comparison is: normal screen size in Linux vs normal screen size in Mac.

Nope. See above.

It could also just be Apple holding back a bit of the driver code from the open source community to make certain Linux/BSD never gets quite as nice as OS X on their hardware. They seem to like to play such games: that one key bit of code that is not free, that you must license from them if you want it, and that they only license out in high volume and at high cost.

Nah. Apple simply invested in antialiasing via its accelerated compositor when OS X was released. I doubt there are patents or licensing on common antialiasing algorithms which go back to the 60s or even earlier.

tkpath exists, why not use it?

Last I checked, tkpath is long dead. Sure, it has a website and screenshots (uhh Mac OS X 10.2 anyone?) but the latest (and only?) Sourceforge download is dated 2005. I do see a mirror repo on Github but it is archived and the last commit was 5 years ago.

And I did check on this; in fact, I spent about a day (unpaid) seeing if I could update the tkpath Mac implementation to move away from the ATSU (Apple Type Services for Unicode Imaging) APIs, which were not available in 64-bit. In the end, I ran out of energy and stopped, as it would have been too much work with too many details, and it was unlikely to be reliably maintained by anyone.

It makes sense to help out a thriving project but much harder to justify propping something up that is barely active beyond "it still works" on a couple of platforms.

Why aren't the fonts all the same yet?!

I also despise how linux/windows has 'bold' for default

I honestly don't really care about this... but I resisted because I know so many people do and are used to it already. We could clearly and easily make the change but then we have to deal with all the pushback. If you went to the Pd list and got an overwhelming consensus and Miller was fine with it, then ok, that would make sense. As it was, "I think it should be this way because it doesn't make sense to me" was not enough of a carrot for me to personally make and support the change.

Maybe my problem is that I feel a responsibility for making what seems like a quick and easy change to others?

And this view comes after having put an inordinate amount of time into just getting (almost) the same font on all platforms, including writing and debugging a custom C Tcl extension just to load arbitrary TTF files on Windows.

Why don't we add abz, 123 to Pd? xyzzy already has it?!

What I've learned is that it's much easier to write new code than it is to maintain it. This is especially true for cross-platform projects, where you have to figure out platform intricacies and edge cases even when mediated by a common interface like Tk. It's true for any non-native wrapper like Qt, wxWidgets, web browsers, etc.

Actually, I am pretty happy that Pd's only core dependencies are Tcl/Tk, PortAudio, and PortMidi, as it greatly lowers the number of vectors for bitrot. That being said, I just spent about 2 hours fixing the help browser for Mac after trying Miller's latest 0.52-0test2 build. The end result is 4 lines of code.

For a software community to thrive over the long haul, it needs to attract new users. If new users get turned off by an outdated surface presentation, then it's harder to retain new users.

Yes, this is correct, but first we have to keep the damn thing working at all.  I think most people agree with you, including me when I was teaching with Pd.


I've observed, at times, when someone points out a deficiency in Pd, the Pd community's response often downplays, or denies, or gets defensive about the deficiency. (Not always, but often enough for me to mention it.) I'm seeing that trend again here. Pd is all about lines, and the lines don't look good -- and some of the responses are "this is not important" or (oid) "I like the fact that it never changed." That's... thoroughly baffling to me.

I read this as "community" = "active developers." It's true, some people tend to pooh-pooh the same recurring ideas, but this largely comes from years of hearing discussions and decisions and treatises on the list or the forum or Facebook or wherever, and nothing more. In the end, code talks; even better is a working technical implementation honed with input from the people who will most likely end up maintaining it, probably without understanding it completely at first.

This was very hard back on Sourceforge as people had to submit patches(!) to the bug tracker. Thanks to moving development to Github and the improvement of tools and community, I'm happy to see the new engagement over the last 5-10 years. This was one of the pushes for me to help overhaul the build system to make it possible and easy for people to build Pd itself, then they are much more likely to help contribute as opposed to waiting for binary builds and unleashing an unmanageable flood of bug reports and feature requests on the mailing list.

I know it's not going to change anytime soon, because the current options are a/ wait for Tcl/Tk to catch up with modern rendering or b/ burn Pd developer cycles implementing something that Tcl/Tk will(?) eventually implement or c/ rip the guts out of the GUI and rewrite the whole thing using a modern graphics framework like Qt. None of those is good (well, c might be a viable investment in the future -- SuperCollider, around 2010-2011, ripped out the Cocoa GUIs and went to Qt, and the benefits have been massive -- but I know the developer resources aren't there for Pd to dump Tcl/Tk).

A couple of points:

- Your point (c) already happened... you can use Purr Data (or the new Pd-L2ork etc.). The GUI is implemented in Node/Electron/JS (I'm not sure of the details). Is it tracking Pd vanilla releases?... well, that's a different issue.

- As for updating Tk, it's probably not likely to happen, as advanced graphics are not their focus. I could be wrong about this.

I agree that updating the GUI itself is the better solution for the long run. I also agree that it's a big undertaking when the current implementation is essentially still working fine after over 20 years, especially since Miller's stated goal was 50-year project support, i.e., pieces composed in the late 90s should work in 2040. This is one reason why we don't just "switch over to Qt or JUCE so the lines can look like Max." At this point, Pd is aesthetically more Max than Max, at least judging by the original Ircam Max documentation in an archive closet at work.

A way forward: libpd?

In my view, the best way forward is to build upon Jonathan Wilkes's work in Purr Data on abstracting the GUI communication. He essentially replaced the raw Tcl commands with abstracted drawing commands such as "draw a rectangle here with this color and thickness" or "open this window and put it here."

For those that don't know, "Pd" is actually two processes, similar to SuperCollider, where the "core" manages the audio, the patch DSP/message graph, and most of the canvas interaction event handling (mouse, key). The GUI is a separate process which communicates with the core over a localhost loopback network connection. The GUI basically just opens windows, shows settings, and forwards interaction events to the core. When you open the audio preferences dialog, the core sends the current settings to the GUI, and the GUI then sends everything back to the core after you make your changes and close the dialog. The same goes for working on a patch canvas: your mouse and key events are forwarded to the core, then drawing commands are sent back, like "draw object outline here, draw osc~ text inside it, etc."
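The GUI-to-core direction of that connection carries semicolon-terminated messages (for example `pd dsp 1;` to switch DSP on). Because it is a stream connection, one read can contain half a message or several messages at once, so the receiving side has to buffer and split. A minimal Python sketch of that framing, assuming plain semicolon-terminated messages and ignoring FUDI's backslash escapes:

```python
import codecs


class MessageFramer:
    """Split a byte stream into semicolon-terminated messages.

    Simplified FUDI-style framing: a read may hold half a message or
    several messages, so incomplete text is buffered until its ';' arrives.
    Backslash-escaped semicolons are not handled.
    """

    def __init__(self):
        # Incremental decoder so a UTF-8 character split across reads is safe.
        self._decoder = codecs.getincrementaldecoder("utf-8")()
        self._pending = ""

    def feed(self, chunk):
        """Add bytes from the socket; return complete messages as atom lists."""
        self._pending += self._decoder.decode(chunk)
        *complete, self._pending = self._pending.split(";")
        return [message.split() for message in complete if message.strip()]


if __name__ == "__main__":
    framer = MessageFramer()
    print(framer.feed(b"pd ds"))               # nothing complete yet
    print(framer.feed(b"p 1;\npd dsp 0;\n"))   # two messages at once
```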

So basically, the core has almost all of the GUI's logic while the GUI just does the chrome like scroll bars and windows. This means it could be trivial to port the GUI to other toolkits or frameworks as compared to rewriting an overly interconnected monolithic application (trust me, I know...).

Basically, if we take Jonathan's approach, I feel that adding a GUI communication abstraction layer to libpd would make custom GUIs much easier to build. You basically just have to respond to the drawing and windowing commands and forward the input events.
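To make the idea concrete, here is a hypothetical Python sketch of such a layer: the core emits toolkit-independent commands, and each GUI only implements a small backend interface. The command and method names are invented for illustration; they are not libpd's or Purr Data's actual API.

```python
from abc import ABC, abstractmethod


class GuiBackend(ABC):
    """Hypothetical toolkit-neutral interface a GUI would implement.

    The core sends abstract commands; a Tk, Qt, Cocoa or web front end
    only has to implement these methods. Names are illustrative only.
    """

    @abstractmethod
    def open_window(self, window_id, x, y, width, height):
        ...

    @abstractmethod
    def draw_rectangle(self, window_id, x1, y1, x2, y2, color, thickness):
        ...

    @abstractmethod
    def draw_text(self, window_id, x, y, text):
        ...


def dispatch(backend, command):
    """Route one (name, *args) command from the core to the backend."""
    name, *args = command
    handlers = {
        "open_window": backend.open_window,
        "draw_rectangle": backend.draw_rectangle,
        "draw_text": backend.draw_text,
    }
    if name not in handlers:
        raise ValueError(f"unknown GUI command: {name}")
    handlers[name](*args)


class RecordingBackend(GuiBackend):
    """Stand-in backend that just records calls instead of drawing."""

    def __init__(self):
        self.calls = []

    def open_window(self, window_id, x, y, width, height):
        self.calls.append(("open_window", window_id, x, y, width, height))

    def draw_rectangle(self, window_id, x1, y1, x2, y2, color, thickness):
        self.calls.append(("draw_rectangle", window_id, x1, y1, x2, y2, color, thickness))

    def draw_text(self, window_id, x, y, text):
        self.calls.append(("draw_text", window_id, x, y, text))


if __name__ == "__main__":
    gui = RecordingBackend()
    # Roughly "open this window", "draw object outline here", "draw osc~ text inside".
    for command in [
        ("open_window", "patch1", 0, 0, 400, 300),
        ("draw_rectangle", "patch1", 20, 20, 70, 40, "black", 1),
        ("draw_text", "patch1", 24, 24, "osc~ 440"),
    ]:
        dispatch(gui, command)
    print(gui.calls)
```

The other direction (mouse and key events forwarded back to the core) would be a similarly small set of calls, which is what keeps porting to a new toolkit manageable.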

Ideally, then each fork could use the same Pd core internally and implement their own GUIs or platform specific versions such as a pure Cocoa macOS Pd. There is some other re-organization that would be needed in the C core, but we've already ported a number of improvements from extended and Pd-L2ork, so it is indeed possible.

Also note: the libpd C sources are now part of the pure-data repo as of a couple months ago...

Discouraging Initiative?!

But there's a big difference between "we know it's a problem but can't do much about it" vs "it's not a serious problem." The former may invite new developers to take some initiative. The latter discourages initiative. A healthy open source software community should really be careful about the latter.

IMO Pd is healthier now than it has been for as long as I've known it (since 2006). We have so many updates and improvements in every release over the last few years, with many contributions by people in this thread. Thank you! THAT is how we make the project sustainable and work toward finding solutions for deep issues and aesthetic issues and usage issues and all of that.

We've managed to integrate a great many changes from Pd-Extended into vanilla and to open up and decentralize the externals in a collaborative manner. I am grateful for this too whenever I install an external for a project.

At this point, I encourage more people to pitch in. If you work at a university or institution, consider sponsoring some student work on specific issues which volunteering developers could help supervise, organize a Pd conference or developer meetup (these are super useful!), or consider some sort of paid residency or focused project for artists using Pd. A good amount of my own work on Pd and libpd has been sponsored in many of these ways, and that has encouraged me to continue.

This is likely to be more positive toward the community as a whole than banging back and forth on the list or the forum. Besides, I'd rather see cool projects made with Pd than keep talking about working on Pd.

That being said, I know everyone here wants to see the project continue and improve and it will. We are still largely opening up the development and figuring how to support/maintain it. As with any such project, this is an ongoing process.

Out

Ok, that was long and rambly and it's way past my bed time.

Good night all.

Contribute to better Pd Documentation

@Nicolas-Danet said:

Put all the help + tutorial files in a new repository on GitHub.

Allow much more people to have admin rights.

Let the community handle that in a centralized place without interacting with the core team.

Make it a safe and friendly area (for newcomers).

Note that the more documentation material you have, the more energy is required to maintain/translate it.

My 2 cents.

I don't know if I really get what this proposal is. Are you saying we should have yet another parallel Pd vanilla documentation on github for help files and everything? The end goal would be to actually merge this into the Pd documentation?

Sorry if I'm wrong, but it sounds like you're proposing yet another independent joint venture, and that would generate more noise, I believe. I say that especially because you say this should be done "without interacting with the core team", so it really sounds like you want something independent, and I wonder why.

I'm also just not sure what 'core team' would even mean. Who is the 'core team'? There's no actual 'team'. Pd Vanilla is developed and maintained by one person: Miller Puckette. OK, he is not the only one who's done stuff for Pd; others help and contribute to the project, and he picks/accepts stuff. So it is Miller's project with the collaboration of others as a community. No one else has a 'pd core team' work tag, and ANYONE can just send a Pull Request.

For instance, I've been contributing to Pd Vanilla with suggestions, bug reports and some PRs. Am I part of the 'core team' (and thus maybe I shouldn't interact)? Well, I would never say I'm part of the core team... I'm just a random person contributing to an open source project called Pure Data by Miller Puckette, as a member of the Pure Data community.

Anyway, this thread links to a Pure Data GitHub issue. That's on Pd Vanilla's repository. The idea is clearly to work on and propose changes to Pd's help files and the manual, and to propose new stuff for the software. You seem to suggest that nothing like that happens and that we start something new and independent from scratch as a 'community effort', putting the 'community' in opposition to the 'core team'. If my assumption is right, this sounds really bad to me, as it reflects a divisive mentality, which I think is responsible for all the noise we have when it comes to Pd's documentation.

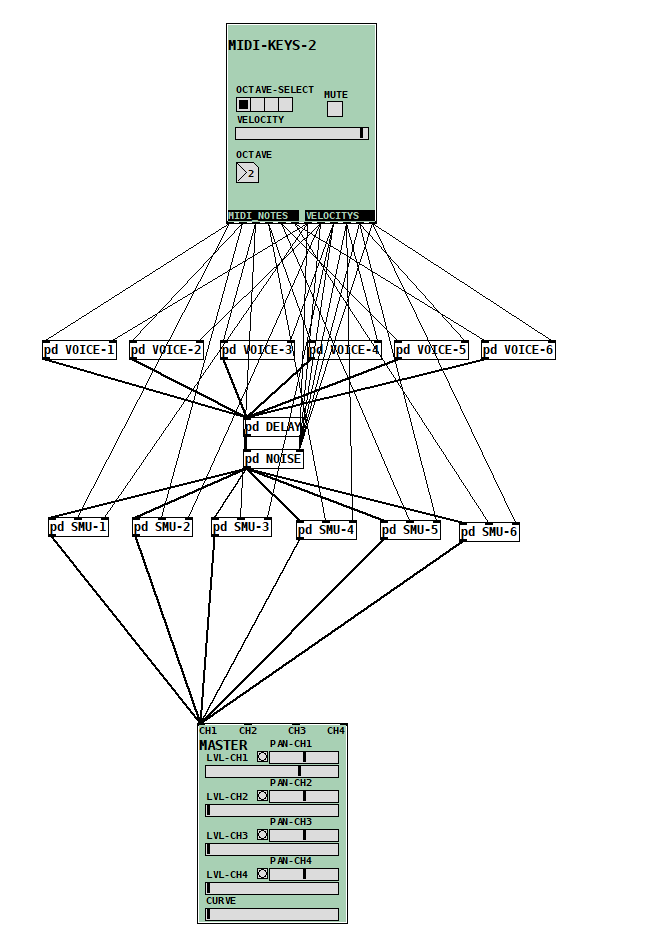

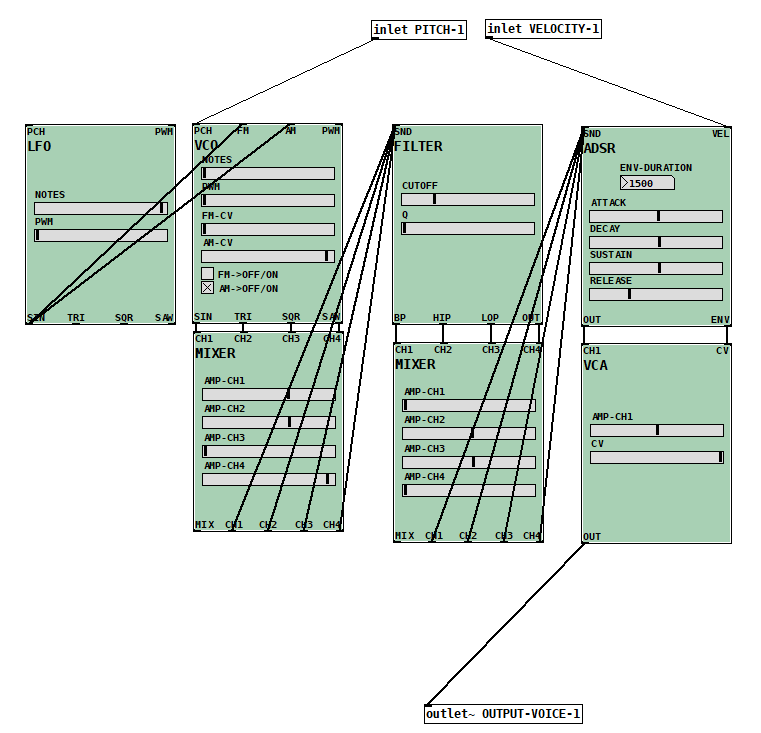

Kor'in - Advance 6-voice polyphonic synthesizer made with NoxSiren [v4.0]

Kor'in is an advanced example of how to create an entire synthesizer using only the NoxSiren [v4.0] system. It is a non-standard subtractive synthesizer model but can be modified into a hybrid complex model.

What is the NoxSiren system?

https://forum.pdpatchrepo.info/topic/13122/noxsiren-modular-synthesizer-system-v4-0

Kor'in download:

Kor'in.rar

-Kor'in Structure-

- Kor'in bird's-eye view

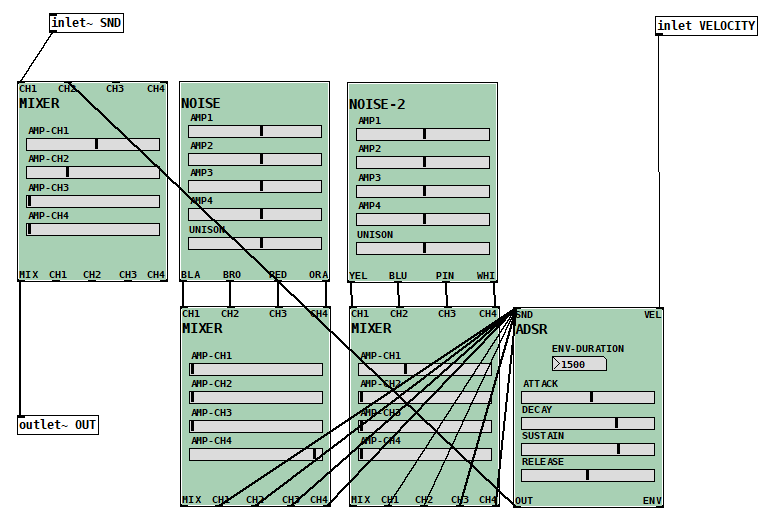

- Kor'in voice unit

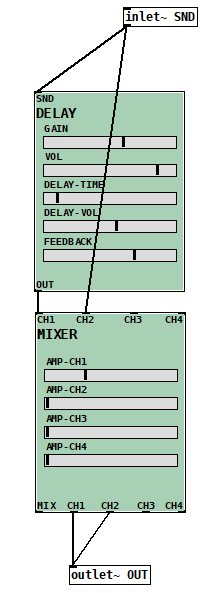

- Kor'in delay unit

- Kor'in noise unit

- Kor'in SMU unit

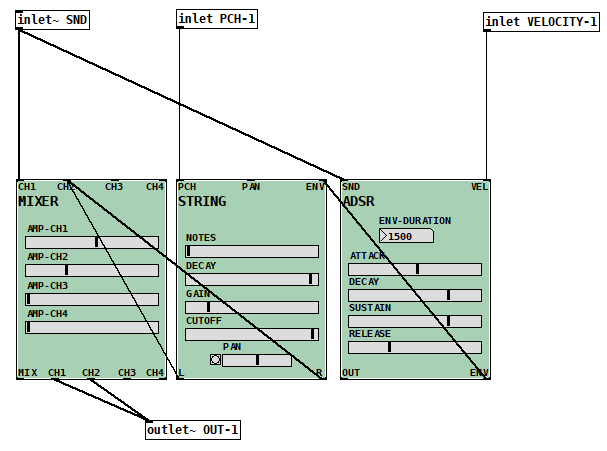

Optimizing pd performances to fit an RPI 3

@lysergik I ran a few tests, although they are a bit meaningless for your Pi, as I have an i7 2.3 GHz.

And so I deleted a bit of my last post.

I suppose the GUI using 5% of an 8 core processor would translate to 60% or more on your Broadcom chip.

The Pd process, at around 3%, would be about 40% maybe.

Simply minimising the GUI window removes nearly all of the GUI cpu usage as @Eeight suggests.

If you can effectively split the audio equally over 2 cores with [pd~] you might be able to get down to 20-25% per core on the RPI.

Is it possible in Linux to force the GUI (the Tcl/Tk app) to run on a set core? For example: core 1 GUI / core 3 Pd / core 4 [pd~].

The wish app and Pd communicate through ports anyway, so that will be possible if there is a way to permanently assign the executables to the cores.
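On Linux this is possible with CPU affinity: from a shell, `taskset -cp <core> <pid>` changes the affinity of a running process, and Python exposes the same system call as `os.sched_setaffinity`. A minimal sketch (Linux-only; cores are numbered from 0, so "core 1" above would be core 0; the PIDs in the commented example are placeholders):

```python
import os


def pin_to_cores(pid, cores):
    """Restrict process `pid` (0 means the calling process) to `cores`.

    Linux-only: os.sched_setaffinity is not available on macOS or Windows.
    Cores are numbered from 0. Returns the affinity actually in effect.
    """
    os.sched_setaffinity(pid, set(cores))
    return os.sched_getaffinity(pid)


if __name__ == "__main__":
    available = sorted(os.sched_getaffinity(0))
    print("cores this process may use:", available)
    # Hypothetical layout from the question: GUI, Pd and [pd~] on separate
    # cores. Replace the PIDs with real ones, e.g. from `pgrep wish`.
    # pin_to_cores(wish_pid, {0}); pin_to_cores(pd_pid, {2}); pin_to_cores(pd_tilde_pid, {3})
```

Affinity applies to the running process and is inherited by processes it starts afterwards, so it is not permanent across restarts; to make it stick, you would launch Pd under `taskset` each time (or from a startup script that does the pinning).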

David

Optimizing pd performances to fit an RPI 3

Well, I don't see the main difference from the result I showed before; maybe now we know that the vfprintf function call is linked to printf_chk, which I hadn't seen before while profiling. We also got a malloc call and some dac calls, so if you have any clue what that could mean, it would be great. I have to agree profiling isn't easy (it's my first time doing it ^^), but trying to fix the problem at such a low level could be the best way to find a real fix for this bottleneck.

@mnb Hello! First, thank you for uploading your patches, they're great! To answer you: no, I'm using groovebox 2, the one you recommend, so the problem isn't the filter object. But since you coded this patch, maybe you could run it on your setup and report here how much CPU it takes on your side. That way we could identify whether the problem comes from the code or from my hardware (I remember reading somewhere that embedded Intel GPUs could give crappy results with pd, so with a bit of luck I could find some kind of driver fix for this issue).

@EEight Well, when I get something running fine on my laptop I'll port it to the RPi, and of course I'll use -nogui, and even switch~ if possible. But with the two versions of my patch I get either 50% of my first core (with a 2 GHz CPU) or, with v2 of the patch, 4 cores peaking above 50%. Considering that my Pi uses a Broadcom BCM2837 with four 1.2 GHz cores, and that the frequency is locked by the system at around 900 MHz if I'm right, it's just impossible to run my patch on the RPi right now. I thought that, in theory, by splitting the audio processing to spread the computation over the processor, I would get 1 GHz of computation divided by four and could reach 250-300 MHz on each core, which could run very smoothly on an RPi. But because something is bugging somewhere, I've just multiplied the audio processing resources by FOUR! And -nogui (which I already use on my laptop) and other optimization tips such as latency don't help the CPU on my laptop, so I don't even want to think about their efficiency on an RPi. I also noticed that when I increased the latency of my Pure Data instances, CPU use went up! So it's not something I can use for optimization. And I didn't find a way to run pd with ALSA on my Pi; the only way I could get it to run without crashing when DSP is on is with JACK.