playing multiple audio files with sf-play2~

@KMETE said:

I was wondering if there is any difference between multiple sf-play2~ or readsf~ in term of memory usage?

[stereofile] loads the contents into RAM. (sf-play2~ is only the player, not the loader, but it depends on audio data loaded into RAM by stereofile.)

readsf~ streams from disk so its RAM usage should be much smaller.

One of the reasons why I worked on stereofile and sf-play2~ is that I think if you tell it to play an audio file at rate = 1, then it should sound like the file's normal speed at any Pd sample rate. Pd's built-in [tabplay~] does not do this -- if the system sample rate differs from the file's rate, it will play faster or slower than normal. That is, system settings affect the patch's behavior. I think [readsf~] also has this problem but I haven't checked, could be wrong about that.
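To make the sample-rate point concrete, here is a minimal Python sketch of the arithmetic involved. It is not the actual stereofile/sf-play2~ code; the function names are hypothetical and only illustrate the idea that a player which should sound normal at rate = 1 has to scale its read increment by the ratio of the file's sample rate to the system's.

```python
def read_increment(file_rate_hz, system_rate_hz, rate=1.0):
    """Samples of the file to advance per output sample so that
    rate = 1 sounds like the file's normal speed (hypothetical helper)."""
    return rate * file_rate_hz / system_rate_hz


def naive_speed(file_rate_hz, system_rate_hz):
    """Perceived speed factor when the player always advances exactly
    one file sample per output sample, ignoring the file's own rate."""
    return system_rate_hz / file_rate_hz


# A 44.1 kHz file played on a 48 kHz system:
print(read_increment(44100, 48000))  # increment below 1: read the file more slowly
print(naive_speed(44100, 48000))     # factor above 1: naive playback runs fast
```

With matching rates both functions return 1, which is why the difference only shows up when the system settings change.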

hjh

Trying to add key switch functionality to a midi controller

@whale-av said:

@Alan-Angel You could be right about the order of operations......... https://forum.pdpatchrepo.info/topic/13320/welcome-to-the-forum

You need to store the right hand note so that it can be played later by hitting the left key.

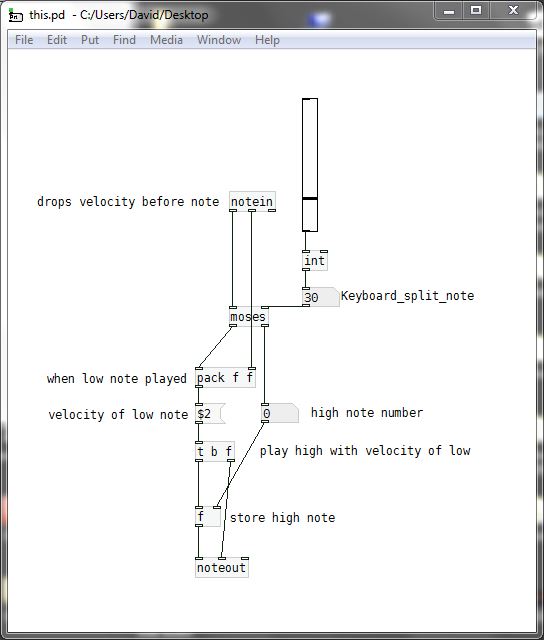

I have broken it down into (I hope) an understandable work flow for a mono player....... this.pd

So that even if it doesn't work you should be able to understand the order of operations and fix the problems...

[pack f f] receives the velocity of high notes.... but that value is replaced by the velocity of the low note just before the low note triggers the playing of the high note.

If you want to do this polyphonically you will need to use the [poly] object and to use the finished mono patch as an abstraction within a master patch containing [poly]...... probably using [clone].

That's slightly more complex.... but you will get there.....
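The mono workflow described above (store the right-hand note, then play it with the left-hand velocity when a left-hand key is hit) can be sketched outside Pd as a tiny state machine. This is only an illustration in Python, not a translation of this.pd: the class name, the split point of 60, and the choice to ignore note-offs and to stay silent when no right-hand note has been stored yet are all assumptions.

```python
class MonoKeySwitch:
    """Hypothetical mono key switch: right hand selects, left hand triggers."""

    def __init__(self, split=60):
        self.split = split          # assumed boundary between the two hands
        self.stored_pitch = None    # last right-hand pitch, if any

    def note_on(self, pitch, velocity):
        """Return (pitch, velocity) to send out, or None for no output."""
        if velocity == 0:
            return None             # note-off: ignored in this sketch
        if pitch >= self.split:
            self.stored_pitch = pitch   # right hand: remember, stay silent
            return None
        if self.stored_pitch is None:
            return None             # left hand first: nothing to play yet
        # left hand: play the stored pitch with the left-hand velocity
        return (self.stored_pitch, velocity)


ks = MonoKeySwitch()
print(ks.note_on(67, 90))    # right hand only stores the note
print(ks.note_on(36, 110))   # left hand triggers the stored pitch
print(ks.note_on(36, 80))    # repeated left-hand hits retrigger it
```

Repeated left-hand hits retrigger the same stored pitch, which is the behaviour a fast repeated-note bassline would need.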

David.

Thanks for your help. The low velocity isn't registering; I'll try to figure out why not, but I get the logic.

@kosuke16 said:

Whale is right, my bad. I tried it only with the numbers, no audio, so while the numbers were good, the timing wasn't. Since the note message is not continuous, you have to repack it so that it is sent at the same time as the velocity, making a coherent list of two values for noteout.

Thanks for your help so far!

@ddw_music said:

My question about this:

Right hand first, then left hand: Note should be produced upon the left-hand note on.

- What if one right-hand note is pressed, and two or three left-hand keys? Multiple notes, or only the first? (Polyphony, I suppose, would have to be right-left, right-left, not the same as right-left-left.)

Left hand first, then right hand: Should it play the note upon receipt of the right hand note-on, with the left-hand velocity? Or not play anything? Or something else?

- And, if it should sound, what about left-right-right? Portamento? Ignore the second?

Because a full solution will be different depending on the answer to these questions. These need to be thought through anyway because you can't guarantee correct input -- the logic needs to handle sequences that are not ideal, too.

hjh

-

I don't plan on playing two left-hand notes. I just want to play fast galloping basslines like dun du-du dun du-du dun du-du dun, and it's easier to do the rhythm with one hand and play the notes with the other, like a real bass.

-

Not sure about this one, I guess I'll know the more I play how I want it to work. Maybe default it to a low E like on a real bass for fun?

-

Agree, for now I just want some basic functionality to even see if the instruments I use can handle fast notes in the first place.

wanting to use a pitchbend to control array playback pitch?

+1 for rpole~.

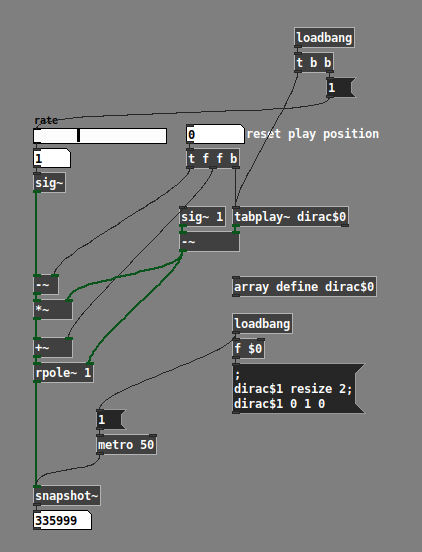

Resetting the playback position using rpole~ isn't obvious, though: you need a single sample where the left inlet = new position, and right inlet = 0, then flip immediately back to left inlet = rate, right inlet = 1.

I had asked awhile back what is the best way to generate a single-sample (Dirac) impulse in response to a bang -- it seems that tabplay~ is the best way. tabplay~ goes to zero at the end of the array, so we have to generate "1, 0 0 0 0 0." The rpole~ right inlet needs "0, 1 1 1 1 1" so, 1 - pulse.

Then we need the rate when this inverted pulse is 1, and the new position when it's 0: (rate * invertedpulse) + (newpos * (1 - invertedpulse)). A bit of algebra trades the two multiplications for a single one, leaving one multiplication plus one addition and one subtraction:

(rate * invertedpulse) + (newpos * (1 - invertedpulse))

= rate * invertedpulse + newpos - (newpos * invertedpulse)

= (rate - newpos) * invertedpulse + newpos
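The reset trick can be checked numerically. The Python sketch below models rpole~ as the one-pole recursion y[n] = x[n] + a[n] * y[n-1] and feeds it the two signals derived above; the function names and the example values (rate 1, new position 100, reset on the fourth sample) are made up for illustration.

```python
def rpole(left, right):
    """One-pole recursion: y[n] = left[n] + right[n] * y[n-1]."""
    y = 0.0
    out = []
    for x, a in zip(left, right):
        y = x + a * y
        out.append(y)
    return out


def playback_positions(rate, newpos, reset_at, n):
    """Accumulate `rate` per sample, jumping to `newpos` at sample `reset_at`."""
    pulse = [1.0 if i == reset_at else 0.0 for i in range(n)]   # "0 0 0 1 0 0"
    inverted = [1.0 - p for p in pulse]                          # "1 1 1 0 1 1"
    # left inlet: (rate - newpos) * invertedpulse + newpos
    left = [(rate - newpos) * inv + newpos for inv in inverted]
    return rpole(left, inverted)


print(playback_positions(rate=1.0, newpos=100.0, reset_at=3, n=6))
# [1.0, 2.0, 3.0, 100.0, 101.0, 102.0]
```

On the reset sample the coefficient is 0, so the accumulated position is discarded and replaced by the new position; on the next sample the coefficient is 1 again and accumulation resumes from there.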

You would, of course, replace the snapshot~ with tabread4~.

hjh

Question about [tabread4~]

@lacuna You tell it to read from 0 to arraysize-1, but where it would read the 1st sample, it outputs the value at the 2nd sample instead (as the 1st is a 'guard point'), and where it would read the last sample, it outputs the value at the 2nd-to-last (as the last is another 'guard point').

I'm not sure I'd say they get 'skipped', but maybe 'replaced' or 'clipped'
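A small Python model may help show the 'clipping'. It is a sketch based on my reading of how [tabread4~] limits its index and interpolates over four points, so treat the exact clamping limits and the interpolation formula as assumptions rather than a reference implementation.

```python
def tabread4(table, findex):
    """Four-point interpolating read with the index clamped so that the
    first and last points only ever serve as guard points (assumed model)."""
    maxindex = len(table) - 3
    index = int(findex)
    if index < 1:
        index, frac = 1, 0.0            # clamp low: output the 2nd point
    elif index > maxindex:
        index, frac = maxindex, 1.0     # clamp high: output the 2nd-to-last point
    else:
        frac = findex - index
    a, b, c, d = table[index - 1:index + 3]
    cminusb = c - b
    return b + frac * (
        cminusb - 0.1666667 * (1.0 - frac)
        * ((d - a - 3.0 * cminusb) * frac + (d + 2.0 * a - 3.0 * b))
    )


table = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]
print(tabread4(table, 0))   # 20.0: the 1st point is replaced by the 2nd
print(tabread4(table, 5))   # 50.0: the last point is replaced by the 2nd-to-last
print(tabread4(table, 2))   # 30.0: interior points read normally
```

In this model the first and last values are never output directly, but they still shape the interpolation near the ends, which is why 'replaced' or 'clipped' fits better than 'skipped'.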

Send .syx file

@oid said:

@ddw_music Long sysex messages work just fine if you treat them as what they are and what pd is quite good at dealing with, streams.

I do understand what you're saying, and I began with the same assumption -- that [midiout] should be just a low-level conduit -- byte goes in, byte gets sent out.

Unfortunately it doesn't work that way in practice.

My test scenario looks like this. Launch SuperCollider and Pure Data. It helps in my case that I'm testing in Linux, where I can create and remove MIDI connections arbitrarily in qjackctl's ALSA tab.

SC code:

MIDIClient.init;

MIDIIn.connect(0, MIDIClient.sources.detectIndex { |src| src.device.containsi("pure") });

m = MIDIOut(0).connect(MIDIClient.destinations.detectIndex { |dst| dst.device.containsi("pure") });

m.connect(MIDIClient.destinations.detectIndex { |dst| dst.device.containsi("superc") });

MIDIdef.sysex(\a, { |... args| args.postln });

Then I need to go to qjackctl and connect Pd's MIDI output to Pd's MIDI input.

At this point, I can send MIDI from either Pd or SuperCollider, and both SC and Pd will respond.

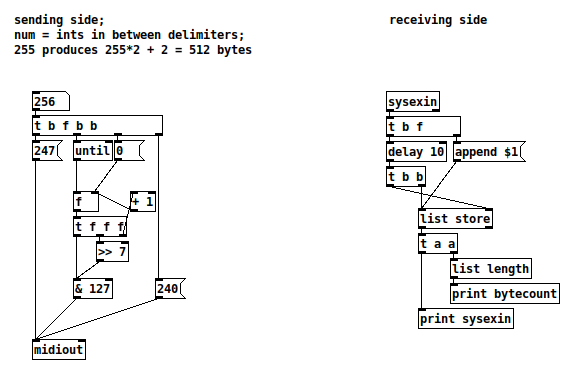

Pd patch, implementing "stream of bytes" (btw I tested both [sysexin] and [midiin], no difference in the results):

(Note also: Because I am collecting the MIDI-in bytes into a list, I also checked whether there is a maximum list length. There may be, but we won't hit any such limit in these tests -- I could go up to 5000 numbers in a list, no problem. So, in case of any message truncation, it must be happening in the MIDI objects, not in list handling.)

The test case will be to send a packet of n 14-bit integers (MSB first). The total packet size will be n*2 + 2, so if we want to produce exactly b bytes, n = (b / 2) - 1.
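For readers who don't use SuperCollider, the packet layout and the size arithmetic can be sketched in Python. The function name `build_packet` is hypothetical; the byte values (240 to open, 247 to close, two 7-bit bytes per integer with the MSB first) follow the description above.

```python
def build_packet(n):
    """Sysex packet holding the integers 0..n-1 as 14-bit values, MSB first."""
    out = [240]                      # 0xF0: sysex start
    for i in range(n):
        out.append((i >> 7) & 127)   # upper 7 bits
        out.append(i & 127)          # lower 7 bits
    out.append(247)                  # 0xF7: sysex end
    return out


def n_for_bytes(b):
    """Number of integers needed to produce exactly b bytes: n = b / 2 - 1."""
    return b // 2 - 1


for n in (10, 255, 256):
    print(n, len(build_packet(n)))   # sizes are n * 2 + 2: 22, 512, 514
```

These are the three packet sizes used in the tests that follow.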

SuperCollider sending:

(

f = { |n|

var out = Int8Array[240];

n.do { |i|

out = out.add(i >> 7 & 127).add(i & 127);

};

out.add(247);

};

)

// small packet

m.sysex(f.value(10)); // SC OK, Pd OK (22 bytes)

// 512 bytes

m.sysex(f.value(255)); // both OK

// 514 bytes

m.sysex(f.value(256)); // SC OK, Pd did not print

And my results for Pd sending:

- 22 bytes (n = 10): Both OK

- 512 bytes (n = 255): Both OK

- 514 bytes (n = 256): SC did not respond. Pd printed only 512 bytes (truncated).

Conclusions:

- When SC sent 514 bytes, SC received 514 bytes, so we know SC is sending a complete packet.

- Pd did not respond, suggesting that both [sysexin] and [midiin] simply stopped listening after 512 bytes, did not process the ending delimiter, and thus did not output the packet at all.

- When Pd was asked to send 514 bytes, Pd received only 512 bytes. This suggests that [midiout] is buffering the incoming bytes, waiting for a complete packet before sending, but it must have a limit of 512 bytes, causing the message to be truncated. (And SC didn't respond at all, which suggests that the closing delimiter was never sent.)

So again... in principle, you're correct -- it's technically possible for some [midiout] object to relay the data out, byte by byte. But neither [midiin] nor [midiout] is implemented that way.

hjh

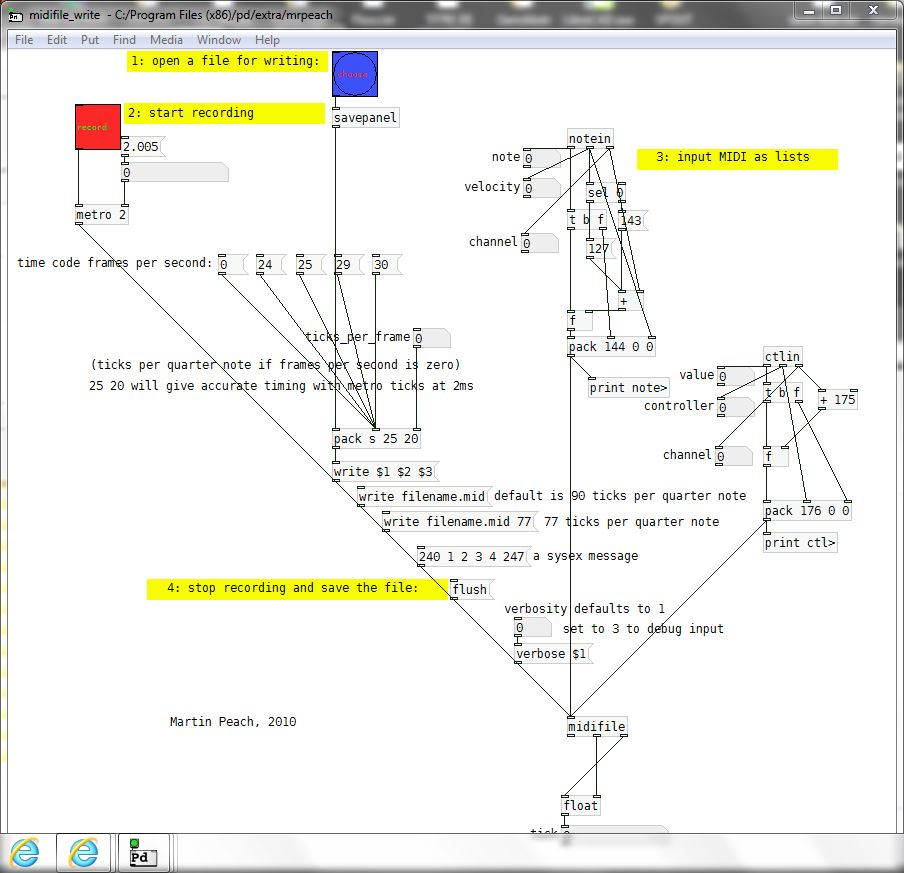

Question(s) about [mrpeach/midifile]

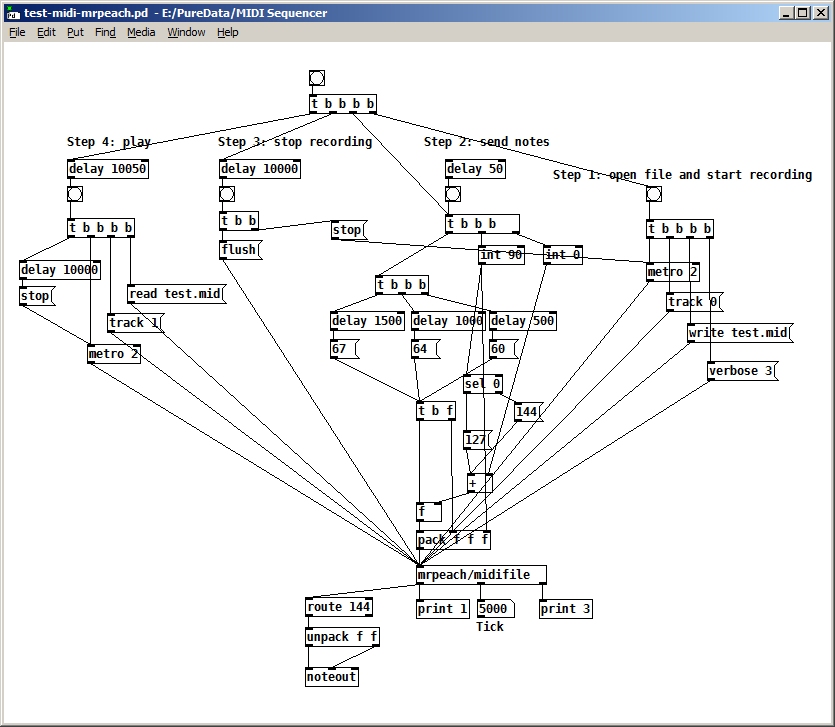

@dfkettle I would expect read/write to be first as they get the file/set the header for writing (MThd).

Then the track.... as that means moving on within the file to the next header for a read, or writing a Track header for a write (MTrk).

That's the order I tried first, but it didn't seem to work. Anyway, I changed it back again to agree with what you suggest, but it still seems to disregard the track number (it plays the track even though I specified track 0 when writing and track 1 when reading). Here's the latest version:

P.S. What does your midifile-help file look like?...... is it this one from 2010?

So for a write the track would go between "1" and "2".

Essentially, although it says "Martin Peach, 2010 - 2018" on mine. However, I'm wondering now how I would create a file with more than one track, if you have to specify the track number between steps "1" and "2". (in other words, before you start recording). Can you change the track number after you've started recording? How could you create two tracks that play at the same time? Maybe that isn't even supported by this library.

EDIT: Does the 'flush' message close the file after writing? I don't see anything in the help file about a 'close' message, so I'm assuming it does.

Question(s) about [mrpeach/midifile]

@dfkettle I would expect read/write to be first as they get the file/set the header for writing (MThd).

Then the track.... as that means moving on within the file to the next header for a read, or writing a Track header for a write (MTrk).

Especially as a read/write is going to flush out and replace the last .mid file loaded.

https://faydoc.tripod.com/formats/mid.htm#AppA
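As an illustration of the chunk order in a Standard MIDI File (one MThd header chunk first, then one MTrk chunk per track), here is a short Python sketch that builds the raw bytes by hand. It says nothing about what [midifile] itself supports; the helper names and the division value of 480 ticks per quarter note are assumptions chosen for the example.

```python
import struct

# Delta time 0 followed by the end-of-track meta event (FF 2F 00).
END_OF_TRACK = bytes([0x00, 0xFF, 0x2F, 0x00])


def mthd_chunk(fmt, ntracks, division):
    """Header chunk: 'MThd', length 6, then format, track count, division."""
    return b"MThd" + struct.pack(">IHHH", 6, fmt, ntracks, division)


def mtrk_chunk(events):
    """Track chunk: 'MTrk', byte length of the event data, then the data."""
    return b"MTrk" + struct.pack(">I", len(events)) + events


# Format 1 file with two (empty) tracks meant to play simultaneously.
data = mthd_chunk(1, 2, 480) + mtrk_chunk(END_OF_TRACK) + mtrk_chunk(END_OF_TRACK)
print(len(data))     # 14 header bytes + 2 * (8 + 4) track bytes = 38
print(data[:4], data[14:18])
```

The header has to come first because it declares the format and the number of track chunks that follow, which matches the read/write-then-track order suggested above.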

David.

P.S. What does your midifile-help file look like?...... is it this one from 2010?

So for a write the track would go between "1" and "2".

For a read it can probably be called at any time after the file is opened........ but certainly not before..

midifile-help.pd

fx3000~: 30 effect abstraction for use with guitar stompboxes effects racks, etc.

It still does not work.

I added my-guitar-rig/my-guitar-rig~ to a window and it generated a lot of errors

I am running version 0.52.1 of pd

Hopefully this is helpful. The errors I got are:

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

io pair already connected

delay(wavey)(v).pd 39 0 40 0 (snapshot~->gatom) connection failed

tof/pmenu 1 1 black white red

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

io pair already connected

delay(wavey)(v).pd 39 0 40 0 (snapshot~->gatom) connection failed

tof/pmenu 1 1 black white red

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

io pair already connected

delay(wavey)(v).pd 39 0 40 0 (snapshot~->gatom) connection failed

tof/pmenu 1 1 black white red

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

date

... couldn't create

time

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

mknob 42 0 0 1 0 0 empty empty ratio:1.5:1 -2 -6 0 10 -262144 -1 -1 20175 1

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

pd-float: rounding to 2048 points

warning: fx3000-in-2: multiply defined

warning: fx3000-in-2: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-0: multiply defined

warning: fx3000-in-0: multiply defined

pd-float: rounding to 2048 points

pd-float: rounding to 2048 points

warning: fx3000-in-2: multiply defined

warning: fx3000-in-2: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-0: multiply defined

warning: fx3000-in-0: multiply defined

pd-float: rounding to 2048 points

pd-float: rounding to 2048 points

warning: fx3000-in-2: multiply defined

warning: fx3000-in-2: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-0: multiply defined

warning: fx3000-in-0: multiply defined

pd-float: rounding to 2048 points

[comport] serial issues

The Arduino code is:

```
#include <FastLED.h>

#define LED_PIN 7
#define LED_PIN2 6
#define LED_PIN3 5
#define LED_PIN4 4
#define LED_PIN5 3
#define NUM_LEDS 1

CRGB leds[NUM_LEDS];
CRGB leds2[NUM_LEDS];
CRGB leds3[NUM_LEDS];
CRGB leds4[NUM_LEDS];
CRGB leds5[NUM_LEDS];

int incoming[5] = {0, 0, 0, 0, 0};

void setup() {
  FastLED.addLeds<WS2812, LED_PIN, GRB>(leds, NUM_LEDS);
  FastLED.addLeds<WS2812, LED_PIN2, GRB>(leds2, NUM_LEDS);
  FastLED.addLeds<WS2812, LED_PIN3, GRB>(leds, NUM_LEDS);
  FastLED.addLeds<WS2812, LED_PIN4, GRB>(leds2, NUM_LEDS);
  FastLED.addLeds<WS2812, LED_PIN5, GRB>(leds, NUM_LEDS);
  Serial.begin(9600);
}

void loop() {
  if (Serial.available() > 0) {
    // read the incoming byte:
  }

  incoming[0] = Serial.read();
  incoming[1] = Serial.read();
  incoming[2] = Serial.read();
  incoming[3] = Serial.read();
  incoming[4] = Serial.read();

  leds[0] = CRGB(incoming[0], incoming[0], incoming[0]);
  FastLED.show();
  leds2[0] = CRGB(incoming[1], incoming[1], incoming[1]);
  FastLED.show();
  leds3[0] = CRGB(incoming[2], incoming[2], incoming[2]);
  FastLED.show();
  leds4[0] = CRGB(incoming[3], incoming[3], incoming[3]);
  FastLED.show();
  leds5[0] = CRGB(incoming[4], incoming[4], incoming[4]);
  FastLED.show();
}
```

Which works using the following max patch: