how can I get the full error text on a Mac?

@jameslo For me, spacing out each loadbang has been trial and error to get a patch to work, although you do have to think the patch through in terms of "what" should load before "what".

I see you using [catch] but no "no corresponding catch" messages so maybe not the problem.

In the windows patch the arrays are strange? If you open them is there actually anything inside? I doubt it. The $0 has not been translated in their names, and they are exact copies of each other (all the "left" are the same and all the "right" are the same)...... unless the $0's are different having been drawn from separate abstractions with different $0 values. Again, I doubt that is the case looking at your screenshot.. But if they are different it must be a load timing issue again. The OS might need a little more time to re-read the file repeatedly.

So there is probably one that has created correctly, and the others cannot because they have the same name, or none have created because an I/O read was abandoned as a request to read again was received. Actually that is likely?? Read the same file again.... well, I will cancel the last read then!!... might be the response from the OS, and might be different depending on which OS it is, and whether it has an SSD or a hard drive. All OS's will treat media files differently than other file types. They often load just the header and a few Mbytes of media and wait for instruction to continue.

That is (maybe) where the problem lies, especially as the error prints just after loading the exact same wav file numerous times.

If the $0's are all the same then you should find that giving them $1 names instead of $0 will solve the problem.

You could try printing each loadbang in your sequence to the terminal (with an identifier) to narrow down the exact moment of the error, but I am pretty sure it is that $0, or a "read the file again" issue.

David.

[gme~] / [gmes~] - Game Music Emu

Allows you to play various game music formats, including:

AY - ZX Spectrum/Amstrad CPC

GBS - Nintendo Game Boy

GYM - Sega Genesis/Mega Drive

HES - NEC TurboGrafx-16/PC Engine

KSS - MSX Home Computer/other Z80 systems (doesn't support FM sound)

NSF/NSFE - Nintendo NES/Famicom (with VRC 6, Namco 106, and FME-7 sound)

SAP - Atari systems using POKEY sound chip

SPC - Super Nintendo/Super Famicom

VGM/VGZ - Sega Master System/Mark III, Sega Genesis/Mega Drive, BBC Micro

The externals use the game-music-emu library, which can be found here: https://bitbucket.org/mpyne/game-music-emu/wiki/Home

[gme~] has 2 outlets for left and right audio channels, while [gmes~] is a multi-channel variant that has 16 outlets for 8 voices, times 2 for stereo.

[gmes~] only works for certain emulator types that have implemented a special class called Classic_Emu. These types include AY, GBS, HES, KSS, NSF, SAP, and sometimes VGM. You can try loading other formats into [gmes~] but most likely all you'll get is a very sped-up version of the song and the voices will not be separated into their individual channels. Under Linux, [gmes~] doesn't appear to work even for those file types.

Luckily, there's a workaround which involves creating multiple instances of [gme~] and dedicating each one to a specific voice/channel. I've included an example of how that works in the zip file.

Methods

- [ info ( - Post game and song info, and track number in the case of multi-track formats

- this currently does not include .rsn files, though I plan to make that possible in the future. Since .rsn is essentially a .rar file, you'll need to first extract the .spc's and open them individually.

- [ path ( - Post the file's full path

- [ read $1 ( - Reads the path of a file

- To get gme~ to play music, start by reading in a file, then send either a bang or a number indicating a specific track.

- [ goto $1 ( - Seeks to a time in the track in milliseconds

- Still very buggy. It works well for some formats and not so well for others. My guess is it has something to do with emulators that implement Classic_Emu.

- [ tempo $1 ( - Sets the tempo

- 0.5 is half speed, while 2 is double. It caps at 4, though I might eventually remove or increase the cap if it's safe to do so.

- [ track $1 ( - Sets the track without playing it

- sending a float to gme~ will cause that track number to start playing if a file has been loaded.

- [ mute $1 ... ( - Mutes the channels specified.

- can be either one value or a list of values.

- [ solo $1 ... ( - Mutes all channels except the ones specified.

- it toggles between solo and unmute-all if it's the same channel(s) being solo'd.

- [ mask ($1) ( - Sets the muting mask directly, or posts its current state if no argument is given.

- muting actually works by reading an integer and interpreting each bit as an on/off switch for a channel.

- -1 mutes all channels, 0 unmutes all channels, 5 would mute the 1st and 3rd channels since 5 in binary is 0101.

- [ stop ( - Stops playback.

- start playback again by sending a bang or "play" message, or a float value

- [ play | bang ( - Starts playback or pauses/unpauses when playback has already started, or restarts playback if it has been stopped.

- "play" is just an alias for bang in the event that it needs to be interpreted as its own unique message.
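The mask values listed under [ mask ( above can be checked with a small Python sketch. The helper names are made up for illustration and are not part of gme~. It assumes channels are numbered from 1 with channel 1 on the lowest bit (as the 5 = 0101 example implies) and 8 voices, as in [gmes~].

```python
def channels_to_mask(channels):
    """Build a mute mask from 1-based channel numbers (bit 0 = channel 1)."""
    mask = 0
    for ch in channels:
        mask |= 1 << (ch - 1)
    return mask


def muted_channels(mask, voice_count=8):
    """List the 1-based channels whose bit is set in the mask."""
    return [ch for ch in range(1, voice_count + 1) if mask & (1 << (ch - 1))]


print(channels_to_mask([1, 3]))  # 5
print(muted_channels(5))         # [1, 3]
print(muted_channels(-1))        # [1, 2, 3, 4, 5, 6, 7, 8]
print(muted_channels(0))         # []
```

-1 mutes everything because, as a two's-complement integer, every bit of -1 is set.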

Creation args

Both externals optionally take a list of numbers, indicating the channels that should be played, while the rest get muted. If there are no creation arguments, all channels will play normally.

Note: included in the zip are libgme.* files. These files, depending on which OS you're running, might need to accompany the externals. In the case of Windows, libgme.dll almost definitely needs to accompany gme(s)~.dll

Also, gme can read m3u's, but not directly. When you read a file like .nsf, gme will look for a file that has the exact same name but with the extension m3u, then use that file to determine track names and in which order the tracks are to be played.
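As a rough illustration of that lookup rule (same name, extension swapped to m3u), here is a Python sketch. The function name and the example file name are hypothetical, and it only derives the companion path; it says nothing about how gme actually parses the playlist.

```python
from pathlib import Path


def companion_m3u(music_file):
    """Return the path with the same name but an .m3u extension."""
    return Path(music_file).with_suffix(".m3u")


# Hypothetical file name, used only for illustration.
print(companion_m3u("songs/example.nsf").name)  # example.m3u
```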

creating a unique text file for multiple instances of an abstraction

@nicnut

$0 is a VERY bad idea, as its value can change when reopening the patch, depending on other patches and abstractions already open. You will end up with text files with meaningless unpredictable names

It is great though for keeping messages private within a patch or an abstraction....

Also a $1 in a message box takes the value of the first atom of an incoming list ($2 the second), and is not the value of an argument of the abstraction. $1, $2 etc. only take the arguments of an abstraction when they are in an object box.

You can use a float, or a symbol as an argument.

Symbols can be easier to "follow" through the patch and the text file names, as they carry more meaning.

Give your abstractions their arguments (or just one if the text file name is all you need... $1) ....... [abstr 1 x y woof]...... [abstr 2 z a howl] etc.....

Then use one of them to name the text file to write....... so writing $4.txt would write woof.txt for the first and howl.txt for the second.

Writing $1.txt would write 1.txt for the first and 2.txt for the second....

Writing $2.txt would write x.txt for the first and z.txt for the second....

Etc.
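The substitution above can be mimicked outside Pd with a few lines of Python. This is only a sketch of how $1, $2, ... in an object box map onto an abstraction's creation arguments; the function name is invented, and it deliberately ignores $0 and the different meaning of $1 inside a message box.

```python
import re


def expand_dollar_args(template, args):
    """Replace $1, $2, ... with the matching creation argument (1-based)."""
    def substitute(match):
        index = int(match.group(1))
        return str(args[index - 1])
    return re.sub(r"\$([1-9]\d*)", substitute, template)


abstr_1 = [1, "x", "y", "woof"]   # [abstr 1 x y woof]
abstr_2 = [2, "z", "a", "howl"]   # [abstr 2 z a howl]

print(expand_dollar_args("$4.txt", abstr_1))  # woof.txt
print(expand_dollar_args("$4.txt", abstr_2))  # howl.txt
print(expand_dollar_args("$1.txt", abstr_2))  # 2.txt
```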

Here is an example........ example.zip

[mother] contains two copies of [abstr]........ [abstr 1] and [abstr 2].

You will see that the 1, or the 2, replace the [$1] value which is then sent to the $1 in the write message.

If you want to use a symbol then replace [$1]... the float object...... with [symbol $1]....... as in the [abstr] example.

David.

2017/10/12 00:16 - "You, you , YOU, yoU"

Think some of you might get a kick out of this (caveat I haven't done any spoken-word improv in a long time, so I can't really vouch for the quality of my words):

The pd part:

The recording was done using an old ps2-jack Logitech footpedal, which I found out really only has 4 electrical lines out (red, black, white, and green) directly connected to the potentiometers. So I routed them to my arduino and sent those signals (once normalized) to 2 effects, one on the left and one on the right channel of a stereo recording, plus a second line (vocal) to the right input, using a really cool double 1/4" female to 1/8" stereo male adapter cable I picked up at Guitar Center.

So I sent in 2 signals, left and right. Right I left clean as vocal and recorded to its own stereo track, and left I divided and sent to a second stereo track, with the left then being a delay-feedback line (DIY2 abs) and the right being a pitchshifter (DIY2).

So as I played my left foot ramped (from 0 to 1) the delay and my right foot ramped (also from 0-1) the pitchshifter.

Very happy with the results.

Found myself thinking it is kind of representative (given the vocals) of what might be called "folk acid-rock".

Tally ho. And Good Luck in all your endeavors.

Would love to hear what folks think.

Hope you enjoy it or at least find it entertaining.

Happy Pding.

Ciao for Now.

-S

oscformat and oscparse... /integer not routing as expected

@whale-av Hello David! Thanks for the reply. A bit confused about this, still.

So, here’s a message sent by TouchOSC:

list page4 multifader1 1 0.193394

My attempt at routing this…

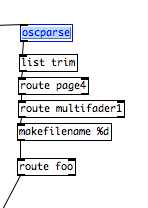

[oscparse]

| [list trim]

| [route page4]

| [route multifader1]

| [makefilename %d]

| |

[route foo]

Getting the error:

makefilename: no method for '1'

Might have misunderstood the patching itself, though.

Here’s what it looks like in Pd.

use of threads for i²c I/O external : looking for a good strategy

Hi there,

I'm developing an audio device based on a cubieboard2(armf). I use potentiometers read by an adc that communicates through i²c protocol with my cubie. An i²c lcd display shows the desired parameters values.

I wrote externals in order to get data from the potentiometers (and other switches, and a rotary encoder), or send data to the display. Everything was working, but the i²c I/O functions calls were leading to clicks 'n pops in the audio, which was unacceptable. I use a rt patched debian with selected rt priorities for irqs and audio as I always did with success, and I am looking for a smart way to make my pd patches communicate with my physical interface, in a transparent way at audio level.

Then I opened this topic : http://forum.pdpatchrepo.info/topic/9489/external-i2c-data-reader-leads-to-clicks-standalone-version-works-better , and @Eeight proposed to implement threads, kindly giving a template in order to show me the way.

And now I'd need some advice. I'm not a real C programmer, just learning the empirical way, and I'm reaching my limits... I made a threaded version of the external that reads the potentiometers every x milliseconds, and it works perfectly, no audio pollution anymore. But when I made a threaded version of the external that handles the i²c display (thus receiving incoming messages such as "position x y", or "write message"), it led to timing problems.

Some of the messages get uninterpreted because my writing function is threaded, and takes the form of an infinite while(1) loop containing a "usleep(10000)" instruction at the end of it in order to limit cpu load. The problem is that this leads to the "loss" of most of the incoming messages...

When reading potentiometer values, it's ok to get them only once every 10ms, but when you have to send messages that can be interpreted only once every 10ms, you have to send them to the external with delays, which works (that's my present situation), but is tedious and inelegant.

Would someone have an idea of how this problem could be addressed in a more elegant manner ?

Here is how I implemented it :

- I create a thread for an infinite while (1) loop containing a call to a writing function whenever a flag equals 1, followed by a usleep(10000) instruction.

This "thread" is running from the beginning and waits for a nonzero value of the flag to enter into action. It reads the string to be displayed from the object's data structure, but only when the "clocking" allows it together with the flag.

- A "write" method can receive a t_symbol : the string to display. When a "write blahblah" message is received by the object, the string "blahblah" is stored in the object's data structure, and the flag is set to 1.

- Then the next time the threaded loop evaluates the flag, it displays "blahblah", resets the flag to 0 and sleeps for 10ms.

But when you use incoming messages using 10 lines messages (allowing for cursor positioning and writing orders), they of course flow through the code in far less than 10ms, hence my problems... In other words the string to be displayed can be changed several times in the object's data structure without being actually displayed because of the relatively slow "clocking".
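To make the message loss concrete, here is a small single-threaded Python simulation (not the C external itself). `run_single_slot` models the flag-plus-one-string design described above, where each "tick" stands for one wake-up of the 10 ms loop. `run_fifo` is one possible alternative, assumed here purely for comparison: incoming messages are appended to a queue and the loop drains everything pending on each wake-up. All names are hypothetical.

```python
from collections import deque


def run_single_slot(bursts):
    """One string slot plus a flag: later messages overwrite earlier ones."""
    slot, flag = None, 0
    displayed = []
    for burst in bursts:              # one burst = messages arriving between wake-ups
        for message in burst:
            slot, flag = message, 1   # "write" method stores the string, sets the flag
        if flag:                      # worker loop wakes up once per tick
            displayed.append(slot)
            flag = 0
    return displayed


def run_fifo(bursts):
    """Queue incoming messages; the worker drains the whole queue per wake-up."""
    pending = deque()
    displayed = []
    for burst in bursts:
        pending.extend(burst)
        while pending:
            displayed.append(pending.popleft())
    return displayed


bursts = [["position 0 0", "write hello", "position 0 1", "write world"], []]
print(run_single_slot(bursts))  # ['write world']
print(run_fifo(bursts))         # all four messages, in order
```

In a real threaded external the queue would be shared between Pd's thread and the worker thread, so access to it would need to be protected (for example with a mutex), which this simulation leaves out.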

Please forgive the incredible length of this message, together with the fact that I don't post the source yet because of a basic shame of my inelegant coding style. Maybe I will finally clean it and post it later.

Thank you,

Nau

Beatmaker Abstract

http://www.2shared.com/photo/mA24_LPF/820_am_July_26th_13_window_con.html

I conceptualized this the other day. The main reason I wanted to make this is because I'm a little tired of complicated ableton live. I wanted to just be able to right click parameters and tell them to follow midi tracks.

The big feature in this abstract is a "Midi CC Module Window" that contains an unlimited (or potentially very large) number of Midi CC Envelope Modules. In each Midi CC Envelope Module are Midi CC Envelope Clips. These clips hold a waveform that is plotted on a tempo divided graph. The waveform is played in a loop and synced to the tempo according to how long the loop is. Only one clip can be playing per module. If a parameter is right clicked, you can choose "Follow Midi CC Envelope Module 1" and the parameter will then be following the envelope that is looping in "Midi CC Envelope Module 1".

Midi note clips function in the same way. Every instrument will be able to select one Midi Notes Module. If you right clicked "Instrument Module 2" in the "Instrument Module Window" and selected "Midi input from Midi Notes Module 1", then the notes coming out of "Midi Notes Module 1" would be playing through the single virtual instrument you placed in "Instrument Module 2".

If you want the sound to come out of your speakers, then navigate to the "Bus" window. Select "Instrument Module 2" with a drop-down check off menu by right-clicking "Inputs". While still in the "Bus" window look at the "Output" window and check the box that says "Audio Output". Now the sound is coming through your speakers. Check off more Instrument Modules or Audio Track Modules to get more sound coming through the same bus.

Turn the "Aux" on to put all audio through effects.

Work in "Bounce" by selecting inputs like "Input Module 3" by right clicking and checking off Input Modules. Then press record and stop. Copy and paste your clip to an Audio Track Module, the "Sampler" or a Side Chain Audio Track Module.

Work in "Master Bounce" to produce audio clips by recording whatever is coming through the system for everyone to hear.

Chop and screw your audio in the sampler with highlight and right click processing effects. Glue your sample together and put it in an Audio Track Module or a Side Chain Audio Track Module.

Use the "Threshold Setter" to perform long linear modulation. Right click any parameter and select "Adjust to Threshold". The parameter will then adjust its minimum and maximum values over the length of time described in the "Threshold Setter".

The "Execution Engine" is used to make sure all changes happen in sync with the music.

IE>If you selected a subdivision of 2, and a length of 2, then it would take four quarter beats (starting from the next quarter beat) for the change to take place. So if you're somewhere in the "a" (1e+a) then you will have to wait for 2, 3, 4, 5 to pass and your change would happen on 6.

IE>If you selected a subdivision of 1 and a length of 3, you would have to wait 12 beats starting on the next quarter beat.

IE>If you selected a subdivision of 8 and a length of 3, you would have to wait one and a half quarter beats starting on the next 8th note.
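The three examples above are consistent with a simple rule: treat the subdivision as a note value (1 = whole note, 2 = half note, 8 = eighth note) and multiply by the length. The Python sketch below encodes that rule. The formula is inferred from the examples rather than stated anywhere in the design, and the function name is invented; it also leaves out the extra wait until the next grid point.

```python
from fractions import Fraction


def wait_in_quarter_beats(subdivision, length):
    """Quarter beats to wait: `length` notes of value 1/subdivision."""
    return Fraction(4 * length, subdivision)


print(wait_in_quarter_beats(2, 2))          # 4
print(wait_in_quarter_beats(1, 3))          # 12
print(float(wait_in_quarter_beats(8, 3)))   # 1.5
```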

http://www.pdpatchrepo.info/hurleur/820_am,_July_26th_13_window_conception.png

Pduino-based multi-arduino wireless personal midi controller network

Saw your TED video, so maybe you've already solved this problem.

In my limited work with getting arduino and pd to play nice, I've found that things like pduino and firmata work great but can be restrictive. I had to multiplex inputs on my arduino, which doesn't play nice with something like firmata that automatically reads all the pin values.

It might be better to have each arduino on its own [comport], and differentiate the arduinos that way. Dump pin values over each comport and keep reading it.

Here's the thread explaining what I did:

http://puredata.hurleur.com/sujet-5832-reading-multiplexed-input-streams-arduino

I'm a fan of your work.

Polyphonic voice management using [poly]

Keeping track of note-ons and note-offs for a polyphonic synth can be a pain. Luckily, the [poly] object can be used to take care of that for you. However, the nuts and bolts of how to use it may not be immediately obvious, particularly given its sparse help patch. Hopefully this tutorial will clarify its usefulness. It will probably be easier to follow along with this explanation if you open the attached patch. I'll try to be thorough, which hopefully won't actually make it more confusing!

To start, [poly] accepts a MIDI-style message of note number and velocity in its left and right inlets, respectively...

[notein]

| \

[poly 4]

...or as a list in its left inlet.

[60 100(

|

[poly 4]

The first argument is the maximum number of voices (or note-ons) that [poly] will keep track of. When [poly] receives a new note-on, it will assign it a voice number and output the voice number, note number, and velocity out its outlets. When [poly] gets a note-off, it will automatically match it with its corresponding note-on and pass it out with the same voice number.

By [pack]ing the outputs, you can use [route] to send the note number and velocity to the specified voice. For those of you not familiar, [route] will take a list, match the first element of the list to one of its arguments, and send the rest of the list through the outlet that goes with that argument. So, if you have [route 1 2 3], and you send it a list where the first element is 2, then it will pass the rest of the list to the second outlet because 2 is the second argument here. It's basically a way of assigning "tags" to messages and making sure they go where they are assigned. If there is no match, it sends the whole list out the last outlet (which we won't be using here).

[poly 4]

| \ \

[pack f f f] <-- create list of voice number, note, and velocity

|

[route 1 2 3 4] <-- send note and velocity to the outlet corresponding to voice number
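For anyone who finds text easier than patch cords, the [route] behaviour described above can be modelled in a few lines of Python. This is only a sketch of the matching rule for lists, with an invented function name, not a description of how Pd implements the object.

```python
def route(selectors, message):
    """Return (outlet_index, output) for one incoming list."""
    head, rest = message[0], message[1:]
    if head in selectors:
        # Matched: strip the first element, send the rest to that outlet.
        return selectors.index(head), rest
    # No match: the whole list goes out the extra last outlet.
    return len(selectors), message


# Voice 2 playing note 60 at velocity 100, as packed from [poly]:
print(route([1, 2, 3, 4], [2, 60, 100]))  # (1, [60, 100]) -> second outlet
print(route([1, 2, 3, 4], [9, 60, 100]))  # (4, [9, 60, 100]) -> last outlet
```

Outlet indices here count from 0, so index 1 is the second outlet.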

At each outlet of [route] (except the last) there should be a voice subpatch or abstraction that can be triggered on and off using note-on and note-off messages, respectively. In most cases, you'll want all the voices to be exact copies of each other. (See the attached for this. It's not very ASCII art friendly.)

The last thing I'll mention is the second argument to [poly]. This argument is to activate voice-stealing: 1 turns voice-stealing on, 0 or no argument turns it off. This determines how [poly] behaves when the maximum number of voices has been exceeded. With voice-stealing activated, once [poly] goes over its voice limit, it will first send a note-off for the oldest voice it has stored, thus freeing up a voice, then it will pass the new note-on. If it is off, new note-ons are simply ignored and don't get passed through.
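The voice bookkeeping described in this tutorial can be sketched in Python as well. The class below is a simplified model with an invented name: it hands out the lowest free voice number, which is an assumption and may not match the order the real [poly] uses, and it treats velocity 0 as a note-off.

```python
class PolySketch:
    """Toy model of [poly]: returns (voice, note, velocity) tuples."""

    def __init__(self, voices, steal=False):
        self.voices = voices
        self.steal = steal
        self.active = {}  # voice number -> note; dict order tracks age

    def note(self, pitch, velocity):
        out = []
        if velocity == 0:
            # Note-off: find the voice holding this pitch and release it.
            voice = next((v for v, held in self.active.items() if held == pitch), None)
            if voice is not None:
                del self.active[voice]
                out.append((voice, pitch, 0))
            return out
        if len(self.active) >= self.voices:
            if not self.steal:
                return out  # no stealing: the new note-on is ignored
            oldest = next(iter(self.active))
            out.append((oldest, self.active.pop(oldest), 0))  # free the oldest voice
        voice = min(v for v in range(1, self.voices + 1) if v not in self.active)
        self.active[voice] = pitch
        out.append((voice, pitch, velocity))
        return out


poly = PolySketch(2, steal=True)
print(poly.note(60, 100))  # [(1, 60, 100)]
print(poly.note(64, 100))  # [(2, 64, 100)]
print(poly.note(67, 100))  # [(1, 60, 0), (1, 67, 100)]
print(poly.note(64, 0))    # [(2, 64, 0)]
```

With `steal=False` the third note-on would simply return an empty list.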

And that's it. It's really just a few objects, and it's all you need to get polyphony going.

[notein]

| \

| \

[poly 4 1]

| \ \

[pack f f f]

|

[route 1 2 3 4]

| | | |

( voices )

Controlling from the keyboard

Hi,

Route can be used to route pairs of data to specific places.

From [keyname] you get two pieces of information: the key label and an up/down action, e.g.:

Up 0

Up 1

Think of the first value as the receiver name and the second as the variable you want to pass with it. The [route] object will then output the variable associated with a receiver that matches one of its arguments. [route] will also strip off the receiver name so that you are left with just the variable.

[keyname]

| \

// create a list from keyname e.g. 'Up 1'

|

// route doesn't like lists so we strip off the list prefix

|

[route Up] // looks for a message with 'Up' in it and passes the next value to its outlet

|

[sel 1]

|

[O]