GEM: pix_chroma_key terminology

"if the pixel in the key source lies within the range, then it is replaced by the corresponding pixel in the other stream"

and

"Inlet 1: direction 0|1 : which stream is the key-source (0=left stream; 1 = right stream)"

So -- "direction 0" should mean that matching colors in the left image would be replaced by pixels from the right image (because 0 means that the left stream is the key source and, per above, pixels from the key source within range are replaced).
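Read literally, the two documentation statements amount to the per-pixel rule sketched below. This is Python, not GEM code, and it models only the documented behaviour, not what the object actually does. The function names and the reading of "range" as a per-channel tolerance around "value" are assumptions made for illustration.

```python
# Sketch of the *documented* pix_chroma_key rule, one pixel at a time.
# Assumption: a pixel is "within the range" when every channel is within
# +/- range of the key value. Names here are hypothetical.

def in_range(pixel, value, rng):
    """True if each channel of pixel lies within value +/- rng."""
    return all(abs(p - v) <= r for p, v, r in zip(pixel, value, rng))

def chroma_key_pixel(left, right, value, rng, direction=0):
    """direction 0: left stream is the key source; 1: right stream is."""
    key_src, other = (left, right) if direction == 0 else (right, left)
    # Per the help text: a key-source pixel within range is replaced
    # by the corresponding pixel of the other stream.
    return other if in_range(key_src, value, rng) else key_src

green = (0.129, 0.945, 0.0)      # background colour of the left image
dino = (0.5, 0.3, 0.2)           # some non-green foreground pixel
backdrop = (0.1, 0.1, 0.8)       # pixel from the right image
key, tol = (0.129, 0.875, 0.0), (0.15, 0.15, 0.15)

print(chroma_key_pixel(green, backdrop, key, tol, direction=0))  # (0.1, 0.1, 0.8)
print(chroma_key_pixel(dino, backdrop, key, tol, direction=0))   # (0.5, 0.3, 0.2)
```

Under this reading, direction 0 would keep the dinosaur and replace the green, which is the expectation the rest of this post tests.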

So I've got an image of a bad CGI dinosaur with a solid green background = 0.129 0.945 0 (RGB) -- putting this into the left inlet of chromakey. This is the one whose pixels should be replaced, so... direction 0, right?

But when I set "value 0.129 0.875 0" and "range 0.15 0.15 0.15" to match the green background, then I get the background coming through solid, and a transparent hole in the middle where the dinosaur is.

If I set "direction 1" then I get the foreground dinosaur, and the green gets replaced.

So it seems that the "direction" message is explained backward? Or is that part correct, and the explanation of "key source" is wrong?

Or perhaps there is some other way to interpret those statements...?

hjh

Audio clicks occur when changing start point and end point using |phasor~| and |tabread4~|

@Junzhe-hou said:

@ddw_music Hi professor!?!? good to see you here!

Yes, it's me -- I almost didn't notice your username

I read your email last week but im so confused with your patch--varispeed-segment:

|noise~|
|
|lop~ 3|
|
|*~ 30|
|
|+~ 1|

This is just a way to generate a modulator for the playback rate. It could be any other modulator (LFO, envelope, anything).

After that, this is multiplied by a sample rate scaling factor.

As you asked jameslo: "if sample rate (in audio setting) changed the result sound different":

- If the file sample rate is 96 kHz and the soundcard sample rate is 96 kHz, then normal-speed playback is to move forward exactly 1 sample in the file for every output sample.

- If the file sample rate is 96 kHz and the soundcard sample rate is 48 kHz, then normal-speed playback is to move forward exactly 2 samples in the file for every output sample. (If you play back at 1:1, then the file will sound slower at the lower soundcard sample rate.)

This was one of the big reasons for me to make [soundfiler2] in my abstraction set. It calculates file_sr / system_sr and saves this in a value object named after the ID+"scale". If you multiply the playback rate by this scaling factor, then the file should sound correct at any system sample rate.
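The arithmetic behind that scaling factor can be sketched in a few lines of Python. This is only an illustration of file_sr / system_sr and rate * scale, not the [soundfiler2] abstraction itself, and the function names are made up for the example.

```python
# Sketch: how far to advance in the file for each output sample.
# Function names are hypothetical; only the arithmetic comes from the post.

def scale_factor(file_sr: float, system_sr: float) -> float:
    """Sample rate scaling factor: file_sr / system_sr."""
    return file_sr / system_sr

def sample_increment(rate: float, file_sr: float, system_sr: float) -> float:
    """File samples to move per output sample at the given playback rate."""
    return rate * scale_factor(file_sr, system_sr)

print(sample_increment(1.0, 96000.0, 96000.0))  # 1.0
print(sample_increment(1.0, 96000.0, 48000.0))  # 2.0
```

With a varying rate (the modulator above), the increment simply changes from sample to sample while the scale stays fixed for a given file and soundcard.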

(BTW you would have the same issue in SuperCollider: PlayBuf.ar(1, bufnum, rate: 1) will sound different depending on the hardware sample rate, but PlayBuf.ar(1, bufnum, rate: BufRateScale.kr(bufnum)) would sound the same, except maybe for aliasing when downsampling.)

Your method "L inlet = rate * scale for sample increment", so is the rate always changing?

Yes -- variable-speed playback.

@jameslo "I'm sorry if I just did your student's homework" -- actually this isn't for my class -- independent project. There are still some students who do hard things just because it's fun to overcome challenges

hjh

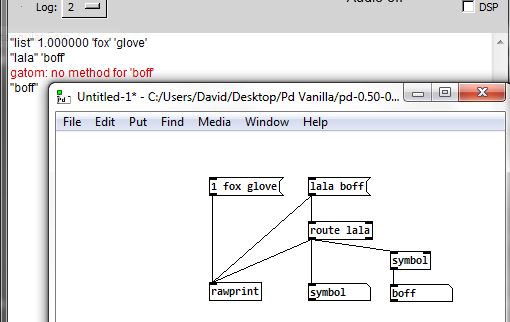

[route aSymbol] doesn't match tagged symbols?

@ingox said:

My opinion is that the whole selector system is dubious. [abc( should just be a symbol.

Yes. "all" is not special...... it is just that any single atom text is treated as text unless tagged as a symbol. A great source of confusion and changing that would be good.......

But it is not special....... it will trigger a bang through [route all] just like any other single atom text...... but will not trigger [sel all].

At the moment a single atom text will [route] and produce a bang........ but for it to trigger [select] it must first be tagged as a symbol........ crazy....

The other sources of problems can be that an automatically tagged list........ a list starting with a float..... can require a [list trim] before being passed on...... although for the user there is no indication that the message has had the list tag added.

And that a list of two symbols although routed correctly loses the symbol tag for the second atom when passing through [route] because it has become a singleton and reverted to text.... ...... That issue would also be addressed by your suggestion.

So yes....... a singleton text should be a symbol....... and can still be a selector that will trigger a bang.

Consistency between objects needs addressing and the selector system would be fixed I think.

And single atom text being a symbol might be all that is required, if any single atom is treated as a selector in the same way as any first atom is currently and [list] is made consistent between atom types.

We have had this discussion previously...... https://forum.pdpatchrepo.info/topic/12794/route-list-vs-array-problem/15

But nothing can actually be done without a serious plan....... we are wasting our time except when we document these anomalies.

The elephant in the room is the millions of patches already in existence.

If we can work out how to make the changes we would like....... without any changes to those existing patches being necessary..... then we will have a proposal for the list....

That might be possible........ but I think that the current inconsistency of the triggering of a bang will put a spanner in the works.

So maybe only alternative objects..... [routeNEW] [selectNEW] etc. would be possible while continuing with the old message system..

David.

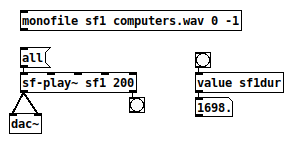

Properly usable soundfile playback without silly gotchas

I think I finally got sound file playback abstracted to the point where it's actually usable.

- [monofile id path startframe numframes] or [stereofile ...]:

  - Automatically creates 1 or 2 arrays.

  - Loads the file.

  - Sets up 5 [value] variables:

    - idframes

    - iddur (in ms -- automatically compensates if the disk file sample rate doesn't match the hardware sample rate)

    - idsr -- disk file sample rate

    - idsr001 -- sample rate * 0.001 = samples / ms (useful in many places in Pd)

    - idscale -- file SR / system SR

- [sf-play~] / [sf-play2~] uses the table(s) defined in [monofile] / [stereofile] and uses the [value] objects to drive a [cyclone/play~] in a sensible way. (E.g., play~ help says that times are given in milliseconds, but that's true only if the file sample rate matches the audio system -- my abstraction automatically multiplies by the sample rate scale variable, so you really can just specify time in milliseconds and not worry about it.)
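As a rough illustration of the five values and of the millisecond correction, here is a Python sketch. It is not the abstraction's code: the function names are invented, the duration formula is my reading of "compensates", and it assumes the player converts milliseconds to frames at the system sample rate, as the note about play~ suggests.

```python
# Sketch of the per-file values and the ms correction (names hypothetical).

def file_values(num_frames: int, file_sr: float, system_sr: float) -> dict:
    return {
        "frames": num_frames,
        "dur": num_frames / file_sr * 1000.0,  # real duration in ms (assumed formula)
        "sr": file_sr,                         # disk file sample rate
        "sr001": file_sr * 0.001,              # samples per ms
        "scale": file_sr / system_sr,          # file SR / system SR
    }

def player_time_ms(real_ms: float, scale: float) -> float:
    """Time to hand to a player that assumes the system sample rate."""
    return real_ms * scale

vals = file_values(96000, 96000.0, 48000.0)
print(vals["dur"])                            # 1000.0
print(player_time_ms(500.0, vals["scale"]))   # 1000.0
```

In the example, 500 ms of real time in a 96 kHz file is 48000 frames, which a player counting at 48 kHz reaches only when told 1000 ms.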

I guess I might catch some heat for depending on cyclone, but 1/ cyclone is really indispensable and 2/ I've spent enough time on this and I don't want to rebuild a buffer player that's capable of crossfade looping when a fine one already exists.

https://github.com/jamshark70/hjh-abs with a few other miscellaneous little toys.

2021-09-19: Updated to fix a bug with looping.

hjh

[route aSymbol] doesn't match tagged symbols?

@ddw_music The old extended route-help explained the 3 modes for [route]...... route-help.pd in the "inlets" section.

And there was a bit more info in all_about_route.pd especially that the list header is stripped and so [route list] followed by [route list] will fail for the second.

But mixing data types in a single [route]..... i.e. [route 5 woof] will not work as expected..... so unsupported.

David.

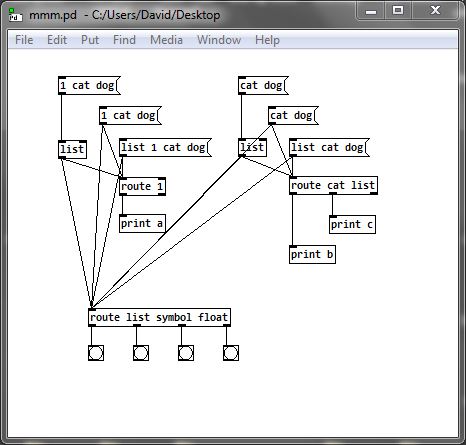

List Comparison

@buzzelljason Yes. Well. Everything sent by a control rate object is a message.

The messages can be floats or symbols or lists.

A list comprises floats or symbols or a mixture of the two.

Items (atoms) in a list are separated by spaces.

It gets complicated.

If the first atom in the list is a float then the "list" tag is automatically applied, and then is silently ignored and dropped as it passes through the next object (except for [route list symbol float].......)

If the first atom in the list is a symbol then that symbol becomes the tag.... the list header..... and the list can be routed by that tag. But if the list passes through a [list] object then "list" is added (prepended) as the header. That is why [list trim] is used almost everywhere after a [list] object...... to remove the header and then be able to [route] by the original tag (if it was a symbol).
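The tagging rules in the last two paragraphs can be modelled roughly as follows. This is a simplified Python sketch, not Pd source code; the function names are invented, and it ignores cases such as explicit "symbol" messages.

```python
# Simplified model of Pd message selectors ("tags"). Names are hypothetical.

def to_atom(token):
    """Atoms are floats when they parse as numbers, otherwise symbols."""
    try:
        return float(token)
    except ValueError:
        return token

def parse_message(text):
    """Return (selector, atoms) the way a message box would send it."""
    atoms = [to_atom(t) for t in text.split()]
    if not atoms:
        return ("bang", [])
    if isinstance(atoms[0], float):
        # Leading float: the "float" or "list" tag is applied automatically.
        return ("float" if len(atoms) == 1 else "list", atoms)
    return (atoms[0], atoms[1:])  # leading symbol becomes the tag

def list_object(msg):
    """Passing through [list]: 'list' is prepended as the header."""
    selector, atoms = msg
    if selector in ("list", "float", "bang"):
        return ("list", atoms)
    return ("list", [selector] + atoms)

def list_trim(msg):
    """[list trim]: drop the 'list' header if a symbol can take its place."""
    selector, atoms = msg
    if selector == "list" and atoms and isinstance(atoms[0], str):
        return (atoms[0], atoms[1:])
    return msg

def route(msg, name):
    """[route name]: return the remaining atoms on a match, else None."""
    selector, atoms = msg
    return atoms if selector == name else None

m = parse_message("device 0 Built-in")
wrapped = list_object(m)
print(route(wrapped, "device"))             # None
print(route(list_trim(wrapped), "device"))  # [0.0, 'Built-in']
```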

HINT.. If you install the Zexy library then [rawprint] shows you whether an atom is a "tag", 'symbol' or float....

Trying to work with vanilla OSC objects can be just guesswork without [rawprint].

mmm.pd

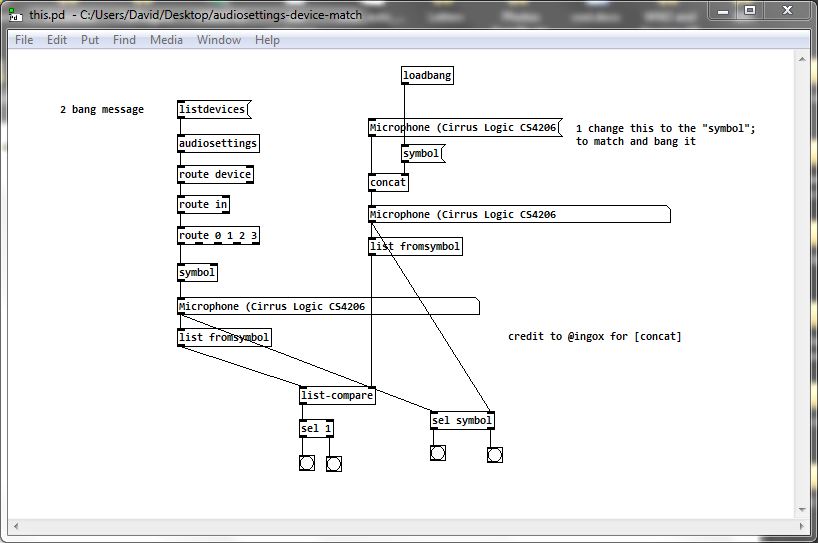

The messages arrive from [audiosettings] as a list which is a mixture of floats and symbols........ but the first atom is a symbol and there is no "list" tag.

[listdevices( returns a series of messages..... they are lists...... with the first atom "device"

That is why "device"....... a symbol.... and "0" or "1" can be routed. "1" could not be routed by [route 0 1] unless it is a float and "device" could not be routed by [route device] unless it is a symbol.

The device name is a symbol........ it can only be sent on in one hit because it is a symbol...... if it were a list then each part would be sent separately as a space normally "means" "next item".

But once it has passed through [route 0 1] it has lost its "symbol" tag. That can be put back by passing it through [symbol].

So the problem for [list-compare] is to create a symbol that is identical. Not easy as a message with component symbols will be treated as a list. Fortunately @ingox gave us a vanilla solution.... [concat].

And then of course to turn them both into lists for the comparison.

This will work........ this.zip

It can probably be simplified (and certainly @ingox will shortly post a more elegant solution....).

David.

route without initial parameter and symbols

@JMC64 Yes, you have to give it an argument so that it is defined as a [route float] or [route symbol] as it is created. It defaults to float if no argument is given.

Well done... solving the problem....... on your own in vanilla.

The help for [route] in Pd extended documented this...... and much more..... as [route] can do more than you might think and has some other quirks.....

More here...... route.zip

David.

Control MIDI sampler rate from -2x to 2x with CC messages

Hello.

I need help implementing logic.

Let me explain :

I have a MIDI sampler and I would like to take control of the rate (speed/pitch) via MIDI CC messages.

The rate goes from -2x (reverse) to 2x (forward). It takes CC values from 0-127.

With a value of 64, the rate is at 0, so the sampler is more or less paused

With a value of 127, the sampler plays forward 2x the original rate

With a value of 0, it plays reverse 2x the original rate

And with a value of 95, it plays forward at the original rate (1x)

Here’s what I’m trying to achieve :

I would like to be able to play with the rate and, at some point, momentarily jump to the opposite side at the same rate through a CC message; for example, from 1x to -1x or from 0.5x to -0.5x. I know that going from 1x to -1x needs the CC values 95 (1x) and 31 (-1x). I found that by subtracting 64 from the received CC value, I get the value that corresponds to the reverse rate on the sampler. But I get confused when I send values below 64, because then I get negative values. It would be great if the logic were reversed when I go under 64, so that I would get values over 64. I don't know if my explanations are clear.

Thanks a lot for your help.

Regards.
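One way to think about the jump described above is to convert the CC value to a rate, negate the rate, and convert back. The Python sketch below assumes a piecewise-linear mapping through the three points given in the post (0 = -2x, 64 = 0, 127 = 2x). That mapping and the function names are assumptions, so the exact CC values may differ by one or two from what a real sampler reports.

```python
# Sketch: mirror a CC value to the opposite playback direction.
# Assumes a piecewise-linear CC-to-rate curve; names are hypothetical.

def cc_to_rate(cc: int) -> float:
    """Map 0..64 to -2x..0 and 64..127 to 0..2x."""
    if cc <= 64:
        return (cc - 64) / 32.0
    return (cc - 64) * 2.0 / 63.0

def rate_to_cc(rate: float) -> int:
    """Inverse of cc_to_rate, rounded and clamped to 0..127."""
    if rate <= 0:
        cc = 64 + rate * 32.0
    else:
        cc = 64 + rate * 63.0 / 2.0
    return max(0, min(127, round(cc)))

def flip_cc(cc: int) -> int:
    """CC value that plays at the same speed in the other direction."""
    return rate_to_cc(-cc_to_rate(cc))

print(flip_cc(64))   # 64
print(flip_cc(127))  # 0
print(flip_cc(0))    # 127
```

Because the same function handles values on both sides of 64, no separate case is needed for input below the centre. In Pd the same arithmetic could be written in an [expr] object.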

Shared references to stateful objects?

This is how I see it:

| Functional programming | Pure Data |
|---|---|
| Function | Abstraction |
| Define function in code | Create abstraction as separate file |
| Call function | Send a message to left inlet of the abstraction |
| Arguments | Input into the inlets and creation arguments |
| Return values | Output of the outlets |
| Local variables | $0 in object names |
| Recursive function | Manual recursion by using [until] outside of the abstraction |

How to use OSC namespaces in MobMuPlat?

Is it possible to parse the namespace of OSC messages in MobMuPlat?

MobMuPlat handles OSC communication in a wrapper patch that provides a message called 'fromNetwork'.

Say the OSC message is: /level1/level2/levelN format value

It has the format: [path format value]. The path here uses a namespace that subdivides according to levels.

With oscparse, the namespace in the path could be parsed like this :

netreceive -> oscparse -> list trim -> route level1 -> route level2 ... route levelN

https://forum.pdpatchrepo.info/topic/9856/oscformat-and-oscparse-integer-not-routing-as-expected/8

With MobMuPlat's 'fromNetwork', is it still possible to "route" the value of the message according to the namespace? Since 'fromNetwork' contains a list where the first argument is the entire path, I can only split the first argument from the remaining arguments:

receive fromNetwork -> route list -> route /level1/level2/levelN

But I can't further split within the path itself to divide level1/level2/levelN:

receive fromNetwork -> route list -> route level1 -> route level2 -> route levelN.

Is there a way of doing this?
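To make the goal concrete, the splitting being asked for looks like this in Python. It is only a sketch of the desired transformation, not a MobMuPlat or Pd solution, and the function names are invented.

```python
# Sketch: turn "/level1/level2/levelN format value" style messages into a
# flat list whose leading items can be matched one level at a time.

def split_osc_path(path: str) -> list:
    """Split an OSC address into its levels, dropping empty parts."""
    return [part for part in path.split("/") if part]

def expand_message(message: list) -> list:
    """Replace the leading path atom with one atom per level."""
    path, *rest = message
    return split_osc_path(path) + rest

msg = ["/level1/level2/levelN", "f", 0.5]
print(expand_message(msg))  # ['level1', 'level2', 'levelN', 'f', 0.5]
```

Once the path is a sequence of separate atoms, a chain like route level1 -> route level2 -> route levelN has something to match at each step.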