@porres Well, the [markov] object takes source material and generates something like an implicit probability transition matrix from it. If you already have a probability matrix, you only need a starting point and can play the markov chains immediately. A much simpler abstraction can do that. Mixing the two approaches seems complicated, since [markov] follows a different philosophy, i.e. it allows adding more source material later in the process.
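To illustrate the "matrix plus starting point" case outside of Pd, here is a minimal Python sketch. The note names, the probabilities and the `play_chain` function are made up for illustration and have nothing to do with how [markov] works internally.

```python
import random

# Hypothetical first-order transition matrix:
# matrix[current][next] = probability of moving from current to next.
matrix = {
    "C": {"C": 0.1, "E": 0.6, "G": 0.3},
    "E": {"C": 0.5, "E": 0.0, "G": 0.5},
    "G": {"C": 0.7, "E": 0.3, "G": 0.0},
}

def play_chain(matrix, start, length, rng=random):
    """Walk the chain from `start` and return `length` states."""
    state = start
    out = [state]
    for _ in range(length - 1):
        row = matrix[state]
        # Weighted random pick of the next state from the current row.
        state = rng.choices(list(row.keys()), weights=list(row.values()), k=1)[0]
        out.append(state)
    return out

print(play_chain(matrix, "C", 8))
```

Nothing is learned here: the matrix is given, so the player only needs the current state and a weighted random choice.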

It could be interesting to have two separate abstractions: one that generates a probability matrix from source material, and another that plays markov chains from that matrix. That way you have both approaches and can combine them.

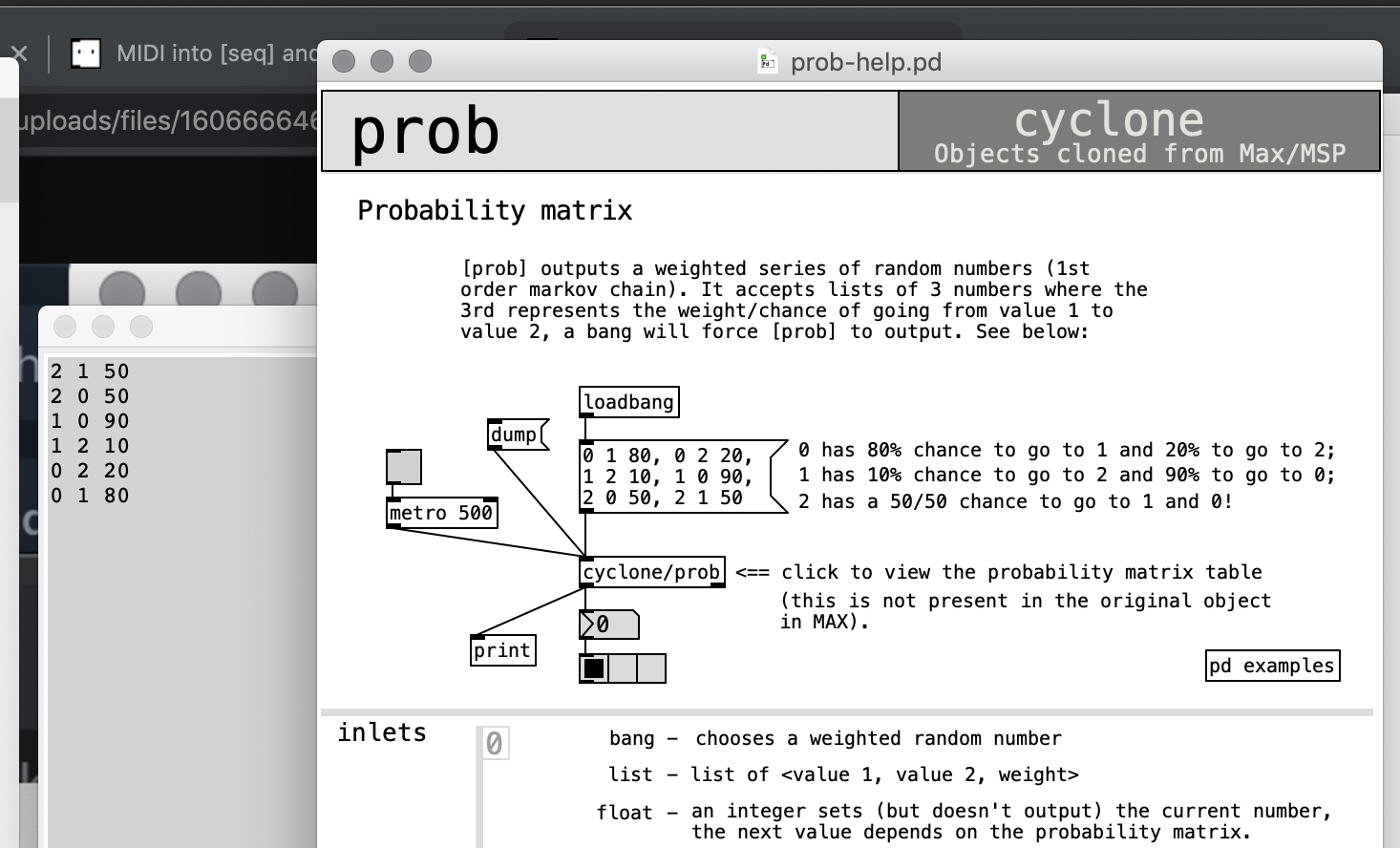

This would also be similar to the combination of [anal] and [prob], but as a generalized approach to have markov chains of arbitrary length.
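As a rough sketch of that split, assuming the "matrix" is stored as successor counts per context (my choice for illustration, not necessarily what [markov], [anal] or [prob] do), the two parts could look like this in Python. `build_table`, `play` and the example note list are hypothetical.

```python
import random
from collections import Counter, defaultdict

def build_table(source, order):
    """Part 1: count which item follows each run of `order` items."""
    table = defaultdict(Counter)
    for i in range(len(source) - order):
        context = tuple(source[i:i + order])
        table[context][source[i + order]] += 1
    return table

def play(table, start, length, rng=random):
    """Part 2: generate up to `length` items, starting from context `start`."""
    out = list(start)
    order = len(start)
    while len(out) < length:
        followers = table.get(tuple(out[-order:]))
        if not followers:  # dead end: this context has no recorded successor
            break
        items = list(followers.keys())
        counts = list(followers.values())
        # Raw counts work directly as weights, no normalization needed.
        out.append(rng.choices(items, weights=counts, k=1)[0])
    return out

source = [60, 62, 64, 62, 60, 62, 64, 65, 64, 62, 60]
table = build_table(source, order=2)
print(play(table, start=(60, 62), length=12))
```

Keeping counts instead of normalized probabilities means more source material can be added later by simply counting further into the same table, and a hand-written table can be fed to `play` without ever calling `build_table`.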

The real question is whether writing out a complex probability matrix for higher-order markov chains is a realistic scenario. [markov] is built as a basic machine learning tool.

nice way to make multidimensional arrays
