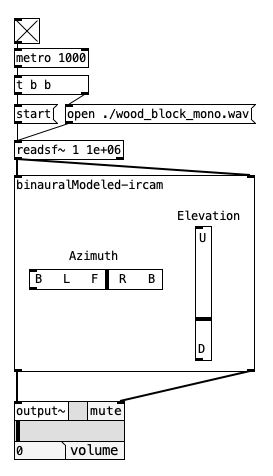

An efficient binaural spatializer (azimuth, elevation)

Thank you for sharing this.

It's nice to have a full Vanilla binaural spatializer!

After a short comparison with the online demo at https://ircam-rnd.github.io/binauralModeled/examples/, I find that the Pd implementation reacts a bit differently.

An azimuth of -90 in the IRCAM demo puts the sound "far away" from the ear.

In the Pd implementation the effect is less pronounced: the sound seems closer, with less sense of space.

(Tested with Azimuth: -90, Elevation: 0 in both cases, at 44.1 kHz, with the breakbeat.wav example in double mono, Pd 0.53-1)

Another difference: with an elevation of 90 (max), we can't perceive any difference when the azimuth is changed.

I guess that's logical, but in the original you can still hear a lot of difference when changing the azimuth.

About performance: what about a "bake" button that would find the datapoint and switch off the calculations?

In any case thank you for this, that's very helpful already!

An efficient binaural spatializer (azimuth, elevation)

An efficient binaural spatializer

The "binauralModeled-ircam" object is a pure-vanilla implementation of the binaural model created at IRCAM. An example of its capabilities can be found at this page.

The object is found at my repository: https://github.com/andresbrocco/binauralModeled-pd

Main concept

Basically, it applies an ITD (interaural time difference) and approximates the HRTF (head-related transfer function) with a series of biquad filters, whose coefficients are available here.
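
To make the "series of biquad filters" idea concrete, here is a minimal sketch in C of a cascade of second-order sections. It is not taken from the patch: the struct and function names are hypothetical, the Direct Form I structure is an assumption, and the coefficients would have to come from the published set. The ITD part (a small delay between the two ears) is not shown.

```c
#include <stddef.h>

/* One second-order section (biquad), Direct Form I.
 * Coefficients are assumed to be normalized so that a0 == 1. */
typedef struct {
    float b0, b1, b2;   /* feedforward coefficients */
    float a1, a2;       /* feedback coefficients */
    float x1, x2;       /* previous two inputs */
    float y1, y2;       /* previous two outputs */
} biquad_t;

/* Filter one sample through a single section. */
static float biquad_tick(biquad_t *f, float x)
{
    float y = f->b0 * x + f->b1 * f->x1 + f->b2 * f->x2
            - f->a1 * f->y1 - f->a2 * f->y2;
    f->x2 = f->x1; f->x1 = x;
    f->y2 = f->y1; f->y1 = y;
    return y;
}

/* Filter one sample through n sections connected in series. */
static float cascade_tick(biquad_t *sections, size_t n, float x)
{
    for (size_t i = 0; i < n; i++)
        x = biquad_tick(&sections[i], x);
    return x;
}
```

Each ear would get its own cascade, with its own state, fed from the same mono input.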

Sample rate limitation: those coefficients work for audio at 44100 Hz only!

Space interpolation

There is no interpolation in space (between datapoints): the set of HRTF coefficients used is that of the datapoint closest to the given azimuth and elevation (by Euclidean distance).
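
The nearest-datapoint lookup can be sketched in C as a plain linear search. This is only an illustration under assumptions: the `hrtf_point_t` layout and the function name are hypothetical, and azimuth and elevation are treated as flat coordinates in degrees, without any wrap-around handling.

```c
#include <stddef.h>

/* Hypothetical dataset entry: a measured position in degrees. */
typedef struct {
    float azimuth;
    float elevation;
} hrtf_point_t;

/* Return the index of the entry closest to (az, el), or -1 if the set is empty.
 * Squared distances are compared; taking the square root would not change the order. */
static int nearest_point(const hrtf_point_t *pts, size_t n, float az, float el)
{
    int best = -1;
    float best_d2 = 0.0f;
    for (size_t i = 0; i < n; i++) {
        float da = pts[i].azimuth - az;
        float de = pts[i].elevation - el;
        float d2 = da * da + de * de;
        if (best < 0 || d2 < best_d2) {
            best = (int)i;
            best_d2 = d2;
        }
    }
    return best;
}
```

The cost grows linearly with the number of datapoints, which is why a request that already matches a datapoint exactly is cheaper to serve.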

Time interpolation

There is interpolation in time (so that a moving source sounds smooth): two binauralModels run concurrently, and the transition is made by alternating which one to use (previous/current). That transition takes 20 ms and is triggered whenever a new location is received.
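
As a rough illustration of the 20 ms transition, here is a C sketch of a per-sample crossfade between the output of the "previous" and the "current" model. The names are hypothetical and the linear ramp is an assumption; the patch may use a different fade curve.

```c
#include <stddef.h>

#define SAMPLE_RATE 44100
#define FADE_SAMPLES (SAMPLE_RATE * 20 / 1000)   /* 20 ms = 882 samples */

/* Hypothetical crossfade state: counts samples since the last position change. */
typedef struct {
    size_t pos;   /* 0 .. FADE_SAMPLES */
} xfade_t;

/* Call when a new location arrives: restart the fade. */
static void xfade_start(xfade_t *x) { x->pos = 0; }

/* Mix one sample from the previous model with one from the current model.
 * The weight of the current model ramps linearly from 0 to 1 and then stays at 1. */
static float xfade_tick(xfade_t *x, float prev, float cur)
{
    float w = (float)x->pos / (float)FADE_SAMPLES;
    if (x->pos < FADE_SAMPLES)
        x->pos++;
    return (1.0f - w) * prev + w * cur;
}
```

Once the fade has finished, the two models swap roles, so the next location change fades away from whatever is currently audible.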

Interface

You can control the azimuth and elevation through the interface, or pass them as arguments to the first inlet.

Performance

Note: If the azimuth and elevation do not exactly match the coordinates of a point in the HRTF dataset, the object performs a search by distance, which is not optimal. Therefore, if this object is embedded in a higher-level application and you are concerned about performance, you should implement a k-d tree search to find the exact datapoint before passing it to the "binauralModeled-ircam" object.

Ah, maybe this statement is obvious, but: it only works with headphones!

[vstplugin~] 0.6.0 - final release

Hi everyone,

I have just released [vstplugin~] v0.6.0! Binaries are available on Deken (search for "vstplugin~").

The most important new feature is support for multichannel signals: a single input or output signal can now contain multiple channels. This is particularly handy for spatialization plugins with many channels, such as higher-order ambisonics. Not only does it save you from drawing lots of patch cords, it also allows you to change the channel count dynamically! For example, you can now freely change the ambisonics order without rewriting your patch.

IMPORTANT: previous versions would hide certain (non-automatable) parameters in VST3 plugins. This has been fixed, but as a consequence, parameter indices might have changed. [vstplugin~] prints a warning for every plugin that is affected by this change!

Here is the full changelog: https://git.iem.at/pd/vstplugin/-/releases/v0.6.0

Please report any issues at https://git.iem.at/pd/vstplugin/-/issues

Have fun!

[vstplugin~] 0.6-test1

Hi,

here's a pre-release for [vstplugin~] v0.6 - an external for running VST2 and VST3 plugins inside Pd.

Binaries for all platforms are available on Deken (search for "vstplugin~").

The most important new feature is multichannel support (requires at least Pd 0.54)!

This is particularly handy for spatialization plugins with many channels. For example, a single signal may now contain a full 64-channel ambisonics bus. With the IEM plugins (https://plugins.iem.at/) you can even change the channel count dynamically and the plugins will automatically adjust the ambisonics order.

NOTE: previous versions would hide certain (non-automatable) parameters in VST3 plugins. This has been fixed, but as a consequence parameter indexes might have changed. [vstplugin~] prints a warning for every plugin that is affected by this change!

Here is the full changelog: https://git.iem.at/pd/vstplugin/-/releases/v0.6-test1

As always, please test and report any issues to https://git.iem.at/pd/vstplugin/-/issues.

Christof

Multichannel Sound Spatialization

Also note, if you use PlugData, then you can insert Pd directly into Ableton tracks as VST plugins. At that point, it's similar to a Max4Live device -- if you have a Max4Live license, you may as well use that, but if not, PlugData would offer a similar workflow and should be more convenient to integrate than standalone Pd.

Otherwise Obineg is right: the forum can help you with implementation, but it can't decide which spatialization technique best suits your needs. You'll have to decide that first.

hjh

[Pure-data externals] Let me know how to create a delay object using C programming.

Thank you for pointing me to the GitHub repository. I will try to build my spatializer again using a ring buffer.

[Pure-data externals] Let me know how to create a delay object using C programming.

Hello world. I want to know how to create a delay object in C. I'm building my own spatializer system in Pure Data, implemented as Pd externals, but I don't know how to implement a delay in C. Pure Data limits the block size to 64 samples, so I tried to produce the delay with a buffer filled with zeros, but it didn't work. If you have sample code, could you show it to me? Please help.
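
For reference, a common way to get a delay longer than one 64-sample block is a ring buffer that persists between blocks. Below is a minimal sketch in plain C; it is not tied to the Pd API, all names are hypothetical, and the buffer size is an arbitrary choice. In an external, the state would live in the object's struct and `delay_process` would be called from the perform routine once per block.

```c
#include <stddef.h>

#define DELAY_MAX 4096   /* buffer length in samples; an arbitrary choice */

/* Hypothetical delay-line state. It must outlive a single block,
 * so it cannot be a local variable of the per-block routine. */
typedef struct {
    float buf[DELAY_MAX];
    size_t write;        /* next write position */
} delay_t;

static void delay_init(delay_t *d)
{
    for (size_t i = 0; i < DELAY_MAX; i++)
        d->buf[i] = 0.0f;
    d->write = 0;
}

/* Process n samples (e.g. one block), delaying by `delay` samples,
 * with 0 <= delay < DELAY_MAX. in and out may point to the same memory. */
static void delay_process(delay_t *d, const float *in, float *out,
                          size_t n, size_t delay)
{
    for (size_t i = 0; i < n; i++) {
        /* Store the input first, so a delay of 0 and in-place use both work. */
        d->buf[d->write] = in[i];
        size_t read = (d->write + DELAY_MAX - delay) % DELAY_MAX;
        out[i] = d->buf[read];
        d->write = (d->write + 1) % DELAY_MAX;
    }
}
```

Because the write position and the buffer contents carry over from one call to the next, a delay of 100 samples simply spans two blocks without any special handling.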

Shared references to stateful objects?

So, to sum up the strategies presented so far:

- Global storage -- Pro: Super-easy for numbers (with [value]), fairly easy for other entities with [text] as storage. Con: Potential for name collision, but that can be avoided.

  - Trigger the sequencer/counter/whatever and save the value in a global location.

  - The caller reads from the global location.

- Changing [send] target -- Pro: Caller directly specifies the return point. Con: A bit "magical."

  - Caller sends the request with a [receive] name tag.

  - The pseudo-function updates a [send] with the name, and passes the data there.

- Abstraction, globally-stored index -- Pro: Call and return are all in the same place. Con: Global storage name is hidden in the abstraction -- be careful of collision. Also, in the provided example, redundant memory usage (three copies of the [text], all with the same contents).

  - Abstraction increments a counter, which is shared across all instances.

  - Gets from [text] at that index and returns directly.

It seems to me that any or all of these could be useful in different cases -- the globally-stored index might be perfect in one case, while a name-switching [send] might be better in another. (Tbh I don't see this as "clunky" at all -- if you have widely spatially disparate references to the same source of data, as may easily be the case in a large and complex program, a simulated function call might be the cleanest way to represent it. Also, it seems to me that in all of the "shared storage" approaches, there is some risk of name collision, but this risk essentially doesn't exist with the send/receive approach, because the caller is completely in control of the location where the data will be returned. Oh, and I'm seeing a bit late that this is one of the options jameslo suggested.)

As you've probably gathered, I'm much more comfortable with text languages. (With apologies in advance... tbh I think in most cases, you can go farther, faster, with text languages. With the exception of slider --> number box, pretty much the only thing I can think of that Pd can do better than SC is [block~], which SC simply doesn't have. Especially when complexity scales up, I find text to be more concise and less troublesome. E.g., if the synthesis code is structured correctly, I can create 3, or 10, or 100 parallel oscillators just by changing one number in the code, and without saving extra files to use as [clone] abstractions. I would love to see some counterexamples here! What am I missing? Being proven wrong means that I learned something.)

WRT Pd, after about a year of use in a couple of courses, I feel like I'm starting to get a bit better at it. For me, the most important point of this thread is to learn dataflow vocabulary for the text-programming concept of function calls. This discussion has been really valuable for me.

hjh

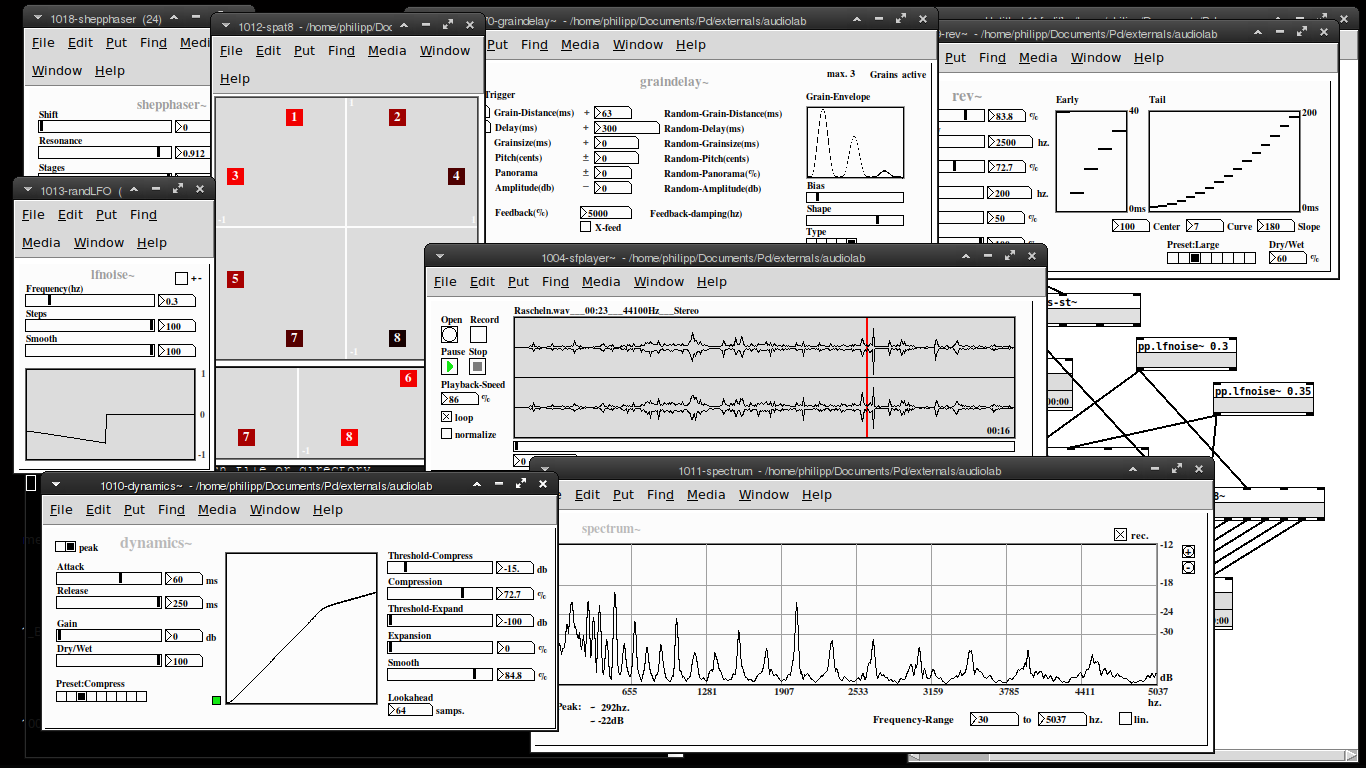

Audiolab is now available on deken!

My "audiolab" abstraction library is now available on deken. You'll need Pd-0.50 or later to run this.

Please report any bugs on github: https://github.com/solipd/AudioLab

here is a picture to draw you in (:

Edit:

list of objects:

Soundfile processing

pp.sfplayer~ ... variable-speed soundfile player

pp.grainer~ ... granular sampler

pp.fft-stretch~ ... pvoc time stretching & pitch shifting

Spatialization

pp.pan~ ... constant power stereo panning

pp.midside~ ... mid-side panning

pp.spat8~ ... 8-channel distance based amplitude panning

pp.doppler~ ... doppler effect, damping & amplitude modulation

pp.dopplerxy~ ... xy doppler effect

Effects

pp.freqshift~ ... ssb frequency shifter

pp.pitchshift~ ... pitch shifter

pp.eqfilter~ ... eq-filter (lowpass, highpass, resonant, bandpass, notch, peaking, lowshelf, highshelf or allpass)

pp.vcfilter~ ... signal controlled filter (lowpass, highpass, resonant)

pp.clop~ ... experimental comb-lop-filter

pp.ladder~ ... moogish filter

pp.dynamics~ ... compressor / expander

pp.env~ ... simple envelope follower

pp.graindelay~ ... granular delay

pp.rev~ ... fdn-reverberator based on rev3~

pp.twisted-delays~ ... multipurpose twisted delay-thing

pp.shepphaser~ ... shepard tone-like phaser effect

pp.echo~ ... "analog" delay

Spectral processing

pp.fft-block~ ... audio block delay

pp.fft-split~ ... spectral splitter

pp.fft-gate~ ... spectral gate

pp.fft-pitchshift~ ... pvoc based pitchshifter

pp.fft-timbre~ ... spectral bin-reordering

pp.fft-partconv~ ... partitioned low latency convolution

pp.fft-freeze~ ... spectral freezer

Misc.

pp.in~ ... mic. input

pp.out~ ... stereo output & soundfile recorder

pp.out-8~ ... 8 channel output & soundfile recorder

pp.sdel~ ... samplewise delay

pp.lfnoise~ ... low frequency noise generator

pp.spectrum~ ... spectrum analyser

pp.xycurve

poorman's 360 multi-channel pan

@whale-av Thanks a lot! I receive this message (about [audience~]):

audience~.pd_darwin: mach-o, but wrong architecture

--maybe they're too old and built for an old OSX architecture? I just put audience~.pd_darwin and audience~-help.pd in the same folder as my patch.

grampipan and grambidec by @ricky sound just perfect --but the links are broken, and I couldn't find anything on the author's website.

HOA looks just great, but I think nobody can run it on Mac nowadays? Does anybody know an alternative?

I can't believe Pure Data doesn't have a proper multi-channel / ambisonic / spatialization library --time to consider Max/MSP? that would suck, lots of work in PD already for this 8-ch installation :'-(

Thanks for any hint, guys!