-

zigmhount

posted in technical issues

Hello,

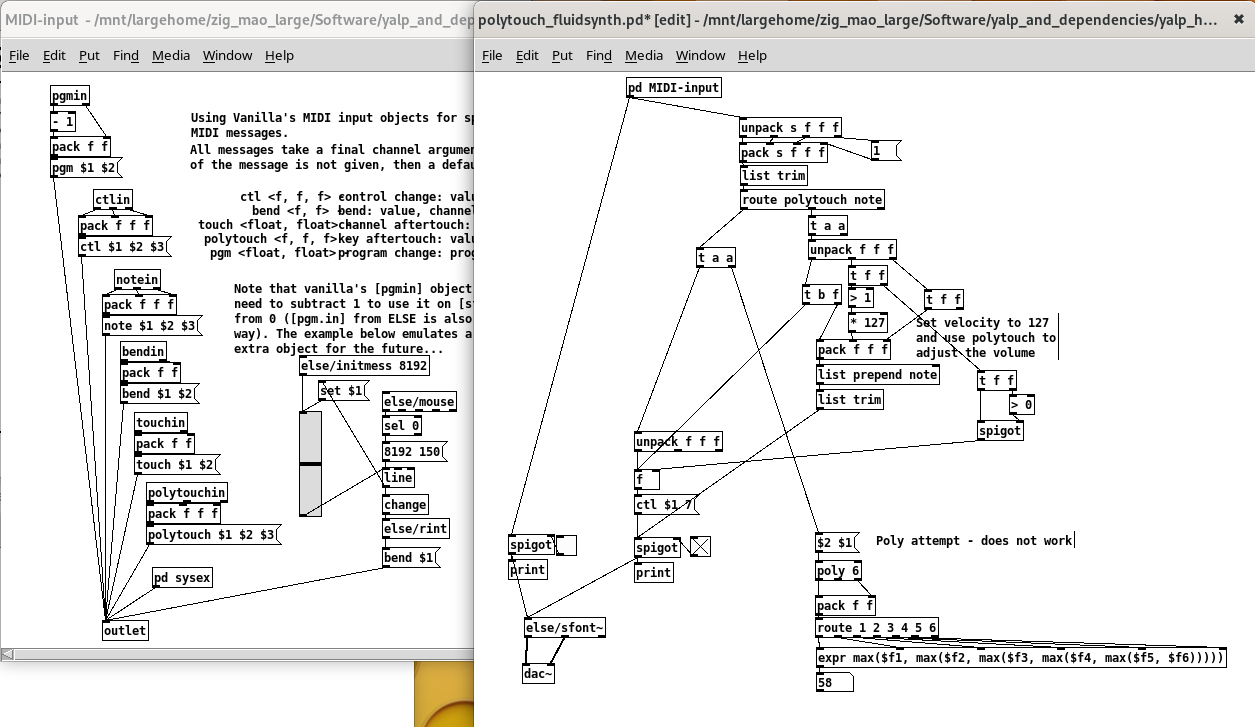

I am using sf2 soundfonts with [else/sfont~], with a launchpad and a keyboard. Both the keyboard and the launchpad send the usual note messages, but the launchpad also sends polytouch messages ("key aftertouch": value, key, channel) when keeping pressure on the pad.

According to my tests (ELSE v1.0.0_RC14), sfont~ does not seem to process the polytouch messages, i.e. the volume of the note played does not change with the pressure. Is this a limitation of fluidsynth itself, or am I missing something?

I've tried to convert the polytouch messages into [ctl $1 7(, where CC 7 is the actual volume control of fluidsynth. It works when playing one note at a time, but when playing multiple notes $1 changes too much. I'm currently trying to use [poly] to use only the max value from all current notes with polytouch value > 0, not working yet ([poly] seems to struggle to output the full stream of values coming from polytouch).

Edit: I just found out about [else/voices], but it also does not output the full stream of polytouch values, only the first value after 0.

Alternatively, I guess I could maybe use [touch( instead of CC7, but I've never tried channel aftertouch yet.

Any other ideas?
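For what it's worth, the "max of all held pads" logic can be sketched outside Pd. The snippet below is only an illustration in Python: the class name and the explicit release method are made up for this example and are not part of ELSE or fluidsynth. It remembers the last pressure per key and returns the highest one still above 0, which is the value that would then be sent as CC 7.

```python
class PolytouchMax:
    """Track per-key pressure and report the hardest-pressed pad still held."""

    def __init__(self):
        # key number -> last polytouch value (only values > 0 are kept)
        self.pressure = {}

    def update(self, value, key):
        """Feed one polytouch message (value, key); return the current max, 0 if none."""
        if value > 0:
            self.pressure[key] = value
        else:
            self.pressure.pop(key, None)
        return max(self.pressure.values(), default=0)

    def release(self, key):
        """Call on note-off so a pad that never sent a final 0 is forgotten."""
        self.pressure.pop(key, None)
        return max(self.pressure.values(), default=0)


tracker = PolytouchMax()
print(tracker.update(40, 60))  # one pad held -> 40
print(tracker.update(90, 64))  # second pad pressed harder -> 90
print(tracker.update(0, 64))   # second pad released, first still held -> 40
```

The same idea in Pd would need one stored value per key (for example an array indexed by key number) rather than voice allocation, since every incoming value has to update the stored pressure, not only the first one.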

Thanks!

-

zigmhount

posted in technical issues

Thanks @oid for your reply. Actually I'm on Android; I didn't have much success with Cabbage for Android, and I believe I tried Csound on Android too. I can't really recall, but it must have been disappointing because it's not installed anymore.

Anyway, MobMuPlat was pretty cool to also design the UI with PD's objects. That was a year ago though, today I want to use it in a DAW (on Linux).

"the first thing I would do as a test is break it down into the three component sounds, finger strike, tine, and resonator and compare them individually, would help in identifying the problem."

I actually did this at the time, thanks for reminding me. I compared the signals between Pd and Csound in an analyzer for all members and combinations:

- Strike:
  - aNoise
  - aTone
- Tine:
  - aFundamental (I had to add [*~ 3000] to the signal, but could not figure out why...)
  - aBeat (also [*~ 3000])
  - aHarmonic
  - aReso
  - aHi
- Resonator: that's where it starts looking bad
  - aTone1 was not great
  - aTone2 was kinda ok
  - aTone3 was terrible

As far as I can recall and understand from these year-old screenshots (I should do it again), anything but the tonal sound (300-400Hz) was pretty much nonexistent, and the tweaks I tried in order to add some power below 300Hz and above 600Hz (a shelf built with [rzero~] and [rpole~]) led to strong harmonics, which cause the high-frequency sounds I hear when playing the patch I shared above. My conclusion at the time was thus that:

"Somehow the resonator's lpbutt and hpbutt let many high freq through in CSound compared to [butterworth3~] in Pd, don't know why, but I'll just continue with that for now:"

I'll try to investigate the Resonator again.
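One thing that might be worth ruling out is the filter order alone. The sketch below is not taken from either patch: it assumes ideal analog Butterworth low-pass responses (digital filters deviate from this near Nyquist) and a made-up 600 Hz cutoff, and simply prints how much a 2nd-order and a 3rd-order filter attenuate above the cutoff. Whether the Csound opcodes are 2nd-order and [butterworth3~] is 3rd-order is an assumption to check against the respective documentation.

```python
import math


def butterworth_lowpass_db(freq_hz, cutoff_hz, order):
    """Gain in dB of an ideal analog Butterworth low-pass: |H| = 1/sqrt(1 + (f/fc)^(2n))."""
    ratio = freq_hz / cutoff_hz
    magnitude = 1.0 / math.sqrt(1.0 + ratio ** (2 * order))
    return 20.0 * math.log10(magnitude)


cutoff = 600.0  # Hz, illustrative value only
for order in (2, 3):
    for freq in (1200.0, 2400.0, 4800.0):
        gain = butterworth_lowpass_db(freq, cutoff, order)
        print(f"order {order}, {freq:.0f} Hz: {gain:.1f} dB")
```

Each extra order adds roughly 6 dB per octave of roll-off, so a lower-order filter would indeed let noticeably more high frequencies through at the same cutoff.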

That said, your comment

"I have never had much luck converting csound stuff to pd even on fairly simple patches, never seem to sound as good and sometimes sound nothing alike despite my best efforts."

tends to motivate me to work instead on the Cabbage plugin version of the csd patch and try to improve its performance (right now it uses a full core of my CPU regardless of whether it plays notes). Csound forums, here I come!


-

zigmhount

posted in technical issues

Hi all,

I found a Csound patch synthesizing sounds for a handpan on this page and it sounds great.

I then wanted to interface it with my MIDI controller, so rather than learning Csound from scratch, I embedded the CSD synth into a Pd patch using the [csound6~] external. Works great.

The next step, however, was to use this Pd patch on my smartphone via MobMuPlat, or in a DAW using plugdata (my attempt at generating a VST with Cabbage showed very poor CPU performance). Both are based on libpd and don't support [csound6~].

So I've taken the approach of reproducing the Csound patch in vanilla Pd, which was very instructive. I've followed the same variable names, function names and logic, read a lot of documentation, and came up with the patch attached.

handpan_comparison_csd_pd.zip

However, the sound is not quite the same. There are a lot of high frequencies in the Pd version, which I couldn't quite eliminate even using up to 10 successive [lop~ 900] (I've left only one in the patch for now), but more generally I can't figure out which differences between the Csound patch and the Pd patch may have this effect. Note that I am an expert neither in Csound nor in filters; in particular I don't quite trust the Butterworth3 filter I hacked together. Can I even realistically expect that the same algorithmic logic in Csound and Pd would produce the exact same sound?

So I'm now asking here for help, in case some experts find the time to review what I've done in Pd, compare it with the Csound patch, and suggest improvements. I know it is quite tedious work but I am hopeful.

Thanks in advance to anyone who gives it a try!

(Note that this patch was embedded in a more complicated one including interfacing with the MIDI controller, definition of various scales, debugging/monitoring etc., which I've removed before sharing it here, but I may have forgotten some bits here and there. Let me know if it complicates the review!)

-

zigmhount

posted in technical issues

I've progressed with libpd instead :D

I know that this will probably make things more difficult with complicated patches and externals (I would have to compile libpd with support for these externals, if I understand correctly), but for simple patches it seems pretty straightforward. I haven't tested it properly yet, but this small script exposes virtual ALSA MIDI ports in a patchbay (which I can connect to anything), forwards notes and CCs to Pd, receives Pd's notes and CCs back, and forwards them out to ALSA:

    import mido  # uses the python-rtmidi backend (install python-rtmidi from pip)
    from functools import partial
    from pylibpd import *

    # match/case below needs Python 3.10+
    my_client_name = 'My Libpd Client'
    input_port_name = 'Input'
    output_port_name = 'Output'

    # Open Input and Output ports (Rtmidi)
    # Creating multiple virtual ports will create multiple clients with the same name.
    outport = mido.open_output(output_port_name, virtual=True, client_name=my_client_name)
    inport = mido.open_input(name=input_port_name, virtual=True, client_name=my_client_name)

    def pd_receive(*s):
        print('Printed by pd:', s)

    def midi_out(*s):
        # s is (kind, channel, number, value), as bound by partial() below
        match s[0]:
            case 'note':
                if s[3] > 0:
                    m = mido.Message('note_on', channel=s[1], note=s[2], velocity=s[3])
                else:
                    m = mido.Message('note_off', channel=s[1], note=s[2], velocity=s[3])
            case 'cc':
                m = mido.Message('control_change', channel=s[1], control=s[2], value=s[3])
            case _:
                return  # nothing to forward for other kinds
        outport.send(m)

    # Callbacks to receive messages from Pd:
    libpd_set_print_callback(pd_receive)
    libpd_set_midibyte_callback(pd_receive)
    libpd_set_noteon_callback(partial(midi_out, 'note'))
    libpd_set_controlchange_callback(partial(midi_out, 'cc'))

    libpd_open_patch('midi_processing.pd', '.')

    with inport:
        for msg in inport:
            match msg.type:
                case 'note_on':
                    libpd_noteon(msg.channel, msg.note, msg.velocity)
                case 'note_off':
                    libpd_noteon(msg.channel, msg.note, 0)
                case 'control_change':
                    # mido names the controller number "control"
                    libpd_controlchange(msg.channel, msg.control, msg.value)
                case 'sysex':
                    # Untested assumption: forward the raw bytes one by one with libpd_sysex
                    for byte in msg.bytes():
                        libpd_sysex(1, byte)

    libpd_release()

And that's it!
It's quick & dirty and it will get more complicated if I need to handle any type of MIDI messages, but notes and CCs should do for now.
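Extending the script to other message types could be table-driven. The sketch below is hypothetical and untested against pylibpd: it assumes pylibpd exposes the same functions as libpd's C API (libpd_programchange, libpd_pitchbend, libpd_aftertouch, libpd_polyaftertouch) and it uses mido's field names. The helper name to_libpd_call is invented for this example. It only builds the call as data, so it runs without Pd or a MIDI port.

```python
def to_libpd_call(msg_type, **fields):
    """Return (libpd function name, argument tuple) for a mido-style message, or None."""
    ch = fields.get('channel', 0)
    # Each builder is a lambda so that only the fields of the matching type are read.
    builders = {
        'note_on': lambda: ('libpd_noteon', (ch, fields['note'], fields['velocity'])),
        'note_off': lambda: ('libpd_noteon', (ch, fields['note'], 0)),
        'control_change': lambda: ('libpd_controlchange', (ch, fields['control'], fields['value'])),
        'program_change': lambda: ('libpd_programchange', (ch, fields['program'])),
        'pitchwheel': lambda: ('libpd_pitchbend', (ch, fields['pitch'])),
        'aftertouch': lambda: ('libpd_aftertouch', (ch, fields['value'])),
        'polytouch': lambda: ('libpd_polyaftertouch', (ch, fields['note'], fields['value'])),
    }
    builder = builders.get(msg_type)
    return builder() if builder else None


print(to_libpd_call('polytouch', channel=0, note=60, value=93))
print(to_libpd_call('clock'))  # unsupported types give None
```

In the real loop, the returned name would be looked up in the pylibpd module and called with the argument tuple; unsupported types are simply skipped.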

Looks like this in the patchbay:

-

zigmhount

posted in technical issues

Thanks both,

@oid I have indeed used scripts before, calling aconnect to list the clients, extract the name of the client/port I need to connect to Pd, and connect it to Pd's inputs and outputs. However, in the current case I'd very much like something more modular to connect in a patchbay.

@whale-av thanks, I didn't know about the mediasettings external, this will probably be useful in the future. Similar to @oid's suggestion though, this is also about connecting Pd with other MIDI clients from within the Pd patch.

I'll continue exploring the libpd route, but I'll have to learn a bit more about Python's module management to figure out how to properly install and use it.

Or maybe open the patch in plugdata inside a plugin host...

-

zigmhount

posted in technical issues

Hi all,

I've built 3 small Pd patches to fit in my workflow, e.g. to convert one MIDI controller's messages into OSC, one to light up the LEDs of another controller, one to control a pedal, etc.

If I open 2 instances of Pd, each with its own MIDI (or audio, for that matter) inputs and outputs, Alsa-Midi refers to them as follows:

    $ aconnect -l
    client 128: 'Pure Data' [type=user,pid=260121]
        0 'Pure Data Midi-In 1'
        1 'Pure Data Midi-Out 1'
    client 129: 'Pure Data' [type=user,pid=260548]
        0 'Pure Data Midi-In 1'
        1 'Pure Data Midi-Out 1'
        2 'Pure Data Midi-Out 2'

The client name is the same, and the client ID cannot be predicted: depending on which controllers I have plugged in and in which order, it might be any number incremented from 128. The tools that should remember and restore the patching (jack_patch, Ray Session and the like) struggle the same as I do to figure out which instance of Pd to connect to which controllers.
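Since the listing does include each client's pid, one workaround could be to tell the instances apart by process ID rather than by name. The sketch below is only a rough idea under that assumption: it parses text shaped like the listing above (the sample is hard-coded so it runs without ALSA) and returns the client ID for a given pid. The function names are invented for this example, and how the pid of each Pd instance is obtained (for example by launching Pd from the same script) is left open.

```python
import re

SAMPLE = """\
client 128: 'Pure Data' [type=user,pid=260121]
    0 'Pure Data Midi-In 1'
    1 'Pure Data Midi-Out 1'
client 129: 'Pure Data' [type=user,pid=260548]
    0 'Pure Data Midi-In 1'
    1 'Pure Data Midi-Out 1'
    2 'Pure Data Midi-Out 2'
"""

# "client <id>: '<name>' [type=<type>,...,pid=<pid>]" (the pid part is optional)
CLIENT_RE = re.compile(r"^client (\d+): '(.*)' \[type=(\w+)(?:,.*?pid=(\d+))?\]")
# "    <port> '<port name>'"
PORT_RE = re.compile(r"^\s+(\d+) '(.*)'")


def parse_aconnect(text):
    """Turn `aconnect -l` style text into a list of client dicts."""
    clients = []
    for line in text.splitlines():
        m = CLIENT_RE.match(line)
        if m:
            pid = int(m.group(4)) if m.group(4) else None
            clients.append({'id': int(m.group(1)), 'name': m.group(2), 'pid': pid, 'ports': {}})
            continue
        m = PORT_RE.match(line)
        if m and clients:
            clients[-1]['ports'][int(m.group(1))] = m.group(2).strip()
    return clients


def client_id_for_pid(clients, pid):
    """Return the ALSA client ID owned by the given process, or None."""
    for client in clients:
        if client['pid'] == pid:
            return client['id']
    return None


clients = parse_aconnect(SAMPLE)
print(client_id_for_pid(clients, 260548))  # 129
```

The resulting ID could then be used in the usual `aconnect <client>:<port> <client>:<port>` call, which does not solve the patchbay case but at least makes scripted connections deterministic.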

I want to keep these patches in separate Pd instances so I can mix and match, not necessarily have all of them open, and always rely on the specific instance's MIDI input and output numbers.

So, here's my question: any idea if it is possible to rename Pd's ALSA MIDI ports? I found surprisingly little online about this issue, yet I can't really believe that nobody has met it before...

I've never seen the command line option -jackname actually have an effect, but in any case Pd does not use JACK MIDI as far as I understand.

I've been thinking about running the patch in a libpd wrapper instead, e.g. from a Python script in which I could specify the client and port names, but I'm struggling a bit to install pylibpd.

-

zigmhount

posted in technical issues

Thanks for your replies!

My conclusion is indeed that I don't really need to worry about efficiency in this specific case.

@seb-harmonik.ar said:

"imo the best way would be to use an [array] for the button in each [clone]."

Oh interesting, I'll keep that in mind for the next time I have to worry about Pd's performance. I'd have to see if I can handle it all with only numbers (while [text] leaves the possibility to also store and query symbols).

"why would the order change? why couldn't you just write each button's info to the corresponding line number?"

The mapping between buttons and sequences may change, e.g. if I shift the buttons to cover the sequences in columns 2 to 9 rather than 1 to 8.

@oid Ecasound is a good suggestion, I looked into it a couple of years ago when I wanted to make music on a 700 MHz single-core Celeron. For the current project I need a graphical LV2 plugin host where I can easily map controller knobs to plugin parameters, side-chain audio outputs and easily insert new busses with synths or soundfonts, i.e. basically the mixer part of a DAW without its timeline (I'm on Ardour for now, Qtractor could also work), or Non-Mixer if it supported LV2 plugins with their UI. I might consider something modular eventually (e.g. with Carla).

"isolate a core and run the DAW on that core, can keep the random dropouts from happening"

Also a good idea, I may give it a try. Although so far I've noticed dropouts when enabling synths in Ardour, Pd's calculations are probably negligible in comparison.

@ddw_music Thanks for the benchmark and the explanations, very interesting. Sticking to numbers in arrays rather than text is definitely something to keep in mind for future projects on smaller machines.

-

zigmhount

posted in technical issues

Hi @oid, thanks for the suggestion (I usually do split audio and control in different patches), but I forgot to mention that I am not processing any audio at all in this patch.

Also, this patch is only used to interface my MIDI controller with OSC, so real-time performance is not really a big deal. So maybe the performance difference between my options will not be noticeable at all.

For reference, the machine I'm running this on has 2 cores at 2GHz, but I'm also running a DAW in parallel to Pd. My previous project was a complete MIDI sequencer, with 24 ticks per beat and a relatively fancy UI (moving canvases for sequence progress bars etc.), which led to relatively heavy load (and low performance), which is why I looked into the [text] topic that I'm asking about here. But I guess that the performance was impacted much more by the continuous UI updates than by the text search.

-

zigmhount

posted in technical issues

Hey all,

I am integrating a Pd patch with an existing sequencer/looper (Seq192) with an OSC interface, where my patch should convert my MIDI controller's button presses to OSC commands and send MIDI signals back out to light up the controller's LEDs.

I can periodically retrieve the status and details of all clips/sequences and aggregate them into a list of parameters for each sequence. The LED colors and the actions that the next button press will trigger depend on these parameters, so I need to store them for reuse, which I like doing with [text] objects. I am then handling the buttons' light status in a [clone 90] (where each instance of the clone handles one button).

This should be running on a fairly low-end laptop, so I'm wondering which of these approaches is the most CPU-efficient (if there is any significant difference at all), and I couldn't come up with a way to properly measure the performance difference:

- One [text define $1-seq-status] object in each clone, with one single line in each. I compare the new sequence status input with [text get $1-seq-status 0] so that I update only line 0 with [text set $1-seq-status] when I know that the content has changed.
- One single [text define all-seq-status] object with 91 lines. I compare the new sequence status with

      [ <button number> (
      |
      [text search all-seq-status 0]
      |
      [sel -1]
      |
      [text get all-seq-status]

  and if it has changed, I update a button's line with

      [ <new status content> (
      |
      |   [ <line number> (
      |   |
      [text set all-seq-status]

  The order in which buttons/sequence statuses are listed in the file might change, so I can't really avoid searching at least once per button to update.
- Should I instead use simple lists in each clone instance? As far as I could test, getting a value from a list was a bit slower than getting a value from a text, but searching in a text was much slower than both. But I don't know the impact of processing 91 lists or texts at the same time...

TL;DR: Is it more efficient to [text search], [text get] and [text set] 91 times in one [text] object, or to [text get] and [text set] 1 time in each of 91 [text] objects? Or in 91 [list] objects?

Since you've gone through this long post and I gave a lot of context, I am of course very open to suggestions to do this in a completely different way :D
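To make the difference between the two lookup patterns concrete, here is a rough analogy in Python. It is not a measurement of Pd: the data layout, the function names and the repeat count are all made up for illustration, and the absolute timings say nothing about [text]. It only contrasts "search a shared 91-row table by button number, then update" with "address one slot per button directly". Inside Pd itself, a similar comparison could probably be made by repeating each operation many times between two bangs to [realtime].

```python
import timeit

N = 91

# Option A: one shared table with rows in arbitrary order, found by searching on the button number.
shared = [[button, 0] for button in reversed(range(N))]
# Option B: one slot per button, addressed directly (like one store per clone instance).
per_button = [0] * N


def update_shared(button, status):
    """Linear search for the button's row, then write only if the status changed."""
    for row in shared:
        if row[0] == button:
            if row[1] != status:
                row[1] = status
            return


def update_direct(button, status):
    """Direct access to the button's own slot, write only if the status changed."""
    if per_button[button] != status:
        per_button[button] = status


def sweep(update, status):
    """Update all buttons once, as after one status poll of the sequencer."""
    for button in range(N):
        update(button, status)


t_search = timeit.timeit(lambda: sweep(update_shared, 1), number=200)
t_direct = timeit.timeit(lambda: sweep(update_direct, 1), number=200)
print(f"search: {t_search:.4f}s  direct: {t_direct:.4f}s")
```

The searched version does work proportional to the table size for every button, so a full sweep grows quadratically with the number of buttons, while the direct version grows linearly. With only 91 entries and no audio processing, either is likely to be cheap.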

Thanks!

-

zigmhount

posted in technical issues

"I also noticed here..... http://www.music.mcgill.ca/~ich/classes/mumt306/StandardMIDIfileformat.html"

Great resource, thanks! That's going to be useful in future (hopefully I will finish my project before MIDI 2.0 is widely available).

@ddw_music's video will definitely also be useful, in fact I'm working on a patch I want to run with plugdata in Ardour, so it's spot on, thank you very much!