-

xgr

posted in technical issues

For exchanging data via USB you can use [comport]:

https://puredata.info/community/pdwiki/ComPort

It's part of Purr Data and it works well.

Xaver

-

xgr

posted in technical issues

They don't necessarily play in time anyway.

Well, nor do I.

But you can fix this later during mastering. You could record the click track to a second channel (having sent it out and back in) to help with re-syncing. You can physically measure the distance from your ear to the monitors and calculate the resulting delay.
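To turn that distance into a delay, divide it by the speed of sound. A minimal sketch, assuming roughly 343 m/s (dry air at about 20 °C) and a 44.1 kHz sample rate; both are assumptions, and the function name `acoustic_delay` is only illustrative:

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumption: dry air at roughly 20 degrees C


def acoustic_delay(distance_m: float, sample_rate: int = 44100) -> tuple[float, int]:
    """Return the travel time of sound over distance_m as (milliseconds, samples)."""
    seconds = distance_m / SPEED_OF_SOUND_M_PER_S
    return seconds * 1000.0, round(seconds * sample_rate)


# Example: the ear sits 1.5 m away from the monitor speaker
ms, samples = acoustic_delay(1.5)
print(f"{ms:.2f} ms = {samples} samples")
```

At 1.5 m this comes to a little over 4 ms, which is small compared with a round-trip latency of around 10 ms but not negligible.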

And when I know the latency in advance, I can record while I play along and shift the recording afterwards, so it'll be in sync again.
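Shifting the recording afterwards amounts to dropping the first `latency` samples, so that sample n of the corrected take lines up with sample n of the click. A minimal sketch on a plain Python list, assuming the round-trip latency has already been measured in samples; `compensate_latency` is a hypothetical helper, not part of any Pd library:

```python
def compensate_latency(recorded: list[float], latency: int) -> list[float]:
    """Shift a take earlier by `latency` samples, keeping its length.

    The first `latency` samples (captured before the played sound arrived)
    are dropped, and the tail is padded with silence.
    """
    if latency <= 0:
        return list(recorded)
    shifted = recorded[latency:]
    return shifted + [0.0] * (len(recorded) - len(shifted))


# A click played at sample 2 comes back 3 samples late (latency = 3).
take = [0.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.0, 0.0]
print(compensate_latency(take, 3))  # click is back at index 2
```

For a loop, recording `latency` extra samples past the loop end would fill the padded tail with real audio instead of silence.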

I'm not sure, but I think Katja's latency patch measures the latency between speakers and microphone. The only problem might be too much ambient noise.

@LiamG

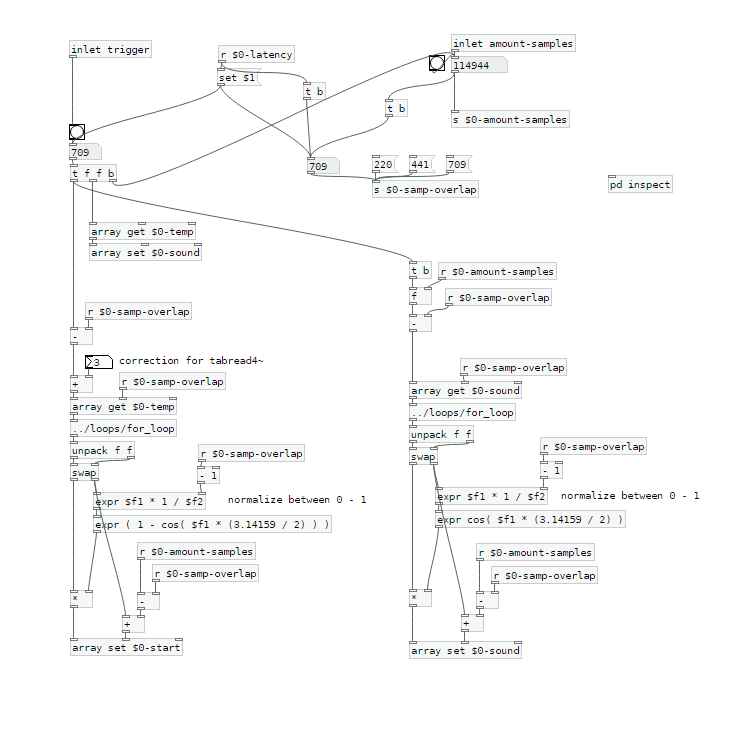

For now I tried to crossfade at the end of the sample. I'm mixing a fade-in of the start of the sample with a fade-out of the end of the sample.Maybe this picture is helpfull for a better understanding:

The start samples are taken from $0-temp, which is recorded first and is delayed by the latency.

$0-sound is the array used for playback.

I tried to use an equal-power crossfade, but I can still hear a little dip when the sample restarts.
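For comparison, the textbook equal-power crossfade uses a quarter sine for the fade-in and a quarter cosine for the fade-out, so that fade_in² + fade_out² = 1 at every point. The sketch below compares that pair with the 1 - cos(x·π/2) curve given below, assuming (this is an assumption, the post does not say) that its fade-out is the mirror image 1 - cos((1 - x)·π/2). Under that assumption the summed power falls to about 0.17 halfway through the fade, which would be heard as a dip:

```python
import math


def equal_power(x: float) -> tuple[float, float]:
    """Equal-power gains (fade_in, fade_out) at position 0 <= x <= 1."""
    return math.sin(x * math.pi / 2), math.cos(x * math.pi / 2)


def one_minus_cos(x: float) -> tuple[float, float]:
    """The 1 - cos curve, assuming the fade-out mirrors the fade-in."""
    fade_in = 1 - math.cos(x * math.pi / 2)
    fade_out = 1 - math.cos((1 - x) * math.pi / 2)
    return fade_in, fade_out


def power(gains: tuple[float, float]) -> float:
    """Relative power of two uncorrelated, equally loud signals mixed with these gains."""
    return gains[0] ** 2 + gains[1] ** 2


for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"x={x:.2f}  equal power: {power(equal_power(x)):.3f}  "
          f"1-cos: {power(one_minus_cos(x)):.3f}")
```

Equal power is the usual choice when the two overlapping parts are unrelated; when they are nearly identical (correlated), an equal-gain (linear) crossfade whose gains sum to 1 is the usual alternative.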

This is the calculation: expr ( 1 - cos( $f1 * (3.14159 / 2) ) )

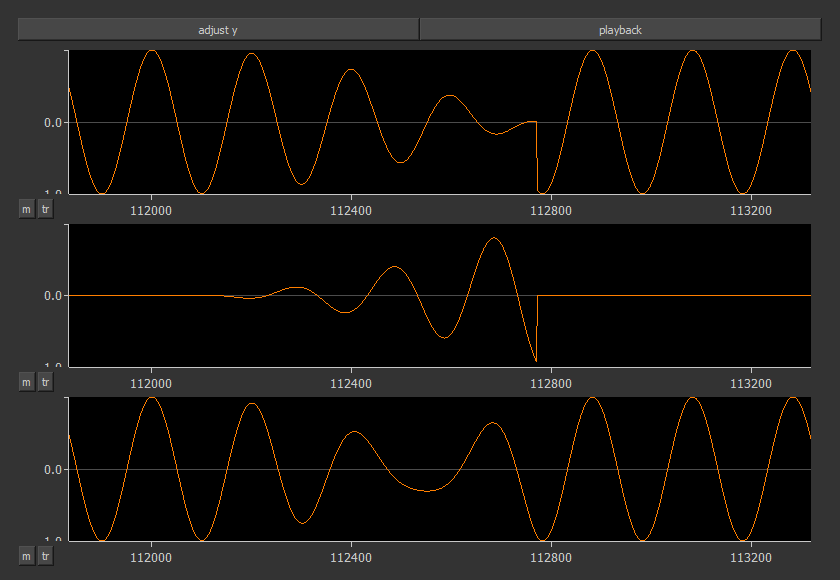

with 0 < $f1 < 1This is, what it looks like in a scope:

The first row shows the cut sample $0-sound. Its start is at around sample 112800. On the left side you can see the end with a fade-out.

The second row shows the fade-in of the start sample.

The third row is the mixed result.

Here's the new patch:

loop-machine.7z

Are there any ideas on how the mixing could be done better?

Xaver

-

xgr

posted in technical issues

@whale-av

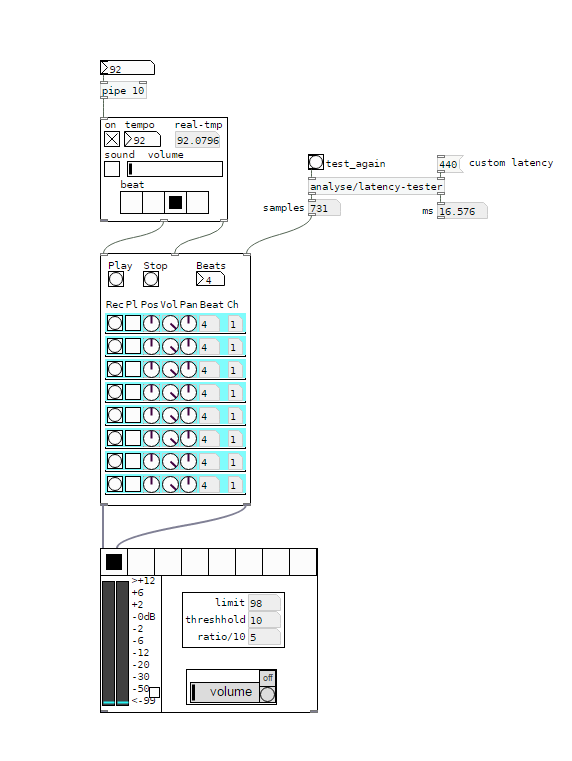

I'm using an RME Fireface UC with its own ASIO driver on Win7/10. In Purr Data or vanilla I can set the latency to 2 ms as well, but the round-trip latency tester gives me the same results for anything from 2 to 5 ms (around 440 samples, measured with a cable). With a 48-sample buffer instead of 64 on my sound card, I get even worse values.

But in Pd-extended it just doesn't work. I don't know why, but as it's no longer updated, I prefer working with vanilla and Purr Data.

Anyway, the way I'm trying to loop, latency doesn't really matter, but you have to know its length. Even with a latency of 50 ms or more you won't notice any offset in the recorded loops, as long as you know the exact length of the latency.

Tracks get recorded and then shifted by the latency, so the latency should be compensated.

You're right, latency won't add up in Pd. But I don't use an in-ear system. So if you change your reference from the metronome to a recorded track, and from that track to the next recorded one, the latency will add up if it isn't compensated.
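The accumulation can be shown with simple bookkeeping. A toy model, assuming a constant round-trip latency of 440 samples (the cable measurement mentioned above) and a player who locks perfectly to what they hear: take 0 is played to the metronome, take n to take n-1, and each recording lands one latency later than its reference unless it is shifted back. The function `take_offsets` is purely illustrative:

```python
def take_offsets(num_takes: int, latency: int, compensate: bool) -> list[int]:
    """Offset (in samples) of each take relative to the metronome.

    Take 0 is played to the metronome, take n to take n-1. Each recording
    arrives `latency` samples late; compensation shifts it back by that amount.
    """
    offsets = []
    reference = 0  # offset of the reference the player is listening to
    for _ in range(num_takes):
        recorded = reference + latency
        if compensate:
            recorded -= latency
        offsets.append(recorded)
        reference = recorded
    return offsets


print(take_offsets(4, 440, compensate=False))  # [440, 880, 1320, 1760]
print(take_offsets(4, 440, compensate=True))   # [0, 0, 0, 0]
```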

Xaver

-

xgr

posted in technical issues

I changed my first post and uploaded a zip at the end.

It works with Purr Data or Pd (plus zexy).

@whale-av

Thanks for your patch. I tested it with Pd-extended, but I get latency issues: when I record myself saying something like "tik" on the beat, the "tik" is heavily delayed relative to the metronome on playback. But in Pd-extended I can't set the latency lower than 24 ms, so there might be some issues with Pd-extended on my machine. (Pd and Purr Data let me set the latency to 5 ms without problems.)

You wrote: The biggest problem with (not noticing) an edit will be a difference of volume (power). Our ears are very sensitive to that.

The second biggest problem will be the quality.... timbre..... but as you say a crossfade could help. But the bigger the difference the longer the crossfade needs to be.

I thought about starting the recording a little earlier and stopping it somewhat later, so that I can crossfade between these extra samples. Maybe it's easier than I thought.

@LiamG

Thanks. How can I trigger between blocks? I thought messages are only sent once per block, aren't they?
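One way to think about it: if event times are computed in (possibly fractional) samples rather than in whole blocks, each event can be expressed as a block index plus an offset inside that block, and an object that accepts sub-block timing can then start there ([vline~] is the object usually suggested for this in Pd). The sketch below only does that arithmetic, assuming 44.1 kHz and 64-sample blocks; `beat_positions` is a hypothetical helper:

```python
BLOCK_SIZE = 64      # Pd's default block size
SAMPLE_RATE = 44100  # assumption


def beat_positions(bpm: float, num_beats: int) -> list[tuple[int, float]]:
    """Return (block index, offset in samples within that block) for each beat."""
    samples_per_beat = SAMPLE_RATE * 60.0 / bpm
    positions = []
    for beat in range(num_beats):
        start = beat * samples_per_beat  # exact position, possibly fractional
        block = int(start // BLOCK_SIZE)
        positions.append((block, start - block * BLOCK_SIZE))
    return positions


for block, offset in beat_positions(bpm=123.0, num_beats=4):
    print(f"block {block:5d}, offset {offset:8.3f} samples")
```

With this bookkeeping the tempo no longer has to be a whole number of blocks per beat; only the object that plays the event needs to honour the offset.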

About 3): I was wondering whether it is a problem not to be able to set the exact metronome tempo.

And here's a little screenshot to see what it looks like:

I would say anything above 5 ms of latency between the metronome and the voices starts to feel strange. And when you change your reference (say you start recording with the metronome, then switch to the first recorded line as a reference, then to the second, and so on), the latency will add up and increase.

I wonder how latency is handled in hardware loop stations.

And I'm still asking myself how latency should be treated to get it right.

Regards,

Xaver

-

xgr

posted in technical issues

Hi,

I'm creating a loop station and I've got several questions about different issues.

- Latency

- Fading/Cutting recorded samples at start and end

- Metronome settings

1) Latency

I'm using the latency tester from the repo.

http://www.pdpatchrepo.info/patches/patch/92

Latency can be measured in different ways:

a) Connecting the output of the sound card directly to its input with a cable. This gives the lowest possible latency (around 440 samples in my case).

b) Measuring via microphone and speakers. The latency will be higher than with a), and the greater the distance between mic and speakers, the higher it will be.

Which measurement should be used?

(To me it seems logical to use the latency measured with b), as that method reflects the real circumstances. But I'm not sure, and it's very hard to tell by ear.)

2) Fading/Cutting recorded samples at start and end

When the recorded sample contains a phrase that is completely finished before the next "1" (downbeat), a fade with [vline~] sounds OK. But when you record a continuous sound (like a string pad), you will always hear the [vline~] fade.

Compare this with Reaper: cutting a sound and looping it results in a continuous sound. To me it seems like some kind of crossfade between the beginning and the end of the sample is done.

Does anyone know more about this? How should it be done correctly?

Crossfading seems quite a difficult task to me, as a lot of calculation has to be done (recording has to start a bit earlier and stop later, sync has to start earlier, and so on).
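The extra bookkeeping may be smaller than it looks. A sketch of one common approach, assuming the take was recorded with `xfade` extra samples after the loop end: that overshoot is blended into the first `xfade` samples of the loop, so when playback wraps from the loop end back to the start it hears the recording simply continuing. Only post-roll is used here, and the function name and equal-power curve are illustrative choices, not a description of how Reaper or the patch does it:

```python
import math


def loop_with_crossfade(take: list[float], loop_len: int, xfade: int) -> list[float]:
    """Build a seamless loop of `loop_len` samples from a longer take.

    `take` must hold at least loop_len + xfade samples: the samples recorded
    after the loop end are blended into the loop start with equal-power gains.
    """
    if len(take) < loop_len + xfade or xfade > loop_len:
        raise ValueError("take too short or crossfade longer than the loop")
    loop = take[:loop_len]  # slicing copies, so `take` is left untouched
    for i in range(xfade):
        x = i / xfade
        fade_in = math.sin(x * math.pi / 2)   # the loop start comes in
        fade_out = math.cos(x * math.pi / 2)  # the overshoot past the loop end goes out
        loop[i] = take[i] * fade_in + take[loop_len + i] * fade_out
    return loop


# Toy take: a ramp, so every sample shows where it came from.
take = [float(n) for n in range(12)]
loop = loop_with_crossfade(take, loop_len=8, xfade=4)
print(loop)  # loop[7] is 7.0 and the wrap lands on loop[0] == 8.0: the ramp continues
```

Recording a pre-roll as well would allow the crossfade to be centred on the loop point instead of starting exactly at it.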

3) Metronome settings

This loop station depends on a metronome and works similarly to Ableton Live. As Pd processes audio in blocks of 64 samples, the tempo is adjusted to one that corresponds to an array whose size is a multiple of 64. This way playback can easily be kept in sync.
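The tempo correction described above can be sketched as: compute the beat length in samples, round it to a whole number of 64-sample blocks, and convert back to BPM. This assumes 44.1 kHz and rounding per beat; the patch may round per bar or per loop instead, which would give smaller tempo errors. `quantize_tempo` is a hypothetical name:

```python
BLOCK_SIZE = 64
SAMPLE_RATE = 44100  # assumption


def quantize_tempo(bpm: float) -> tuple[float, int]:
    """Round the beat length to a whole number of blocks.

    Returns the corrected tempo and the beat length in samples.
    """
    samples_per_beat = SAMPLE_RATE * 60.0 / bpm
    blocks = max(1, round(samples_per_beat / BLOCK_SIZE))
    beat_len = blocks * BLOCK_SIZE
    return SAMPLE_RATE * 60.0 / beat_len, beat_len


for bpm in (90.0, 120.0, 128.0):
    corrected, beat_len = quantize_tempo(bpm)
    print(f"{bpm:6.1f} BPM -> {corrected:8.4f} BPM ({beat_len} samples per beat)")
```

At 120 BPM this gives 22080 samples per beat instead of 22050, i.e. about 119.84 BPM: a deviation of roughly 0.14 %, or 30 samples (about 0.7 ms) per beat, which mainly matters if the loops have to stay in sync with something outside Pd.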

I'm not sure about this. Is it a good idea to do it like this?

But changing this so that the tempo can be chosen freely will result in much more complicated calculations. Is it worth it?

Thanks in advance for any comments,

Xaver

My patch is still in development and can be found here:

https://github.com/XRoemer/puredata/tree/master/loop-machine

(To download from GitHub, go up one folder and use the download/clone button: https://github.com/XRoemer/puredata/tree/master)

-

xgr

posted in technical issues

I connected the output of my sound card to its input, and it makes a big difference.

Via microphone I get a latency of 533 samples (12.08 ms), via cable 440 samples (9.97 ms).

My sound card is set to 64 samples and the Pd delay to 5 ms. Smaller delays in Pd have no effect.
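The two measurements are consistent with a 44.1 kHz sample rate (440 / 44100 s is just under 10 ms). Their difference is the part of the latency added by the air path between speaker and microphone; at an assumed speed of sound of 343 m/s it corresponds to roughly 0.7 m. A small sketch of that arithmetic, with the sample rate and speed of sound as stated assumptions:

```python
SAMPLE_RATE = 44100             # assumption, consistent with 440 samples = 9.97 ms
SPEED_OF_SOUND_M_PER_S = 343.0  # assumption: air at about 20 degrees C


def samples_to_ms(samples: int, sample_rate: int = SAMPLE_RATE) -> float:
    """Convert a duration in samples to milliseconds."""
    return samples * 1000.0 / sample_rate


via_cable = 440       # measured: sound card and driver round trip only
via_microphone = 533  # measured: the same, plus the speaker-to-mic air path

acoustic = via_microphone - via_cable
acoustic_ms = samples_to_ms(acoustic)
distance_m = acoustic_ms / 1000.0 * SPEED_OF_SOUND_M_PER_S
print(f"cable {samples_to_ms(via_cable):.2f} ms, mic {samples_to_ms(via_microphone):.2f} ms")
print(f"air path: {acoustic} samples = {acoustic_ms:.2f} ms = about {distance_m:.2f} m")
```

In other words, the cable measurement isolates the sound card and driver, while the microphone measurement also includes the travel time through the air.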

My sound card has the option to set the buffer size to 48 samples. When I do that, the measured latency increases. I tried a [block~ 48], but that didn't change anything.

Isn't it possible to set the sound card latency to values smaller than 64 samples?

-

xgr

posted in technical issues

Thanks for the reply.

OK, just to be sure:

Do you mean I should connect a cable from the output of my sound card directly to its input?

Xaver

-

xgr

posted in technical issues

Hi,

I'm working on a live looping mechanism and need a patch to measure the latency of my system.

I've found the round-trip latency tester: http://www.pdpatchrepo.info/patches/patch/92

It works well under two conditions:

- A microphone is plugged in and placed directly in front of the speakers.

- There is no loud noise in the background.

Both conditions might be problematic in live situations, especially the second.

My question is: is there any other way to measure the latency?
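One technique that copes better with background noise than listening for a single click is to play a known noise burst and cross-correlate the recorded input with it: the lag with the highest correlation is the round-trip latency, and room noise that is unrelated to the burst largely averages out. This is an alternative, not necessarily how the linked tester works. The sketch below only simulates the idea in plain Python; the burst length, noise level and the 440-sample delay are made-up test values, and `estimate_latency` is a hypothetical name:

```python
import random


def estimate_latency(sent: list[float], received: list[float], max_lag: int) -> int:
    """Return the lag (in samples) at which `received` best matches `sent`.

    Brute-force cross-correlation: for each candidate lag, sum the products
    of the sent signal with the received signal shifted by that lag.
    """
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        score = sum(s * received[lag + n] for n, s in enumerate(sent)
                    if lag + n < len(received))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


rng = random.Random(1)
burst = [rng.uniform(-1.0, 1.0) for _ in range(1024)]  # known test signal
true_latency = 440                                     # simulated round trip
# Received: silence, then an attenuated copy of the burst, plus room noise.
received = [0.0] * true_latency + [0.3 * s for s in burst] + [0.0] * 200
received = [r + rng.gauss(0.0, 0.2) for r in received]

print(estimate_latency(burst, received, max_lag=600))
```

In Pd this would mean playing the burst from one array, recording the input into another, and correlating them afterwards; for longer buffers an FFT-based correlation is much faster than this brute-force loop.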