Keyboard HID vs serial comport for Sensor values on pico

I'm trying to get values from analog sensors connected to a Raspberry Pi Pico. The Pico is plugged into a Raspberry Pi 5 (rp5), where I want to read those sensor values in Pure Data. My question: to get the Pico's sensor values into Pure Data, I can either

- use the Pico as a keyboard HID device (using MicroPython on the Pico, and the "HID" object in Pure Data), or

- use the "comport" object in Pure Data to unpack and read the sensor values over serial.

Does anyone have thoughts on either approach, such as limitations or resolution (if that even applies)? Please correct me if any of this is wrong. I'm thinking HID might be a bit friendlier. Both Pis and an audio interface are eventually going into a Disney princess guitar I got from Goodwill, but I'm trying to get it functional first.

I can see values in Pure Data using a Logitech USB game controller and the "HID" object, and about 10 years ago I got sensor values over serial with the "comport" object in Pure Data and an Arduino Nano v3.1. I was reading a little about the u2if git repository, which might be another option, and I just found out about the Teensy boards; the Teensy 4.0 might be a better choice. But I haven't gotten this working yet, so that comes first. I appreciate this space!

Trying to add key switch functionality to a midi controller

@whale-av said:

@Alan-Angel You could be right about the order of operations......... https://forum.pdpatchrepo.info/topic/13320/welcome-to-the-forum

You need to store the right hand note so that it can be played later by hitting the left key.

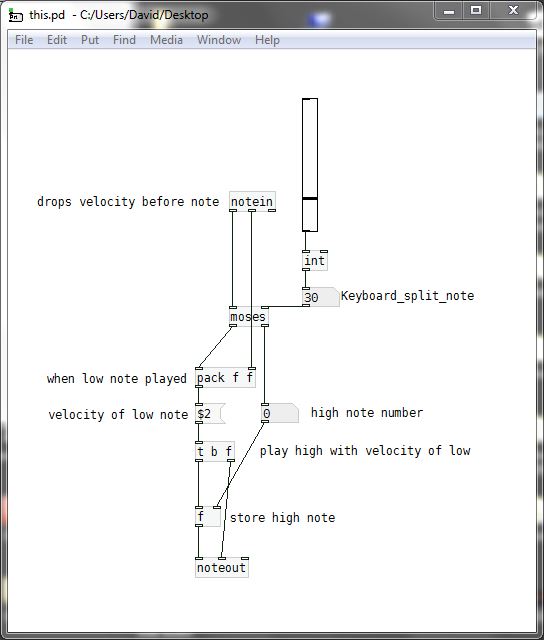

I have broken it down into (I hope) an understandable work flow for a mono player....... this.pd

So that even if it doesn't work you should be able to understand the order of operations and fix the problems...

[pack f f] receives the velocity of high notes.... but that value is replaced by the velocity of the low note just before the low note triggers the playing of the high note.

If you want to do this polyphonically you will need to use the [poly] object and to use the finished mono patch as an abstraction within a master patch containing [poly]...... probably using [clone].
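The mono order of operations described above can be sketched outside Pd. This is only an illustration in Python, not the contents of this.pd: the split point `SPLIT`, the class name `KeySwitch`, and the choice to ignore note-offs and left-hand-first input are all assumptions made for the example.

```python
SPLIT = 60  # hypothetical split point: pitches below it count as left hand


class KeySwitch:
    """Mono sketch: the right hand selects a pitch, the left hand triggers it."""

    def __init__(self, split=SPLIT):
        self.split = split
        self.held_note = None  # last right-hand pitch, stored for later

    def note_in(self, pitch, velocity):
        """Return a (pitch, velocity) pair to send to the synth, or None."""
        if velocity == 0:
            return None  # note-offs are ignored in this sketch
        if pitch >= self.split:
            self.held_note = pitch  # store the pitch, do not play it yet
            return None
        if self.held_note is None:
            return None  # left hand first: nothing stored, so play nothing
        # The left-hand velocity replaces the right-hand velocity.
        return (self.held_note, velocity)


ks = KeySwitch()
events = [(64, 90), (36, 110), (36, 0), (38, 70)]
played = [out for out in (ks.note_in(p, v) for p, v in events) if out]
print(played)  # [(64, 110), (64, 70)]
```

A real patch would also need to send note-offs for the sounding note; that part is left out here to keep the store-then-trigger order visible.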

That's slightly more complex.... but you will get there.....

David.

Thanks for your help. The low velocity isn't registering. I'll try to figure out why not, but I get the logic.

@kosuke16 said:

Whale is right, my bad. I tried it only with the numbers, no audio, so while the numbers were good, the timing wasn’t. Since note messages are not continuous, you have to repack the note so it is sent at the same time as the velocity, making a coherent list of two values for noteout.

Thanks for your help so far!

@ddw_music said:

My question about this:

Right hand first, then left hand: Note should be produced upon the left-hand note on.

- What if one right-hand note is pressed, and two or three left-hand keys? Multiple notes, or only the first? (Polyphony, I suppose, would have to be right-left, right-left, not the same as right-left-left.)

Left hand first, then right hand: Should it play the note upon receipt of the right hand note-on, with the left-hand velocity? Or not play anything? Or something else?

- And, if it should sound, what about left-right-right? Portamento? Ignore the second?

Because a full solution will be different depending on the answer to these questions. These need to be thought through anyway because you can't guarantee correct input -- the logic needs to handle sequences that are not ideal, too.

hjh

-

I don't plan on playing two left-hand notes. I just want to play fast galloping basslines like dun du-du dun du-du dun du-du dun, and it's easier to do that with one hand and play the notes with the other, like a real bass.

-

Not sure about this one, I guess I'll know the more I play how I want it to work. Maybe default it to a low E like on a real bass for fun?

-

Agree, for now I just want some basic functionality to even see if the instruments I use can handle fast notes in the first place.


How to loop/reset an audio file to the beginning

I'm not at the computer now, but I would proceed by steps.

Sensor = 1: play/resume. Sensor = 0: stop. Get this very simple specification working first.

Then start the timer on sensor = 0 and be sure that the timer is counting correctly.

Btw I think you can simplify this using the Pd [timer] object. Why? Because you need to know the elapsed time only at the moment when the sensor was 0 and becomes 1, so there is no need to poll the time continuously. When the sensor goes to 0, send a bang to [timer]'s left inlet; when it goes to 1, send a bang to [timer]'s right inlet.

Then:

- If the sensor goes to 0, the playback rate should change to 0 (pause).

- If the sensor goes to 1, the playback rate should change to 1 (play again).

- Note that these two behaviors do not depend on the timer at all!

- Then, also at the moment when the sensor becomes 1, if the timer shows a long enough period, then you would also send "all" to the left inlet.
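The steps above can be sketched as a small state machine. This is a Python illustration only, not the Pd patch: the command strings `"rate 0"`, `"rate 1"` and `"all"` stand in for whatever messages the player actually expects, the 5-second threshold is a made-up value for the "long enough period", and sending `"all"` before `"rate 1"` is an assumed ordering.

```python
RESET_AFTER_S = 5.0  # hypothetical threshold for "a long enough period"


class SensorPlayer:
    """Turns sensor 0/1 changes into playback commands."""

    def __init__(self, reset_after=RESET_AFTER_S):
        self.reset_after = reset_after
        self.released_at = None  # time of the last 1 -> 0 transition
        self.state = 0

    def sensor(self, value, now):
        """Return the list of commands caused by one sensor reading."""
        if value == self.state:
            return []  # no change, nothing to do
        self.state = value
        if value == 0:
            self.released_at = now  # like banging [timer]'s left inlet
            return ["rate 0"]  # pause, independent of the timer
        commands = []
        # Like banging [timer]'s right inlet: read the elapsed time once.
        if self.released_at is not None:
            if now - self.released_at >= self.reset_after:
                commands.append("all")  # restart from the beginning
        commands.append("rate 1")  # resume, independent of the timer
        return commands


player = SensorPlayer()
print(player.sensor(1, 0.0))   # ['rate 1']
print(player.sensor(0, 1.0))   # ['rate 0']
print(player.sensor(1, 10.0))  # ['all', 'rate 1']
```

The elapsed time is only inspected on the 0 to 1 transition, which is why no continuous polling is needed.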

hjh

How to loop/reset an audio file to the beginning

I will check it later on my Mac. For the moment I'm not at my computer, so I don't want to spend much time on it now, as I think it will work there.

For the moment I'm using your help file patch, which is great. Your abstraction is so much easier to use than the patch I shared in the beginning.

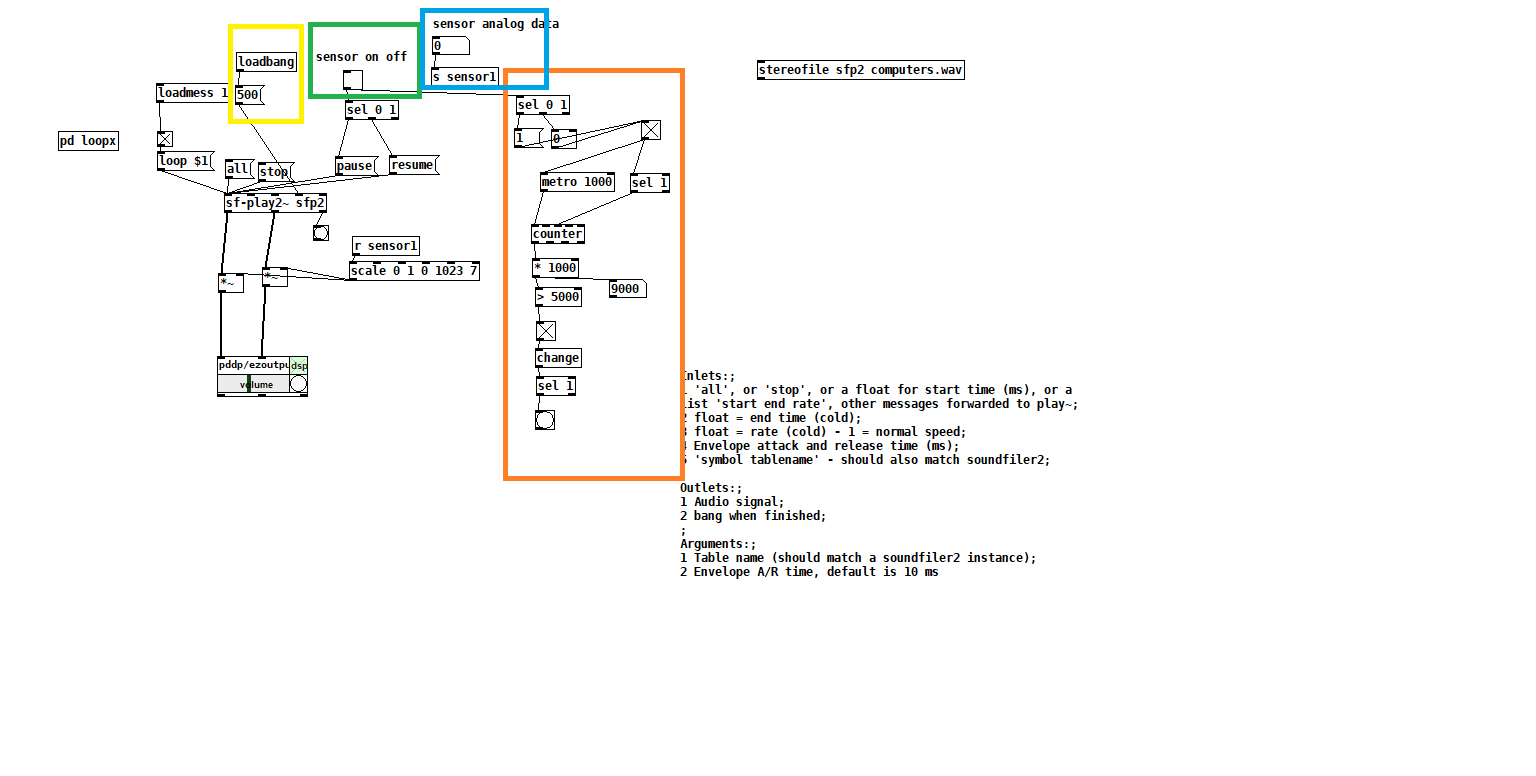

What I'm trying to do is the following:

I will have 8 different sound files. Each sound file should be in its own sf-play2~ object.

I will have data from 8 different sensors that I'm reading via Arduino. Each sensor will report 0 or 1 (whether the sensor is pressed or not), alongside analog data in the range 0 - 1023.

What I would like is that when I read 1 the audio file plays, and when I read 0 the audio file pauses. If 1 is held and the audio file reaches its end, it continues from the beginning (loop 1). I will also have a clocker to report how much time has elapsed since the sensor went to 0. If more than x seconds have passed, the audio file goes back to the beginning, so the next time the sensor is held (1) it starts from the beginning.

Here is my try with your abstraction.

The green part imitates the on/off from the sensor. The blue part is the analog data from the sensor.

The orange part is my attempt at a clocker that starts counting when the sensor is off. The yellow part is a small envelope to avoid clicks when the audio is paused and resumed.

How can I make the audio file go to the beginning when the orange part reports a bang (meaning the time has elapsed)?

Also, I'm not sure if the yellow section is working, meaning whether it is really applying an envelope on every pause and resume. Is that possible?

Thanks!

Edit: the scale of the sensor should be inverted. 0 1023 0 1

Edit 2: how can I make the sf-play2~ go back to the beginning of the audio file without starting it immediately?

Edit 3: how can I load the file into the sf-play2~ object without starting it? If I don't press the "all" message, it won't load the file. I would like the file to be loaded and positioned at the beginning, but to start playing only when receiving on/off data from the sensor. For the moment, if I don't press the "all" message the file won't play.

Convert analog reading of a microphone back into sound

@MarcoDonnarumma Without seeing what is contained in the sub-patches I don't know how you have regulated the timing. The incoming messages have no clock as far as I know....... the messages arrive when the socket connection spits them out. I see you are measuring the sample period, but is that averaged or continuously updated?

Is there something in your patch that writes the samples at the correct time?

Maybe you could time stamp them in the sender?...... and then use [tabwrite] to index them correctly.

But are you sending repeated bangs from [r $0-metroreg]?

[tabwrite~] needs just one bang to start and will write at your Pd sample-rate (maybe 44100 samples per second). So maybe your array1 is simply far too small to show the data? You need to choose the arraysize for the time window you want to look at and then bang [tabwrite~] at that rate (new window draw as the last finishes).
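As a rough worked example of that sizing rule (Python, with a hypothetical 100 ms window chosen only for illustration): the array needs sample rate times window length points, and the bang interval equals the window length.

```python
def array_size(sample_rate_hz, window_s):
    """Points needed to hold window_s seconds at sample_rate_hz."""
    return round(sample_rate_hz * window_s)


def redraw_interval_ms(window_s):
    """Interval between bangs so a new window starts as the last one ends."""
    return window_s * 1000.0


window_s = 0.1  # hypothetical 100 ms scope window
print(array_size(44100, window_s), redraw_interval_ms(window_s))
```

At 44100 Hz a 100 ms window needs 4410 points, so a default-sized array would show only a tiny slice of the signal.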

If the scope is still "blocky" because of your actual low sample-rate of 500Hz you can interpolate afterwards.
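One simple way to interpolate afterwards is linear interpolation between neighbouring low-rate samples. The sketch below is plain Python with a hypothetical function name, and it only illustrates the idea; it is not a Pd object, and a smoother method (cubic, or a low-pass filter) may be preferable in practice.

```python
def upsample_linear(samples, src_rate, dst_rate):
    """Resample by linear interpolation between neighbouring input samples."""
    if len(samples) < 2:
        return list(samples)
    n_out = int((len(samples) - 1) * dst_rate / src_rate) + 1
    out = []
    for i in range(n_out):
        pos = i * src_rate / dst_rate  # position measured in input samples
        k = min(int(pos), len(samples) - 2)  # index of the left neighbour
        frac = pos - k  # distance from the left neighbour, 0..1
        out.append(samples[k] + frac * (samples[k + 1] - samples[k]))
    return out


# Tiny check with an easy ratio (1 Hz -> 2 Hz).
print(upsample_linear([0.0, 1.0, 0.0], 1, 2))  # [0.0, 0.5, 1.0, 0.5, 0.0]

# The rates mentioned in the thread: 500 Hz in, 44100 Hz out.
smooth = upsample_linear([0.0, 1.0, 0.0], 500, 44100)
print(len(smooth))  # 177
```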

This....... https://support.audient.com/hc/en-us/articles/202587353-Clocking-Explained sort of goes into the subject, but essentially samples in the wrong place introduce distortion, and when there is enough distortion all you get is noise.

David.

P.S. Lastly (probably not) a 40Hz signal is inaudible for a lot of people. But with a low sample-rate the waveform will be drawn as blocks rather than a smooth curve (at 44100Hz), and your ears will hear it because of the sharp steps (almost like a square wave) in the waveform.

So maybe your patch is actually fine?

Haha here we go.... you can smooth the samples like this..... https://forum.pdpatchrepo.info/topic/11850/explanation-for-lop-object-in-this-patch-requested/2

.... a sort of "pre-interpolation" if you wish, that will smooth for the higher sample-rate in the [tabwrite~] array.

Question(s) about [mrpeach/midifile]

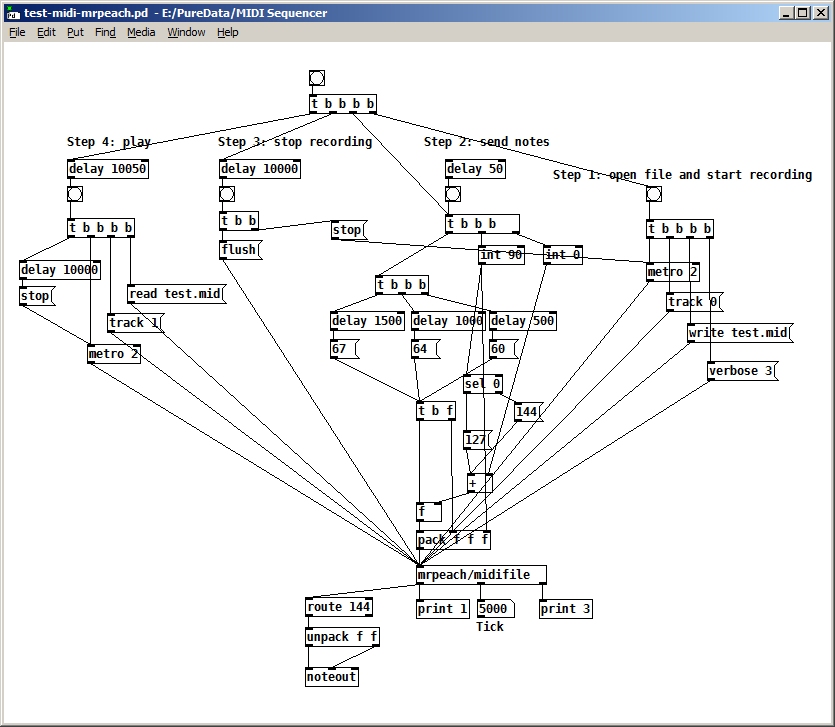

@dfkettle I would expect read/write to be first as they get the file/set the header for writing (MThd).

Then the track.... as that means moving on within the file to the next header for a read, or writing a Track header for a write (MTrk).

That's the order I tried first, but it didn't seem to work. Anyway, I changed it back again to agree with what you suggest, but it still seems to disregard the track number (it plays the track even though I specified track 0 when writing and track 1 when reading). Here's the latest version:

P.S. What does your midifile-help file look like?...... is it this one from 2010?

So for a write the track would go between "1" and "2".

Essentially, although it says "Martin Peach, 2010 - 2018" on mine. However, I'm wondering now how I would create a file with more than one track, if you have to specify the track number between steps "1" and "2". (in other words, before you start recording). Can you change the track number after you've started recording? How could you create two tracks that play at the same time? Maybe that isn't even supported by this library.

EDIT: Does the 'flush' message close the file after writing? I don't see anything in the help file about a 'close' message, so I'm assuming it does.

Midi Rotary Knob Direction Patch/Algorythm?

Hey everybody,

Sorry for all the text, but the bold text at the bottom is my main question. The rest will help you get a better understanding of my situation.

You helped me so much with my last question here (the faders are working dope now):

https://forum.pdpatchrepo.info/topic/13849/how-to-smoothe-out-arrays/25

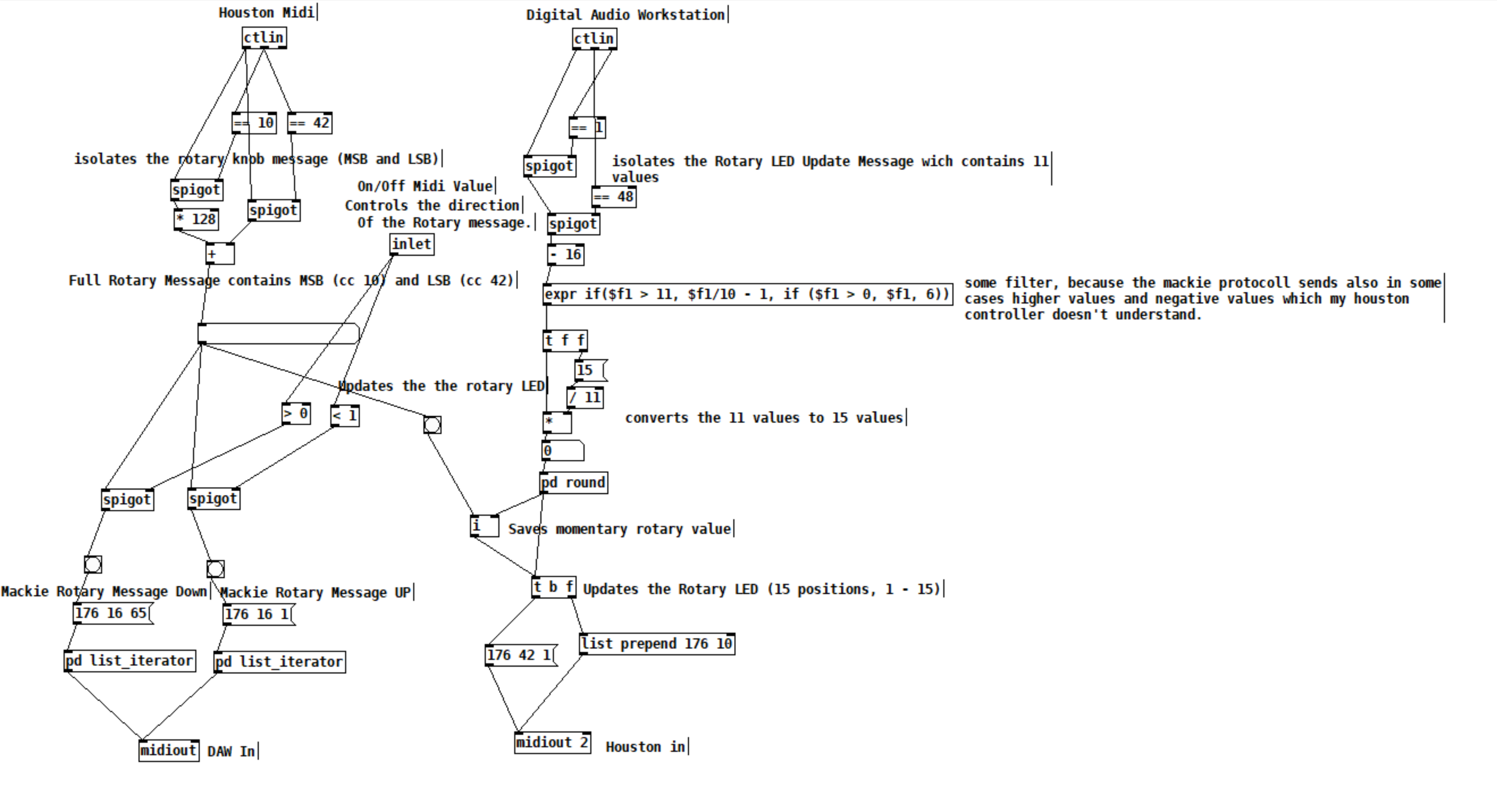

I am working on a Steinberg Houston to Mackie Control emulation at the moment, so that I can use my controller with DAWs other than Cubase/Nuendo. I will upload it for the community when I am finished, for the handful of people who may also be using this controller.

I made good progress:

I got the faders and the normal knobs to work, and the display shows information. But it has bugs, because the LCD screen of the Houston has 40 characters per line and the Mackie Universal Pro has 56 characters. So I wrote a list algorithm which deletes spaces from the Mackie message until the message fits on the 40-character line. Maybe there is a method which would work better, but this subject eats too much of my time at the moment, and it works roughly okay. One definitely gets some helpful information on the screen from the DAW.

The faders, rotary knobs and normal knobs are the most important parts of this controller, I guess. The faders are working fine, as I mentioned above, but there is a problem with the rotary knobs which I can't handle alone, and I hope you can help me.

The problem is that the Mackie controller sends simple clicks to the DAW. If you turn a rotary knob, it sends out a number of MIDI messages:

If you turn it right, it sends MIDI messages which contain the value 1, and if you turn it down, it sends messages which contain the value 65.

"When the VPots are rotated rapidly, a message equal to the number of clicks is sent."

BUT the Houston controller instead sends values like its faders, with 15 (MSB) and 128 (LSB) values. AND it updates the rotary limit by itself. So if I turn a rotary, it updates its LEDs and stops sending MIDI messages when it reaches the maximum or minimum value. So this is the current state of my patch:

The DAW sends 11 values for the Houston LEDs; 11 is max and 1 is min. This is good: I send these values to my Houston controller and can update the rotary values and LEDs.

With these updated values from the DAW, I can force my rotary knobs to never stop sending values, because every time I turn a knob they are set to the value the DAW sends. With this method I got it to imitate a Mackie rotary knob. Every time the Houston rotary value changes, it sends Mackie "MIDI click values" according to the number of value changes from the Houston.

BUT the problem is, that this is working only in one direction. Now my main question:

How can I make Pure Data know whether I am turning my knob to the left or to the right? There is also the problem I mentioned above: I set the current value every time I move the rotary, so that I get an unlimited number of possible rotary "clicks". Also, the MIDI values the Houston sends aren't perfectly smooth. It works fine, but it is not the case that when you move a rotary in one direction, every value is strictly lower or higher than the one before.

I think I may need an algorithm which checks whether the values within a time period are getting higher or lower and then sends out bangs on two separate outlets, for example the left outlet for lower values and the right outlet for higher values. It should also detect whether I move the rotary fast or slow, so constant smoothing or a clocked bang is not an option either. This is definitely too complicated for me. I have no idea, and what I tried didn't work.
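One possible approach, sketched in Python rather than as a Pd patch: compare each incoming absolute value with the last accepted one, ignore changes smaller than a small dead band (to absorb the jitter described above), and turn the difference into a relative click value. Several things here are assumptions for illustration: the class and method names, the dead band of 2, the cap of 15 clicks per message, and the idea that a left turn of n clicks is encoded as 64 + n (extrapolated from the post's statement that one click is 1 to the right and 65 the other way).

```python
class RotaryToClicks:
    """Converts absolute knob values into relative Mackie-style clicks."""

    def __init__(self, deadband=2, max_clicks=15):
        self.deadband = deadband      # ignore jitter smaller than this
        self.max_clicks = max_clicks  # hypothetical cap per message
        self.last = None              # last accepted absolute value

    def value_in(self, value):
        """Return a relative click value, or None if nothing should be sent."""
        if self.last is None:
            self.last = value  # first value only sets the reference
            return None
        delta = value - self.last
        if abs(delta) < self.deadband:
            return None  # too small: treat as noise, keep the old reference
        self.last = value
        clicks = min(abs(delta), self.max_clicks)
        # Right turn: 1..max_clicks. Left turn: 64 + clicks (assumed encoding).
        return clicks if delta > 0 else 64 + clicks

    def sync(self, value):
        """Call when the DAW sets the knob position, so no click is produced."""
        self.last = value


knob = RotaryToClicks()
stream = [50, 51, 52, 51, 48]
print([knob.value_in(v) for v in stream])  # [None, None, 2, None, 68]
```

Because the difference is taken per incoming message, a fast turn naturally yields larger click counts and a slow turn smaller ones, without any clocked smoothing. Small steps are not lost either: the reference only moves when a change is accepted, so they accumulate until they cross the dead band.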

Would be super cool, if you could help me out again.

fx3000~: 30 effect abstraction for use with guitar stompboxes effects racks, etc.

It still does not work.

I added my-guitar-rig/my-guitar-rig~ to a window and it generated a lot of errors.

I am running version 0.52.1 of Pd.

Hopefully this is helpful. The errors I got are:

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

io pair already connected

delay(wavey)(v).pd 39 0 40 0 (snapshot~->gatom) connection failed

tof/pmenu 1 1 black white red

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

io pair already connected

delay(wavey)(v).pd 39 0 40 0 (snapshot~->gatom) connection failed

tof/pmenu 1 1 black white red

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

io pair already connected

delay(wavey)(v).pd 39 0 40 0 (snapshot~->gatom) connection failed

tof/pmenu 1 1 black white red

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

date

... couldn't create

time

... couldn't create

z~ 64

... couldn't create

limiter~ 98 1

... couldn't create

mknob 42 0 0 1 0 0 empty empty ratio:1.5:1 -2 -6 0 10 -262144 -1 -1 20175 1

... couldn't create

tof/pmenu 1 1 black white red

... couldn't create

pd-float: rounding to 2048 points

warning: fx3000-in-2: multiply defined

warning: fx3000-in-2: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-0: multiply defined

warning: fx3000-in-0: multiply defined

pd-float: rounding to 2048 points

pd-float: rounding to 2048 points

warning: fx3000-in-2: multiply defined

warning: fx3000-in-2: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-0: multiply defined

warning: fx3000-in-0: multiply defined

pd-float: rounding to 2048 points

pd-float: rounding to 2048 points

warning: fx3000-in-2: multiply defined

warning: fx3000-in-2: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-1: multiply defined

warning: fx3000-in-0: multiply defined

warning: fx3000-in-0: multiply defined

pd-float: rounding to 2048 points

Audio click occur when change start point and end point using |phasor~| and |tabread4~|

@Junzhe-hou said:

@ddw_music Hi professor!?!? good to see you here!

Yes, it's me -- I almost didn't notice your username

I read your email last week but I'm so confused by your patch -- varispeed-segment:

|noise~|

|

|lop~ 3|

|

|*~ 30|

|

|+~ 1|

This is just a way to generate a modulator for the playback rate. It could be any other modulator (LFO, envelope, anything).

After that, this is multiplied by a sample rate scaling factor.

As you asked jameslo: "if sample rate (in audio setting) changed the result sound different":

-

If the file sample rate is 96 kHz and the soundcard sample rate is 96 kHz, then normal-speed playback is to move forward exactly 1 sample in the file for every output sample.

-

If the file sample rate is 96 kHz and the soundcard sample rate is 48 kHz, then normal-speed playback is to move forward exactly 2 samples in the file for every output sample. (If you play back at 1:1, then the file will sound slower at the lower soundcard sample rate.)

This was one of the big reasons for me to make [soundfiler2] in my abstraction set. It calculates file_sr / system_sr and saves this in a value object named after the ID+"scale". If you multiply the playback rate by this scaling factor, then the file should sound correct at any system sample rate.
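The arithmetic behind that scaling factor, as a small Python sketch (the function names are made up for illustration and are not part of the abstraction set):

```python
def rate_scale(file_sr, system_sr):
    """Scaling factor: file sample rate divided by system sample rate."""
    return file_sr / system_sr


def phase_increment(rate, file_sr, system_sr):
    """File samples to advance per output sample for a given playback rate."""
    return rate * rate_scale(file_sr, system_sr)


print(phase_increment(1.0, 96000, 96000))  # 1.0
print(phase_increment(1.0, 96000, 48000))  # 2.0
print(phase_increment(0.5, 96000, 48000))  # 1.0
```

With the factor applied, a playback rate of 1 always means normal speed, whatever the soundcard rate is.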

(BTW you would have the same issue in SuperCollider: PlayBuf.ar(1, bufnum, rate: 1) will sound different depending on the hardware sample rate, but PlayBuf.ar(1, bufnum, rate: BufRateScale.kr(bufnum)) would sound the same, except maybe for aliasing when downsampling.)

Your method says "L inlet = rate * scale for sample increment", so is the rate always changing?

Yes -- variable-speed playback.

@jameslo "I'm sorry if I just did your student's homework" -- actually this isn't for my class -- independent project. There are still some students who do hard things just because it's fun to overcome challenges

hjh