Plugdata: Brutalist-minimalist SOLVED: How can one do "ratio approximation" - fraction from decimal-number?

Hi,

How can one do ratio approximation, i.e. get a fraction from a decimal number? (I need it for a well-tempered scale that should also be applied to time and modulation.)

A starting patch would be great, because I did not find anything on the web.

Omar Misa once provided one on FB, but it got deleted. It was a small patch using prepend and based on simple logic: you successively try numerators against denominators 1-9 until the fraction matches or is close. The cool part was that you could also show the deviation, and ultimately set what deviation is acceptable or sort it into categories.

IMO, for musical purposes you often don't need a complex object or open object-patch (which is hard to follow without a Ph.D., or unless Pd is almost your only instrument rather than one of sometimes over a hundred plugins), but rather a simpler patch with an explanation of the logic and context behind it (like in the U-he and D16 Group manuals), so you can alter it to suit your needs.

Just my opinion.

..................................

As a tribute to Omar I've shared a patch in the patch section that uses his interpolation technique, updated for 3 values.

Happy patching.

Edit: OK, I am digging into it from scratch. I am now sure that it will be one of the least eloquent patches on the planet, relying heavily on brute force.

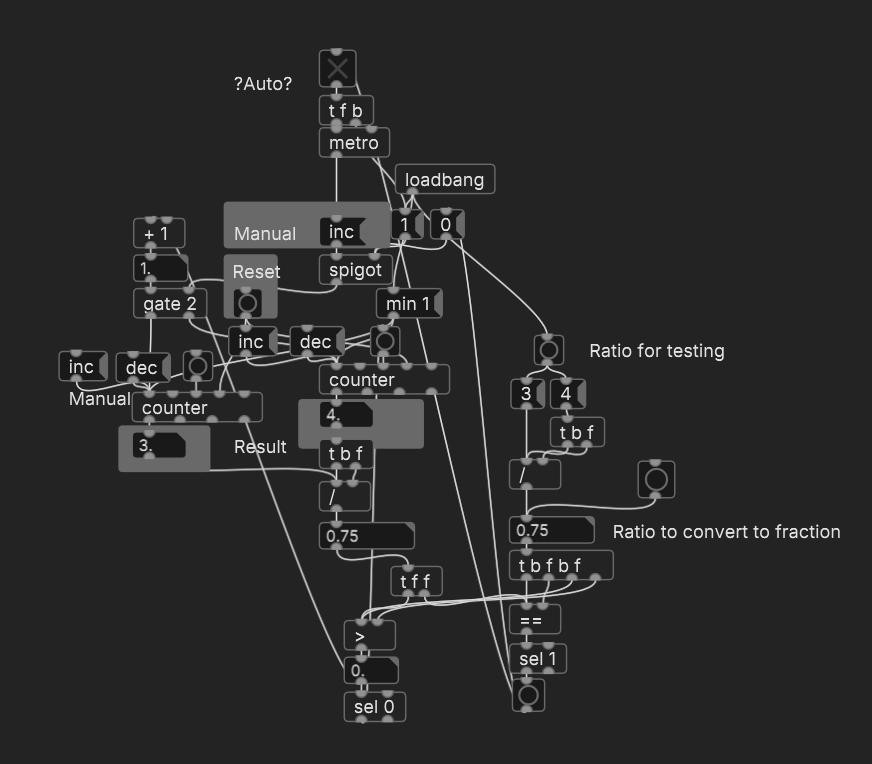

EDIT: As I've said, the least eloquent patch is done: CounterFin.pd

The patch simply raises the denominator. When the fraction falls below the target ratio, it increases the numerator by 1 and starts raising the denominator from 1 again.

The increment/decrement controls let you set +/- 1 manually in a different part, giving an overview of ratios, especially those close by. That helps you build your scale or its function(s), and can serve as modulation etc.

It also makes it easy to set deviation parameters, because the chances of finding a clean fraction for a large ratio are pretty slim.
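For anyone who wants the logic outside of Pd, here is a minimal Python sketch of the brute-force idea described above. It is not a transcription of CounterFin.pd: the function name `approximate_ratio` and the parameters `max_term` and `tolerance` are assumptions made up for illustration. It raises the denominator for each numerator, moves on to the next numerator once the fraction drops below the target, and remembers the fraction with the smallest deviation.

```python
def approximate_ratio(target, max_term=64, tolerance=0.0):
    """Brute-force search for a fraction close to `target`.

    Returns (numerator, denominator, deviation), where deviation is
    numerator / denominator - target. The search stops early as soon
    as the absolute deviation is within `tolerance`.
    """
    if target <= 0:
        raise ValueError("target must be positive")
    best = None
    for numerator in range(1, max_term + 1):
        for denominator in range(1, max_term + 1):
            deviation = numerator / denominator - target
            if best is None or abs(deviation) < abs(best[2]):
                best = (numerator, denominator, deviation)
                if abs(deviation) <= tolerance:
                    return best
            if deviation < 0:
                # The fraction fell below the target; larger denominators
                # only move further away, so try the next numerator.
                break
    return best


# Equal-tempered fifth, accepting a deviation of up to 0.01
fifth = approximate_ratio(2 ** (7 / 12), tolerance=0.01)
```

With a tolerance of 0.01 the equal-tempered fifth comes out as 3/2 and the major third (`2 ** (4 / 12)`) as 5/4. Setting the tolerance to 0 makes the search run through every numerator up to `max_term` and return the closest fraction it saw.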

instance specific dynamic patching documentation assistance

@Muology You can get more info on dynamic patching here........ https://forum.pdpatchrepo.info/topic/10813/collection-of-pd-internal-messages-dynamic-patching/4 and in that thread.

To replace just one object in an abstraction is complicated.... you have to find it and cut it..... using [find( [findagain( and [cut( messages........ then put your new object...... and then connect it.

To connect it you need to know its object number (its place in the order of creation of objects in the patch... starting at 0) .... and the object numbers of the objects you want to connect it to.
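To make the object-number bookkeeping concrete, here is a small Python sketch (not Pd code) that builds the text of dynamic patching messages while tracking creation order. The `obj` and `connect` message formats are the ones you see when opening a saved .pd file in a text editor; the class name `PatchBuilder` and its methods are hypothetical helpers invented for this illustration.

```python
class PatchBuilder:
    """Collects dynamic patching messages and tracks object numbers."""

    def __init__(self):
        self.messages = []
        self.count = 0  # next object number; numbering starts at 0

    def obj(self, x, y, *atoms):
        """Queue an 'obj' message and return the new object's number."""
        text = " ".join(str(atom) for atom in atoms)
        self.messages.append(f"obj {x} {y} {text}")
        number = self.count
        self.count += 1
        return number

    def connect(self, src, outlet, dst, inlet):
        """Queue a 'connect' message between two object numbers."""
        self.messages.append(f"connect {src} {outlet} {dst} {inlet}")


builder = PatchBuilder()
osc = builder.obj(10, 10, "osc~", 440)   # object number 0
gain = builder.obj(10, 40, "*~", 0.1)    # object number 1
builder.connect(osc, 0, gain, 0)         # "connect 0 0 1 0"
```

Every box in the canvas counts towards the numbering (message boxes and comments too), so the numbers only line up if the target canvas is empty before these messages are sent to it.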

That is not really worth the bother.

As @oid says.... it is easier to clear the contents and use a message to replace everything (you can do that in any canvas)..... but...

It is easier still to replace the abstraction with another..... but then you still have to reconnect it within your master patch...... so...

Replace the whole abstraction.... and use [send] [send~] etc. rather than [outlet] [outlet~] etc. so that you don't have to reconnect.

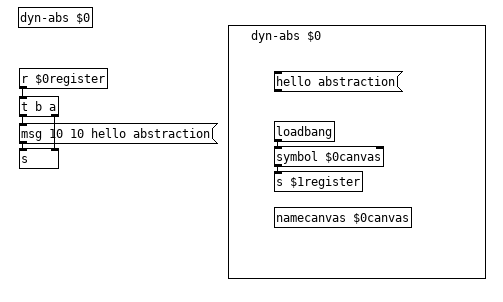

You don't need to use [namecanvas].... you can send messages to patches and sub-patches and arrays by their current name. $0 is an automatically assigned value greater than 1000 assigned to every canvas as it is opened (created). You can get its value by.......

[loadbang]

|

[$0]

|

[numberbox]

...... as it could well change when you reopen the patch (if for example you have opened another patch first).

So it can be used but it is better to actually give fixed arguments to your abstractions using $1 $2 etc using a counter as you create them.

My opening post here....... https://forum.pdpatchrepo.info/topic/9774/pure-data-noob contains a dynamic patch builder....... build_voices.pd .... which will help you to see how it can be done...... although I didn't show how to remove the objects you will be able to calculate their object numbers and cut them.

Further down that thread I attempted to thoroughly explain $0 $1 $2 etc...... "show me dollars".

For your particular purpose...... this.zip

A simple example, that you can build on once you learn a bit more.

Happy dynamic patching....

David.

instance specific dynamic patching documentation assistance

@Muology Generally it is better to stick a subpatch in the abstraction and do your dynamic patching in there, so you can exploit the [clear( msg. The method is about the same, and you don't need to keep track of object numbers as closely, though sometimes that is the only way. iemguts is worth exploring if you are getting into dynamic patching.

dyn-abs.pd

dyn-abs-test.pd

Edit: Forgot the patch message question. PD messages send interface commands like save or open; patch messages are the dynamic patching messages. These are best found by opening patch files in a text editor, or you can use my look abstraction, which gives you the exact messages needed for any given dynamic patching. Just patch together what you want to dynamically create in an empty patch or subpatch, create the [look] abstraction, then select everything but it and control-click the bang; your messages and their connections will print to the log for you to copy. It is important that [look] is created last, otherwise it will screw up your connect messages, but it does number the objects for you, so it is not difficult to adjust them as needed and you can even skip using an empty patch/subpatch without much fuss.

instance specific dynamic patching documentation assistance

@whale-av @ben.wes @ddw_music

I am learning about dynamic patching. The documentation describes instance specific dynamic patching as being able to send messages to a specific instance of an abstraction, by renaming the abstraction using namecanvas. The renaming can be automated using $0 expansion.

I am not familiar with where to locate namecanvas and how to use it and I am not familiar with how to use $0 expansion. Can someone please show a complete visual example of how to use instance specific dynamic patching using the exact instructions given in the documentation link?

https://puredata.info/docs/tutorials/TipsAndTricks#instance-specific-dynamic-patching

And can someone show a visual example of how to use dynamic patching to dynamically create instances of an object in an abstraction? For example, if I create a patch with a sine oscillator that can be assigned a frequency and an amplitude and has a dac object, and then make that patch an abstraction, how would I use dynamic patching to let my GUI assign a new sound source in place of the sine oscillator? Objects like the switch object have limitations. I would want to be able to assign/unassign any number of new sound sources in place of the sine oscillator, for example a phasor object, a noise object, or even a synthesizer patch abstraction. Suggestions for other ways to do this are welcome, but I also want explanations of how to do it with dynamic patching, since that is what I am learning.

General Dynamic Patching

What is the difference between pd messages and patch messages? Are they both used for dynamic patching? And how are they different from instance specific dynamic patching?

https://puredata.info/docs/tutorials/TipsAndTricks#pd-messages

https://puredata.info/docs/tutorials/TipsAndTricks#patch-messages

Reproducing csound patch in Pd

Hi all,

I found a Csound patch synthesizing sounds for a handpan on this page and it sounds great.

I then wanted to interface it with my MIDI controller, so rather than learning Csound from scratch, I embedded the CSD synth into a Pd patch using the [csound6~] external. Works great.

The next step, however, was to use this Pd patch on my smartphone via MobMuPlat, or in a DAW using plugdata (my attempt at generating a VST with Cabbage showed very poor CPU performance), but both are based on libpd and don't support [csound6~].

So I've taken the approach of reproducing the csound patch in vanilla Pd, which was very instructive. I've followed the same variable, function names and logic, read a lot of documentation, and came up with the patch attached.

handpan_comparison_csd_pd.zip

However, the sound is not quite the same. There are a lot of high frequencies in the Pd version, which I couldn't quite eliminate even using up to 10 successive [lop~ 900] (I've left only one in the patch for now), and more generally I can't figure out which differences between the Csound patch and the Pd patch may have this effect. Note that I am an expert in neither Csound nor filters; in particular I don't quite trust the Butterworth3 filter I hacked together. Can I even realistically expect that the same algorithmic logic in Csound and Pd would produce the exact same sound?

So I'm now asking here for help, in case some experts can find the time to review what I've done in Pd, compare it with the Csound patch, and suggest improvements. I know it is quite tedious work but I am hopeful. Thanks in advance to anyone who gives it a try!


(Note that this patch was embedded in a more complicated one including interfacing with the midi controller, definition of various scales, debugging/monitoring etc., which I've removed before sharing it here, but I may have forgotten some bits here and there, let me know if it complicates the review!)

COMPUTATIONAL INTENSITY OF PD

@4poksy My largest patch is 230k and is a single patch which uses no abstractions* or even audio rate objects, and it can eat every bit of ram and cpu my computer can give it without much fuss. I can probably write a small patch with just a handful of objects which can eat my cpu and ram. Knowing the resource use of individual objects will not be very useful; how you use them matters more and will affect resource usage. GUI and symbols can drag pd down even if the rest of the patch is fairly small. Your time would probably be better spent improving your patching skills so you can make more efficient use of pd; a slight change in methodology can have large effects on resource use. The first version of my 230k patch was only 84k and considerably less capable, but hit the wall much more easily than my current version. The difference is I learned how pd works and how to work with it, and as a result my patches have gotten more efficient.

*Technically it uses one abstraction, but it is a small abstraction of just a dozen objects which is dynamically created as needed. The patch can eat my computer's resources with just one instance of the abstraction or run perfectly fine with 100 instances, depending on what I ask of the patch.

COMPUTATIONAL INTENSITY OF PD

@4poksy The block size of 64 samples cannot be modified for links between patches and sub-patches.... [inlet~] etc. ...nor for final input and output.... [dac~] etc.

It can be modified within a patch or sub patch using [block~].

Patches are very small on disk and in memory..... your os will tell you the size.... they just contain text.

The biggest patch (hundreds of patches running together) that I have ever built uses less than 100K on disk. The objects you call are loaded to and run within the Pd binary as you open the patch.

Pd itself uses about 8.5K of ram with no patches open, and the gui program "wish" just over 15K (in windows 7).

When that massive 100K patch is running Pd expands to use 76K of ram and wish reduces its space to use 12K.

Arrays are the size you choose, and can be large if you are storing audio..

Pd can (sort of) report its cpu load....... dsp~.zip

For more on what actually happens "under the hood"..... http://puredata.info/docs/manuals/pd/x2.htm

.... but that document is also in your Pd installation in the "doc" folder........ /doc/1.manual/x2.htm

Pd builds a "super-patch" from all the patches you open, and the audio thread is rebuilt as you change or add objects and patches. That means that it is always running, but there is no buffer between patches unless you make a mistake..... see the doc above...

You can also use [block~] or [switch~] to turn off audio processing within individual patches and sub-patches...... useful for saving cpu load when switching between effects.

Welcome to the forum...!!

David.

P.S I am usually wrong about something and others will tell you where.

ofelia on raspberry pi?

Hi,

I am trying to get ofelia to run on a couple of rpi. Right now I am trying a rpi 3B+ running https://blokas.io/patchbox-os/

I run ofelia with the ofelia-fast-prototyping abs on my mac successfully.

Following install instructions here https://github.com/cuinjune/Ofelia

after running

sudo ./install_dependencies.sh

it ends like this:

detected Raspberry Pi

installing gstreamer omx

Reading package lists... Done

Building dependency tree... Done

Reading state information... Done

gstreamer1.0-omx is already the newest version (1.0.0.1-0+rpi12+jessiepmg).

The following package was automatically installed and is no longer required:

raspinfo

Use 'sudo apt autoremove' to remove it.

0 upgraded, 0 newly installed, 0 to remove and 0 not upgraded.

Updating ofxOpenCV to use openCV4

sed: can't read /home/patch/Documents/Pd/externals/addons/ofxOpenCv/addon_config.mk: No such file or directory

sed: can't read /home/patch/Documents/Pd/externals/addons/ofxOpenCv/addon_config.mk: No such file or directory

When running the example patches in Pd I get this in PD console:

opened alsa MIDI client 130 in:1 out:1

JACK: cannot connect input ports system:midi_capture_1 -> pure_data:input_2

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia d $0-of

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia d $0-of

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia d $0-of

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia d $0-of

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia d $0-of

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia f ;

ofBackground(20) ;

ofSetSmoothLighting(true) ;

ofSetSphereResolution(24) ;

local width , height = ofGetWidth() * 0.12 , ofGetHeight() * 0.12 ;

sphere = ofSpherePrimitive() ;

sphere:setRadius(width) ;

icoSphere = ofIcoSpherePrimitive() ;

icoSphere:setRadius(width) ;

plane = ofPlanePrimitive() ;

plane:set(width * 1.5 , height * 1.5) ;

cylinder = ofCylinderPrimitive() ;

cylinder:set(width * 0.7 , height * 2.2) ;

cone = ofConePrimitive() ;

cone:set(width * 0.75 , height * 2.2) ;

box = ofBoxPrimitive() ;

box:set(width * 1.25) ;

local screenWidth , screenHeight = ofGetWidth() , ofGetHeight() ;

plane:setPosition(screenWidth * 0.2 , screenHeight * 0.25 , 0) ;

box:setPosition(screenWidth * 0.5 , screenHeight * 0.25 , 0) ;

sphere:setPosition(screenWidth * 0.8 , screenHeight * 0.25 , 0) ;

icoSphere:setPosition(screenWidth * 0.2 , screenHeight * 0.75 , 0) ;

cylinder:setPosition(screenWidth * 0.5 , screenHeight * 0.75 , 0) ;

cone:setPosition(screenWidth * 0.8 , screenHeight * 0.75 , 0) ;

pointLight = ofLight() ;

pointLight:setPointLight() ;

pointLight:setDiffuseColor(ofFloatColor(0.85 , 0.85 , 0.55)) ;

pointLight:setSpecularColor(ofFloatColor(1 , 1 , 1)) ;

pointLight2 = ofLight() ;

pointLight2:setPointLight() ;

pointLight2:setDiffuseColor(ofFloatColor(238 / 255 , 57 / 255 , 135 / 255)) ;

pointLight2:setSpecularColor(ofFloatColor(0.8 , 0.8 , 0.9)) ;

pointLight3 = ofLight() ;

pointLight3:setPointLight() ;

pointLight3:setDiffuseColor(ofFloatColor(19 / 255 , 94 / 255 , 77 / 255)) ;

pointLight3:setSpecularColor(ofFloatColor(18 / 255 , 150 / 255 , 135 / 255)) ;

material = ofMaterial() ;

material:setShininess(120) ;

material:setSpecularColor(ofFloatColor(1 , 1 , 1)) ;

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia f ;

pointLight = nil ;

pointLight2 = nil ;

pointLight3 = nil ;

collectgarbage() ;

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia f ;

local width , height , time = ofGetWidth() , ofGetHeight() , ofGetElapsedTimef() ;

pointLight:setPosition((width * 0.5) + math.cos(time * 0.5) * (width * 0.3) , height / 2 , 500) ;

pointLight2:setPosition((width * 0.5) + math.cos(time * 0.15) * (width * 0.3) , height * 0.5 + math.sin(time * 0.7) * height , -300) ;

pointLight3:setPosition(math.cos(time * 1.5) * width * 0.5 , math.sin(time * 1.5) * width * 0.5 , math.cos(time * 0.2) * width) ;

... couldn't create

/home/patch/Documents/Pd/externals/ofelia/ofelia.l_arm: libboost_filesystem.so.1.67.0: cannot open shared object file: No such file or directory

ofelia f ;

local spinX = math.sin(ofGetElapsedTimef() * 0.35) ;

local spinY = math.cos(ofGetElapsedTimef() * 0.075) ;

ofEnableDepthTest() ;

ofEnableLighting() ;

pointLight:enable() ;

pointLight2:enable() ;

pointLight3:enable() ;

material:beginMaterial() ;

plane:rotateDeg(spinX , 1 , 0 , 0) ;

plane:rotateDeg(spinY , 0 , 1 , 0) ;

plane:draw() ;

box:rotateDeg(spinX , 1 , 0 , 0) ;

box:rotateDeg(spinY , 0 , 1 , 0) ;

box:draw() ;

sphere:rotateDeg(spinX , 1 , 0 , 0) ;

sphere:rotateDeg(spinY , 0 , 1 , 0) ;

sphere:draw() ;

icoSphere:rotateDeg(spinX , 1 , 0 , 0) ;

icoSphere:rotateDeg(spinY , 0 , 1 , 0) ;

icoSphere:draw() ;

cylinder:rotateDeg(spinX , 1 , 0 , 0) ;

cylinder:rotateDeg(spinY , 0 , 1 , 0) ;

cylinder:draw() ;

cone:rotateDeg(spinX , 1 , 0 , 0) ;

cone:rotateDeg(spinY , 0 , 1 , 0) ;

cone:draw() ;

material:endMaterial() ;

ofDisableLighting() ;

ofDisableDepthTest() ;

... couldn't create

Thankful for help!

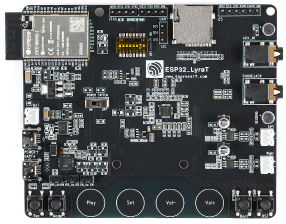

espd - tutorial

Hi all!

I had some time during vacations and I wanted to try running Miller's espd version. Here's a small tutorial.

The development board I bought: ESP32-LyraT > Mouser | Aliexpress

INSTALL ESP-IDF (IoT development framework)

mkdir ~/esp && cd ~/esp

git clone -b v4.4.2 --recursive https://github.com/espressif/esp-idf.git

cd esp-idf

./install.sh esp32

INSTALL ESP-ADF (audio development framework)

cd ~/esp && git clone --recursive https://github.com/espressif/esp-adf.git

SETUP ENV VAR

export ADF_PATH=~/esp/esp-adf && . ~/esp/esp-idf/export.sh

ESPD

download espd: http://msp.ucsd.edu/ideas/espd/

cd espd

git clone https://github.com/pure-data/pure-data.git pd

cd pd

git checkout 05bf346fa32510fd191fe77de24b3ea1c481f5ff

git apply ../patches/*.patch

Edit main/espd.h and put in your wifi credentials and the IP of the computer that will control espd:

#define CONFIG_ESP_WIFI_SSID "..."

#define CONFIG_ESP_WIFI_PASSWORD "..."

#define CONFIG_ESP_WIFI_SENDADDR "...."

mv sdkconfig.lyrat sdkconfig

idf.py build

idf.py -p /dev/ttyUSB0 flash (hold boot and then press reset on lyrat)

idf.py -p /dev/ttyUSB0 monitor (Ctrl+] to exit)

HOST

- open pd installed on (SENDADDR)

- open test-patch/host-patch.pd

If connected this message (ESPD: sendtcp: waiting for socket) will stop and you will see the mac address in the host patch.

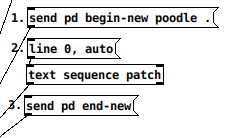

1- click on [send pf begin-new poodle .<

2- click on [line 0, auto< to send the defined patch (esp-patch.pd)

3 - click [send pd end-new<

4 - connect headphone, play with [send f 440< and [send f 660<

Custom patch:

- Use mono [dac~ 1] only

- Add this to your patch:

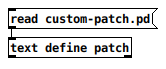

- in host-patch.pd change [read your-patch< -> [text define patch], then redo steps 1 to 3

TODO

I would love to play more with the code; right now I am not able to load a complex vanilla patch. Using the AUX input (or the built-in microphone) would also be awesome, but I'm wondering about the round-trip latency (would it be under 15ms?).

Stop Stream of Bangs after threshold

@dkeller Even working with some of the Jazz "greats".... or should that be "grates"?..... I have met almost none that can truly improvise. A huge learned repertoire of perfect riffs seems to inhibit their ability to really innovate.

This could perhaps be a great learning tool.

Pd looks in its standard directory (Pd/bin) and the externals folder (Pd/extra) and the patch folder as it opens a patch. You can add other paths to search in Pd preferences.

If you put an external or an abstraction (like [once]... abstraction because just a patch... not compiled) in the same folder as the patch it will work just with that patch.

If you put it in a sub-folder then in the patch you will have to tell Pd where to look..... so [subfolder/once] in your patch instead of [once]....... or declare the path in the patch by placing an object [declare -path subfolder] in the patch.

If you want to use it everywhere then dump it in the extra folder...... which means you can put it in every patch..... BUT..... when you share the patch you will need to remember to bundle it or the recipient will not be able to create the object.

David.