How to keep number until turn knob

It gets into the weeds. Basically, you have a problem if your knob isn't a digital encoder, which sends things like +1 or -1 to move numbers; typically these also have a push button.

If your knob is a potentiometer (a slider or pot, like the knobs on an electric guitar), it only gives absolute position values and will jump, like on the left.

If you use one of those, like I am on the right (and I apologize for it seeming messy, I'm cleaning up little problems), the knob is still a potentiometer. So even though I changed it into something that compares whether it's going higher or lower, it ends up at weird places. To do it right this needs to be an encoder type of knob (which I'm simulating by just hitting the 1 and -1; I'm a little surprised that acting like an encoder isn't in [knob] yet).

knob-problems-pot-vs-encoder.pd

Midi Controller for PureData: experiences, recommendations, things to watch out for

@fina said:

I'm especially worried about multi mapping the controls to different pages/layers and how the controllers behave if the pot/encoder is in a different position

This can be a pain. Faders (unless they are motorized) will jump: if you are at MIDI value 10 and switch to a different mapping that has that fader at max, then when you move it the value will jump down to 10. This is quite annoying, though it can be exploited, and it was a common trick on the early synths with patch memory that used analog controls; generally it was more a hindrance than a help. Most modern controllers use endless rotary encoders, which will update their values. In absolute mode you map out the controls on the controller itself and make presets; switch the mapping and the new values are there, so there is no jump when you turn the knob. The disadvantage is that they are generally limited to the low-resolution 0-127 MIDI values. In relative mode the encoder sends only a plus or minus value, so you can have unlimited resolution, but you need to do more in Pd, since you have to add or subtract those plus or minus values from a stored value.
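The relative-mode bookkeeping described above can be sketched outside Pd. This is only a minimal illustration in Python, not the abstractions mentioned in this thread; the class name `RelativeParam`, the `step` size and the 0..1 range are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class RelativeParam:
    """A stored value moved by relative encoder ticks (hypothetical helper)."""
    value: float = 0.0
    step: float = 0.001  # assumed size of one tick; much finer than 1/127
    lo: float = 0.0      # assumed lower limit
    hi: float = 1.0      # assumed upper limit

    def tick(self, delta: int) -> float:
        """Add a signed tick count (+1, -1, +3, ...) and clamp to [lo, hi]."""
        self.value = min(self.hi, max(self.lo, self.value + delta * self.step))
        return self.value


cutoff = RelativeParam(value=0.5)
cutoff.tick(+1)     # one click clockwise nudges the stored value up
cutoff.tick(-1000)  # a long turn the other way stops at the lower limit
```

Because the controller only ever sends deltas, switching to another mapping cannot cause a jump: the next tick is simply applied to whatever value that mapping has stored.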

Personally I find mapping controls on a controller to be slow, and I do need greater resolution, so I do all the mapping and the like in Pd with relative mode. I made some abstractions to take care of all the work, and they do some useful things: for example, the first tick is ignored and just highlights the parameter being edited, so when you forget what knob does what you can find your way without altering anything that is going on. This also means you are not limited by the controller's memory for mappings; I just do that in the patch, so I have a virtually unlimited number of mappings. I use an Arturia Beatstep as a controller, with 16 pads and 16 encoders, so each pad selects a mapping, giving me 256 parameters I can edit. If I somehow needed more I could arrange the pads as 8 banks of 8 and get 1024, but I have yet to need to do that. It also has a sequencer mode, which is very limited but handy as an easy way to test sounds out. I can upload these abstractions if you decide on going with relative encoders. It has been on my list to get those uploaded, but I tend to drag my feet on documentation, so they have yet to get a proper upload; they have been uploaded a few times in various threads, just not everything with actual documentation.
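As a rough sketch of that scheme (a pad selects a page of 16 parameters, and the first tick on a knob only highlights it), here is an illustration in Python. It is not the actual Pd abstractions; `MappedEncoders` and its method names are hypothetical.

```python
class MappedEncoders:
    """Pad-selected pages of encoder parameters (hypothetical sketch)."""

    def __init__(self, pads: int = 16, encoders: int = 16):
        self.encoders = encoders
        self.values = [0] * (pads * encoders)  # 16 x 16 = 256 parameters
        self.page = 0
        self.focused = None  # index of the parameter currently highlighted

    def select_pad(self, pad: int) -> None:
        """Switch to another mapping; stored values are kept, so nothing jumps."""
        self.page = pad
        self.focused = None

    def turn(self, encoder: int, delta: int):
        """Return (parameter index, value, changed) for one relative tick."""
        index = self.page * self.encoders + encoder
        if self.focused != index:
            # First tick on a knob: only highlight the parameter.
            self.focused = index
            return index, self.values[index], False
        self.values[index] += delta
        return index, self.values[index], True
```

With 16 pads and 16 encoders, `select_pad(2)` followed by `turn(3, +1)` addresses parameter 35 and leaves it unchanged; the second tick on the same knob is the first one that edits it.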

One thing to keep in mind: some controllers have software editors to make it easier to map stuff out. These software editors often do not work in Linux, even in Wine, so if you use Linux you will want to make sure that the sysex commands have been published, either by the maker or by someone who has sat down and figured them all out. For whatever reason some companies just will not release the sysex (Arturia being one of them). It is not terribly difficult to figure out the sysex on your own, just time consuming.

Midi Rotary Knob Direction Patch/Algorythm?

Hey everybody,

Sorry, for a lot of text. But the bold text at the bottom is my main question. The rest will help you to get a better understanding of my situation.

you helped me so much, with my last question here (the Faders are working dope now):

https://forum.pdpatchrepo.info/topic/13849/how-to-smoothe-out-arrays/25

I am doing a Steinberg Houston to Mackie Control emulation at the moment, to use my controller with other DAWs than Cubase/Nuendo. Will upload it to the internet community, when I am finished for the handful of people that maybe are also using this controller.

I made good progress:

I got the faders and the normal knobs to work, and the display puts out information. But it has bugs, because the LCD screen of the Houston has 40 characters per line and the Mackie Universal Pro has 56 characters. So I made a list algorithm which deletes spaces from the Mackie message until the message fits on the 40-character line. Maybe there is a method which would work better, but this subject eats too much time for me at the moment, and it works roughly okay. One definitely gets some helpful information on the screen from the DAW.
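For comparison, the space-deleting idea can be sketched in Python. This is an assumption about one possible variant, not the patch described above: it removes one space at a time from the longest run of spaces, so column gaps shrink evenly and single separators between words survive, and it truncates only as a last resort. The function name `squeeze_to_width` is hypothetical.

```python
import re


def squeeze_to_width(text: str, width: int = 40) -> str:
    """Shrink runs of spaces until text fits in width characters."""
    while len(text) > width:
        # Collect (run length, start index) for every run of 2+ spaces.
        runs = [(m.end() - m.start(), m.start())
                for m in re.finditer(r" {2,}", text)]
        if not runs:
            break  # nothing left to squeeze
        _, start = max(runs)  # longest run (ties: the rightmost one)
        text = text[:start] + text[start + 1:]
    # Truncate if still too long, pad if shorter than the display line.
    return text[:width].ljust(width)


line = "Vol".ljust(28) + "Pan".ljust(28)  # a 56-character line
print(repr(squeeze_to_width(line)))
```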

The faders, rotary knobs and normal knobs are the most important parts of this controller, I guess. The faders are working fine, as I mentioned above, but there is a problem with the rotary knobs which I can't handle alone, and I hope you can help me.

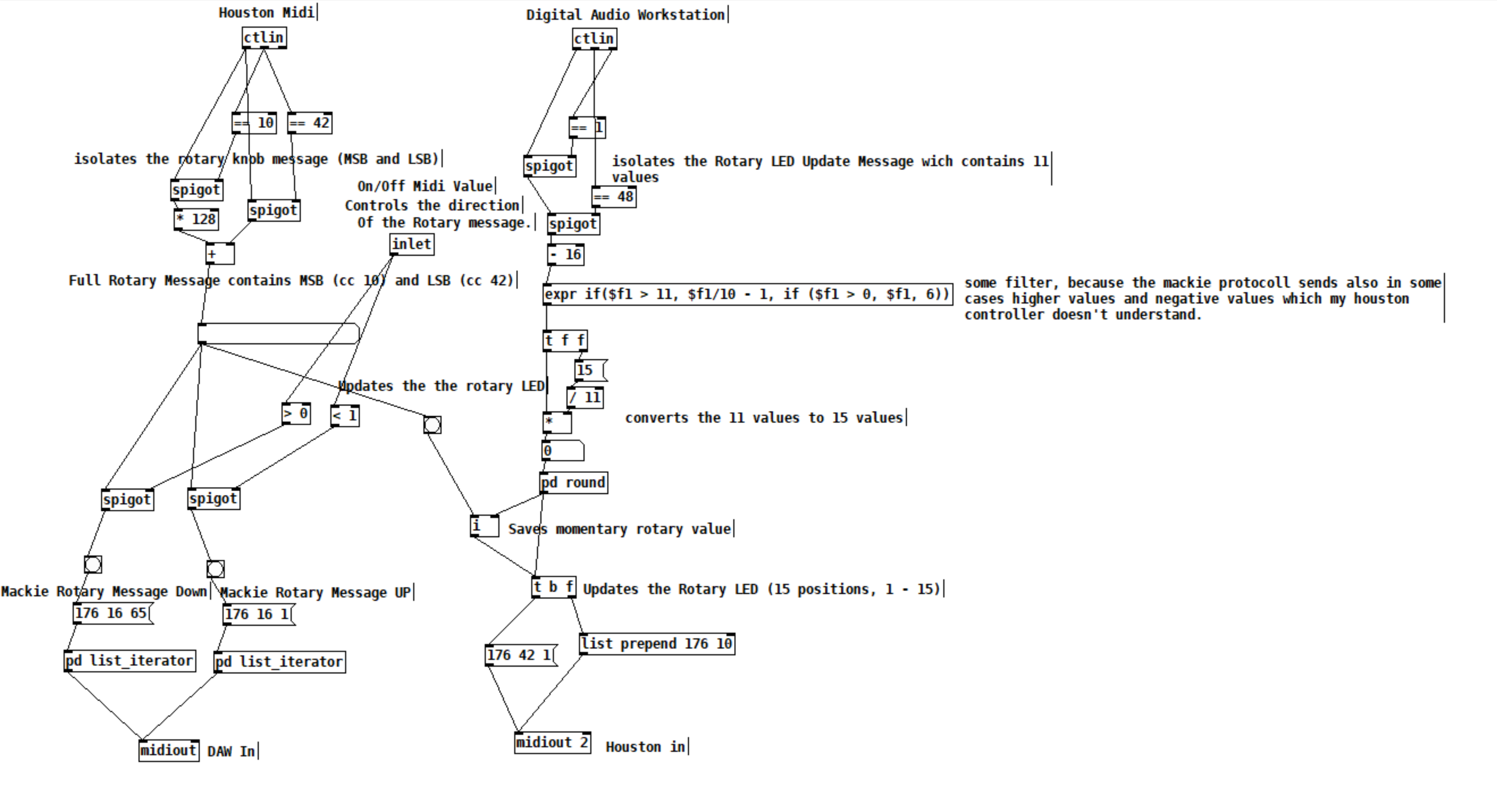

The problem is that the Mackie controller sends simple clicks to the DAW. If you turn a rotary knob, it sends out a number of MIDI messages:

If you turn it right, it sends MIDI messages which contain the value 1, and if you turn it down it sends messages which contain the value 65.

"When the VPots are rotated rapidly, a message equal to the number of clicks is sent."

BUT the Houston controller instead sends values like its faders do, with 15 (MSB) and 128 (LSB) values. AND it updates the rotary limit by itself. So if I turn a rotary, it updates its LEDs and stops sending MIDI messages when it reaches the maximum or minimum value. So I made this patch as a momentary state:

The DAW sends 11 values for the Houston LEDs; 11 is max and 1 is min. This is good: I send these values to my Houston controller and can update the rotary values and LEDs.

With these updated values from the DAW, I can force my rotary knobs not to stop sending values, because they are set to the values which the DAW sends every time I turn a knob. With this method I got it to imitate a Mackie rotary knob. Every time the Houston rotary value changes, it sends Mackie "MIDI click values" according to the amount by which the Houston's MIDI value changed.

BUT the problem is, that this is working only in one direction. Now my main question:

How can I make Pure Data know whether I am turning my knob to the left or to the right? There is also the problem, which I mentioned above, that I reset the momentary value every time I move the rotary, so that I get an unlimited number of possible rotary "clicks". Also, the MIDI values which the Houston sends aren't perfectly smooth. It works fine, but it isn't the case that, if you move a rotary in one direction, every value is strictly lower or higher than the one before.

I think I maybe need an algorithm which checks whether the values are getting higher or lower over a time period and then sends out bangs on two separate outlets: for example, the left outlet for lower values and the right outlet for higher values. It should also detect whether I move the rotary fast or slow, so constant smoothing or a clocked bang is not an option. This is definitely too complicated for me. I have no idea, and what I tried didn't work.
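One way to think about the question, sketched in Python rather than as a Pd patch: compare each incoming value with the last accepted one, treat a small move against the current direction as jitter, and use the size of the difference as the click count, so fast turns produce more clicks without any clock. Everything here is an assumption for illustration: the class name `DirectionDetector`, the `hysteresis` and `max_clicks` parameters, and the encoding of n left clicks as 64 + n (extrapolated from the 1 and 65 values quoted above).

```python
class DirectionDetector:
    """Turn absolute knob values into Mackie-style click values (sketch)."""

    def __init__(self, hysteresis: int = 2, max_clicks: int = 15):
        self.hysteresis = hysteresis  # reversals smaller than this are ignored
        self.max_clicks = max_clicks  # assumed cap on clicks per message
        self.last = None              # last accepted absolute value
        self.direction = 0            # +1 = right/up, -1 = left/down

    def recenter(self, value: int) -> None:
        """Call when the knob is reset to the value sent back by the DAW."""
        self.last = value

    def feed(self, value: int):
        """Return a click value (1..15 right, 65..79 left) or None."""
        if self.last is None:
            self.last = value
            return None
        delta = value - self.last
        if delta == 0:
            return None
        sign = 1 if delta > 0 else -1
        # A small step against the current direction is treated as jitter.
        if self.direction and sign != self.direction and abs(delta) < self.hysteresis:
            return None
        self.direction = sign
        self.last = value
        clicks = min(abs(delta), self.max_clicks)
        return clicks if sign > 0 else 64 + clicks
```

In a patch, the two cases of the return value would correspond to the two outlets (below 64 for right, 64 and above for left). A slow turn gives a stream of 1s or 65s, and a fast turn gives larger values because consecutive readings are further apart.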

Would be super cool, if you could help me out again.

Why does Pd look so much worse on linux/windows than in macOS?

@ddw_music said:

I still think Tcl/Tk has failed to keep up with modern standards, and I have my doubts that it will ever catch up. So, I still think that Pd hampers its own progress by hitching itself to the Tcl/Tk wagon.

I think most people agree with you, myself included. Tk is clunky but it's in the current code base and has been kept so far since it works, although admittedly barely in many cases.

The last couple of posts here are encouraging.

I know development is slow, but it's really working against inertia. Momentum has been growing the last years and that's mostly due to more effective collaboration to tackle some of these issues. I think finding a sustainable approach to the GUI is one of the largest ones, so is taking a while to grow.

IMO a fair comparison is: normal screen size in Linux vs normal screen size in Mac.

Nope. See above.

Developer vs user perspective. The user sees clean diagonals on Mac, and jagged diagonals on Linux or Windows, and I think it's perfectly legitimate for the user not really to care that it's the OS rather than the drawing engine that makes it so in Mac. (It's correct, of course, to point out that the diagonals on a retina display will look smoother than antialiased diagonals without retina.)

Yup, you are totally right. I didn't mean to imply the Linux screenshot looked good since "that's the way it is" just more that it looks so much better on macOS since the screenshot is likely double the resolution as well.

I started using Pd on Windows circa 2006 then jumped to Linux for many years, then to macOS. My feeling was that Pd worked best on Linux but a lot of that has caught up as the underlying systems have developed but in some ways the Linux desktop has not (for many reasons, good and bad).

- Your point (c) already happened... you can use Purr Data (or the new Pd-L2ork etc). The GUI is implemented in Node/Electron/JS (I'm not sure of the details). Is it tracking Pd vanilla releases?... well that's a different issue.

I did try Purr Data, but abandoned it because (at the time, two years ago), Purr Data on Mac didn't support Gem and there were no concrete plans to address that. This might have changed in the last couple of years (has it? -- I've looked at https://agraef.github.io/purr-data/ and https://github.com/agraef/purr-data but it seems not quite straightforward to determine whether Gem+Mac has been added or not).

That's a result of many of the forks adopting the "kitchen sink" monolithic distribution model inherited from Pd-extended. The user gets the environment and many external libraries all together in one download, but the developers have to integrate changes from upstream themselves and build for the various platforms. It's less overhead for the user but more for the developers, which is why integrating a frankly large and complicated external like GEM is harder.

I'm happy to download the deken GEM package because I know the IEM people have done all the craziness to build it (it's a small nightmare of autotools/makefiles due to the amount of plugins and configuration options). I am also very happy to not have to build it and provide it to other users but in exchange we ask people to do that extra step and use deken. I recognize that the whole usage of [declare] is still not as easy or straightforward for many beginners. There are some thoughts of improving it and feedback for people in the teaching environment has also pushed certain solutions (ie. Pd Documents folder, etc).

Tracking vanilla releases was the other issue. A couple of years ago, [soundfiler] in Purr Data was older and didn't provide the full set of sound file stats. That issue had been logged and I'm sure it's been updated since then. "Tracking" seemed to be manual, case-by-case.

By "tracking" I am referring to a fork following the new developments in Pd-vanilla and integrating the changes. This becomes harder if/when the forks deviate with internal changes to the source code, so merging some changes then has to be done "by hand" which slows things down and is one reason why a fork may release a new version but be perhaps 1 or two versions behind Pd vanilla's internal objects, ie. new [soundfiler] right outlet, [clone] object, new [file] object, etc.

- As for updating Tk, it's probably not likely to happen as advanced graphics are not their focus. I could be wrong about this.

I agree that updating the GUI itself is the better solution for the long run. I also agree that it's a big undertaking when the current implementation is essentially still working fine after over 20 years...

Well, I mean, OK up to a point. There are things like the Tcl/Tk open/save file dialog, which is truly abhorrent in Linux.

Hah yeah, I totally understand. My first contribution to Pd(-extended) was to find out how to disable showing hidden files in the open/save panels on Linux. You would open a dialog, it defaulted to $HOME, then you had to wade through 100 dot folders. Terrible! Who did this and why? Well it wasn't on purpose, it was just the default setting for panels on Tk, and I added a little bit of Tcl to turn it off and show a "Show hidden files/folders" button below.

OTOH I have a colleague at work whose internal toolkit still uses Motif. Why? Well it still works and he's the only user so he's ok with it. I'd say that Tk also still uses Motif... but there are many users and they have much different expectations. ;P

Some puzzling decisions here and there -- for example, when you use the mouse to drag an object to another location, why does it then switch to edit the text in the object box? To me, this is a disruptive workflow -- you're moving an object, not editing the object, but suddenly (without warning) you're forcibly switched into editing the object, and it takes a clumsy action of clicking outside the object and dragging the mouse into the object to get back to positioning mode. I feel so strongly about it that I even put in a PR to change that behavior (https://github.com/pure-data/pure-data/pull/922), which has been open for a year and a half without being either merged or rejected (only argued against initially, and then stalled).

Ah yes, I forgot about this one. It wasn't rejected, just came at a time when there were lots of other things shouting much louder, ie. if this isn't fixed Pd is broken on $PLATFORM. I would say that, personally, your initial posting style turned me off but I recognize where the frustration came from. I also appreciate making the point AND providing a solution, so the ball is in our court for sure. I will take a look at this soon and try it out.

"Still working for 20 years" but you could also say, still clunky for 20 years, with inertia when somebody does actually try to fix something.

Another approach would be to try to bring improvements to Tcl/Tk itself but the dev community is a little opaque, more so than Pd. However I admit to not having put a ton of time into it, other than flagging our custom patches to the TK macOS guy on Github.

WRT to the rest, any architectural improvements (e.g. drawing abstractions so that the Pd core isn't so tightly coupled to Tcl/Tk) that make it more feasible to move forward are great, would love to see it.

As would I... we are preparing for a major exhibition opening in December, but I will have some time off after and I may take a stab at a technical demo for this (among other things) then pull in some other perspectives / testers. It has been an itch I have wanted to scratch since, at least Pd Con 2016 in NYC.

Why does Pd look so much worse on linux/windows than in macOS?

Howdy all,

I just found this and want to respond from my perspective as someone who has spent by now a good amount of time (paid & unpaid) working on the Pure Data source code itself.

I'm just writing for myself and don't speak for Miller or anyone else.

Mac looks good

The antialiasing on macOS is provided by the system and utilized by Tk. It's essentially "free" and you can enable or disable it on the canvas. This is by design as I believe Apple pushed antialiasing at the system level starting with Mac OS X 1.

There are even some platform-specific settings to control the underlying CoreGraphics settings which I think Hans tried but had issues with: https://github.com/pure-data/pure-data/blob/master/tcl/apple_events.tcl#L16. As I recall, I actually disabled the font antialiasing as people complained that the canvas fonts on mac were "too fuzzy" while Linux was "nice and crisp."

In addition, the last few versions of Pd have had support for "Retina" high resolution displays enabled and the macOS compositor does a nice job of handling the point to pixel scaling for you, for free, in the background. Again, Tk simply uses the system for this and you can enable/disable via various app bundle plist settings and/or app defaults keys.

This is why the macOS screenshots look so good: antialiasing is on and it's likely the rendering is at double the resolution of the Linux screenshot.

IMO a fair comparison is: normal screen size in Linux vs normal screen size in Mac.

Nope. See above.

It could also just be Apple holding back a bit of the driver code from the open source community to make certain linux/BSD never gets quite as nice as OSX on their hardware, they seem to like to play such games, that one key bit of code that is not free and you must license from them if you want it and they only license it out in high volume and at high cost.

Nah. Apple simply invested in antialiasing via its accelerated compositor when OS X was released. I doubt there are patents or licensing on common antialiasing algorithms which go back to the 60s or even earlier.

tkpath exists, why not use it?

Last I checked, tkpath is long dead. Sure, it has a website and screenshots (uhh Mac OS X 10.2 anyone?) but the latest (and only?) Sourceforge download is dated 2005. I do see a mirror repo on Github but it is archived and the last commit was 5 years ago.

And I did check on this, in fact I spent about a day (unpaid) seeing if I could update the tkpath mac implementation to move away from the ATSU (Apple Type Support) APIs which were not available in 64 bit. In the end, I ran out of energy and stopped as it would be too much work, too many details, and likely to not be maintained reliably by probably anyone.

It makes sense to help out a thriving project but much harder to justify propping something up that is barely active beyond "it still works" on a couple of platforms.

Why aren't the fonts all the same yet?!

I also despise how linux/windows has 'bold' for default

I honestly don't really care about this... but I resisted because I know so many people do and are used to it already. We could clearly and easily make the change but then we have to deal with all the pushback. If you went to the Pd list and got an overwhelming consensus and Miller was fine with it, then ok, that would make sense. As it was, "I think it should be this way because it doesn't make sense to me" was not enough of a carrot for me to personally make and support the change.

Maybe my problem is that I feel a responsibility for making what seems like a quick and easy change to others?

And this view is after having put an inordinate amount of time into just getting (almost) the same font on all platforms, including writing and debugging a custom C Tcl extension just to load arbitrary TTF files on Windows.

Why don't we add abz, 123 to Pd? xyzzy already has it?!

What I've learned is that it's much easier to write new code than it is to maintain it. This is especially true for cross platform projects where you have to figure out platform intricacies and edge cases even when mediated by a common interface like Tk. It's true for any non-native wrapper like Qt, wxWidgets, web browsers, etc.

Actually, I am pretty happy that Pd's only core dependencies are Tcl/Tk, PortAudio, and PortMidi, as it greatly lowers the number of vectors for bitrot. That being said, I just spent about 2 hours fixing the help browser for mac after trying Miller's latest 0.52-0test2 build. The end result is 4 lines of code.

For a software community to thrive over the long haul, it needs to attract new users. If new users get turned off by an outdated surface presentation, then it's harder to retain new users.

Yes, this is correct, but first we have to keep the damn thing working at all.  I think most people agree with you, including me when I was teaching with Pd.

I've observed, at times, when someone points out a deficiency in Pd, the Pd community's response often downplays, or denies, or gets defensive about the deficiency. (Not always, but often enough for me to mention it.) I'm seeing that trend again here. Pd is all about lines, and the lines don't look good -- and some of the responses are "this is not important" or (oid) "I like the fact that it never changed." That's... thoroughly baffling to me.

I read this as "community" = "active developers." It's true, some people tend to pooh-pooh the same recurring ideas, but this is largely out of years of hearing discussions and decisions and treatises on the list or the forum or facebook or whatever but nothing more. In the end, code talks, or even better, a working technical implementation that is honed with input from people who will most likely end up maintaining it, without probably understanding it completely at first.

This was very hard back on Sourceforge as people had to submit patches(!) to the bug tracker. Thanks to moving development to Github and the improvement of tools and community, I'm happy to see the new engagement over the last 5-10 years. This was one of the pushes for me to help overhaul the build system to make it possible and easy for people to build Pd itself, then they are much more likely to help contribute as opposed to waiting for binary builds and unleashing an unmanageable flood of bug reports and feature requests on the mailing list.

I know it's not going to change anytime soon, because the current options are a/ wait for Tcl/Tk to catch up with modern rendering or b/ burn Pd developer cycles implementing something that Tcl/Tk will(?) eventually implement or c/ rip the guts out of the GUI and rewrite the whole thing using a modern graphics framework like Qt. None of those is good (well, c might be a viable investment in the future -- SuperCollider, around 2010-2011, ripped out the Cocoa GUIs and went to Qt, and the benefits have been massive -- but I know the developer resources aren't there for Pd to dump Tcl/Tk).

A couple of points:

- Your point (c) already happened... you can use Purr Data (or the new Pd-L2ork etc). The GUI is implemented in Node/Electron/JS (I'm not sure of the details). Is it tracking Pd vanilla releases?... well that's a different issue.

- As for updating Tk, it's probably not likely to happen as advanced graphics are not their focus. I could be wrong about this.

I agree that updating the GUI itself is the better solution for the long run. I also agree that it's a big undertaking when the current implementation is essentially still working fine after over 20 years, especially since Miller's stated goal was for 50 year project support, ie. pieces composed in the late 90s should work in 2040. This is one reason why we don't just "switch over to QT or Juce so the lines can look like Max." At this point, Pd is aesthetically more Max than Max, at least judging by looking at the original Ircam Max documentation in an archive closet at work.

A way forward: libpd?

In my view, the best way forward is to build upon Jonathan Wilkes's work in Purr Data for abstracting the GUI communication. He essentially replaced the raw Tcl commands with abstracted drawing commands such as "draw rectangle here of this color and thickness" or "open this window and put it here."

For those that don't know, "Pd" is actually two processes, similar to SuperCollider, where the "core" manages the audio, patch dsp/msg graph, and most of the canvas interaction event handling (mouse, key). The GUI is a separate process which communicates with the core over a localhost loopback networking connection. The GUI is basically just opening windows, showing settings, and forwarding interaction events to the core. When you open the audio preferences dialog, the core sends the current settings to the GUI, the GUI then sends everything back to the core after you make your changes and close the dialog. The same for working on a patch canvas: your mouse and key events are forwarded to the core, then drawing commands are sent back like "draw object outline here, draw osc~ text here inside. etc."

So basically, the core has almost all of the GUI's logic while the GUI just does the chrome like scroll bars and windows. This means it could be trivial to port the GUI to other toolkits or frameworks as compared to rewriting an overly interconnected monolithic application (trust me, I know...).

Basically, if we take Jonathan's approach, I feel adding a GUI communication abstraction layer to libpd would allow for making custom GUIs much easier. You basically just have to respond to the drawing and windowing commands and forward the input events.
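To make the idea concrete, here is a purely hypothetical sketch in Python of what "respond to the drawing and windowing commands and forward the input events" could look like from a GUI's point of view. None of these names (`RecordingBackend`, `dispatch`, `draw_rectangle`, `open_window`, `mouse_down`) come from Pd, Purr Data or libpd; they only illustrate the shape of such a layer.

```python
class RecordingBackend:
    """A toy GUI backend that just records what it is asked to draw."""

    def __init__(self, send_to_core):
        self.send_to_core = send_to_core  # callback standing in for the socket
        self.log = []

    # Abstract commands coming from the core (hypothetical names).
    def open_window(self, canvas, x, y, width, height):
        self.log.append(("open_window", canvas, x, y, width, height))

    def draw_rectangle(self, canvas, x, y, width, height, color, thickness):
        self.log.append(("draw_rectangle", canvas, x, y, width, height,
                         color, thickness))

    # Input events are not interpreted here, only forwarded to the core.
    def on_mouse_down(self, canvas, x, y):
        self.send_to_core(("mouse_down", canvas, x, y))


def dispatch(backend, command):
    """Route one abstract command tuple to the matching backend method."""
    name, *args = command
    getattr(backend, name)(*args)


events = []
gui = RecordingBackend(events.append)
dispatch(gui, ("open_window", "canvas1", 0, 0, 400, 300))
dispatch(gui, ("draw_rectangle", "canvas1", 10, 10, 50, 20, "black", 1))
gui.on_mouse_down("canvas1", 12, 15)
```

A Tk, Qt or Cocoa front end would then be another class with the same methods, while the core stays unchanged.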

Ideally, then each fork could use the same Pd core internally and implement their own GUIs or platform specific versions such as a pure Cocoa macOS Pd. There is some other re-organization that would be needed in the C core, but we've already ported a number of improvements from extended and Pd-L2ork, so it is indeed possible.

Also note: the libpd C sources are now part of the pure-data repo as of a couple months ago...

Discouraging Initiative?!

But there's a big difference between "we know it's a problem but can't do much about it" vs "it's not a serious problem." The former may invite new developers to take some initiative. The latter discourages initiative. A healthy open source software community should really be careful about the latter.

IMO Pd is healthier now than it has been as long as I've known it (2006). We have so many updates and improvements over every release the last few years, with many contributions by people in this thread. Thank you! THAT is how we make the project sustainable and work toward finding solutions for deep issues and aesthetic issues and usage issues and all of that.

We've managed to integrate a great many changes from Pd-Extended into vanilla and open up/decentralize the externals and in a collaborative manner. For this I am also grateful when I install an external for a project.

At this point, I encourage more people to pitch in. If you work at a university or institution, consider sponsoring some student work on specific issues which volunteering developers could help supervise, organize a Pd conference or developer meetup (these are super useful!), or consider some sort of paid residency or focused project for artists using Pd. A good amount of my own work on Pd and libpd has been sponsored in many of these ways and has helped encourage me to continue.

This is likely to be more positive toward the community as a whole than banging back and forth on the list or the forum. Besides, I'd rather see cool projects made with Pd than keep talking about working on Pd.

That being said, I know everyone here wants to see the project continue and improve and it will. We are still largely opening up the development and figuring how to support/maintain it. As with any such project, this is an ongoing process.

Out

Ok, that was long and rambly and it's way past my bed time.

Good night all.

Building on Windows - works from Git source, not from tarball

I wanted to build PD on Windows 10 to get ASIO support. I failed when I used the "Source" files from the PD website. I succeeded when I used source that I cloned from Github. I followed the same instructions from the wiki both when I failed and when I succeeded. (They are the same as in the manual, just a little more concise.)

I am sharing below my terminal output from the failed build attempts from the downloaded source code (the tar.gz file). Some of these messages suggest that there might be errors in the makefiles. I don't know anything about makefiles, so I can't really interpret the errors. But I did want to pass them along, in case a developer might find them useful. Here you go:

bhage@LAPTOP-F1TU0LRH MINGW64 /c/Users/bhage/Downloads/pd-0.51-4

$ ./autogen.sh

libtoolize: putting auxiliary files in AC_CONFIG_AUX_DIR, 'm4/config'.

libtoolize: linking file 'm4/config/config.guess'

libtoolize: linking file 'm4/config/config.sub'

libtoolize: linking file 'm4/config/install-sh'

libtoolize: linking file 'm4/config/ltmain.sh'

libtoolize: putting macros in AC_CONFIG_MACRO_DIRS, 'm4/generated'.

libtoolize: linking file 'm4/generated/libtool.m4'

libtoolize: linking file 'm4/generated/ltoptions.m4'

libtoolize: linking file 'm4/generated/ltsugar.m4'

libtoolize: linking file 'm4/generated/ltversion.m4'

libtoolize: linking file 'm4/generated/lt~obsolete.m4'

configure.ac:166: warning: The macro `AC_LIBTOOL_DLOPEN' is obsolete.

configure.ac:166: You should run autoupdate.

aclocal.m4:8488: AC_LIBTOOL_DLOPEN is expanded from...

configure.ac:166: the top level

configure.ac:166: warning: AC_LIBTOOL_DLOPEN: Remove this warning and the call to _LT_SET_OPTION when you

configure.ac:166: put the 'dlopen' option into LT_INIT's first parameter.

../autoconf-2.71/lib/autoconf/general.m4:2434: AC_DIAGNOSE is expanded from...

aclocal.m4:8488: AC_LIBTOOL_DLOPEN is expanded from...

configure.ac:166: the top level

configure.ac:167: warning: The macro `AC_LIBTOOL_WIN32_DLL' is obsolete.

configure.ac:167: You should run autoupdate.

aclocal.m4:8523: AC_LIBTOOL_WIN32_DLL is expanded from...

configure.ac:167: the top level

configure.ac:167: warning: AC_LIBTOOL_WIN32_DLL: Remove this warning and the call to _LT_SET_OPTION when you

configure.ac:167: put the 'win32-dll' option into LT_INIT's first parameter.

../autoconf-2.71/lib/autoconf/general.m4:2434: AC_DIAGNOSE is expanded from...

aclocal.m4:8523: AC_LIBTOOL_WIN32_DLL is expanded from...

configure.ac:167: the top level

configure.ac:168: warning: The macro `AC_PROG_LIBTOOL' is obsolete.

configure.ac:168: You should run autoupdate.

aclocal.m4:121: AC_PROG_LIBTOOL is expanded from...

configure.ac:168: the top level

configure.ac:182: warning: The macro `AC_HEADER_STDC' is obsolete.

configure.ac:182: You should run autoupdate.

../autoconf-2.71/lib/autoconf/headers.m4:704: AC_HEADER_STDC is expanded from...

configure.ac:182: the top level

configure.ac:213: warning: The macro `AC_TYPE_SIGNAL' is obsolete.

configure.ac:213: You should run autoupdate.

../autoconf-2.71/lib/autoconf/types.m4:776: AC_TYPE_SIGNAL is expanded from...

configure.ac:213: the top level

configure.ac:235: warning: The macro `AC_CHECK_LIBM' is obsolete.

configure.ac:235: You should run autoupdate.

aclocal.m4:3879: AC_CHECK_LIBM is expanded from...

configure.ac:235: the top level

configure.ac:276: warning: The macro `AC_TRY_LINK' is obsolete.

configure.ac:276: You should run autoupdate.

../autoconf-2.71/lib/autoconf/general.m4:2920: AC_TRY_LINK is expanded from...

m4/universal.m4:14: PD_CHECK_UNIVERSAL is expanded from...

configure.ac:276: the top level

configure.ac:168: installing 'm4/config/compile'

configure.ac:9: installing 'm4/config/missing'

asio/Makefile.am: installing 'm4/config/depcomp'

bhage@LAPTOP-F1TU0LRH MINGW64 /c/Users/bhage/Downloads/pd-0.51-4

$ autoupdate

configure.ac:182: warning: The preprocessor macro `STDC_HEADERS' is obsolete.

Except in unusual embedded environments, you can safely include all

ISO C90 headers unconditionally.

configure.ac:213: warning: your code may safely assume C89 semantics that RETSIGTYPE is void.

Remove this warning and the `AC_CACHE_CHECK' when you adjust the code.

bhage@LAPTOP-F1TU0LRH MINGW64 /c/Users/bhage/Downloads/pd-0.51-4

$ ^C

bhage@LAPTOP-F1TU0LRH MINGW64 /c/Users/bhage/Downloads/pd-0.51-4

$ ./configure

configure: loading site script /etc/config.site

checking for a BSD-compatible install... /usr/bin/install -c

checking whether build environment is sane... yes

checking for a race-free mkdir -p... /usr/bin/mkdir -p

checking for gawk... gawk

checking whether make sets $(MAKE)... yes

checking whether make supports nested variables... yes

checking build system type... x86_64-w64-mingw32

checking host system type... x86_64-w64-mingw32

configure: iPhone SDK only available for arm-apple-darwin hosts, skipping tests

configure: Android SDK only available for arm-linux hosts, skipping tests

checking for as... as

checking for dlltool... dlltool

checking for objdump... objdump

checking how to print strings... printf

checking whether make supports the include directive... yes (GNU style)

checking for gcc... gcc

checking whether the C compiler works... yes

checking for C compiler default output file name... a.exe

checking for suffix of executables... .exe

checking whether we are cross compiling... no

checking for suffix of object files... o

checking whether the compiler supports GNU C... yes

checking whether gcc accepts -g... yes

checking for gcc option to enable C11 features... none needed

checking whether gcc understands -c and -o together... yes

checking dependency style of gcc... gcc3

checking for a sed that does not truncate output... /usr/bin/sed

checking for grep that handles long lines and -e... /usr/bin/grep

checking for egrep... /usr/bin/grep -E

checking for fgrep... /usr/bin/grep -F

checking for ld used by gcc... C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe

checking if the linker (C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe) is GNU ld... yes

checking for BSD- or MS-compatible name lister (nm)... /mingw64/bin/nm -B

checking the name lister (/mingw64/bin/nm -B) interface... BSD nm

checking whether ln -s works... no, using cp -pR

checking the maximum length of command line arguments... 8192

checking how to convert x86_64-w64-mingw32 file names to x86_64-w64-mingw32 format... func_convert_file_msys_to_w32

checking how to convert x86_64-w64-mingw32 file names to toolchain format... func_convert_file_msys_to_w32

checking for C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe option to reload object files... -r

checking for objdump... (cached) objdump

checking how to recognize dependent libraries... file_magic ^x86 archive import|^x86 DLL

checking for dlltool... (cached) dlltool

checking how to associate runtime and link libraries... func_cygming_dll_for_implib

checking for ar... ar

checking for archiver @FILE support... @

checking for strip... strip

checking for ranlib... ranlib

checking command to parse /mingw64/bin/nm -B output from gcc object... ok

checking for sysroot... no

checking for a working dd... /usr/bin/dd

checking how to truncate binary pipes... /usr/bin/dd bs=4096 count=1

checking for mt... no

checking if : is a manifest tool... no

checking for stdio.h... yes

checking for stdlib.h... yes

checking for string.h... yes

checking for inttypes.h... yes

checking for stdint.h... yes

checking for strings.h... yes

checking for sys/stat.h... yes

checking for sys/types.h... yes

checking for unistd.h... yes

checking for vfork.h... no

checking for dlfcn.h... no

checking for objdir... .libs

checking if gcc supports -fno-rtti -fno-exceptions... no

checking for gcc option to produce PIC... -DDLL_EXPORT -DPIC

checking if gcc PIC flag -DDLL_EXPORT -DPIC works... yes

checking if gcc static flag -static works... yes

checking if gcc supports -c -o file.o... yes

checking if gcc supports -c -o file.o... (cached) yes

checking whether the gcc linker (C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe) supports shared libraries... yes

checking whether -lc should be explicitly linked in... yes

checking dynamic linker characteristics... Win32 ld.exe

checking how to hardcode library paths into programs... immediate

checking whether stripping libraries is possible... yes

checking if libtool supports shared libraries... yes

checking whether to build shared libraries... yes

checking whether to build static libraries... yes

checking for gcc... (cached) gcc

checking whether the compiler supports GNU C... (cached) yes

checking whether gcc accepts -g... (cached) yes

checking for gcc option to enable C11 features... (cached) none needed

checking whether gcc understands -c and -o together... (cached) yes

checking dependency style of gcc... (cached) gcc3

checking for g++... g++

checking whether the compiler supports GNU C++... yes

checking whether g++ accepts -g... yes

checking for g++ option to enable C++11 features... none needed

checking dependency style of g++... gcc3

checking how to run the C++ preprocessor... g++ -E

checking for ld used by g++... C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe

checking if the linker (C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe) is GNU ld... yes

checking whether the g++ linker (C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe) supports shared libraries... yes

checking for g++ option to produce PIC... -DDLL_EXPORT -DPIC

checking if g++ PIC flag -DDLL_EXPORT -DPIC works... yes

checking if g++ static flag -static works... yes

checking if g++ supports -c -o file.o... yes

checking if g++ supports -c -o file.o... (cached) yes

checking whether the g++ linker (C:/msys64/mingw64/x86_64-w64-mingw32/bin/ld.exe) supports shared libraries... yes

checking dynamic linker characteristics... Win32 ld.exe

checking how to hardcode library paths into programs... immediate

checking whether make sets $(MAKE)... (cached) yes

checking whether ln -s works... no, using cp -pR

checking for grep that handles long lines and -e... (cached) /usr/bin/grep

checking for a sed that does not truncate output... (cached) /usr/bin/sed

checking for windres... windres

checking for egrep... (cached) /usr/bin/grep -E

checking for fcntl.h... yes

checking for limits.h... yes

checking for malloc.h... yes

checking for netdb.h... no

checking for netinet/in.h... no

checking for stddef.h... yes

checking for stdlib.h... (cached) yes

checking for string.h... (cached) yes

checking for sys/ioctl.h... no

checking for sys/param.h... yes

checking for sys/socket.h... no

checking for sys/soundcard.h... no

checking for sys/time.h... yes

checking for sys/timeb.h... yes

checking for unistd.h... (cached) yes

checking for int16_t... yes

checking for int32_t... yes

checking for off_t... yes

checking for pid_t... yes

checking for size_t... yes

checking for working alloca.h... no

checking for alloca... yes

checking for error_at_line... no

checking for fork... no

checking for vfork... no

checking for GNU libc compatible malloc... (cached) yes

checking for GNU libc compatible realloc... (cached) yes

checking return type of signal handlers... void

checking for dup2... yes

checking for floor... yes

checking for getcwd... yes

checking for gethostbyname... no

checking for gettimeofday... yes

checking for memmove... yes

checking for memset... yes

checking for pow... yes

checking for regcomp... no

checking for select... no

checking for socket... no

checking for sqrt... yes

checking for strchr... yes

checking for strerror... yes

checking for strrchr... yes

checking for strstr... yes

checking for strtol... yes

checking for dlopen in -ldl... no

checking for cos in -lm... yes

checking for CoreAudio/CoreAudio.h... no

checking for pthread_create in -lpthread... yes

checking for msgfmt... yes

checking for sys/soundcard.h... (cached) no

checking for snd_pcm_info in -lasound... no

configure: Using included PortAudio

configure: Using included PortMidi

checking that generated files are newer than configure... done

configure: creating ./config.status

config.status: creating Makefile

config.status: creating asio/Makefile

config.status: creating doc/Makefile

config.status: creating font/Makefile

config.status: creating mac/Makefile

config.status: creating man/Makefile

config.status: creating msw/Makefile

config.status: creating portaudio/Makefile

config.status: creating portmidi/Makefile

config.status: creating tcl/Makefile

config.status: creating tcl/pd-gui

config.status: creating po/Makefile

config.status: creating src/Makefile

config.status: creating extra/Makefile

config.status: creating extra/bob~/GNUmakefile

config.status: creating extra/bonk~/GNUmakefile

config.status: creating extra/choice/GNUmakefile

config.status: creating extra/fiddle~/GNUmakefile

config.status: creating extra/loop~/GNUmakefile

config.status: creating extra/lrshift~/GNUmakefile

config.status: creating extra/pd~/GNUmakefile

config.status: creating extra/pique/GNUmakefile

config.status: creating extra/sigmund~/GNUmakefile

config.status: creating extra/stdout/GNUmakefile

config.status: creating pd.pc

config.status: executing depfiles commands

config.status: executing libtool commands

configure:

pd 0.51.4 is now configured

Platform: MinGW

Debug build: no

Universal build: no

Localizations: yes

Source directory: .

Installation prefix: /mingw64

Compiler: gcc

CPPFLAGS:

CFLAGS: -g -O2 -ffast-math -funroll-loops -fomit-frame-pointer -O3

LDFLAGS:

INCLUDES:

LIBS: -lpthread

External extension: dll

External CFLAGS: -mms-bitfields

External LDFLAGS: -s -Wl,--enable-auto-import -no-undefined -lpd

fftw: no

wish(tcl/tk): wish85.exe

audio APIs: PortAudio ASIO MMIO

midi APIs: PortMidi

bhage@LAPTOP-F1TU0LRH MINGW64 /c/Users/bhage/Downloads/pd-0.51-4

$ make

CDPATH="${ZSH_VERSION+.}:" && cd . && /bin/sh '/c/Users/bhage/Downloads/pd-0.51-4/m4/config/missing' aclocal-1.16 -I m4/generated -I m4

configure.ac:170: error: AC_REQUIRE(): cannot be used outside of an AC_DEFUN'd macro

configure.ac:170: the top level

autom4te: error: /usr/bin/m4 failed with exit status: 1

aclocal-1.16: error: autom4te failed with exit status: 1

make: *** [Makefile:451: aclocal.m4] Error 1

bhage@LAPTOP-F1TU0LRH MINGW64 /c/Users/bhage/Downloads/pd-0.51-4

$ make app

CDPATH="${ZSH_VERSION+.}:" && cd . && /bin/sh '/c/Users/bhage/Downloads/pd-0.51-4/m4/config/missing' aclocal-1.16 -I m4/generated -I m4

configure.ac:170: error: AC_REQUIRE(): cannot be used outside of an AC_DEFUN'd macro

configure.ac:170: the top level

autom4te: error: /usr/bin/m4 failed with exit status: 1

aclocal-1.16: error: autom4te failed with exit status: 1

make: *** [Makefile:451: aclocal.m4] Error 1

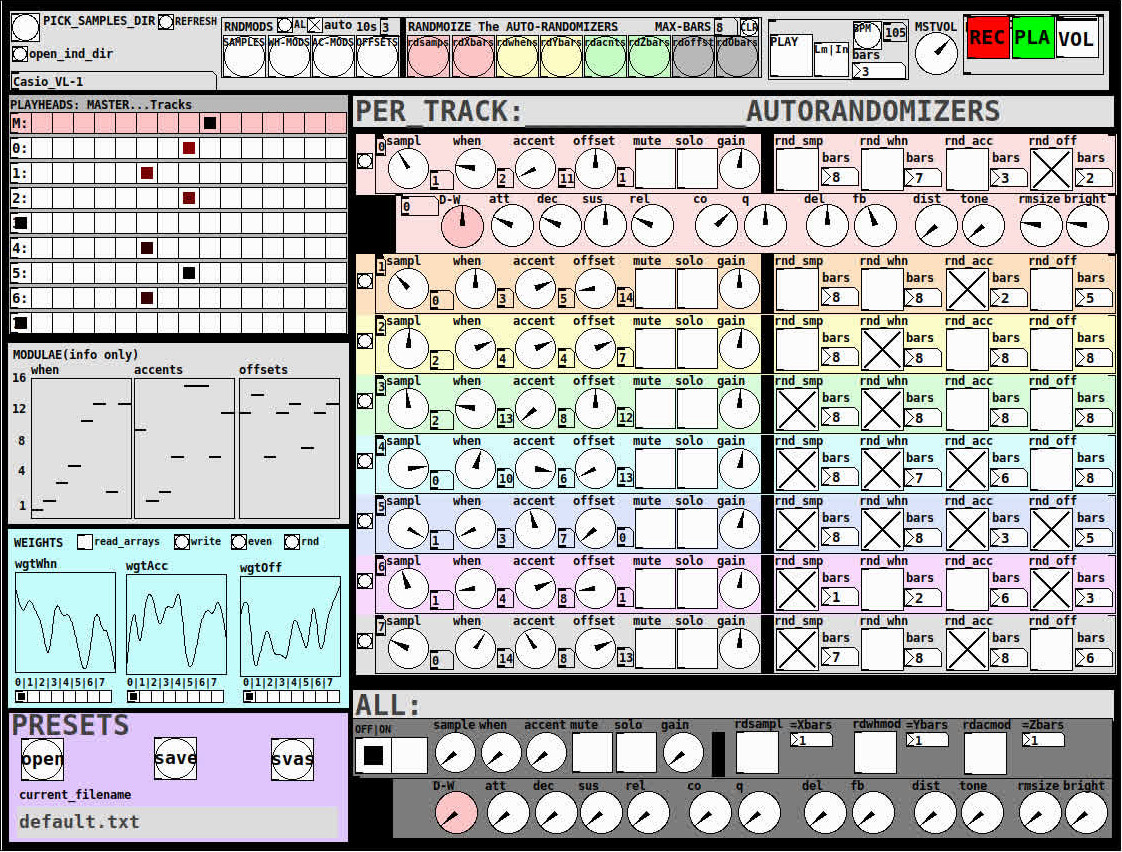

Ganymede: an 8-track, semi-automatic samples-looper and percussion instrument based on modulus instead of metro

Ganymede.7z (includes its own limited set of samples)

Background:

Ganymede was created to test a bet I made with myself:

that I could boil down drum sequencing to a single knob (i.e. instead of writing a pattern).

As far as I am concerned, I won the bet.

The trick is...

Instead of using a knob to turn a metro up or down, for example, you use it to turn up or down the modulus of a counter, i.e. counter[1..16]>[mod X]>[sel 0]>play the sample. If you do this and then add an offset control, where the beat occurs changes in real time.
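
To make the counter/modulus idea concrete, here is a minimal Python sketch of which steps would fire for a given knob value. It is not taken from the patch: the function name `beat_steps` is made up for illustration, and adding the offset before the modulo is an assumption about how the offset control is wired.

```python
def beat_steps(modulus, offset=0, steps=16):
    """Return the counter values (1..steps) on which the sample would fire."""
    # Mirrors counter[1..16] > [mod X] > [sel 0]: fire when the remainder is 0.
    # Assumption: the offset is added to the counter before the modulo.
    return [n for n in range(1, steps + 1) if (n + offset) % modulus == 0]


if __name__ == "__main__":
    print(beat_steps(4))     # modulus knob at 4, no offset
    print(beat_steps(4, 1))  # same modulus, pattern shifted by the offset
    print(beat_steps(3))     # a different modulus gives a different rhythm
```

Turning the modulus changes how often the track beats, and turning the offset slides the same pattern along the bar, which is why one or two knobs can stand in for a written pattern.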

But you'll have to decide for yourself whether I won the bet.

(note: I have posted a few demos using it in various stages of its incarnation recently in the Output section of the Forum and intend to share a few more, now that I have posted this.)

Remember, Ganymede is an instrument, i.e. Not an editor.

It is intended to be "played" or...allowed to play by itself.

(aside: specifically designed to be played with an 8-channel, usb, midi, mixer controller and mouse, for instance an Akai Midimix or Novation LaunchPad XL.)

So it does Not save patterns nor do you "write" patterns.

Instead, you can play it and save the audio~ output to a wave file (for use later as a loop, song, etc.)

Jumping straight to The Chase...

How to use it:

REQUIRES:

moonlib, zexy, list-abs, hcs, cyclone, tof, freeverb~ and iemlib

THE 7 SECTIONS:

- GLOBAL:

- to set parameters for all 8 tracks, e.g. pick the samples directory from a tof/pmenu or OPEN_IND_DIR (open an independent directory) (see "Samples" below for more detail)

- randomizing parameters: random all, randomize all every 10*seconds, maximum number of bars when randomizing bars, CLR the randomizer check boxes

- PLAY, L(imited) or I(nfinite) counter, if L then number of bars to play before resetting counter, bpm(menu)

- MSTVOL

- transport/recording (on REC files are automatically saved to ./ganymede/recordings with datestamp filename, the output is zexy limited to 98 and the volume controls the boost into the limiter)

- PLAYHEADS:

- indicating where the track is "beating"

- blank=no beat and black-to-red where redder implies greater env~ rms

- MODULAE:

- for information only to show the relative values of the selected modulators

- WEIGHTS:

- sent to [list-wrandom] when randomizing the When, Accent, and Offset modulators

- to use: click READ_ARRAYS, adjust as desired, click WRITE, uncheck READ_ARRAYS

- EVEN=unweighted, RND for random, and 0-7 for preset shapes

- PRESETS:

- ...self explanatory

- PER TRACK ACCORDION:

- 8 sections, 1 per track

- each open-closable with the left most bang/track

- opening one track closes the previously opened track

- includes main (always shown)

- with knobs for the sample (with 300ms debounce)

- knobs for the modulators (When, Accent, and Offset) [1..16]

- toggles if you want that parameter to be randomized after X bars

- and when opened, 5 optional effects

- adsr, vcf, delayfb, distortion, and reverb

- D-W=dry-wet

- 2 parameters per effect

- ALL:

- when ON, sets the values for all of the tracks to the same value; reverts to the original values when turned OFF

MIDI:

CC 7=MASTER VOLUME

The other controls exposed to midi are the first four knobs of the accordion/main-gui. In other words, the Sample, When, Accent, and Offset knobs of each track. And the MUTE and SOLO of each track.

Control is based on a midimap file (./midimaps/midimap-default.txt).

So if it is easier to just edit that file to your controller, then just make a backup of it and edit as you need. In other words, midi-learn and changing midimap files is not supported.

The default midimap is:

By track

CCs

| TRACK | SAMPLE | WHEN | ACCENT | OFFSET |
|---|---|---|---|---|
| 0 | 16 | 17 | 18 | 19 |
| 1 | 20 | 21 | 22 | 23 |
| 2 | 24 | 25 | 26 | 27 |
| 3 | 28 | 29 | 30 | 31 |
| 4 | 46 | 47 | 48 | 49 |
| 5 | 50 | 51 | 52 | 53 |
| 6 | 54 | 55 | 56 | 57 |
| 7 | 58 | 59 | 60 | 61 |

NOTEs

| TRACK | MUTE | SOLO |
|---|---|---|
| 0 | 1 | 3 |
| 1 | 4 | 6 |
| 2 | 7 | 9 |
| 3 | 10 | 12 |
| 4 | 13 | 15 |
| 5 | 16 | 18 |
| 6 | 19 | 21 |
| 7 | 22 | 24 |

SAMPLES:

Ganymede looks for samples in its ./samples directory by subdirectory.

It generates a tof/pmenu from the directories in ./samples.

Once a directory is selected, it then searches for ./*/*.wav (wavs within 1-deep subdirectories) and then ./*.wav (wavs within that main "kit" directory).
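
The search order described above (wavs one subdirectory deep first, then wavs directly in the kit directory) can be sketched in Python as follows. This only illustrates the lookup, not how the patch does it: `find_kit_wavs` is a hypothetical name, and sorting the results alphabetically is an assumption.

```python
from pathlib import Path


def find_kit_wavs(kit_dir):
    """List the wavs a kit directory would offer, nested ones first."""
    kit = Path(kit_dir)
    # Wavs exactly one subdirectory deep, e.g. kit/kicks/a.wav
    nested = sorted(kit.glob("*/*.wav"))
    # Wavs sitting directly in the kit directory, e.g. kit/b.wav
    top_level = sorted(kit.glob("*.wav"))
    # Anything two or more levels deep is not matched by either pattern.
    return nested + top_level
```

Files nested deeper than one subdirectory are skipped by both patterns, which is one reason a folder may contain wavs that do not show up.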

I have uploaded my collection of samples (that I gathered from https://archive.org/details/old-school-sample-cds-collection-01, Attribution-Non Commercial-Share Alike 4.0 International Creative Commons License, 90's Old School Sample CDs Collection by CyberYoukai) to the following link on my Google Drive:

https://drive.google.com/file/d/1SQmrLqhACOXXSmaEf0Iz-PiO7kTkYzO0/view?usp=sharing

It is a large 617 MB .7z file, including two directories: by-instrument with 141 instruments and by-kit with 135 kits. The file names and directory structure have all been laid out according to Ganymede's needs, e.g. no spaces, etc.

My suggestion to you is unpack the file into your Path so they are also available for all of your other patches.

MAKING KITS:

I found kits are best made by adding directories in a "custom-kits" folder in your samples directory and putting into each one the files, or better still shortcuts/symlinks to the files or directories, that you want in the kit; e.g. in a "bongs&congs" folder, add shortcuts to those instrument folders. Then create a symlink to "bongs&congs" in your ganymede/samples directory.

Note: if you want to experiment with kits on-the-fly (while the patch is on) just remember to click the REFRESH bang to get a new tof/pmenu of available kits from your latest ./samples directory.

If you want more freedom than a dynamic menu, you can use the OPEN_IND(ependent)_DIR bang to open any folder. But do bear in mind, Ganymede may not see all the wavs in that folder.

AFTERWARD/NOTES

- the [hcs/folder_list] [tof/pmenu] can only hold (the first) 64 directories in the ./samples directory

- the use of 1/16th notes (counter-interval) is completely arbitrary. However, that value (in the [pd global_metro] subpatch...at the noted hradio) is exposed, and I will probably incorporate being able to change it in a future version.

- rem: one of the beauties of this technique is: if you don't like the beat, rhythm, etc., you need only click ALL to get an entirely new beat, or any of the other randomizers to re-randomize it, OR let it do that by itself on AUTO until you like it, then just take it off AUTO.

- One fun thing to do is let it morph, with some set of toggles and bars selected, and just keep an ear out for the Really choice ones and record those or step in to "play" it, i.e. tweak the effects and parameters. It throws...rolls...a lot of them.

- Another thing to play around with is the notion of Limited (bumpy) or Infinite (flat) sequences in conjunction with the number of bars, since when and where the modulator triggers is contingent on when it resets.

- Designed, as I said before, to be played, esp. once it gets rolling, it allows you to focus on the production (instead of writing beats) by controlling the ALL and Individual effects and parameters.

- Note: if you really like the beat, don't forget to turn off the randomizers. CLEAR, for instance, works well. However, you can't get back the toggle values after they're cleared (possible feature in next version).

- The default.txt preset loads on loadbang. So if you want to save your state, then just click PRESETS>SAVE.

- [folder_list] throws error messages if it can't find things, e.g. when you're not using subdirectories in your kit. No need to worry about it. It just does that.

POSTSCRIPT

If you need any help, more explanation, advice, or have opinions or insight as to how I can make it better, I would love to hear from you.

I think that's >=95% of what I need to tell you.

If I think of anything else, I'll add it below.

Peace thru Music.

Love thru Pure Data.

-s


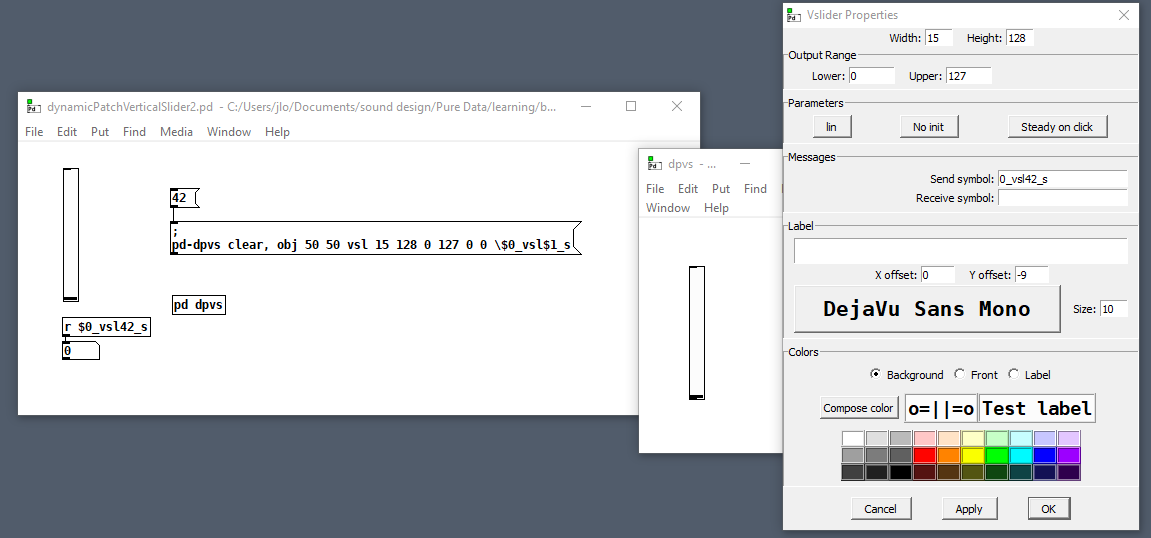

Dynamically patched vslider with local send symbol

@oid This is a problem from my 2nd ever dynamic patch, so I wouldn't try to read deep meaning into my questions. Like my first dynamic patch, it's just a patch that generates the repetitive, static parts of other patches--a way to reduce the tedium of having to edit multiple send/receive names by hand, that's all. I'm expecting to have to cut and paste from this helper patch into the patch I'm really trying to write, so the value of $0 in the generating patch is irrelevant. Is that reasonable? In a patch that needed its UI to be dynamically generated, your way would make total sense, but I'm def not there yet. Also, regarding your other topic, I think the message that dynamically generates a vslider can itself be static, so I'm not sure that add2 technique is applicable.

@ingox OK, now I'm getting the same results as you, which is odd because the patch I've presented is just a small example from a larger patch that was giving me trouble. After discovering the $\$0 syntax I applied it to that larger patch, saw that it too was working, and then posted this topic. But now my larger patch is broken, which makes me believe that the system is behaving differently since running your patches (and that I wasn't previously hallucinating). But that can't be true, right?

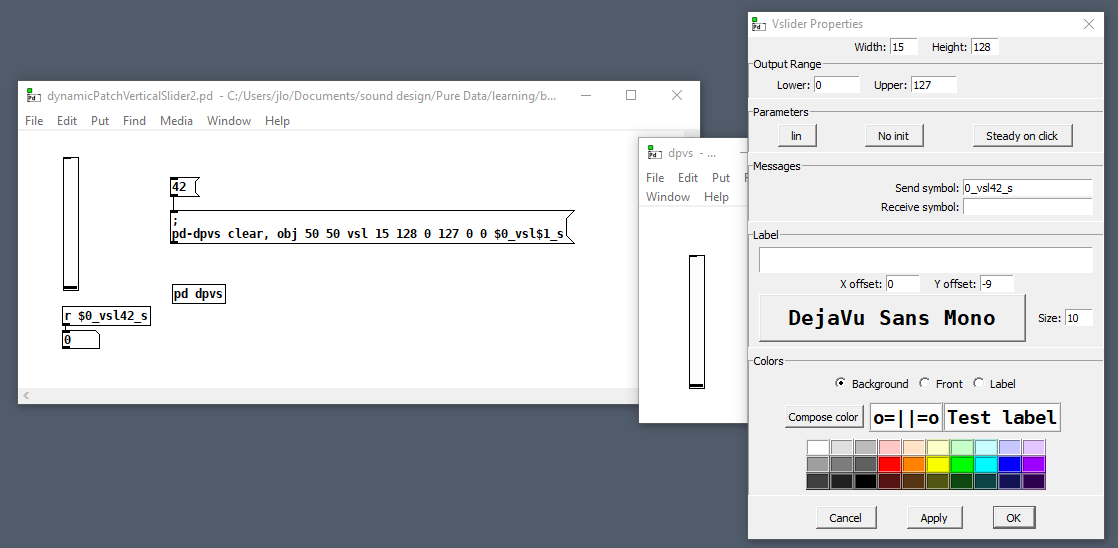

So here's another small patch to demonstrate how my larger patch is (now) broken: dynamicPatchVerticalSlider2.pd

See how \$0 is just generating a 0 in the send symbol? When I save and reload it to test the fader (which doesn't work), the backslash gets dropped from the creation message!

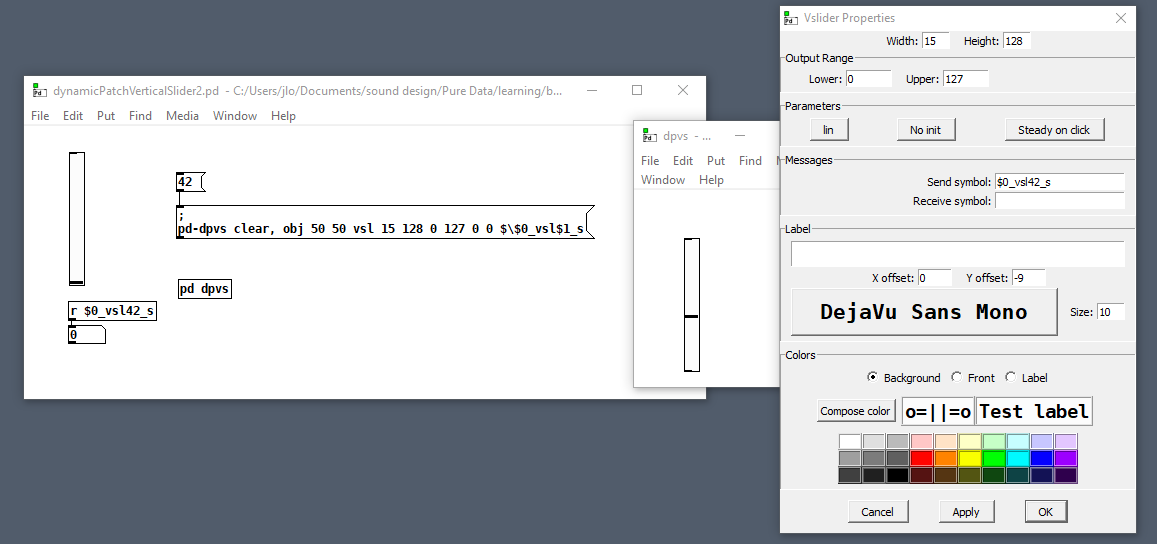

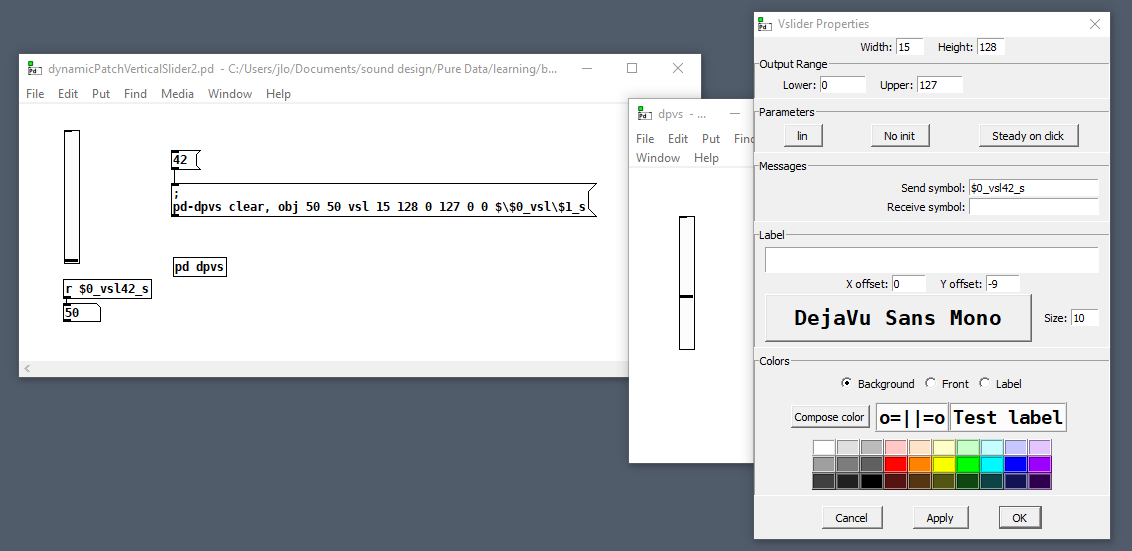

So next I try falling back to the syntax I got working earlier, and the generated vslider send symbol looks good

and on reloading it I can confirm that it is good, but now I can't use my generating patch because $1 is backslash escaped!

[pack] set without output?

The closer PD's user experience gets to Max the more people will expect it to be Max and complain about it not being Max.

That doesn't tend to happen. E.g., [expr] has been a core Pd Vanilla object for some time. It interprets its args as Max-style ints/floats, but (quite rightly) nobody requests adding the int/float difference to the core.

Also, Pd-l2ork and Purr Data have opportunistically pulled improvements from Max when backward compatible. I don't think I've had any complaints about it being insufficiently Max-like.

I did have a user request the strange Max feature of hiding all wires and xlets. But they didn't even complain when I implemented it as a GUI preset named "footgun."

Trying to reproduce a sound with Pd

@JMC64 You would need to look up the specs of the VCF on the manufacturer's website and find its frequency range. They will most likely give the range in Hz, so if they say it goes from 8 Hz to 16 kHz that would roughly be C1 to C11, so halfway would be C6 or roughly 262 Hz. Remember that frequency is not linear! You can use a chart like http://subsynth.sourceforge.net/midinote2freq.html to convert between note names and hertz, or you can have Pd do the math for you with the [mtof] and [ftom] objects. Also remember that you do not need to get perfect frequencies: those small knobs have poor resolution and, being analog, have a fairly wide tolerance. If you went through a dozen of those modules you would find that 0.5 would be slightly different on each of them, so just get close and then tune by ear to perfection.

You can do the same for any module and any parameter; you just need to convert the numbers differently. A VCA will likely have its specs in decibels and a CV input or output in volts.
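
As a rough sketch of the conversion, the following Python mirrors the standard equal-temperament formulas that [mtof] and [ftom] compute (MIDI note 69 = 440 Hz) and uses them to turn a knob position into a frequency. `knob_to_freq` is a hypothetical helper, and it assumes the knob is linear in pitch (exponential in Hz), which the real module may only approximate.

```python
import math


def mtof(note):
    """MIDI note number to frequency in Hz (note 69 = 440 Hz)."""
    return 440.0 * 2.0 ** ((note - 69.0) / 12.0)


def ftom(freq_hz):
    """Frequency in Hz to (fractional) MIDI note number; freq_hz must be > 0."""
    return 69.0 + 12.0 * math.log2(freq_hz / 440.0)


def knob_to_freq(position, low_hz, high_hz):
    """Map a knob position in 0..1 to Hz.

    Assumption: the knob travels linearly in pitch between the two
    ends of the range given in the module's specs.
    """
    low_note = ftom(low_hz)
    high_note = ftom(high_hz)
    return mtof(low_note + position * (high_note - low_note))


if __name__ == "__main__":
    # Halfway on a knob specified as 8 Hz to 16 kHz
    print(knob_to_freq(0.5, 8.0, 16000.0))
```

Because the interpolation happens in note numbers, the halfway point lands on the geometric mean of the two end frequencies, not on their arithmetic average. Treat the result as a starting point and tune by ear from there.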

EDIT: Should mention, you need to look at what the frequency range of the cutoff knob is, not the VCF. Sometimes a VCF or VCO will have a larger range than can be reached by just its frequency knob, and the CV inputs can often extend it. So the knob may only bring you to C8, but a +5 volt signal into the CV input would bring you to C13, because frequency goes up one octave for every volt in a V/oct system like the Doepfers. Generally they will give the tuning range of the knob separately if the VCF can sweep beyond the range of the knob. Most modules do not do this, though, and you should not have to worry about it unless it gives two different values.
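
The V/oct relationship is easy to check numerically: each volt doubles the frequency, so +5 V is five octaves, a factor of 32. A minimal Python sketch follows; `apply_cv` is a made-up name for illustration, and it assumes an ideal 1 V/oct response.

```python
def apply_cv(freq_hz, volts):
    """Frequency after adding a control voltage in an ideal 1 V/oct system."""
    # One octave (a doubling of frequency) per volt; negative volts go down.
    return freq_hz * 2.0 ** volts


if __name__ == "__main__":
    knob_top_hz = 1000.0  # hypothetical frequency at the top of the knob's travel
    print(apply_cv(knob_top_hz, 5.0))  # five octaves above the knob's maximum
```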