Beginner Question

@nenleh said:

In a tutorial I saw someone tick the "compute audio" checkbox in the main window of Pd (first picture) to solve this problem.

But I do not have this checkbox (second picture).

Ancient tutorial, then. The current "DSP" checkbox is the same as the old "compute audio" box.

What can be the problem?

The console messages that you get after clicking DSP say that it's an audio driver problem: Pd is trying to open an ALSA (Advanced Linux Sound Architecture) device and this is failing.

Linux audio is famously messy. The current best attempt to clean up the mess is PipeWire, so I guess your best option may be to install PipeWire and run Pd under it. If it were me, I'd use the Media menu to choose the JACK backend and then run Pd as "pw-jack /path/to/pd-gui" (I think; not at the computer right now, and the path may vary depending on Linux distro).

I have not yet used PipeWire, though, so I couldn't troubleshoot beyond that. Linux audio forums could help more. (Note that Linux audio being chaotic and disorganized isn't Pd's fault -- but once you get the audio subsystem configured, it works for every app. It's painful only the first time.)

Also, "priority 6 scheduling failed" means that you should add your user to the "audio" group.

hjh

Where does latency come from in Pure Data?

@Pandas As above..... Pd has an input > output delay equal to its block size.

Put something in and it will output 64 samples later.

But the soundcard cannot convert that to analogue instantly... so we add a buffer that gives it time to process the audio before the next block arrives.... MOTU 2-3ms with ASIO... On board soundcard usually 30-80ms.

I see you posted elsewhere on the forum..... https://forum.pdpatchrepo.info/topic/14495/sync-to-external-daw-midi-clock-audio-latency

I don't know those programs and maybe someone else will help.

Sending the audio samples to another program through a port will still not be instantaneous... but should be close unless you are listening to it at the same time.... but even media players will buffer at least 512 samples before they play a stream.

Does QSynth have compensation settings to delay the midi and attempt to match the audio timing?

What is your OS?

As you are communicating with software rather than a DAC you could try reducing the Delay (mSecs) in Pd media settings.... down to 3, then 2, then 1.. 0 probably not but you never know.... basically keep going until it hangs and then go back to the last setting that worked.

Apply but don't save the settings until you are done.... or Pd might not start or might not let you change them again while it is "stuck" even if you restart it.

There is a fix for that..... I will upload it if that happens..

Running Pd at a 96kHz audio rate should, I think, shorten the 64 sample (Pd internal) block delay.... you could try that too...... the block holds the same number of samples, so it should be output in half the time it takes at 48kHz.
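To put rough numbers on the block delay, here is a minimal Python sketch. It assumes only the 64-sample block mentioned above; the function name `block_duration_ms` and the list of sample rates are made up for the illustration, and it ignores the extra "Delay (msec)" buffer from the audio settings.

```python
def block_duration_ms(block_size: int, sample_rate_hz: float) -> float:
    """Time covered by one block of audio, in milliseconds."""
    return 1000.0 * block_size / sample_rate_hz


PD_BLOCK = 64  # Pd's internal block size in samples, as stated in the post

for rate in (44100, 48000, 96000):
    # Same number of samples per block, so a higher rate means a shorter block.
    print(f"{rate} Hz: {block_duration_ms(PD_BLOCK, rate):.3f} ms per block")
```

Doubling the sample rate halves the block duration, which is the effect guessed at above. The soundcard buffer still sits on top of this figure.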

And try this..... it might help....... depends what you are wanting to achieve...... https://forum.pdpatchrepo.info/topic/13125/batch-processing-audio-faster-than-realtime

David.

Where does latency come from in Pure Data?

The amount of input-->output latency comes from the soundcard driver settings, specifically, the hardware buffer size.

"Where does latency come from" is this:

The audio card driver processes blocks of audio. (If it didn't process blocks of audio, then it would have to call into audio apps once per sample. There is no general purpose computer that can handle 40000+ interrupts per second with reliable timing. The only choice is to clump the samples into blocks so that you can do e.g. a few dozen or hundred interrupts per second.)

Audio apps can't start processing a block of input until the entire block is received. Obviously this is at the end of the block's time window. You can't provide audio samples at the beginning of a block for audio which hasn't happened yet.

You can't hear a block of output until it's processed and sent to the driver.

    | --- block 1 --- | --- block 2 --- |
    | input.......... |                 |
    |                \|                 |
    |                 \_output......... |

This is true for all audio apps: it isn't a Pd problem. Also, shrinking Pd's block size cannot bring latency below the soundcard's own latency.

You can't completely get rid of latency, but with system tuning, you can get the hardware buffer down to 5 or 6 ms (sometimes even 2 or 3), which is a/ good enough and b/ as good as it's going to get, on current computers.
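The block argument can be checked with a small model. This is a Python sketch under idealized assumptions (a pass-through app, zero processing time, a single buffer in each direction); the function names and the example buffer sizes are invented for the illustration and are not taken from any driver.

```python
def earliest_output_index(n: int, block_frames: int) -> int:
    """Earliest time (in samples) at which input sample n can be heard."""
    block = n // block_frames
    # The app only sees the block once its last sample has arrived.
    block_complete = (block + 1) * block_frames
    # The sample keeps its position inside the block when it is played back.
    return block_complete + (n % block_frames)


def callbacks_per_second(sample_rate_hz: float, block_frames: int) -> float:
    """How often the driver must call into the app."""
    return sample_rate_hz / block_frames


SAMPLE_RATE = 48000  # assumed example rate

for frames in (1, 64, 256):
    delay = earliest_output_index(1000, frames) - 1000
    print(
        f"{frames:>3} frames: delay {delay} samples "
        f"({1000.0 * delay / SAMPLE_RATE:.2f} ms), "
        f"{callbacks_per_second(SAMPLE_RATE, frames):.1f} callbacks/s"
    )
```

In this model the delay always equals the buffer size in samples, while the callback rate falls as the buffer grows. Real drivers often queue more than one buffer, so measured latency tends to be higher than the model suggests.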

hjh

Having issues with audio preferences and PD freezing up

Hello all,

Running 'Pd-0.52-1-really' on a 2021 M1 MacBook Pro. When I need to change my audio preferences (namely my audio output device) in PD, I can select the output I need, but when I click 'Save All Settings' or 'Apply' the following happens:

- 'Audio off' switches to 'Audio on' in the main PD window (the DSP checkbox does not respond, it remains unchecked)

- PD freezes: clicking OK or Cancel in the Audio Settings window yields no response, and the window will not close by any means. Similarly, if I have a patch open, it also freezes and cannot be closed. I have found I can close the main PD window, but I cannot quit PD; I have to force quit via the Finder.

Upon reopening PD it's a gamble as to whether the audio setting I changed has even been remembered. This is extremely frustrating and is rendering the software very unreliable and hard to use (especially as I use it almost exclusively for audio).

Also, if I skip the process of clicking 'Save All Settings' or 'Apply', and just hit 'OK', the audio settings window closes, but the program still freezes in the same way.

Additionally, I'm trying to open a patch that contains a loadbang to start DSP, and this also freezes the program.

Has anyone else experienced this, and can they suggest any solutions? Thanks!

Round trip latency tests

Yes, Katja's meter reports 1 sample of additional latency, so the real latency is the measured value minus 1 sample.

here are threads of other forums:

https://forum.rme-audio.de/viewtopic.php?id=23459

linking to

https://web.archive.org/web/20160315074034/https://www.presonus.com/community/Learn/The-Truth-About-Digital-Audio-Latency

and an older comparison:

parts 1 2 3:

https://original.dawbench.com/audio-int-lowlatency.htm

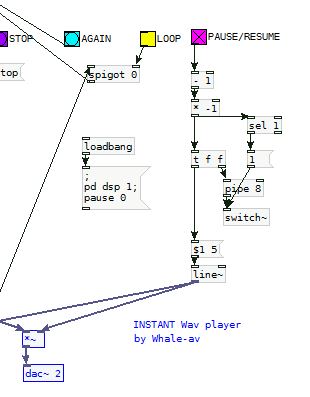

large array - how to visually update while writing? also how to pause+resume recording?

@esaruoho Updating the array while recording will probably cause dropouts. Redrawing onto the screen is CPU-hungry and inadvisable in Pd.

You can stop and start from the same point..... using a counter and restarting with a [start index(...... message, index being the next sample after where you stopped.

But.

Complicated.

It will probably be easier to stop audio computation when you press the [stop( button..... which will pause the writing to the array.

That will "freeze" audio for that window..... so you will no longer hear any audio that the window generates...... but other windows being unaffected you should be able to organise the patch to still hear what you need from elsewhere.

Put a [switch~] object in the window with [tabwrite~] and toggle audio on/off with a message..... 1 on.... 0 off.

Then connect [stop( to a 0 message and [resume( to a 1 message to [switch~].

It will cause clicks, but you can stop that, while only losing a tiny bit of precision for the stop point, by "ducking" the audio before and after the [switch~] operation like this.....

Use the [line~] output to duck the audio to [tabwrite~] so that there are no clicks on the recording as [switch~] stops processing..... the audio recording level will be at 0 when processing stops and then fade up from 0 in 5ms when you start again (3ms after audio is turned on fully in the screenshot).

Set the [pipe] value lower if you wish..... 3 might be sufficient with 2 for the message to [line~].

The clicks might not matter at the stop/resume point of course....... and might make it easier to spot the "join" later.

David.

PD output is distorted at raspberry pi audio jack out

So I connected the UGREEN to my Pi and selected it (USB Audio Device (Hardware)) as the output in Pd.

Same problem as before: the audio is distorted at unity gain (I'm multiplying the audio playback by 1 and then sending it to the dac~).

To get a clean sound I need to multiply the playback audio by 0.1 - 0.05. I don't understand why this is happening.

Could it be something in my Pi audio settings?

Thanks

edit: I connected my MOTU UltraLite mk4 and the output is not distorted even when multiplied by 1 (as it should be).

So can I assume the USB audio interface I bought is just not good enough?

What can you recommend for a small audio interface with stereo output that will definitely not distort the audio?

Circular buffer issues

@jameslo said:

Honestly, I didn't know if that was @fintg's requirement,

It's certainly a reasonable guess. If the requirement instead were "I just played something cool; write the last 10 seconds to disk" you can do that without a circular buffer at all.

I was just surprised and annoyed that one can only access the delay line's internal buffer at audio rate (and was hoping that someone would prove me wrong).

Access to the internal buffer wouldn't be very useful without also knowing the record-head position. In that case delwrite~ would need an outlet for the current frame being written.

That would actually be a very nice feature request.

In SuperCollider as well, DelayN, DelayL and DelayC don't give you access to the internal buffer. But you can create your own buffer and write into it, with total control over phase, with BufWr -- and, because you control the write phase, you already know what it is. It's quite a nice way to do it.

Basically the lack of ipoke~ in vanilla causes some headaches.

Look at the hoops I have to jump through! The extra memory I have to use!

I don't think there is any way to do this without using some extra memory.

In a circular buffer, you have:

    |~~~~~~ new audio ~~~~~~|~~~~~~ old audio ~~~~~~|
                            ^ record head

When you write to disk, naturally you want the old audio earlier in the file. There are only two ways to do that. One is to write the "old" chunk without closing the file, and append the "new" chunk, and then close the file.

In SC, if I know the record head position, I'd do it like:

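    // first the "old" chunk: from the record head to the end of the buffer, file left open
    buf.write(path, "wav", "int24", startFrame: recHead, leaveOpen: true, completionMessage: { |buf|
        // then the "new" chunk: from frame 0 up to the record head, and close the file
        buf.writeMsg(path, "wav", "int24", numFrames: recHead, startFrame: 0, leaveOpen: false)
    });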

AFAICS Pd does not support this, so you're left with the other way: duplicating the data into a second array, new after old, and writing that. (FWIW, though, there's plenty of memory in modern computers; I wouldn't lose sleep over this.)

Then there is the problem of synchronous vs asynchronous disk access. AFAICS Pd's disk access is synchronous, and because the control layer is triggered from the audio loop, slow disk access could cause audio dropouts. OS file system caching might reduce the risk of that, but you never know. Ross Bencina's article about real-time audio performance advises against time-unbounded operations in the audio thread.

SC's buffer read/write commands run in a lower priority thread; wrt audio, they are asynchronous. This is good for audio stability, but it means that, by the time you get around to writing, the record head has moved forward. So, even though I could do the two-part write easily, I'd get a few ms of new data at the start of the file. I think I would solve that by allocating an extra, say, 2 seconds and then just don't write the 2 seconds after the sampled-held recHead value: startFrame: recHead + (s.sampleRate * 2). (If it takes 2 seconds to write a 10 second audio file, then you have bigger problems than circular buffers.) Then the record head can move freely into that zone without affecting audio written to disk.
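The reordering and the guard zone described above can be sketched outside Pd or SC. This is a minimal Python illustration, not Pd or SuperCollider code: `unwrap_circular`, `guard`, and the tiny 8-frame buffer are hypothetical names and values chosen for the example.

```python
def unwrap_circular(buf, rec_head, guard=0):
    """Return the buffer's frames oldest-first.

    rec_head is the index the next frame would be written to, so the
    oldest audio starts there. The first `guard` frames after rec_head
    are skipped, because a writer that keeps running may already have
    overwritten them by the time the copy happens.
    """
    n = len(buf)
    start = (rec_head + guard) % n
    count = n - guard
    return [buf[(start + i) % n] for i in range(count)]


# Fill an 8-frame circular buffer with the frame numbers 0..10.
SIZE = 8
buf = [None] * SIZE
rec_head = 0
for frame in range(11):
    buf[rec_head] = frame
    rec_head = (rec_head + 1) % SIZE

print(buf)                                      # storage order, wrapped
print(unwrap_circular(buf, rec_head))           # chronological order
print(unwrap_circular(buf, rec_head, guard=2))  # same, minus the guard zone
```

The copy costs one extra buffer's worth of memory, which matches the point above that some duplication is hard to avoid without an append-to-open-file write.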

hjh

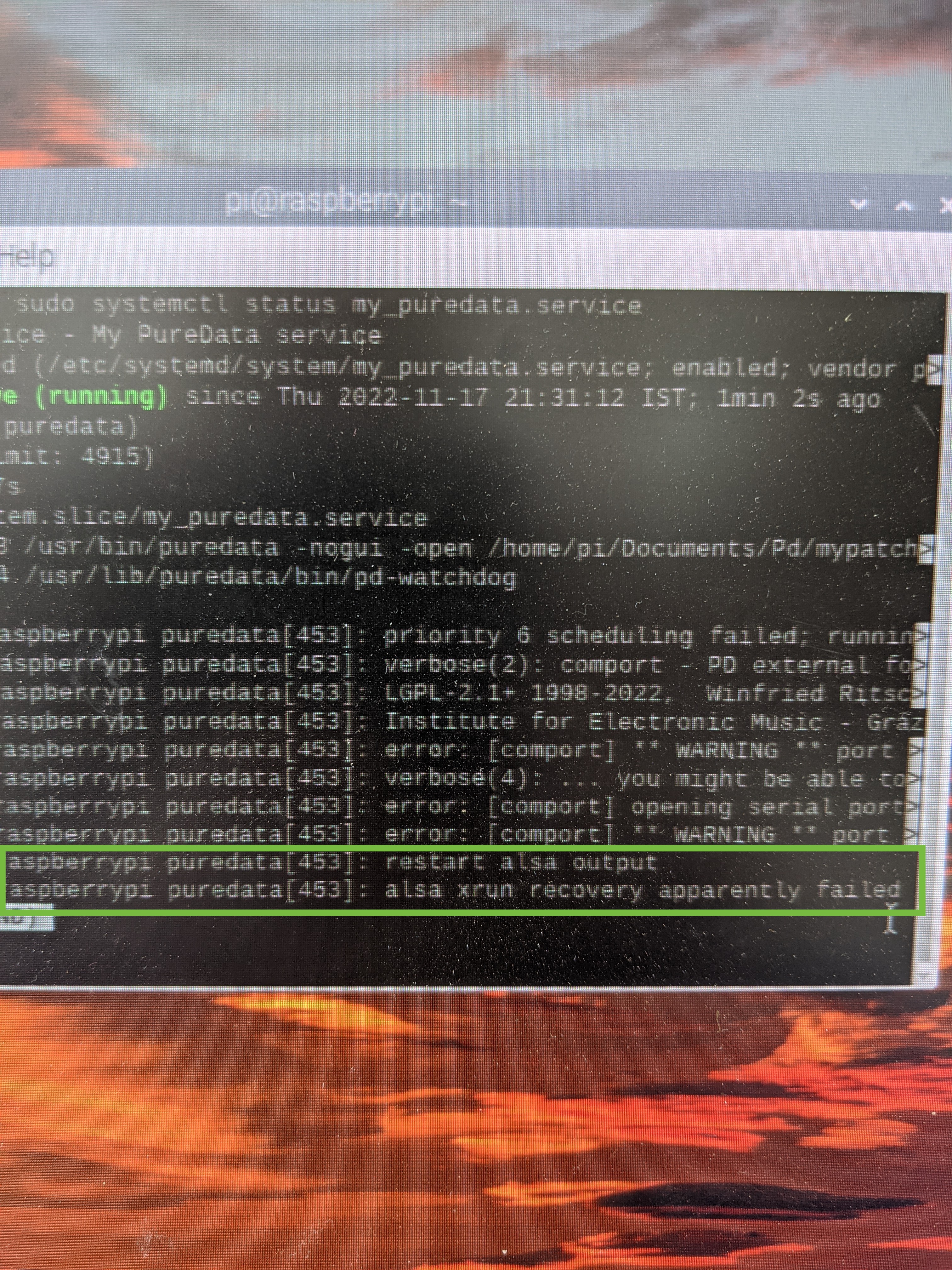

Failed to autostart PD on Pi using service

This is a continuation of the issue I wanted to solve in this topic. That thread drifted in different directions, so I thought I would open a new topic for the problem I'm facing.

I have a Pd patch that does some audio playback, reading files from a buffer. I'm running it on my Pi 4.

I want it to start on every boot and to be able to restart itself if it crashes for some reason.

It was suggested that I use this service script:

    [Unit]
    Description=My PureData service

    [Service]
    Type=simple
    LimitNOFILE=1000000
    ExecStart=/usr/bin/puredata -nogui -open /home/pi/mypatch.pd
    WorkingDirectory=/home/pi
    User=pi
    Group=pi
    Restart=always
    # Restart service after 10 seconds if service crashes
    RestartSec=10

    [Install]
    WantedBy=multi-user.target

The above worked great using the built-in 3.5mm audio jack.

I then bought a UGREEN USB audio interface because I was getting poor audio quality at the output.

I set the audio preferences in PD to use the USB audio interface as the output.

When I boot the Pi I get this error from the service (see picture).

If I type sudo systemctl restart my_puredata.service, the PD patch works again just fine, with no ALSA error. But on the initial boot it does not work.

Any idea why this happens when using the USB audio interface? Is there anything I can do to make it work?

So, to conclude:

When I start the same Pd patch using the same service script but without a USB audio interface, it works just fine.

When I start the same Pd patch with the USB audio interface but using the autostart file:

sudo nano /etc/xdg/lxsession/LXDE-pi/autostart

Is also working just fine.

But the combination of the USB audio interface and the service script is just not working.

Thanks for any help.