I need an object to unpack an incoming midi chord (midi in, note in )

@whale-av said:

@gentleclockdivider "Let's recap: a chord (thus separate incoming midi notes perceived as a chord) goes into Pure Data, and I want to extract the midi note values.

[unpack] only unpacks the last received note + velocity; that's why I wrote that an object that detects the time between incoming events could be the solution.

So let's say I play a simple C min (midiin), which has the midi note numbers 48, 51, 55... I want these to appear in separate numboxes."

[unpack] produces all the note numbers...... but you only see the last..... a [print] will show them.

As I said at the start of this thread, [poly] will separate them for you as it indexes the notes.

You could then use [route] to separate them but [clone] is more useful as you can clone a synth inside if you wish.

The indexes from [poly] can be used to allocate the notes to the clones, and [poly] then ensures that you have no hanging notes as the noteoff messages are allocated the same index.

As the idea of a chord is human and has no meaning in Pd, midi, or even on a keyboard, why would you need to group the notes together with a timer or a threshold.... I am just curious...

To correct bad keyboard technique...?

The method using [poly] and [route] is shown in your Pd/doc folder....... Pd/doc/7.stuff/synth/1.poly.synth.pd .... since at least 2011.

David.

I really think we have a communication error here.

I know that a chord does not exist in Pure Data, midi, etc., and that it's a sequence of single-note messages; I expressed that in my first post.

I am also aware that only the last of the messages is shown by the unpack module.

- quote-

As the idea of a chord is human and has no meaning in Pd, midi, or even on a keyboard, why would you need to group the notes together with a timer or a threshold.... I am just curious...

To correct bad keyboard technique...?

-unquote -

Bad keyboard technique ??

I just wanted Pure Data to SHOW all incoming midi notes that make up the chord, not in the console but in the structure view. Your threshold value example did that.

Why is it so bizarre to ask for that? Max/MSP has a dedicated object for exactly that, which says enough.
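For readers who want to see the threshold idea outside of a patch, here is a minimal Python sketch of grouping note-ons by arrival time. It is not the patch discussed in this thread: the function name `group_chords`, the `(time_ms, note)` event format, and the 30 ms window are all assumptions chosen for illustration.

```python
from typing import List, Optional, Tuple


def group_chords(events: List[Tuple[float, int]],
                 window_ms: float = 30.0) -> List[List[int]]:
    """Group (time_ms, note) note-on events into chords.

    A note joins the current chord when it arrives within window_ms
    of the previous note; otherwise it starts a new chord.
    """
    chords: List[List[int]] = []
    last_time: Optional[float] = None
    for time_ms, note in sorted(events):
        if last_time is None or time_ms - last_time > window_ms:
            chords.append([])  # gap too long: start a new chord
        chords[-1].append(note)
        last_time = time_ms
    return chords


# Hypothetical timings: a C minor chord played slightly spread out,
# followed half a second later by two more notes.
events = [(0.0, 48), (4.0, 51), (9.0, 55), (500.0, 50), (503.0, 53)]
print(group_chords(events))
```

Each inner list could then feed its own set of number boxes. The window size is a trade-off: too small splits sloppy chords, too large merges fast melodic runs.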

zl look up - how to load a list to right inlet

@KMETE said:

When I try to type a number inside a number box, it is not working. The only way to change the numbers is by clicking the mouse and scrolling. Is there no way to type into number boxes?

OK.

If you create a button or toggle, you might notice that:

- If you are not in edit mode, clicking on the object produces the output.

- If you are in edit mode, clicking on the object selects it but does not trigger output.

- If you are in edit mode, ctrl-clicking on the object produces output.

Number boxes behave the same way. Not in edit mode, you can click and type a number. In edit mode, ctrl-click allows you to type. I just confirmed that this is true in PlugData as well as the vanilla Pd GUI.

(On Mac, cmd- instead of ctrl-.)

In both interfaces, use ctrl-E to toggle between edit and performance modes. In PlugData, the + icon in the toolbar is dimmed when not editing (AFAICS this is the only way to know which mode you're in).

Edit edit... one minor interface goof in PlugData: in performance mode, clicking a number box turns the arrowhead blue (so you know it's typeable). In edit mode, ctrl-click does not turn it blue but it's still typeable (I tried it, no problem).

hjh

Does rotating a chromatic circle (constellation) 90 degrees create the Minor scale in ALL modes?

what the article says about dropping the 3rd, 6th, and 7th

The only thing that makes a scale/mode minor or major is the 3rd. The one we call natural minor or aeolian has minor 6th and 7th as well. There are other minor modes with fewer or more minor intervals.

An easy way to think of the classic modes for me is the white keys on the keyboard, like @seb-harmonik.ar is hinting at. These are the start tones, modes and intervals (minor intervals denoted by lower case, except the 4th and 5th on account of musical convention):

C -> Ionian: I II III IV V VI VII (major)

D -> Dorian: I II iii IV V VI vii (minor)

E -> Phrygian: I ii iii IV V vi vii (minor)

F -> Lydian: I II III IV# V VI VII (major)

G -> Mixolydian: I II III IV V VI vii (major)

A -> Aeolian: I II iii IV V vi vii (minor)

B -> Locrian: I ii iii IV Vb vi vii (minor)

Each of the majors has a relative minor, which you could think of as a "90 degree shift" in the "no blacks allowed" chromatic circle, i.e. 3 semitones down. Ionian becomes Aeolian. Lydian becomes Dorian. Mixolydian becomes Phrygian.

HOWEVER, there are tons of modes/scales not accounted for here, a lot of which you can't play on white keys only. And there is no single major-to-minor transformative rule besides these:

1: Flattening the 3rd will effectively turn a major scale/mode into minor

2: A major scale will have a relative minor scale 3 semitones below it IF AND ONLY IF the major scale has a major 6th in it
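The white-key table and the "3 semitones down" pairing can be checked with a few lines of Python. This is only a sketch: the names `WHITE_KEYS`, `mode_intervals`, `quality` and `mode_three_semitones_below` are made up for illustration, and pitches are plain semitone counts above C.

```python
# Pitch classes of the white keys C D E F G A B, in semitones above C.
WHITE_KEYS = [0, 2, 4, 5, 7, 9, 11]
MODE_NAMES = ["Ionian", "Dorian", "Phrygian", "Lydian",
              "Mixolydian", "Aeolian", "Locrian"]


def mode_intervals(start_degree):
    """Semitone distances from the root for the white-key mode that
    starts on the given degree (0 = C, 1 = D, ...)."""
    root = WHITE_KEYS[start_degree]
    return [(WHITE_KEYS[(start_degree + i) % 7] - root) % 12
            for i in range(7)]


def quality(intervals):
    """A mode is major if its 3rd is 4 semitones above the root,
    minor if it is 3 semitones above."""
    return "major" if intervals[2] == 4 else "minor"


def mode_three_semitones_below(start_degree):
    """Name of the white-key mode whose root lies 3 semitones below."""
    target = (WHITE_KEYS[start_degree] - 3) % 12
    return MODE_NAMES[WHITE_KEYS.index(target)]


for degree, name in enumerate(MODE_NAMES):
    intervals = mode_intervals(degree)
    print(name, intervals, quality(intervals))

# The three major modes and the minor mode found 3 semitones down.
for degree in (0, 3, 4):
    print(MODE_NAMES[degree], "->", mode_three_semitones_below(degree))
```

The lookup in `mode_three_semitones_below` only works here because each major mode has a major 6th, so the note 3 semitones below its root is itself a white key.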

Save and recall midi settings in a project

I FINALLY made it work, it's now as reliable and foolproof as i can patch it!

I spent quite some time to make it clean and well working, so i'm not sure it will save me time in the end, but i'm sure it can save you some, so please use it! Bear in mind you HAVE to modify it to contain your own audio and midi devices. Their names can be found using audiosettings and midisettings objects.

Each patch containing this one, if correctly modified, will create and send to pd the correct message to tell it to put which device on which "slot" of the audio and midi settings menus.

I also made a patch for audiosettings, which sets the audio interface of your choice, with all the appropriate settings, plus another "plan B" setting in case the main audio interface is missing.

Once properly set up, you only have to worry about plugging your audio and midi devices before launching Pd. What a relief in terms of time and stress, especially in live situations!

Sadly it needs loopMIDI or something similar to work, with as many virtual midi devices as the real ones you'll be using: if one or several devices are missing, the virtual ones will replace them in the midi settings, so it doesn't ruin the order of devices. I named them DUMMYIN\ 1 and DUMMYOUT\ 1 to 5 because I don't have more than 5 midi devices plugged in at once. You have to create these DUMMY devices in loopMIDI to get this patch to work.

(I tried with one dummy midi device in and one dummy out, but it doesn't work if two real midi devices are missing in a row: pd apparently won't let you have twice the same device on consecutive slots)
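The slot-filling logic described above can be sketched in Python. This is not the patch itself, only an illustration of the idea under assumptions: `fill_slots` and the device names `KeyboardA`, `PadB` and `FaderC` are hypothetical, and the dummy names are written without the backslash escape.

```python
def fill_slots(wanted, available, dummies):
    """Return one device name per slot, in the wanted order.

    A missing device is replaced by the next unused dummy, so the
    remaining devices keep their slot and no dummy appears twice.
    """
    spare = iter(dummies)
    slots = []
    for name in wanted:
        if name in available:
            slots.append(name)
            continue
        dummy = next(spare, None)
        if dummy is None:
            raise ValueError("not enough dummy devices for the missing ones")
        slots.append(dummy)
    return slots


# Hypothetical setup: three inputs expected, only FaderC is plugged in.
wanted = ["KeyboardA", "PadB", "FaderC"]
available = {"FaderC"}
dummies = ["DUMMYIN 1", "DUMMYIN 2", "DUMMYIN 3"]
print(fill_slots(wanted, available, dummies))
```

Using a distinct dummy for every missing device is what avoids the same device landing on two consecutive slots.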

The next step would be to make it more user-friendly by sending messages to it, so it can be used in several different projects without being edited inside.

The most convenient solution would be to modify Pd source code to make these settings behave differently, by assigning a UNIQUE number to each device, being plugged or not, or accepting messages that contain the order of devices BY THEIR NAME and not a randomly assigned number... then it would be possible to store a simple midi and audiosettings message in each patch.

Thx for reading

#proudofmyself #nobodycaresaboutthisbutyouwhoareherebecauseofthesamepreoblem

Any solution or work around for Mac not getting 2nd Midi controller channel number?

@nicnut No not normally.

If you have 2 devices, the first will be channels 1-16 and the second will be 17-32, etc.

That is because the midi standard is 16 channels per midi connection.
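The numbering above is simple arithmetic, sketched here in Python. The function name `split_pd_channel` is made up for illustration; it only assumes the 16-channels-per-device layout described in this post.

```python
def split_pd_channel(pd_channel):
    """Split a channel number as reported by Pd into a 1-based
    (device, channel) pair, assuming 16 channels per device."""
    if pd_channel < 1:
        raise ValueError("channel numbers start at 1")
    device = (pd_channel - 1) // 16 + 1
    channel = (pd_channel - 1) % 16 + 1
    return device, channel


for pd_channel in (1, 16, 17, 32):
    print(pd_channel, split_pd_channel(pd_channel))
```

So a controller on the second device sending on its own channel 1 would show up as channel 17 in the patch.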

Somehow in the RPI linux configuration the two midi controllers must be "overlaid"?..... or there is a midi router running before Pd that is routing the two midi devices in parallel to one output for Pd.

So maybe there is such a router for the Mac...... maybe a midisettings app..... where you can combine them.

Midi A + Midi B in parallel > Midi C

Pd connected to Midi C

The MidiPipe app might well be able to do that for you if the built-in IAC driver cannot.

Otherwise maybe add another [ctlin] and [ctlout] to your patch and if necessary a [spigot] + [toggle] to switch between them.

David.

Externals in Purr-Data

there we go:

tried ./pd_helios.d_ppc and failed

tried ./pd_helios.pd_darwin and failed

tried ./pd_helios/pd_helios.d_ppc and failed

tried ./pd_helios/pd_helios.pd_darwin and failed

tried ./pd_helios/pd_helios-meta.pd and failed

tried ./pd_helios.pd_lua and failed

tried ./pd_helios.pd and failed

tried ./pd_helios.pat and failed

tried ./pd_helios/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios-meta.pd and failed

tried /Library/Pd-l2ork/pd_helios.pd_lua and failed

tried /Library/Pd-l2ork/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.pat and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd and failed

tried /System/Library/Fonts/pd_helios.d_ppc and failed

tried /System/Library/Fonts/pd_helios.pd_darwin and failed

tried /System/Library/Fonts/pd_helios/pd_helios.d_ppc and failed

tried /System/Library/Fonts/pd_helios/pd_helios.pd_darwin and failed

tried /System/Library/Fonts/pd_helios/pd_helios-meta.pd and failed

tried /System/Library/Fonts/pd_helios.pd_lua and failed

tried /System/Library/Fonts/pd_helios.pd and failed

tried /System/Library/Fonts/pd_helios.pat and failed

tried /System/Library/Fonts/pd_helios/pd_helios.pd and failed

tried /Library/Fonts/pd_helios.d_ppc and failed

tried /Library/Fonts/pd_helios.pd_darwin and failed

tried /Library/Fonts/pd_helios/pd_helios.d_ppc and failed

tried /Library/Fonts/pd_helios/pd_helios.pd_darwin and failed

tried /Library/Fonts/pd_helios/pd_helios-meta.pd and failed

tried /Library/Fonts/pd_helios.pd_lua and failed

tried /Library/Fonts/pd_helios.pd and failed

tried /Library/Fonts/pd_helios.pat and failed

tried /Library/Fonts/pd_helios/pd_helios.pd and failed

tried /Users/didipiman/Library/Fonts/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios-meta.pd and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pd_lua and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pd and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pat and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios-meta.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pd_lua and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pat and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios-meta.pd and failed

tried /Library/Pd-l2ork/pd_helios.pd_lua and failed

tried /Library/Pd-l2ork/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.pat and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd and failed

pd_helios: can't load library
```

Externals in Purr-Data

i see! sorry about that!

here's what i get. i'm pasting as much as i can here, and i can paste more if you want, but perhaps this already gives you an idea? i'm guessing the startup preferences are not right, but i'm not sure what to fix, sorry!

Welcome to Purr Data

nw.js version 0.28.1

GUI is starting Pd...

GUI listening on port 5400 on host 127.0.0.1

gui_path is /Applications/Pd-l2ork.app/Contents/Resources/app.nw

binary is /Applications/Pd-l2ork.app/Contents/Resources/app.nw/bin/pd-l2ork

Pd started.

incoming connection to GUI

canvasinfo: v0.1

stable canvasinfo methods: args dir dirty editmode vis

classinfo: v.0.1

stable classinfo methods: size

objectinfo: v.0.1

stable objectinfo methods: class

pdinfo: v.0.1

stable pdinfo methods: dir dsp version

[import] $Revision: 1.2 $

[import] is still in development, the interface could change!

compiled against Pd-l2ork version 2.15.2 (20201102-rev.a81c9ef5)

PD_FLOATSIZE = 32 bits

success reading preferences from: /Users/didipiman/Library/Preferences/org.puredata.pd-l2ork

input channels = 2, output channels = 2

working directory is /Users/didipiman

input channels = 2, output channels = 2

input device 0, channels 2

output device 0, channels 2

framesperbuf 64, nbufs 13

rate 44100

... opened OK.

tried ./libdir.d_ppc and failed

tried ./libdir.pd_darwin and failed

tried ./libdir/libdir.d_ppc and failed

tried ./libdir/libdir.pd_darwin and failed

tried ./libdir.pd and failed

tried ./libdir.pat and failed

tried ./libdir/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.pat and failed

tried /Library/Pd-l2ork/libdir/libdir.pd and failed

tried /System/Library/Fonts/libdir.d_ppc and failed

tried /System/Library/Fonts/libdir.pd_darwin and failed

tried /System/Library/Fonts/libdir/libdir.d_ppc and failed

tried /System/Library/Fonts/libdir/libdir.pd_darwin and failed

tried /System/Library/Fonts/libdir.pd and failed

tried /System/Library/Fonts/libdir.pat and failed

tried /System/Library/Fonts/libdir/libdir.pd and failed

tried /Library/Fonts/libdir.d_ppc and failed

tried /Library/Fonts/libdir.pd_darwin and failed

tried /Library/Fonts/libdir/libdir.d_ppc and failed

tried /Library/Fonts/libdir/libdir.pd_darwin and failed

tried /Library/Fonts/libdir.pd and failed

tried /Library/Fonts/libdir.pat and failed

tried /Library/Fonts/libdir/libdir.pd and failed

tried /Users/didipiman/Library/Fonts/libdir.d_ppc and failed

tried /Users/didipiman/Library/Fonts/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/libdir/libdir.d_ppc and failed

tried /Users/didipiman/Library/Fonts/libdir/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/libdir.pd and failed

tried /Users/didipiman/Library/Fonts/libdir.pat and failed

tried /Users/didipiman/Library/Fonts/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir/libdir.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir/libdir.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.pat and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.pat and failed

tried /Library/Pd-l2ork/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir/libdir.pd_darwin and succeeded

libdir loader 1.10

compiled on Nov 3 2020 at 11:39:53

compiled against Pd version 0.48.0.

tried ./cyclone.d_ppc and failed

tried ./cyclone.pd_darwin and failed

tried ./cyclone/cyclone.d_ppc and failed

tried ./cyclone/cyclone.pd_darwin and failed

tried ./cyclone/cyclone-meta.pd and failed

tried ./cyclone.pd and failed

tried ./cyclone.pat and failed

tried ./cyclone/cyclone.pd and failed

tried /Library/Pd-l2ork/cyclone.d_ppc and failed

tried /Library/Pd-l2ork/cyclone.pd_darwin and failed

tried /Library/Pd-l2ork/cyclone/cyclone.d_ppc and failed

tried /Library/Pd-l2ork/cyclone/cyclone.pd_darwin and failed

tried /Library/Pd-l2ork/cyclone/cyclone-meta.pd and failed

tried /Library/Pd-l2ork/cyclone.pd and failed

tried /Library/Pd-l2ork/cyclone.pat and failed

tried /Library/Pd-l2ork/cyclone/cyclone.pd and failed

tried /System/Library/Fonts/cyclone.d_ppc and failed

tried /System/Library/Fonts/cyclone.pd_darwin and failed

tried /System/Library/Fonts/cyclone/cyclone.d_ppc and failed

tried /System/Library/Fonts/cyclone/cyclone.pd_darwin and failed

tried /System/Library/Fonts/cyclone/cyclone-meta.pd and failed

tried /System/Library/Fonts/cyclone.pd and failed

tried /System/Library/Fonts/cyclone.pat and failed

tried /System/Library/Fonts/cyclone/cyclone.pd and failed

tried /Library/Fonts/cyclone.d_ppc and failed

tried /Library/Fonts/cyclone.pd_darwin and failed

tried /Library/Fonts/cyclone/cyclone.d_ppc and failed

tried /Library/Fonts/cyclone/cyclone.pd_darwin and failed

tried /Library/Fonts/cyclone/cyclone-meta.pd and failed

tried /Library/Fonts/cyclone.pd and failed

tried /Library/Fonts/cyclone.pat and failed

tried /Library/Fonts/cyclone/cyclone.pd and failed

tried /Users/didipiman/Library/Fonts/cyclone.d_ppc and failed

tried /Users/didipiman/Library/Fonts/cyclone.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone.d_ppc and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone-meta.pd and failed

tried /Users/didipiman/Library/Fonts/cyclone.pd and failed

tried /Users/didipiman/Library/Fonts/cyclone.pat and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone.pd and failed

JASS, Just Another Synth...Sort-of, codename: Gemini (UPDATED: esp with midi fixes)

JASS, Just Another Synth...Sort-of, codename: Gemini (UPDATED TO V-1.0.1)

jass-v1.0.1( esp with midi fixes).zip

1.0.1-CHANGES:

- Fixed issues with midi routing, re the mode selector (mentioned below)

- Upgraded the midi mode "fetch" abstraction to be less granular

- Fix (for midi) so changing cc["14","15","16"] to "rnd" outputs a random wave (It has always done this for non-midi.)

- Added a midi-mode-tester.pd (connect PD's midi out to PD's midi in to use it)

- Upgrade: cc-56 and cc-58 can now change pbend-cc and mod-cc in all modes

- Updated this readme

INFO: Values being set to 0 on initial cc changes is to be expected (given midi).

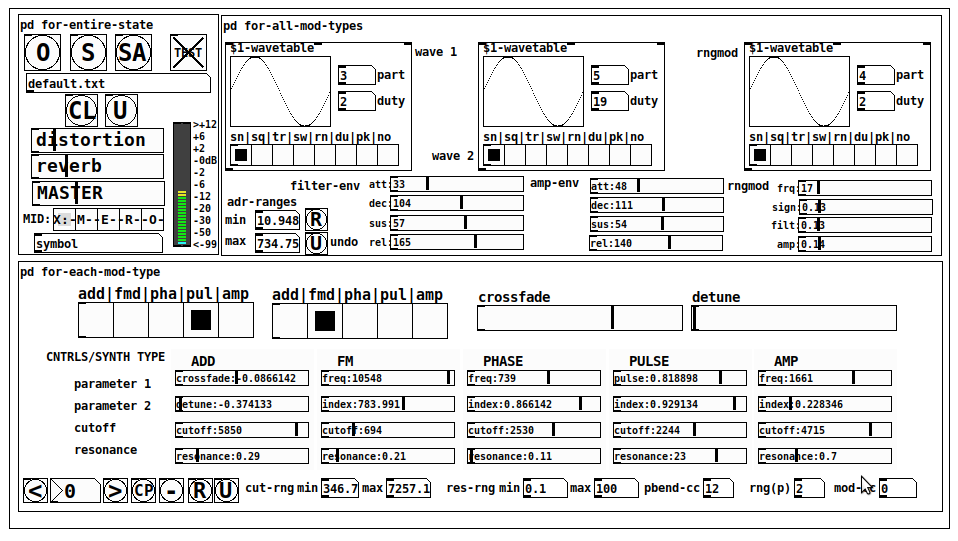

JASS is a clone-based, three-wavetable, 16-voice polyphonic, dual-channel synth.

With...

- The first two wavetables, combined in 1 of 5 possible ways per channel, with the two channels then added together. Example: additive + frequency modulation, phase + pulse modulation, pulse modulation + amplitude modulation, fm + fm, etc.

- The third wavetable is a ring modulator, embedded inside each mod type

- 8 wave types, including a random wave with a settable number of partials and a square wave with a settable duty cycle

- A vcf~ filter embedded inside each modulation type

- Settable attack-decay-release, cutoff, and resonance ranges; changing a range immediately and globally recalculates all relevant values

- Four parameters per mod type: p1, p2, cutoff, and resonance

- State-saving at the global level (wavetables, env, etc.) as well as in multiple "substates" of per-mod-type settings.

- Distortion, reverb

- Midi in, with special attention to 8-knob usb midi controllers (see below for details)

- zexy-limiters, for each channel, after the distortion, and just before dac~
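To make the "random with a settable number of partials" wave type a little more concrete, here is a minimal Python sketch of a random sinesum table. It is not taken from the JASS patch: the function name `random_sinesum`, the table size of 512, the uniform random amplitudes, and the peak normalization are all assumptions made for illustration.

```python
import math
import random


def random_sinesum(num_partials, table_size=512, seed=None):
    """Return one cycle of a wavetable built from random-amplitude sine partials.

    Hypothetical helper: the amplitude distribution and the normalization
    are assumptions, not the behaviour of the actual patch.
    """
    rng = random.Random(seed)
    # One random amplitude per partial, uniform in [0, 1).
    amps = [rng.random() for _ in range(num_partials)]
    table = []
    for i in range(table_size):
        phase = 2.0 * math.pi * i / table_size
        # Partial k+1 runs at (k+1) times the fundamental frequency.
        table.append(sum(a * math.sin((k + 1) * phase) for k, a in enumerate(amps)))
    # Scale to a peak of 1.0; "or 1.0" avoids dividing by zero for a silent table.
    peak = max(abs(s) for s in table) or 1.0
    return [s / peak for s in table]


table = random_sinesum(num_partials=4, seed=1)
```

Passing the same `seed` reproduces the same table, which loosely mirrors the idea of saving the resulting random sinesum with a preset.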

Instructions

Requires: zexy

for-entire-state

- O: Open preset. "default.txt" is loaded by...default

- S: Save preset (all values incl. the multiple substates) (Note: I have not included any presets besides the default with 5 substates.)

- SA: Save as

- TEST: A sample player

- symbol: The filename of the currently loaded preset

- CL: Clear, sets all but a few values to 0

- U: Undo CL

- distortion,reverb,MASTER: operate on the total out, just before the limiter.

- MIDI (Each selection corresponds to a pgmin, 123,124,125,126,127, respectively, see below for more information)

- X: Default midi config, cc[1,7,8-64] available

- M: Modulators; cc[10-17] routed to ch1 & ch2: p1, p2, cutoff, q controls

- E: Envelopes; cc[10-17] routed to filter- and amp-env controls

- R: Ranges; cc[10-17] routed to adr min/max, cutoff min/max, resonance min/max, distortion, and reverb

- O: Other; cc[10-17] routed to rngmod controls, 3 wavetypes, and crossfade

- symbol: you may enter 8 cc#'s here to replace the default [10-17] from above to suit your midi-controller's knob configuration; these settings are saved to file upon entry

- vu: for total out to dac~
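The MIDI mode selection described in the list above can be sketched roughly as follows. The program numbers 123-127, the mode letters, and the default knob range cc 10-17 come from the list; the names `MODE_BY_PROGRAM`, `select_mode`, and `knob_index` are hypothetical, and this is only an illustration of the idea in Python, not how the patch itself is built.

```python
# Program-change number -> mode letter, as listed above (X, M, E, R, O).
MODE_BY_PROGRAM = {123: "X", 124: "M", 125: "E", 126: "R", 127: "O"}

# Default 8 knob cc numbers; the readme says these can be replaced by the user.
DEFAULT_KNOB_CCS = [10, 11, 12, 13, 14, 15, 16, 17]


def select_mode(program, current="X"):
    """Return the mode for a program change, keeping the current mode otherwise."""
    return MODE_BY_PROGRAM.get(program, current)


def knob_index(cc, knob_ccs=DEFAULT_KNOB_CCS):
    """Return the knob slot (0-7) for a cc number, or None if it is not a knob."""
    try:
        return knob_ccs.index(cc)
    except ValueError:
        return None


mode = select_mode(125)   # "E": the 8 knobs now address the envelope controls
slot = knob_index(12)     # 2: third knob of the controller
```

A controller whose knobs send, say, cc 20-27 would pass its own list as `knob_ccs`, which corresponds to entering 8 cc numbers in the symbol box.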

for-all-mod-types

- /wavetable

- graph: of the chosen wavetype

- part: partials, # of partials to use for the "rn" wavetype; the resulting, random sinesum is saved with the preset

- duty: dutycycle for the "du" wavetype

- type: sin | square | triangle | saw | random | duty | pink (pink-noise: a random sinesum with 128 partials, it is not saved with the preset) | noise (a random sinesum with 2051 partials, also not saved)

- filter-env: (self-explanatory)

- amp-env: (self-explanatory)

- rngmod: self-explanatory, except that "sign" is applied to the modulated signal just before it goes into the vcf~

- adr-range: min, max [0-10000]; changing these values immediately recalculates all values for the filter- and amp-envs, scaled to the new range

- R: randomizes all for-all-mod-types values, but excludes wavetype "noise"; rem: you must S or SA the preset to save the results

- U: Undoes R
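As a rough illustration of what "scaled to the new range" could mean for the adr-range item above, here is a minimal Python sketch of a linear rescale. It assumes the recalculation is a plain linear mapping from the old min/max to the new min/max; the readme does not state the exact formula, and the function name `rescale` is hypothetical.

```python
def rescale(value, old_min, old_max, new_min, new_max):
    """Map value from [old_min, old_max] onto [new_min, new_max] linearly.

    Assumption: a plain linear mapping; the real patch may scale differently.
    """
    if old_max == old_min:
        # Degenerate old range: fall back to the bottom of the new range.
        return new_min
    # Relative position of the value inside the old range (0.0 to 1.0).
    t = (value - old_min) / (old_max - old_min)
    return new_min + t * (new_max - new_min)


# An attack time at the middle of a 0-1000 range stays at the middle of 0-2000.
new_attack = rescale(500, 0, 1000, 0, 2000)
```

Under this assumption every envelope value keeps its relative position when the range changes, so a knob at its midpoint stays at its midpoint.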