Where does latency come from in Pure Data?

@whale-av Thanks.

It seems the MIDI clock patch does not reset properly. Or, there should be a delay of some sort when syncing to MIDI clock.

My apologies, this went a bit too far out of scope.

I can nudge the MIDI clock input to 0, and sync it properly.

Just need to figure out how to nudge it automatically.

Thanks a lot,

Raymond

EDIT:

I am resetting the PPQN counter to 0 on FA (MIDI clock PLAY).

But this does not sync properly.

According to the MIDI spec, the clock SOURCE should give the target some delay to sync (1 ms). Figuring out how to implement that...

24 is a weird number
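For reference, here is a minimal Python sketch of the counting logic discussed above, not the actual Pd patch. It assumes the usual MIDI real-time status bytes (F8 clock, FA start, FB continue, FC stop) and 24 clocks per quarter note; the `ClockFollower` class and its `feed` method are hypothetical names for illustration. The point it tries to show is that the counter is zeroed on FA and the first F8 after it is treated as tick 0, i.e. the downbeat.

```python
# MIDI System Real-Time status bytes.
CLOCK, START, CONTINUE, STOP = 0xF8, 0xFA, 0xFB, 0xFC
PPQN = 24  # timing-clock messages per quarter note


class ClockFollower:
    """Hypothetical helper: counts MIDI clocks and reports quarter-note beats."""

    def __init__(self):
        self.running = False
        self.ticks = 0  # clocks counted since the last Start

    def feed(self, status):
        """Process one real-time byte.

        Returns the quarter-note index when a clock lands on a beat,
        otherwise None.
        """
        if status == START:
            # Reset here; the NEXT clock is tick 0 (the downbeat).
            self.running = True
            self.ticks = 0
            return None
        if status == CONTINUE:
            # Resume without touching the counter.
            self.running = True
            return None
        if status == STOP:
            self.running = False
            return None
        if status == CLOCK and self.running:
            beat = self.ticks // PPQN if self.ticks % PPQN == 0 else None
            self.ticks += 1
            return beat
        return None  # clocks while stopped, or unrelated bytes, are ignored
```

If the target counts the clock that arrives before FA, or increments before checking for the beat, it ends up one tick off, which may be the kind of offset that needs nudging.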

zl look up - how to load a list to right inlet

@KMETE said:

when I try to type a number inside a number box it is not working. The only way for changing the numbers is by clicking the mouse and scrolling. There is no way to type into number boxes?

OK.

If you create a button or toggle, you might notice that:

- If you are not in edit mode, clicking on the object produces the output.

- If you are in edit mode, clicking on the object selects it but does not trigger output.

- If you are in edit mode, ctrl-clicking on the object produces output.

Number boxes behave the same way. Not in edit mode, you can click and type a number. In edit mode, ctrl-click allows you to type. I just confirmed that this is true in PlugData as well as the vanilla Pd GUI.

(On Mac, cmd- instead of ctrl-.)

In both interfaces, use ctrl-E to toggle between edit and performance modes. In PlugData, the + icon in the toolbar is dimmed when not editing (AFAICS this is the only way to know which mode you're in).

Edit edit... one minor interface goof in PlugData: in performance mode, clicking a number box turns the arrowhead blue (so you know it's typeable). In edit mode, ctrl-click does not turn it blue but it's still typeable (I tried it, no problem).

hjh

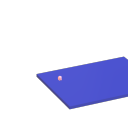

How to create a multi-mode knob?

I've been building some patches for the Befaco LICH modular platform, which has potentiometers for the parameters that come through the framework as floats in [0-1].

Having run out of knobs on the LICH... I'd like to make it multi-mode so that each knob can control multiple parameters, one per mode. The knob should remember (and output) each mode's value as the modes are changed. In the event that the knob and its value get out of sync, it should have a simple way to re-sync the control, like turning it past its previous value.

I have a couple "working-ish" versions but I feel like I'm working too hard at it (against the system?) and there is a more straight-fwd approach that I am not seeing. Does anybody have any experience or thoughts on handling a multi-mode parameter gracefully and implementing it in Pd?
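One common way to handle this is "pickup" (soft takeover): after a mode change the knob is ignored until it crosses the stored value for that mode. Below is a minimal Python sketch of that logic, as an illustration to port to Pd rather than a LICH-specific implementation. The `MultiModeKnob` class and its method names are hypothetical, and it assumes knob positions arrive as floats in 0..1.

```python
class MultiModeKnob:
    """One physical knob (0..1) shared by several parameters, with pickup."""

    def __init__(self, n_modes, initial=0.0):
        self.values = [initial] * n_modes  # remembered value per mode
        self.mode = 0
        self.last_pos = None   # last physical knob position seen
        self.latched = True    # True: the knob currently drives the value

    def set_mode(self, mode):
        """Switch mode and return that mode's remembered value."""
        self.mode = mode
        # The knob position no longer matches the stored value,
        # so ignore the knob until it crosses that value.
        self.latched = False
        return self.values[mode]

    def turn(self, pos):
        """Feed a new knob position; return the current mode's value."""
        stored = self.values[self.mode]
        if not self.latched and self.last_pos is not None:
            # Pick up once the movement passes over (or lands on) the stored value.
            lo, hi = sorted((self.last_pos, pos))
            if lo <= stored <= hi:
                self.latched = True
        self.last_pos = pos
        if self.latched:
            self.values[self.mode] = pos
        return self.values[self.mode]
```

In Pd terms this is roughly one stored float per mode, the previous knob value, and a gate that opens when the stored value lies between the previous and current knob readings.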

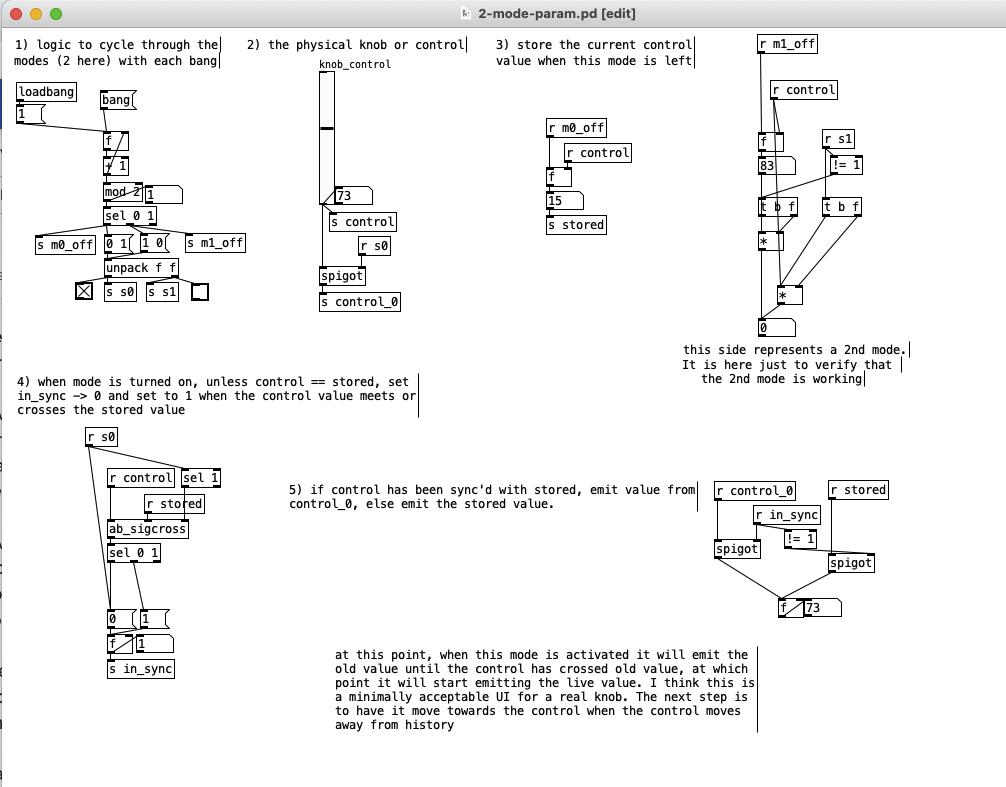

Just Another (Drum) Sequencer...SortOf, codename: Virgo

REQUIRES: zexy, moonlib, tof (as of Pd 0.50.2, all of which are in deken) and hcs (which comes by default with Pd 0.50.2 and is in deken (for extended))

Special Features

- Unique playhead per row; each with their own metro (beat)

- Up to 8 volume states per beat (set by clicking multiple times on the bang), where an rms of 1 is divided among the states (2 states: 0=rms 0 (black), 1=rms 1 (red); 3 states: rms=[0|0.5|1])

- Design approach: using creation arguments to alias abstractions, so that subsequently they are referred to by their creation arguments; e.g. in [KITS sample], sample is referred to as [$1], which is how they are listed below

(Notes: I will share what I learned experimenting with this design approach as a separate post. Currently, it does not include cut-copy-paste (of regions of the pattern). A good way to start trying it out is clicking the "R" to get a random kit and a random pattern.)
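As a rough illustration of the volume states, here is a small Python sketch of the state-to-gain mapping. It is inferred only from the two examples in the feature list (2 states give 0 and 1; 3 states give 0, 0.5 and 1), so the `states - 1` divisor is an assumption; the per-row description further down words it as value/states. The function name `state_gain` is hypothetical.

```python
def state_gain(value, states):
    """Map a beat's click state to an rms gain in 0..1.

    Assumption: the states are spread evenly from 0 (off) to 1 (full),
    which matches the 2-state and 3-state examples given in the post.
    """
    if not 2 <= states <= 8:
        raise ValueError("states must be in 2..8")
    if not 0 <= value < states:
        raise ValueError("value must be in 0..states-1")
    return value / (states - 1)


# The two cases spelled out in the feature list:
two_state = [state_gain(v, 2) for v in range(2)]
three_state = [state_gain(v, 3) for v in range(3)]
```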

virgo:[virgo/PROJECT KITS PATTERNS]

- PROJECT[KITS PATTERNS]

- $1:[KITS sample]

- GUI

- K: openpanel to load a previously saved *.txt (text object) kit of samples; on loadbang the default.txt kit is loaded

- S: save the current set of samples to the most recently opened *.txt (kit) preset

- SA: saveas a *.txt of the current set of samples

- D: folderpanel a sample directory to load the first (alphabetically) 16 samples into the 16 slots

- RD: load a random kit from the [text samples] object where the samples were previously loaded via the "SAMPLES" bang on the right

- U: undo; return to the previously opened or saved *.txt kit, so not the previously randomized

- MASTER: master gain

- (recorder~: of the total audio~ out)

- record

- ||: pause; either recording or play;

- play: output is combined with the sequencer output just before MASTER out to [dac~]

- SAMPLES: folderpanel to load a (recursive) directory of samples for generating random kits

- ABSTRACTIONS

- $1: sample

- bang: openpanel to locate and load a sample for a track

- canvas: filename of the opened sample; filenames are indexed in alignment with track indices in the PATTERNS section

- $2:[PATTERNS row]

- GUI

- P: openpanel to load a previously saved *.txt (pattern) preset file; on loadbang the default.txt pattern is loaded; the preset file includes the beat, pattern, and effect settings for the row

- S: save the current pattern to the most recently opened pattern .txt

- SA: save as (self-explanatory)

- states: the number of possible states [2..8] of each beat;

- %: weight; chance of a beat being randomized, not chance of what it will result in; ex. 100% implies all beats are randomized; random beats result in a value (gain) between 1 and states-1

- PLAY(reset): play the pattern from "start" or on stop reset all playheads to start

- start: which beat to start the playheads on

- length: how many beats to play [+/-32]; if negative the playheads will play in reverse/from right to left

- bpm: beats-per-minute

- rate: to change the rate of play (ie metro times) by the listed factor for all playheads

- R: randomize the total pattern (incl period and beats, but not the effect settings; beats of 1/32 are not included in the possibilities)

- CL: clear, set all beats to "0", i.e. off

- U: undo random; return to the previously opened or saved preset, ie. not the previous random one

- M: mute all tracks; the playheads continue moving but audio does not come out of any track

- ||:pause all playheads; play will resume from that location when un-paused

- per: period; if 0=randomizes the period, >0 sets the period to be used for all beats

- Edit Mode

- Check the [E] to enter edit mode (to cut, copy, or paste selected regions of the pattern)

- Entering edit mode will pause the playing of the pattern

- Playback, if it was running beforehand, will resume on leaving edit mode

- The top-left most beat of the pattern grid will be selected when first entering edit mode

- Single-click a beat to select the top-left corner of the region you wish to cut or copy

- Double-click a beat to select the bottom-right corner

- You may not double-click a beat "less than" the single-clicked (top-left) beat and vice-versa

- Click [CL] to clear your selection (i.e. start over)

- The selected region will turn to dark colors

- If only one beat is selected it will be the only one darkened

- Click the operation (bang) you wish to perform, either cut [CU] or copy [CP]

- Then, hold down the CTRL key and click the top-left corner of where you want to paste the region

- The clicked cell will turn white

- And click [P] to paste the region

- Cut and copied regions may both be pasted multiple times

- The difference being, cutting sets the values (gains) for the originating region to "0"

- Click [UN] to undo either the cut, copy, or paste operation

- Undoing cut will return the gains from 0s to their original value

- (effect settings applied to all tracks)

- co: vcf-cutoff

- Q: vcf-q

- del: delay-time

- fb: delay-feedback

- dist: distortion

- reverb

- gn: gain

- ABSTRACTIONS

- $1: [row (idx) b8] (()=a property not an abstraction)

- GUI

- (index): aligns with the track number in the KITS section

- R: randomize the row; same as above, but for the row

- C: clear the row, i.e. set all beats to 0

- U: undo the randomize; return to the originally opened one, ie. not the previous random one

- M: mute the row, so no audio plays, but the playhead continues to play

- S: solo the row

- (beat): unit of the beat(period); implying metro length (as calculated with the various other parameters);1/32,1/16,1/8, etc.

- (pattern): the pattern for the row; single-click on a beat from 0 to 8 times to increment the gain of that beat as a fraction of 1 rms, where resulting rms=value/states; black is rms=0; if all beats for a row =0 (are black) then the switch for that track is turned off; double-click it to decrement it

- (effects-per-row): same as above, but per-row, ex. first column is vcf-cutoff, second is vcf-q, etc.

- ABSTRACTIONS

- $1: b8 (properties:row column)

- 8-state bang: black, red, orange, yellow, green, light-blue, blue, purple; representing a fraction of rms(gain) for the beat

Credits: The included drum samples are from: https://www.musicradar.com/news/sampleradar-494-free-essential-drum-kit-samples

p.s. Though I began working on cut-copy-paste, it began to pose a Huge challenge, so backed off, in order to query the community as to 1) its utility in the current state (w/o that) and 2) just how important including it really is.

p.p.s. Please report any inconsistencies (between the instructions as listed and what it does) and/or bugs you may find, and I will try to get an update posted as soon as enough of those have been collected.

Love and Peace through sharing,

Scott

Externals in Purr-Data

there we go:

tried ./pd_helios.d_ppc and failed

tried ./pd_helios.pd_darwin and failed

tried ./pd_helios/pd_helios.d_ppc and failed

tried ./pd_helios/pd_helios.pd_darwin and failed

tried ./pd_helios/pd_helios-meta.pd and failed

tried ./pd_helios.pd_lua and failed

tried ./pd_helios.pd and failed

tried ./pd_helios.pat and failed

tried ./pd_helios/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios-meta.pd and failed

tried /Library/Pd-l2ork/pd_helios.pd_lua and failed

tried /Library/Pd-l2ork/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.pat and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd and failed

tried /System/Library/Fonts/pd_helios.d_ppc and failed

tried /System/Library/Fonts/pd_helios.pd_darwin and failed

tried /System/Library/Fonts/pd_helios/pd_helios.d_ppc and failed

tried /System/Library/Fonts/pd_helios/pd_helios.pd_darwin and failed

tried /System/Library/Fonts/pd_helios/pd_helios-meta.pd and failed

tried /System/Library/Fonts/pd_helios.pd_lua and failed

tried /System/Library/Fonts/pd_helios.pd and failed

tried /System/Library/Fonts/pd_helios.pat and failed

tried /System/Library/Fonts/pd_helios/pd_helios.pd and failed

tried /Library/Fonts/pd_helios.d_ppc and failed

tried /Library/Fonts/pd_helios.pd_darwin and failed

tried /Library/Fonts/pd_helios/pd_helios.d_ppc and failed

tried /Library/Fonts/pd_helios/pd_helios.pd_darwin and failed

tried /Library/Fonts/pd_helios/pd_helios-meta.pd and failed

tried /Library/Fonts/pd_helios.pd_lua and failed

tried /Library/Fonts/pd_helios.pd and failed

tried /Library/Fonts/pd_helios.pat and failed

tried /Library/Fonts/pd_helios/pd_helios.pd and failed

tried /Users/didipiman/Library/Fonts/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios-meta.pd and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pd_lua and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pd and failed

tried /Users/didipiman/Library/Fonts/pd_helios.pat and failed

tried /Users/didipiman/Library/Fonts/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/pd_helios/pd_helios.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios-meta.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pd_lua and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios.pat and failed

tried /Users/didipiman/Library/Pd-l2ork/pd_helios/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.d_ppc and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd_darwin and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios-meta.pd and failed

tried /Library/Pd-l2ork/pd_helios.pd_lua and failed

tried /Library/Pd-l2ork/pd_helios.pd and failed

tried /Library/Pd-l2ork/pd_helios.pat and failed

tried /Library/Pd-l2ork/pd_helios/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pd_lua and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/pd_helios.pd and failed

pd_helios: can't load library
```

Externals in Purr-Data

i see! sorry about that!

here's what i get. i'm pasting as much as i can here. i can paste more if you want, but perhaps this gives you an idea already? hope so. guessing the startup preferences are not right, but i'm not sure what to fix, sorry!
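A trace like the one that follows is easier to read once it is condensed to the distinct directories that were searched for a given library. Here is a minimal sketch of that, assuming every loader line has the form `tried <path> and failed` as in this log. The helper name `searched_dirs` is made up for illustration and is not part of Pd or Purr Data.

```python
import posixpath
import re

# Loader trace lines look like: "tried /Library/Pd-l2ork/libdir.pd and failed"
TRIED = re.compile(r"^tried (?P<path>.+) and failed$")


def searched_dirs(log_text, name):
    """Return the distinct directories where `name` was looked up, in log order.

    Hypothetical helper: it only parses console text, it does not query Pd.
    """
    dirs = {}  # dict keeps insertion order, so the search order is preserved
    for line in log_text.splitlines():
        match = TRIED.match(line.strip())
        if not match:
            continue  # skip banner lines, audio settings, etc.
        path = match.group("path")
        filename = posixpath.basename(path)
        # Keep only candidates for the requested library (or its -meta file).
        if filename.split(".")[0] not in (name, name + "-meta"):
            continue
        head = posixpath.dirname(path)
        # Candidates of the form <dir>/<name>/<name>.<ext> are counted under <dir>.
        if posixpath.basename(head) == name:
            head = posixpath.dirname(head)
        dirs.setdefault(head or ".", None)
    return list(dirs)


if __name__ == "__main__":
    sample = "\n".join([
        "tried ./libdir.d_ppc and failed",
        "tried ./libdir/libdir.pd and failed",
        "tried /Library/Pd-l2ork/libdir.pd_darwin and failed",
        "rate 44100",
    ])
    for directory in searched_dirs(sample, "libdir"):
        print(directory)
```

Comparing the resulting list against the folder where the external actually lives shows whether that folder is on the search path at all. One caveat: a search directory whose own name equals the library name is indistinguishable from the subfolder form and gets reported as its parent.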

Welcome to Purr Data

nw.js version 0.28.1

GUI is starting Pd...

GUI listening on port 5400 on host 127.0.0.1

gui_path is /Applications/Pd-l2ork.app/Contents/Resources/app.nw

binary is /Applications/Pd-l2ork.app/Contents/Resources/app.nw/bin/pd-l2ork

Pd started.

incoming connection to GUI

canvasinfo: v0.1

stable canvasinfo methods: args dir dirty editmode vis

classinfo: v.0.1

stable classinfo methods: size

objectinfo: v.0.1

stable objectinfo methods: class

pdinfo: v.0.1

stable pdinfo methods: dir dsp version

[import] $Revision: 1.2 $

[import] is still in development, the interface could change!

compiled against Pd-l2ork version 2.15.2 (20201102-rev.a81c9ef5)

PD_FLOATSIZE = 32 bits

success reading preferences from: /Users/didipiman/Library/Preferences/org.puredata.pd-l2ork

input channels = 2, output channels = 2

working directory is /Users/didipiman

input channels = 2, output channels = 2

input device 0, channels 2

output device 0, channels 2

framesperbuf 64, nbufs 13

rate 44100

... opened OK.

tried ./libdir.d_ppc and failed

tried ./libdir.pd_darwin and failed

tried ./libdir/libdir.d_ppc and failed

tried ./libdir/libdir.pd_darwin and failed

tried ./libdir.pd and failed

tried ./libdir.pat and failed

tried ./libdir/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.pat and failed

tried /Library/Pd-l2ork/libdir/libdir.pd and failed

tried /System/Library/Fonts/libdir.d_ppc and failed

tried /System/Library/Fonts/libdir.pd_darwin and failed

tried /System/Library/Fonts/libdir/libdir.d_ppc and failed

tried /System/Library/Fonts/libdir/libdir.pd_darwin and failed

tried /System/Library/Fonts/libdir.pd and failed

tried /System/Library/Fonts/libdir.pat and failed

tried /System/Library/Fonts/libdir/libdir.pd and failed

tried /Library/Fonts/libdir.d_ppc and failed

tried /Library/Fonts/libdir.pd_darwin and failed

tried /Library/Fonts/libdir/libdir.d_ppc and failed

tried /Library/Fonts/libdir/libdir.pd_darwin and failed

tried /Library/Fonts/libdir.pd and failed

tried /Library/Fonts/libdir.pat and failed

tried /Library/Fonts/libdir/libdir.pd and failed

tried /Users/didipiman/Library/Fonts/libdir.d_ppc and failed

tried /Users/didipiman/Library/Fonts/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/libdir/libdir.d_ppc and failed

tried /Users/didipiman/Library/Fonts/libdir/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/libdir.pd and failed

tried /Users/didipiman/Library/Fonts/libdir.pat and failed

tried /Users/didipiman/Library/Fonts/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/list-abs/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mapping/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/markex/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/maxlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/memento/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/mjlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/motex/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/oscx/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pddp/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pdogg/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pixeltango/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/rradical/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/sigpack/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/smlib/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/unauthorized/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pan/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/hcs/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/jmmmp/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ext13/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ggee/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/ekext/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/disis/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/lyonpotpourri/libdir/libdir.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir/libdir.d_ppc and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir/libdir.pd_darwin and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.pd and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir.pat and failed

tried /Users/didipiman/Library/Pd-l2ork/libdir/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir/libdir.d_ppc and failed

tried /Library/Pd-l2ork/libdir/libdir.pd_darwin and failed

tried /Library/Pd-l2ork/libdir.pd and failed

tried /Library/Pd-l2ork/libdir.pat and failed

tried /Library/Pd-l2ork/libdir/libdir.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir/libdir.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/libdir/libdir.pd_darwin and succeeded

libdir loader 1.10

compiled on Nov 3 2020 at 11:39:53

compiled against Pd version 0.48.0.

tried ./cyclone.d_ppc and failed

tried ./cyclone.pd_darwin and failed

tried ./cyclone/cyclone.d_ppc and failed

tried ./cyclone/cyclone.pd_darwin and failed

tried ./cyclone/cyclone-meta.pd and failed

tried ./cyclone.pd and failed

tried ./cyclone.pat and failed

tried ./cyclone/cyclone.pd and failed

tried /Library/Pd-l2ork/cyclone.d_ppc and failed

tried /Library/Pd-l2ork/cyclone.pd_darwin and failed

tried /Library/Pd-l2ork/cyclone/cyclone.d_ppc and failed

tried /Library/Pd-l2ork/cyclone/cyclone.pd_darwin and failed

tried /Library/Pd-l2ork/cyclone/cyclone-meta.pd and failed

tried /Library/Pd-l2ork/cyclone.pd and failed

tried /Library/Pd-l2ork/cyclone.pat and failed

tried /Library/Pd-l2ork/cyclone/cyclone.pd and failed

tried /System/Library/Fonts/cyclone.d_ppc and failed

tried /System/Library/Fonts/cyclone.pd_darwin and failed

tried /System/Library/Fonts/cyclone/cyclone.d_ppc and failed

tried /System/Library/Fonts/cyclone/cyclone.pd_darwin and failed

tried /System/Library/Fonts/cyclone/cyclone-meta.pd and failed

tried /System/Library/Fonts/cyclone.pd and failed

tried /System/Library/Fonts/cyclone.pat and failed

tried /System/Library/Fonts/cyclone/cyclone.pd and failed

tried /Library/Fonts/cyclone.d_ppc and failed

tried /Library/Fonts/cyclone.pd_darwin and failed

tried /Library/Fonts/cyclone/cyclone.d_ppc and failed

tried /Library/Fonts/cyclone/cyclone.pd_darwin and failed

tried /Library/Fonts/cyclone/cyclone-meta.pd and failed

tried /Library/Fonts/cyclone.pd and failed

tried /Library/Fonts/cyclone.pat and failed

tried /Library/Fonts/cyclone/cyclone.pd and failed

tried /Users/didipiman/Library/Fonts/cyclone.d_ppc and failed

tried /Users/didipiman/Library/Fonts/cyclone.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone.d_ppc and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone.pd_darwin and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone-meta.pd and failed

tried /Users/didipiman/Library/Fonts/cyclone.pd and failed

tried /Users/didipiman/Library/Fonts/cyclone.pat and failed

tried /Users/didipiman/Library/Fonts/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cyclone/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/pd_helios/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/zexy/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/creb/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/cxc/cyclone/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone.d_ppc and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone.pd_darwin and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone-meta.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.pd and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone.pat and failed

tried /Applications/Pd-l2ork.app/Contents/Resources/app.nw/extra/iemlib/cyclone/cyclone.pd and failed

Pd vanilla - gate - steady time interval

@JMC64 said:

I am struggling to create an externally synced LFO that receives a clock from an external source, for instance a sawtooth ([phasor~]) receiving a tick (bang) from the emitter.

But the emitter of the clock is of course not perfect, and the clock is not completely steady.

What clock-source is this?

An audio signal? A MIDI clock?

Keep in mind that Pd's control/message domain, including MIDI I/O and most control objects (such as [snapshot~]), has a resolution of only 64 samples.

Is this the cause of jitter here?
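
To get a feeling for how much jitter that 64-sample grid alone could explain, here is a rough back-of-the-envelope sketch in Python. The 44.1 kHz sample rate, the 24 ticks per beat and the function names are my assumptions, not something from the original post. At those settings one block is roughly 1.45 ms, and a single tick interval measured one block short or long at 120 BPM would read as very roughly 112 to 129 BPM.

```python
def block_ms(block_size=64, sample_rate=44100.0):
    """Length of one DSP block in milliseconds (64 samples assumed)."""
    return 1000.0 * block_size / sample_rate


def apparent_bpm_range(bpm, ticks_per_beat=24, block_size=64, sample_rate=44100.0):
    """BPM computed from ONE tick interval that is off by one block either way.

    ticks_per_beat=24 is an assumption (MIDI clock); use 1 for one bang per beat.
    """
    true_interval = 60000.0 / (bpm * ticks_per_beat)  # ms between two ticks
    err = block_ms(block_size, sample_rate)           # worst-case timing error
    low = 60000.0 / (ticks_per_beat * (true_interval + err))
    high = 60000.0 / (ticks_per_beat * (true_interval - err))
    return low, high


print(round(block_ms(), 3))
low, high = apparent_bpm_range(120.0)
print(round(low, 1), round(high, 1))
```

With one bang per beat (`ticks_per_beat=1`) the same one-block error is far smaller relative to the interval, so measuring over longer spans already reduces the apparent jitter.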

With [tabsend~] you can access every sample of a block in the control domain.

I tried averaging the value of the time interval with a running average but this is not satisfactory.

I also do not want to change the average frequency of the LFO every time a tick is received, but only when a significant change occurs.

Maybe try the median, instead of a moving average?

Together with a hysteresis detecting greater changes, as @whale-av has suggested.
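
A minimal sketch of that idea in Python, just to show the logic before patching it in Pd. The class name, the window of 5 intervals and the 3 % threshold are hypothetical choices of mine, not values from this thread. The median throws away single outliers, and the hysteresis keeps the LFO period fixed until the median has moved by more than the threshold.

```python
from collections import deque
from statistics import median


class TempoTracker:
    """Median of the last N tick intervals, with a hysteresis band."""

    def __init__(self, window=5, threshold=0.03):
        self.intervals = deque(maxlen=window)  # most recent intervals in ms
        self.threshold = threshold             # relative change that counts as "real"
        self.period_ms = None                  # period currently used by the LFO

    def tick(self, interval_ms):
        """Feed the time since the previous tick; return the period to use."""
        self.intervals.append(interval_ms)
        candidate = median(self.intervals)
        if self.period_ms is None:
            self.period_ms = candidate
        elif abs(candidate - self.period_ms) > self.threshold * self.period_ms:
            # Only follow the clock when the median left the hysteresis band.
            self.period_ms = candidate
        return self.period_ms


tracker = TempoTracker()
for ms in [500, 498, 503, 499, 501]:   # jittery 120 BPM, one tick per beat
    print(tracker.tick(ms))
for ms in [461.5, 461.5, 461.5]:       # clock jumps to about 130 BPM
    print(tracker.tick(ms))
```

With a window of 5 the output only switches on the third tick at the new tempo, because the median needs a majority of the window before it moves. That is the unavoidable lag of any such filter: a shorter window reacts faster but rejects less jitter.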

leading to a desync with the incoming clock and a slow response to a real change of the external clock (e.g. 120 -> 130 BPM).

No averaging filter will be exact, by definition.

And to be "on time" at a change, you have to look ahead.

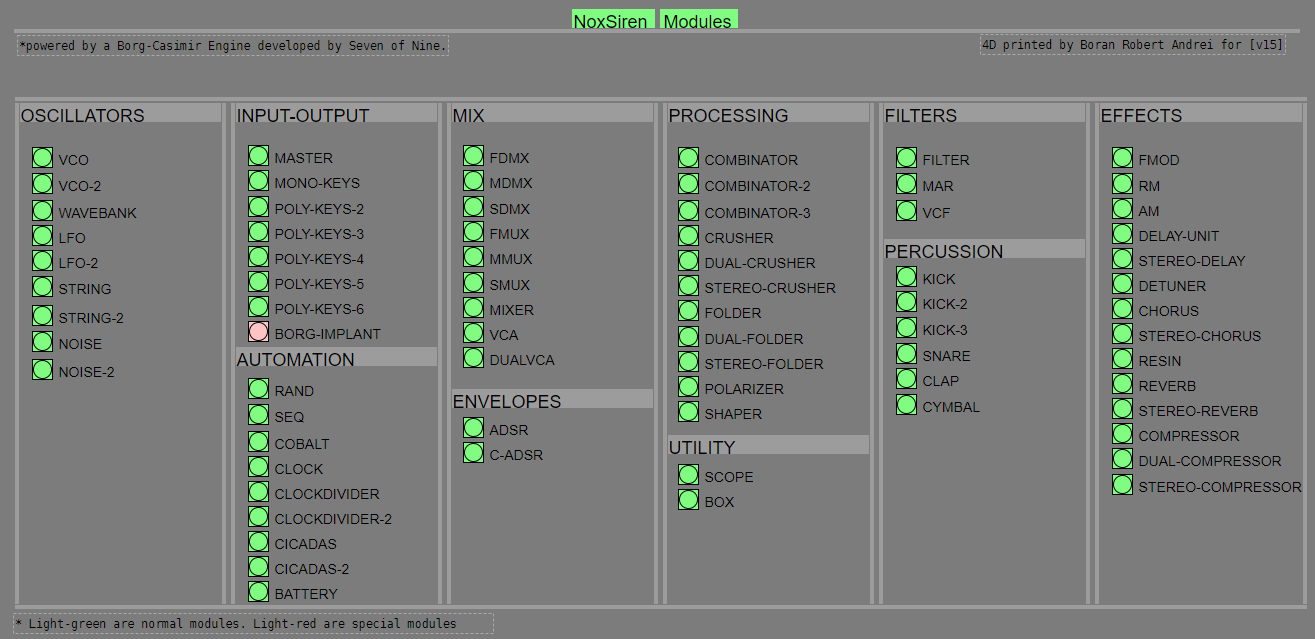

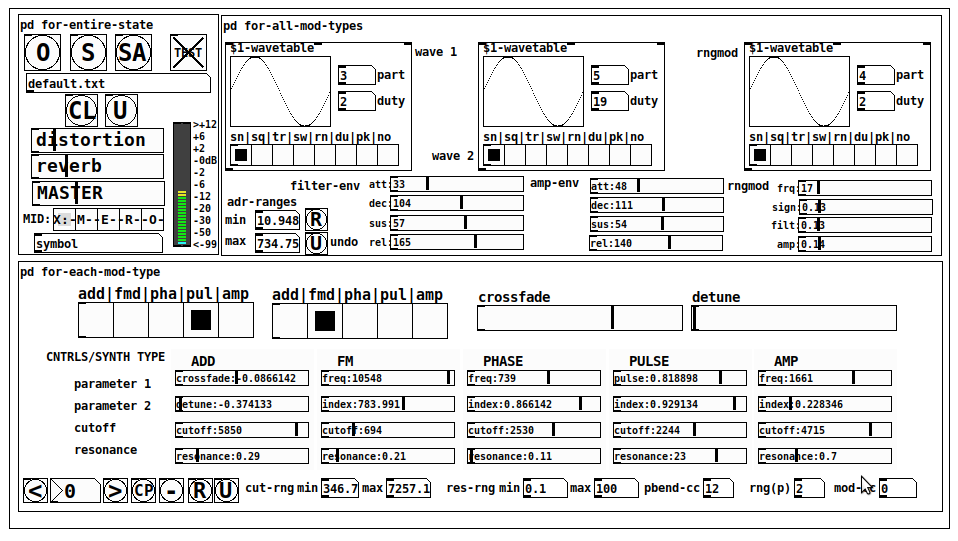

NoxSiren - Modular synthesizer system <- [v15]

NoxSiren is a modular synthesizer system where the punishment of failure is the beginning of a new invention.

--DOWNLOAD-- NoxSiren for :

- Pure Data :

NoxSiren v15.rar

NoxSiren v14.rar

- Purr Data :

NoxSiren v15.rar

NoxSiren v14.rar

--DOWNLOAD-- ORCA for :

- x64, OSX, Linux :

https://hundredrabbits.itch.io/orca

To connect the NoxSiren system to the ORCA system you also need virtual loopback MIDI ports:

--DOWNLOAD-- loopMIDI for :

- Windows 7 up to Windows 10, 32 and 64 bit :

https://www.tobias-erichsen.de/software/loopmidi.html

#-= Cyber Notes [v15] =-#

- added BORG-IMPLANT module.

- introduction to special modules.

- more system testing.