phasor-makenote connection

@mkdewolf I recommend a step-by-step approach, building from the bottom up rather than from the top down: bottom-up, you only have to learn one small thing at a time, whereas top-down you have to learn many things at once. That way, if something goes wrong at one of those small steps, you only need to examine the few things you added or changed to find where the problem is.

Start by simply controlling [phasor~] with a frequency slider. Put a number display box between your slider and the phasor so you can see what's going on. You will immediately notice that frequency is different than pitch, and that a frequency of 10 sounds more like a beat than a tone. Next you could look for a way to convert a pitch number (i.e. MIDI) to a frequency. @nicnut suggested something you could use. Next take a look at that tutorial I suggested in your other topic, A02.amplitude.pd. The key insight here is that you can make the note turn on by taking the volume from 0 to some positive value, and the positive value it ends up on represents velocity. Conversely, you can make the note turn off by turning the volume back down to 0. The timing between when the note turns on and when it turns off is your duration, so you would next need a way to automate that. A delay from when the note turns on is one possibility, but you've already discovered [makenote], so that's another way. @nicnut's suggestion, setting the frequency to 0 after some delay, is another possibility for note off but I'd bet that it has side effects you wouldn't like. (Or maybe you would--that's up to you!)
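To make the pitch-versus-frequency step concrete, here is a minimal sketch of the standard equal-temperament conversion from a MIDI note number to a frequency in Hz. It assumes the usual A4 = MIDI 69 = 440 Hz tuning; the function name simply mirrors Pd's [mtof] object, which performs this kind of conversion inside a patch.

```python
def mtof(midi_note: float) -> float:
    """Equal-tempered MIDI-to-frequency conversion.

    Assumes A4 (MIDI note 69) is tuned to 440 Hz and that each
    semitone multiplies the frequency by 2 ** (1 / 12).
    """
    return 440.0 * 2.0 ** ((midi_note - 69.0) / 12.0)


# A few reference notes: A3, middle C, A4
for note in (57, 60, 69):
    print(note, round(mtof(note), 2))
```

One octave (12 semitones) doubles the frequency, which is why a slider that feels linear in pitch has to be exponential in frequency.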

Even if you do all these steps, the result will still be pretty crude, but you can continue refining one bit at a time and get closer and closer to something good at each step. You can get ideas about how to refine your patch by looking at some of the other tutorials, e.g. C10.monophonic.synth.pd and J08.classicsynth.pd. Once you have something that you like well enough, then you can add note-velocity-duration sequences (maybe using [text] as @whale-av suggested in your other topic), and finally, you can work on generating sequences that are serialized.

PS: if you're really only interested in serialization (and don't really care about making a synth from scratch) then there are other options available to you. Reply if so and then I or someone else can suggest alternatives.

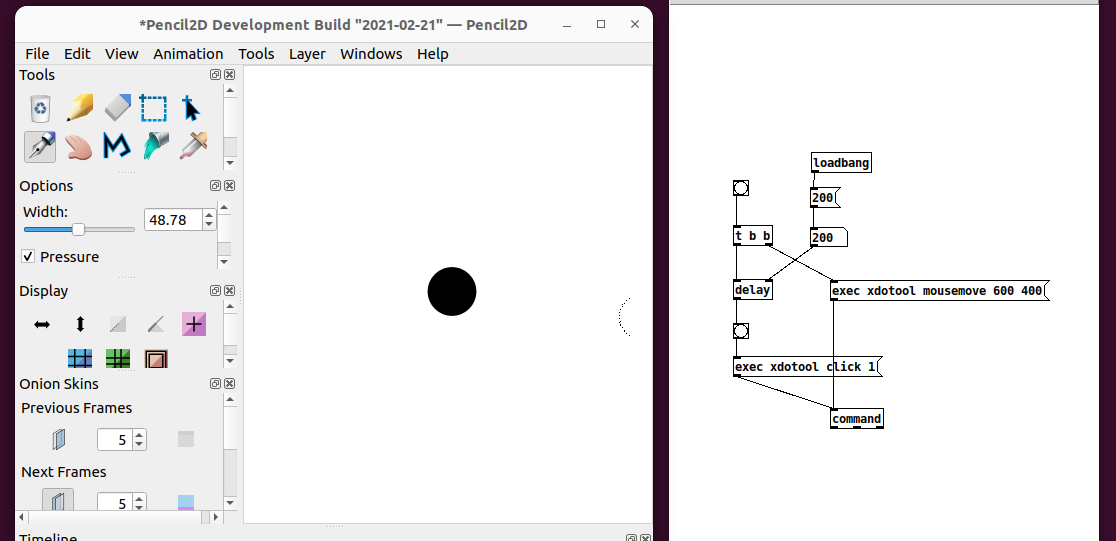

Controlling mouse position coordinates (Linux)

I think I found the problem.

This is my test.

I divide the display into two parts:

-on the left there is Pencil2D (a simple drawing app)

-on the right there is the Pure Data patch

By clicking on the bang in pd, a circle is drawn in Pencil2D, at the point of desired (x,y) coordinates.

Very nice.

Note: it also works without the "Windows id" (which isn't there).

So, this happens:

In Pure Data, it only works if I introduce a delay > 100ms between "mouse move" and "mouse click". With a delay < 100ms it doesn't work.

But...running directly from terminal it also works without delay:

me:~$ xdotool mousemove 600 400 && xdotool click 1

...a circle is drawn instantly, without delay.
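For experimenting with the delay outside of Pd, the same two xdotool calls can be scripted with an adjustable pause between them. This is only a sketch: the helper names `build_commands` and `move_then_click` are made up for illustration, and it assumes xdotool is installed and on the PATH.

```python
import subprocess
import time


def build_commands(x, y, button=1):
    """Return the two xdotool invocations from the terminal test as argv lists."""
    return [
        ["xdotool", "mousemove", str(x), str(y)],
        ["xdotool", "click", str(button)],
    ]


def move_then_click(x, y, delay_s=0.1, button=1):
    """Move the pointer, wait delay_s seconds, then click.

    Requires xdotool; raises CalledProcessError if either call fails.
    """
    move, click = build_commands(x, y, button)
    subprocess.run(move, check=True)
    time.sleep(delay_s)
    subprocess.run(click, check=True)


# Example (not run on import): move_then_click(600, 400, delay_s=0.1)
```

Trying different `delay_s` values here may help show whether the 100 ms threshold is specific to how Pd launches the commands or also depends on the target window.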

How do you explain that in puredata I have to insert a delay > 100ms?

Now, the problem is that...trying with other windows of other "non-drawing" programs, it doesn't always work: the click doesn't always have an effect. I have to understand why. Yet the arrow moves and the arrival window is highlighted (therefore it is active), but the click has no effect.

@oid : about your patch "cursor.pd", I wasn't able to use it for my purpose, but it's so cool... I didn't know it could be done, beautiful.

Bye,

a.

Avoid clicks controlling Time Delay with MIDI Knob

@Zooquest You will hear clicks because there will be jumps between samples.... if the playback was at a sample level of 0 and you change the delay time to a point where the level is 1 then the click will be loud.

You will hear "steps" as the playback point is probably being changed repeatedly by the ctrl messages.

You could experiment with a [lop~] filter in the delayed sound output, with a high value e.g... [lop~ 5000] but you will lose some high frequencies in the echo.

That might sound natural though, like an old tape echo, but you will probably still hear the clicks a little.

Or you could "duck" the echoes by not changing the delay time immediately, reducing the echo volume to zero in say 3msecs using [vline~] and not bringing the echo volume back up to 1 (in, say, 3msecs again) until you have NOT received a ctrl message to change the delay time...... for again 3msecs.

The last part of that would need a [delay 3] using the property that any new bang arriving at its inlet will cancel the previously scheduled bang.
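The retrigger behaviour of [delay 3] described above can be sketched outside Pd as a small simulation. This is only an illustration of the idea, not a patch: the function name `fade_in_times` and the list-of-timestamps input are made up for the example, and the timestamps are assumed to be sorted.

```python
def fade_in_times(msg_times_ms, hold_ms=3.0):
    """Times (ms) at which a retriggerable delay would finally fire.

    Each control message restarts the hold timer, cancelling the
    previously scheduled bang. The timer only fires if no newer
    message arrives before it expires.
    """
    fires = []
    for i, t in enumerate(msg_times_ms):
        deadline = t + hold_ms
        is_last = i == len(msg_times_ms) - 1
        if is_last or msg_times_ms[i + 1] > deadline:
            fires.append(deadline)
    return fires


# Two bursts of knob movement: the echo comes back only after each burst ends.
print(fade_in_times([0, 1, 2, 10, 11]))
```

In the patch, the first message of each burst would start the fade-out and update nothing else; the delayed bang would then set the new delay time and start the fade-in.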

You would need to duck the FB signal as well though, and all of that might sound worse than the [lop~].

I cannot remember well...... but this "sampler_1.pd" might contain elements useful to demonstrate "ducking" https://forum.pdpatchrepo.info/topic/13641/best-way-to-avoid-clicks-tabread4/12

Or do a crossfade between separate delays once the incoming control messages have stopped..... https://forum.pdpatchrepo.info/topic/12357/smooth-delay-line-change-without-artifacts ..... as you can then avoid the "duck"ing effect.

David.

Karplus strong and strange issues with fexpr~

@reubenm I never care about computational expense until it's a problem, and even then it sometimes generates some cool stuttering that I would never have thought of. But if that fexpr~ way of doing things speaks to you, then maybe you should look into SuperCollider. And I'm not trying to be a jerk, because as a former Java/C# programmer I'd probably prefer it!

Edit: Ouch! I think I'm confused about the delay line and forced order of execution. In KS you're feeding back the output of the delay line into the input, so either you have to have the delay line read upstream of the write (and so you have a min 1 block delay) or you have to send the delay line read signal back up to the delay line write (which also incurs a 1 block delay). So either way I think you have to reduce the block size to get frequencies above 689 Hz.
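The 689 Hz figure follows from the one-block minimum feedback delay: the shortest loop is one block long, so the highest fundamental is the sample rate divided by the block size. A quick sketch of the arithmetic, assuming a 44.1 kHz sample rate and Pd's default 64-sample block:

```python
def max_feedback_freq(sample_rate=44100, block_size=64):
    """Highest fundamental (Hz) when the feedback loop is at least one block long."""
    return sample_rate / block_size


# Smaller blocks raise the ceiling.
for block in (64, 32, 16, 8, 1):
    print(block, max_feedback_freq(44100, block))
```

With a block size of 1 the ceiling reaches the sample rate itself, at the price of more CPU inside the reblocked subpatch.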

count~ pause option?

@KMETE said:

edit: and my reason is that if I want to do a comparison of two numbers - let's say, if the timer that's running is larger than a constant number - if the timer does not output the numbers constantly I might miss this logic...

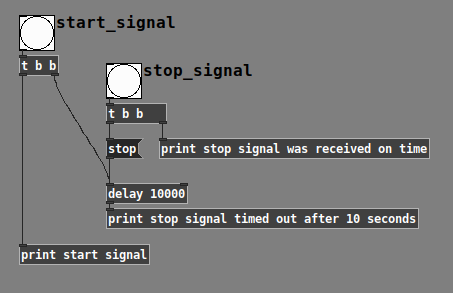

What you're talking about is a timeout situation -- checking whether something did or didn't happen within x amount of time.

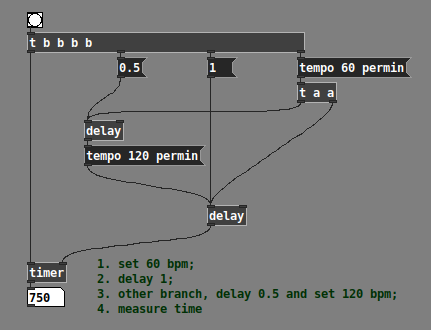

In the normal case, in Pd, you can do a timeout like this (no [metro], no constant polling):

Here, I'm using the word "start" and "stop," but they can be any messages being generated for any reason.

- If the user hits only "start," then the [delay] time expires, [delay] generates a bang, and you know that the elapsed time >= threshold.

- If the user hits "start" and then "stop" within the time limit, then 1/ you have the trigger from the user and 2/ the [delay] gets canceled (no erroneous timeout message).

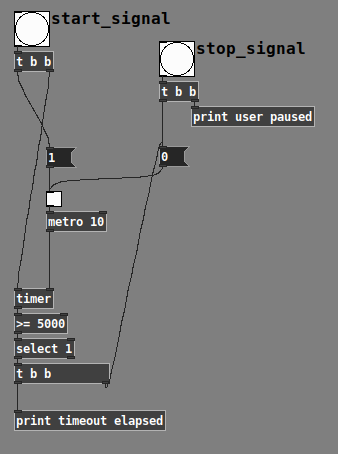

When you're talking about a timer that can pause, that complicates it a bit (because [delay] wouldn't know about the pause).

But, to be honest, based on your requirement from the other thread -- when audio playback pauses (because of user action or the 30-second(?) timeout), you're always resetting the timer. When the timer goes back to 0, then pause is irrelevant. So I kinda wonder if the original question in this post stems from an unclear requirement rather than an actual need (though it is a kinda cool abstraction, and I'll add it to my library later).

After all, if you only want to stop checking for the timeout based on user pause, you can just stop banging the [timer] at that point... then, no check, no false timeout, and no need to over-engineer. Or using the [delay] approach, just "stop" the delay.

So there's a polling timeout that is aware of paused status, without using a special pausable timer. So this idea that you have to keep banging the timing object and the timing object should be responsible for not advancing when paused is perhaps overcomplicated.

edit2: I could bang the poll message every 10ms using metro but then we again have an issue on cpu?

[timer] shouldn't use much CPU... it's probably fine, and if it's more comfortable for you, feel free to go that way. I'm just pointing out that brute force is not the only way here.

hjh

PlugData / Camomile "position" messages: What are these numbers?

@whale-av said:

@ddw_music Yes.... buffer.

Maybe some DAWs have implemented a tempo message since then?

Tempo must be available, since plug-ins have been doing e.g. tempo-synced delays for at least a decade already.

(In fact, it is available via Camomile: [route tempo position].)

Anyway... I've been considering solutions, and I think I can do this.

- Set some threshold close to the beat, but before the beat, say x.9 beats.

- For the first tick past the threshold, get "time to next beat" = roundUp(pos) - pos = int(pos + 0.99999999) - pos.

- [delay] by this many beats ([delay] and the tick-scheduler will have been fed "tempo x permin" messages all along).

- Then issue the tick message to the scheduler, in terms of whole beats (where the time-slice duration is always 1.0).
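The "time to next beat" step in the list above can be sketched as follows. The function names are made up for illustration, `math.ceil` stands in for the roundUp / `int(pos + 0.99999999)` expression, and the millisecond conversion assumes a constant tempo during the wait.

```python
import math


def beats_to_next_beat(pos):
    """Fraction of a beat remaining until the next whole beat."""
    return math.ceil(pos) - pos


def delay_ms(pos, bpm):
    """The same wait expressed in milliseconds at a constant tempo."""
    return beats_to_next_beat(pos) * 60000.0 / bpm


# First tick past the x.9 threshold, at 120 bpm:
print(delay_ms(3.9, 120))
```

A position that is already exactly on a beat gives a wait of zero, which matches the intent of the rounding expression in the list.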

At constant tempo, I'd expect this to be sub-ms accurate.

If the tempo is changing, especially a large, sudden change, then there might be some overlaps or gaps. But [delay] correctly handles mid-delay tempo changes, so I'd expect these to be extremely small, probably undetectable to the ear.

Half a beat at 60 bpm + half a beat at 120 bpm does indeed = 750 ms -- so the delay object is definitively updating tempo in the middle of a wait period.
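The 750 ms figure is just the sum of the two half-beat segments at their respective tempos. A small sketch of that arithmetic (the `elapsed_ms` helper is hypothetical, only there to show the sum):

```python
def elapsed_ms(segments):
    """Total wall-clock time for a list of (beats, bpm) segments."""
    return sum(beats * 60000.0 / bpm for beats, bpm in segments)


# Half a beat at 60 bpm (500 ms) followed by half a beat at 120 bpm (250 ms)
print(elapsed_ms([(0.5, 60), (0.5, 120)]))
```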

I'll have to build it out and I don't have time right now, but I don't see an obvious downside.

hjh

phase index of an oscillator and 1 sample delay in pd?

delread~ is not a sample delay it is a tapping buffer.

a tapping delay does not delay "the samples", it delays their content.

plus its minimum delay time is the vectorsize.

i think in pd you would replace [delay~ 1] with [fexpr~]... something like x = x1, no idea about the correct syntax.
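For reference, this is what a true one-sample delay computes, written as a plain Python sketch rather than as a patch. As far as I know, the [fexpr~] help describes `$x1[-1]` as the previous sample of the first inlet, so `[fexpr~ $x1[-1]]` would be the Pd spelling; treat that as an assumption to check against the help patch.

```python
def one_sample_delay(signal, initial=0.0):
    """y[n] = x[n-1], with y[0] set to `initial`."""
    out = []
    prev = initial
    for x in signal:
        out.append(prev)  # emit the previous input sample
        prev = x          # remember the current one for the next step
    return out


print(one_sample_delay([1, 2, 3, 4]))
```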

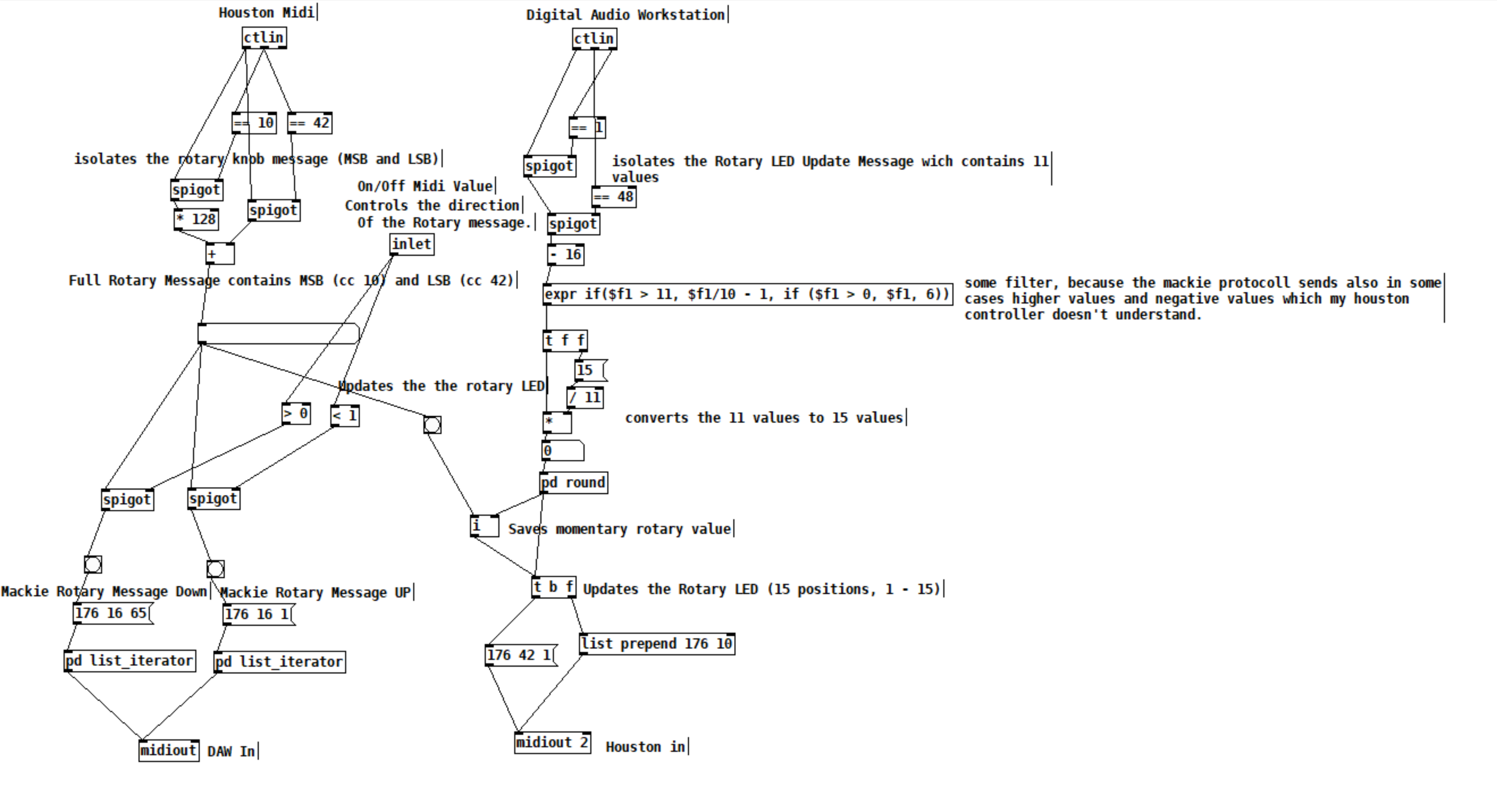

Midi Rotary Knob Direction Patch/Algorythm?

Hey everybody,

Sorry, for a lot of text. But the bold text at the bottom is my main question. The rest will help you to get a better understanding of my situation.

you helped me so much, with my last question here (the Faders are working dope now):

https://forum.pdpatchrepo.info/topic/13849/how-to-smoothe-out-arrays/25

I am doing a Steinberg Houston to Mackie Control emulation at the moment, to use my controller with DAWs other than Cubase/Nuendo. I will upload it for the community when I am finished, for the handful of people who may also be using this controller.

I made good progress:

I got the faders and the normal knobs to work, and the display puts out information. But it has bugs, because the LCD screen of the Houston has 40 characters per line and the Mackie Universal Pro has 56. So I wrote a list algorithm that deletes spaces from the Mackie message until it fits on the 40-character line. Maybe there is a method which would work better, but this subject eats too much of my time at the moment and it works roughly okay. One definitely gets some helpful information on the screen from the DAW.

The faders, rotary knobs and normal knobs are the most important parts of this controller, I guess. The faders are working fine, as I mentioned above, but there is a problem with the rotary knobs which I can't handle alone, and I hope you can help me.

The problem is that the Mackie controller sends simple clicks to the DAW. If you turn a rotary knob, it sends out a number of MIDI messages:

If you turn it right, it sends MIDI messages which contain the value 1, and if you turn it left it sends messages which contain the value 65.

"When the VPots are rotated rapidly, a message equal to the number of clicks is sent."

BUT the Houston controller instead sends values like its faders, with 15 (MSB) and 128 (LSB) values. AND it updates the rotary limit by itself. So if I turn a rotary, it updates its LEDs and stops sending MIDI messages when it reaches the maximum or minimum value. So this is my patch in its current state:

The DAW sends 11 values for the Houston LEDs; 11 is max and 1 is min. This is good: I send these values to my Houston controller and can update the rotary values and LEDs.

With these updated values from the DAW, I can force my rotary knobs to never stop sending values, because they are set to the values the DAW sends every time I turn a knob. With this method I got it to imitate a Mackie rotary knob. Every time the Houston rotary value changes, it sends Mackie "MIDI click values" according to the amount by which the Houston's MIDI value changed.

BUT the problem is, that this is working only in one direction. Now my main question:

How can I make Pure Data know whether I am turning my knob to the left or to the right? There is also the problem, mentioned above, that I set the current value every time I move the rotary, so that I get an unlimited number of possible rotary "clicks". Also, the MIDI values which the Houston sends aren't perfectly smooth. It works fine, but it is not the case that, when you move a rotary in one direction, every value is strictly lower or higher than the one before.

I think I may need an algorithm which checks whether the values within a time period are getting higher or lower and then sends out bangs on two separate outlets: for example, the left outlet for lower values and the right outlet for higher values. It should also detect whether I move the rotary fast or slow, so constant smoothing or a clocked bang is not an option. This is definitely too complicated for me. I have no idea, and what I tried didn't work.
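One possible starting point, sketched in Python rather than as a patch: compare each incoming absolute value with the previous one, take the sign of the difference as the direction and its size as the number of clicks. The encoding follows the description above (1 for one click right, 65 for one click left, larger values for faster turns); the function name and the `max_clicks` cap of 15 are assumptions made for the example, not something taken from the Mackie documentation.

```python
def knob_delta_to_mackie(prev, cur, max_clicks=15):
    """Turn a change in absolute knob value into a Mackie-style relative value.

    Returns None when nothing changed, 1..max_clicks for a turn to the
    right, and 65..(64 + max_clicks) for a turn to the left.
    max_clicks is an assumed cap, not a documented limit.
    """
    delta = cur - prev
    if delta == 0:
        return None
    clicks = min(abs(delta), max_clicks)
    return clicks if delta > 0 else 64 + clicks


print(knob_delta_to_mackie(10, 11))  # slow turn right
print(knob_delta_to_mackie(13, 10))  # faster turn left
```

Because the knob is reset to the DAW's value after every move, `prev` would be the value last written back to the Houston rather than the last value it sent. If the jitter in the incoming values causes false direction changes, ignoring differences below a small threshold might be enough.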

Would be super cool, if you could help me out again.

s~/r~ throw~/catch~ latency and object creation order

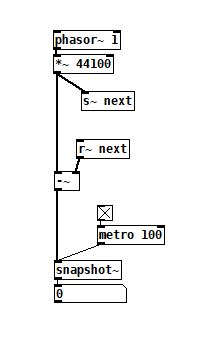

For a topic on matrix mixers by @lacuna I created a patch with audio paths that included a s~/r~ hop as well as a throw~/catch~ hop, fully expecting each hop to contribute a 1 block delay. To my surprise, there was no delay. It reminded me of another topic where @seb-harmonik.ar had investigated how object creation order affects the tilde object sort order, which in turn determines whether there is a 1 block delay or not. Object creation order even appears to affect the minimum delay you can get with a delay line. So I decided to do a deep dive into a small example to try to understand it.

Here's my test patch: s~r~NoLatency.pd

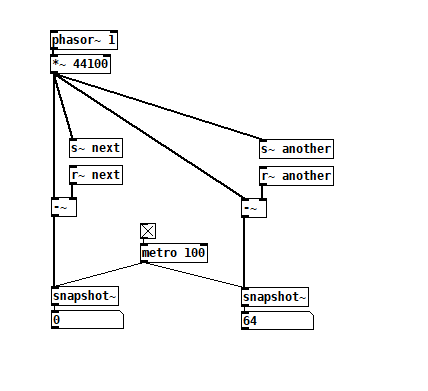

The s~/r~ hop produces either a 64 sample delay, or none at all, depending on the order that the objects are created. Here's an example that combines both: s~r~DifferingLatencies.pd

That's pretty kooky! On the one hand, it's probably good practice to avoid invisible properties like object creation order to get a particular delay, just as one should avoid using control connection creation order to get a particular execution order and use triggers instead. On the other hand, if you're not considering object creation order, you can't know what delay you will get without measuring it because there's no explicit sort order control. Well...technically there is one and it's described in G05.execution.order, but it defeats the purpose of having a non-local signal connection because it requires a local signal connection. Freeze dried water: just add water.

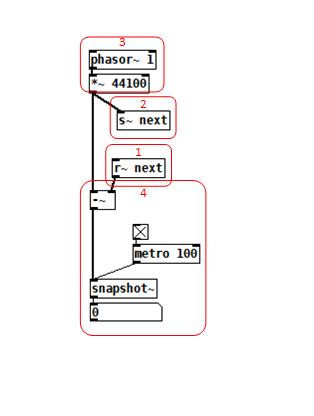

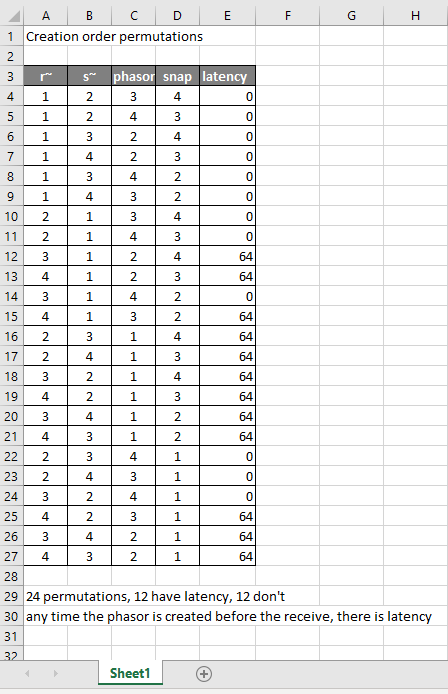

To reduce the number of cases I had to test, I grouped the objects into 4 subsets and permuted their creation orders:

The order labeled in the diagram has no latency and is one that I just stumbled on, but I wanted to know what part of it is significant, so I tested all 24 permutations. (Methodology note: you can see the object creation order if you open the .pd file in a text editor. The lines that begin with "#X obj" list the objects in the order they were created.)
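The methodology note can be automated with a few lines of Python. This is a rough sketch that only looks at "#X obj" records and ignores subpatch nesting; the function name `creation_order` and the sample patch text are made up for illustration.

```python
import re


def creation_order(pd_text):
    """List the objects of a .pd file in the order their '#X obj' records appear."""
    # Records end with an unescaped semicolon; '\;' inside a record is kept.
    records = re.split(r"(?<!\\);\s*", pd_text)
    objs = []
    for rec in records:
        tokens = rec.split()
        # Record layout: '#X obj <x> <y> <object name and arguments>'
        if tokens[:2] == ["#X", "obj"] and len(tokens) > 4:
            objs.append(" ".join(tokens[4:]))
    return objs


sample = (
    "#N canvas 0 0 450 300 12;\n"
    "#X obj 50 50 r~ next;\n"
    "#X obj 50 100 phasor~ 1;\n"
    "#X obj 50 150 *~ 44100;\n"
    "#X connect 1 0 2 0;\n"
)
print(creation_order(sample))
```

Running this on each saved permutation makes it easier to confirm which group was actually created first.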

It appears that any time the phasor group is created before the r~, there is latency. Nothing else matters. Why would that be? To test if it's the whole audio chain feeding s~ that has to be created before, or only certain objects in that group, I took the first permutation with latency and cut/pasted [phasor~ 1] and [*~ 44100] in turn to push the object's creation order to the end. Only pushing [phasor~ 1] creation to the end made the delay go away, so maybe it's because that object is the head of the audio chain?

I also tested a few of these permutations using throw~/catch~ and got the same results. And I looked to see if connection creation order mattered but I couldn't find one that did and gave up after a while because there were too many cases to test.

So here's what I think is going on. Both [r~ next] and [phasor~ 1] are the heads of their respective locally connected audio chains which join at [-~]. Pd has to decide which chain to process first, and I assume that it has to choose the [phasor~ 1] chain in order for the data buffered by [s~ next] to be immediately available to [r~ next]. But why it should choose the [phasor~ 1] chain to go first if its head is created later seems arbitrary to me. Can anyone confirm these observations and conjectures? Is this behavior documented anywhere? Can I count on this behavior moving forward? If so, what does good coding practice look like, when we want the delay and also when we don't?

Signal-rate circular buffer?

@ddw_music I think whichever's easiest depends on if you're (over)writing the circular buffer continuously or not. If you are then [vd~]/[delread4~] with some kind of [samphold~] & [phasor~] combo to keep playing the same grain might be easier than managing your own but maybe the case is specific enough that it's worth making your own delay line that can pause writing.

but then how does it wrap around if you don't pause before the end? AFAICS there is no reliable way to make it do that.

you would have to make sure the array is a multiple of 64 samples and schedule the [tabwrite~] to start the next pass using message-rate (probably [delay]). granted, it might be far messier than the [poke~] object solution. But that would be the vanilla way to do it, and it may be more efficient since you don't have to provide your own [phasor~] (and things may be vectorized better by the compiler).

going that route might be more complicated in coordinating the exact delay time when stopping recording anyways though. (bc signal/message rate interaction).

But the thing about pausing writing is that when you move off of that grain the next time frame won't be available yet, the stuff in the array will be behind by however long you were playing that grain for, so you would have to wait for the entire array to fill up again in order to guarantee consistency in delay time.

What we really need is an object like max/msp's [stutter~] (which copies from a delay-line to another play buffer) with interpolation. I've been meaning to get to making a near-clone..

it may be possible to somehow do the same thing that [stutter~] does with complicated logic, but keeping track of what needs to be played from the delay line vs. what's already been copied to the play buffer (and therefore which should be read from for any given sample) would be even more of a headache in pd than it would be in c imo.